This post was written by Mark Hall, Xiang Fan, Yuriy Solodkyy, Bat-Ulzii Luvsanbat, and Andrew Pardoe.

Precompiled headers can reduce your compilation times significantly. They’ve worked reliably for millions of developers since they were introduced 25 years ago to speed up builds of MFC apps. Precompiled headers are widely used: they are enabled by default for new Visual C++ projects created in the IDE, and they similarly provide substantial performance wins in our IntelliSense architecture.

How do precompiled headers speed up your build? For a given project, most source files share a common set of header files (especially software built for Windows). Many of those header files don’t change frequently. Precompiled headers allow the compiler to save the result of compiling a group of headers into a PCH file that can be used in place of those header files in subsequent compilations. If you want to learn more, this document talks about the benefits of precompiled headers and how to use them in your projects.

Precompiled headers work great as a “set it and forget it” feature. They rarely need attention when upgrading compilers, for example. However, due to their nature there are rare situations where things can go wrong, and it can be difficult to figure out why. This article will help you get past some recent issues customers have run into while using precompiled headers with the Visual C++ compiler.

Overview

You might see intermittent build failures with these error codes and messages when creating or using PCH files with the MSVC compiler:

- fatal error C3859: virtual memory range for PCH exceeded; please recompile with a command line option of ‘-ZmXXX’ or greater

- fatal error C1076: compiler limit: internal heap reached; use /Zm to specify a higher limit

- fatal error C1083: Cannot open include file: ‘xyzzy’: No such file or directory

There are multiple reasons why the compiler might be failing with these diagnostics. All these failures are the result of some kind of memory pressure in virtual memory space that is exhibited when the compiler tries to reserve and allocate space for PCH files at specific virtual memory addresses.

One of the best things you can do if you’re experiencing errors with PCH files is to move to a newer Visual C++ compiler. We have fixed many PCH memory pressure bugs in VS 2015 and VS 2017. Visual Studio 2017 contains the compiler toolset from VS 2015.3 as well as the toolset from VS 2017, so it’s an easy migration path to Visual Studio 2017. The compiler that ships in Visual Studio 2017 version 15.3 provides improved diagnostics to help you understand what’s happening if you encounter these intermittent failures.

Even with the latest compilers, as developers move to build machines with large numbers of physical cores they still encounter occasional failures to commit memory from the OS when using PCH files. As your PCH files grow in size, it’s important to optimize for the robustness of your build as well as build speed. Using a 64-bit hosted compiler can help, as well as adjusting the number of concurrent compiles using the /MP compiler switch and MSBuild’s /maxcpucount: switch.

Areas affecting PCH memory issues

Build failures related to PCH use typically have one of the following causes:

- Fragmentation of the virtual memory address range(s) required by the PCH before CL.EXE is able to load it into memory.

- Failure of the Windows OS under heavy loads to increase the pagefile size within a certain time threshold.

Failure to automatically increase the pagefile size

Some developers utilizing many-core (32+) machines have reported seeing the above intermittent error messages during highly parallel builds with dozens of CL.EXE processes active. This is more likely to happen when using the /maxcpucount (/m) option to MSBUILD.EXE in conjunction with the /MP option to CL.EXE. These two options, used simultaneously, can multiply the number of CL.EXE processes running at once.

The underlying issue is a potential file system bottleneck that is being investigated by Windows. In some situations of extreme resource contention, the OS will fail to increase the virtual memory pagefile size even though there is sufficient disk space to do so. Such resource contention can be achieved in a highly parallelized build scenario with many dozens of CL.EXE processes running simultaneously. If PCHs are being used, each CL.EXE process will make several calls to VirtualAlloc(), asking it to commit large chunks of virtual memory for loading the PCH components. If the system page file is being automatically managed, the OS may time out before it can service all the VirtualAlloc() calls. If you see the above error messages in this scenario, manually managing the pagefile settings may resolve the problem.

Manually managing the Windows pagefile

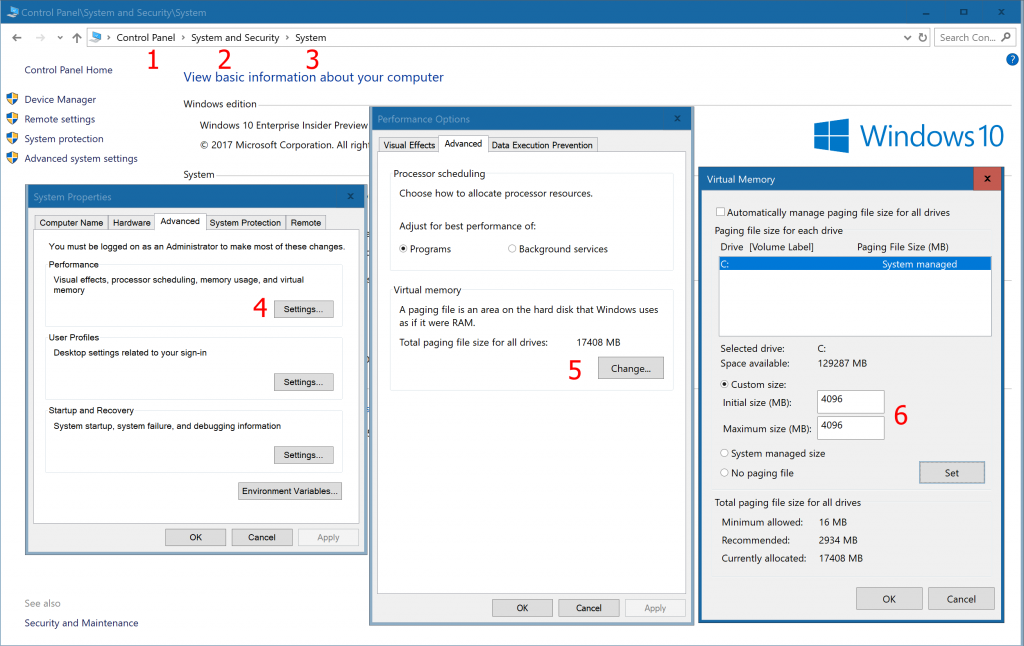

Here’s how you adjust the virtual memory settings on Windows 10 (the procedure is similar for older versions of Windows). The goal is to make the pagefile large enough to handle the combined size of all simultaneous VirtualAlloc() calls made by every CL.EXE process that is trying to load a PCH. You can do a back-of-the-envelope calculation by multiplying the size of the largest PCH file in the build by the number of CL.EXE processes observed in Task Manager during a build. Be sure to set the initial size equal to the maximum size so that Windows never has to resize the pagefile.

- Open the Control Panel

- Select System and Security

- Select System

- In the Advanced tab of the System Properties dialog, select the Performance “Settings” button

- Select the Virtual Memory “Change” button on the Advanced tab

- Turn off “Automatically manage paging file size for all drives” and set the Custom size. Note that you should set both the “initial size” and “maximum size” to the same value, and you should set them to be large enough to avoid the OS exhausting the page file limit.
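
The back-of-the-envelope calculation described above can be sketched in a few lines of C++. This is only an illustration: the helper name `estimate_pagefile_mb` is hypothetical, the 250 MB and 64-process figures are example inputs, and the estimate ignores compiler DLLs and any other per-process memory, so treat the result as a lower bound.

```cpp
#include <cstdint>

// Hypothetical helper (not part of any Microsoft tool): rough pagefile size
// in MB needed so that every concurrent CL.EXE process can commit memory for
// the largest PCH in the build. It implements only the rule of thumb
// "largest PCH size x number of CL.EXE processes" and nothing more.
constexpr std::uint64_t estimate_pagefile_mb(std::uint64_t largest_pch_mb,
                                             std::uint64_t concurrent_cl_processes) {
    return largest_pch_mb * concurrent_cl_processes;
}

// Example inputs (assumptions): a 250 MB PCH and 64 CL.EXE processes
// observed in Task Manager during a build.
static_assert(estimate_pagefile_mb(250, 64) == 16000,
              "250 MB x 64 processes = 16000 MB");
```

Whatever value you arrive at, enter the same number for both the initial and the maximum size, and round up generously to leave headroom for everything else running on the build machine.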

Addressing unbalanced compiler architecture, processors, and memory usage

Most problems with memory usage and precompiled headers come from large PCH files being used in multiple CL.EXE processes running simultaneously. These suggestions will help you adjust the compiler architecture and processor usage so that you can use an appropriate amount of memory for the size of the PCH being used.

Changing the compiler’s host architecture

If your PCH file is large (250 MB or more) and you’re receiving the above out-of-memory error messages when using the x86-hosted compiler, consider changing to the x64-hosted compiler. The x64-hosted compiler can use more (physical and virtual) memory than the x86-hosted compiler. You can produce apps for any architecture with x64-hosted tools.

To change the compiler’s host architecture from the command line, just run the appropriate command environment shortcut (e.g., “x64 Native Tools Command Prompt”). You can verify that you’ve got the right environment by typing cl /Bv on the command line.

If you’re using MSBuild from the command line you can pass /p:PreferredToolArchitecture=x64 to MSBuild. If you’re building with MSBuild from within Visual Studio, you can edit your .vcxproj file to include a PropertyGroup containing this property. There are instructions on how to add the PropertyGroup under the section “Using MSBuild with the 64-bit Compiler and Tools” on this page.

If you’re using the /Zm switch on your compile command line, remove it. That flag is no longer required to accommodate large PCH files in Visual Studio 2015 and forward.

Changing the number of processors used in compilation

When the /MP compiler option is used, the compiler will build with multiple processes. Each process will compile one source file (or “translation unit”) and will load its respective PCH files and compiler DLLs into the virtual memory space reserved by that process. On a machine with many cores this can quickly cause the OS to run out of physical memory. For example, on a 64-core machine with a large PCH file (e.g., 250 MB) the consumed physical memory (not the virtual memory) can easily exceed 16 GB. When physical memory is exhausted the OS must start swapping process memory to the page file, which (if automatically managed) may need to be grown to handle the requests. When the number of simultaneous “grow” requests reaches a tipping point, the file system will fail all requests it cannot service within a certain threshold.

The generally stated advice is that you should not exceed the number of physical cores when you parallelize your compile across processes. Although you may achieve better performance by oversubscribing, you should be aware of the potential for these memory errors and dial back the amount of parallelism you use if you see the above errors during builds.

The default setting of /MP is equal to the number of physical cores on the machine, but you can throttle it back by setting it to a lower number. For example, if your build is parallelized in two worker processes on a 64-core machine you may want to set /MP32 to use 32 cores for each worker process. Note that the MSBuild /maxcpucount (or /m) setting refers to the number of MSBuild processes. Its value is effectively multiplied by the number of processes specified by the compiler’s /MP switch. If you have /maxcpucount:32 and /MP defaulting to 32 on a 32-core machine, you will have up to 1024 instances of the compiler running concurrently.
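
The multiplication described above can be made concrete with a short C++ sketch. Both helper names are hypothetical, and the throttling rule used here (keep the worst case at or below the core count) is just one reasonable reading of the advice in this section, not an official formula.

```cpp
#include <cstdint>

// Worst-case number of concurrent CL.EXE instances: the number of MSBuild
// processes (/maxcpucount) multiplied by the number of compiler processes
// each of them may start (/MP).
constexpr std::uint32_t worst_case_cl_instances(std::uint32_t maxcpucount,
                                                std::uint32_t mp) {
    return maxcpucount * mp;
}

// Hypothetical helper: the largest /MP value that keeps the worst case at or
// below the number of cores. Always returns at least 1.
constexpr std::uint32_t throttled_mp(std::uint32_t cores,
                                     std::uint32_t maxcpucount) {
    return (maxcpucount == 0 || cores / maxcpucount == 0)
               ? 1u
               : cores / maxcpucount;
}

// /maxcpucount:32 combined with /MP defaulting to 32 on a 32-core machine.
static_assert(worst_case_cl_instances(32, 32) == 1024, "32 x 32 = 1024");

// Two MSBuild worker processes on a 64-core machine suggests /MP32.
static_assert(throttled_mp(64, 2) == 32, "64 cores / 2 workers = 32");
```

With /maxcpucount:32 on a 32-core machine, the same rule gives /MP1, which shows how quickly the two switches compound when both are left at their defaults.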

Throttling back the number of concurrent compiler processes may help with the intermittent fatal errors described above.

Reducing the size of your PCH

The larger your PCH file, the more memory it consumes in every instance of the compiler that runs during your build. It is common for PCH files to contain many header files that aren’t even referenced. You may also find that your PCH files grow when you upgrade to a new compiler toolset. As the library headers grow in size from version to version, PCH files that include them grow as well.

Note that while PCH files up to 2 GB in size are theoretically possible, any PCH over 250 MB should be considered large, and thus more likely to contain unused header files and impede scaling to large build machines.

Use of “#pragma hdrstop PCH-file-name” requires the compiler to process the input file up to the location of the hdrstop, which can cause a small amount of memory fragmentation to occur before the PCH is loaded. This may cause the PCH to fail to load if the address range required by a component of the PCH remains in use at that time. The recommended way to name the PCH file is via the command line option /FpPCH-file-name which helps the compiler to reserve the memory earlier in the process execution.

Ignoring the /Zm flag

Prior to VS2015, the PCH was composed of a single, contiguous virtual address range. If the PCH grew beyond the default size, the /Zm flag had to be used to enable a larger maximum size. In VS2015, this limitation was removed by allowing the PCH to comprise multiple address ranges. The /Zm flag was retained for the #pragma hdrstop scenario that might work only with a PCH containing a single contiguous address range. The /Zm flag should not be used in any other scenario, and the value reported by fatal error C3859 should be ignored. (We are improving this error message, see below.)

Future Improvements

We are working to make PCH files more robust in the face of resource contention failures and to make the errors emitted more actionable. In Visual Studio 2017 version 15.3 the compiler emits a detailed message that provides more context for compiler error C3859. For example, here’s what one such failure will look like:

C3859: virtual memory range for PCH exceeded; please recompile with a command line option of '-ZmXX' or greater

note: PCH: Unable to get the requested block of memory

note: System returned code 1455 (ERROR_COMMITMENT_LIMIT): The paging file is too small for this operation to complete.

note: please visit https://aka.ms/pch-help for more details

In closing

Thank you to the hundreds of people who provide feedback and help us improve the C++ experience in Visual Studio. Most of the issues and suggestions covered in this blog post result from conversations we had because you reached out to our team.

If you have any feedback or suggestions for us, let us know. We can be reached via the comments below or via email (visualcpp@microsoft.com), and you can provide feedback via Help > Report A Problem in the product or via Developer Community. You can also find us on Twitter (@VisualC) and Facebook (msftvisualcpp).

Severity Code Description Project File Line Suppression State

Error C2857 '#include' statement specified with the /Ycstdafx.h command-line option was not found in the source file line 692 - there is only 691 lines in the file.

Your blog drones on about pre-compiled headers for long enough to put me to sleep but the only mention of "off" is for virtual memory. This is in keeping with much of what I see from Microsoft. Someone is "forced" to write some documentation or a blog and just cuts and pastes something together without giving it much thought. My recent experience of C++ in Visual Studio can be summed up in...

The author notes that /MP also lets you adjust the number of cores to use. It should be noted that this is limited by a design flaw in Visual Studio (up to version 2019, 16.3.10). The property MultiProcessorCompilation, which translates into /MP, is implemented as a bool and thus won’t accept numbers. So you have to set this to false and specify /MPn literally in the Additional Options tab instead, where n denotes the maximum number of cores.

This blog post completely ignores the biggest problem with Microsoft's precompiled headers implementation:

It falsely presumes that all code files in a "project" load the same actual headers and defines in the same order as the code file for which the PCH file was generated (typically stdafx.cpp in MFC/ATL projects).

This causes two practical problems:

1. If a "project" contains files that need contradicting definitions (such as compiling with/without -DUNICODE), half the files will be incorrectly compiled.

2. It easily misleads Visual Studio users to forget including needed headers if those headers were included in the code file that has the "create PCH" compiler...

Problem #1 is actually easy to solve. Specify two cpp files that compile the headers with the desired settings:

a) pch.h + pchMbcs.cpp compile without -DUNICODE into pchMbcs.pch

b) pch.h + pchUnicode.cpp compile with -DUNICODE into pchUnicode.pch

c) other cpp files use either pchMbcs.pch or pchUnicode.pch as precompiled header in their project settings.

Note that pchMbcs.cpp and pchUnicode.cpp contain nothing but #include "pch.h"

They just serve as triggers for creating the two pch files.

We had a similar scenario for files needing to compile with or without /clr:nostdlib

Well is it possible to find the cause of a PCH problem?

PCH do not work when there's a NATVIS file in the project (even if it is excluded from build... I have the same problem as https://developercommunity.visualstudio.com/content/problem/450187/visual-studio-2017-adding-a-custom-debugging-data.html, https://social.msdn.microsoft.com/Forums/en-US/b1c30682-ff9e-404c-a7a8-7b2c88132ed6/visual-studio-2017-adding-a-custom-debugging-data-visualizer-natvis-file-causes-a-perpetual?forum=vcgeneral)

PCH do not work with VStudio 16.1.1 (https://developercommunity.visualstudio.com/content/problem/592613/new-in-vs-1611-problem-with-precompiled-header-pdb.html)

So it would be great if a developer has the chance to find out WHY the PCH usage fails, possibly finding a workaround or solution. This seems a non-common, but nasty problem, and unless MS can fix these issues, us developers have to deal with it. I understand there are a lot of...