Introduction

In March 2020, ML.NET added support for training image classification models in Azure. Although the image classification scenario was released in late 2019, users were limited by the resources of their local compute environments. Training in Azure lets users scale image classification scenarios by using GPU-optimized Linux virtual machines.

This post will show how to train a custom image classification model in Azure to categorize flowers using ML.NET Model Builder. Then, you can leverage your existing .NET skills to consume the trained model inside a C# .NET Core console application. Best of all, little to no prior machine learning knowledge is required. Let’s get started!

ML.NET Model Builder

ML.NET is an open-source, cross-platform machine learning framework for .NET developers. Model Builder is a Visual Studio tool with a graphical user interface that uses automated machine learning (AutoML) to train and consume custom ML.NET models in your .NET applications.

Prerequisites

To follow along, you’ll need the following prerequisites:

- Visual Studio 2019 16.6 preview 2 or later

- Azure account. If you don’t have one, you can sign up for a free Azure account.

- .NET Core cross-platform development workload

To use Model Builder, make sure to enable the preview feature. In the Visual Studio Menu Bar, select Tools > Options > Environment > Preview Features. Then, check the Enable ML.NET Model Builder box.

Model training workflow

The process of training models usually consists of the steps outlined below:

- Get the data

- Create a .NET Core application. This can be a console, desktop, or web application.

- Choose a scenario

- Configure your training environment

- Load the data

- Train the model

- Evaluate the model

- Add the code to make predictions

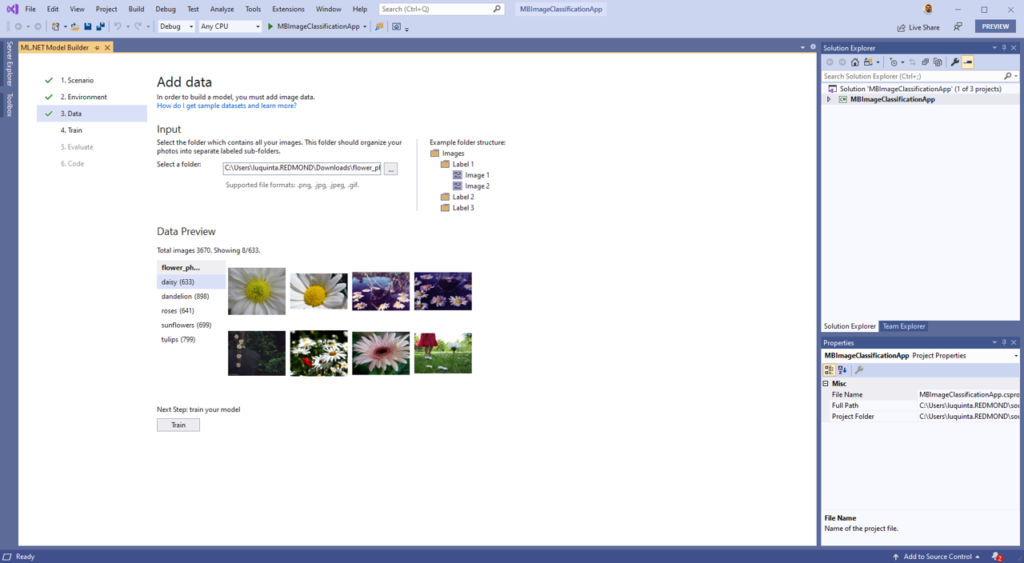

Add the data

The dataset used in this guide is based on the TensorFlow flower photos dataset. All images in this archive are licensed under the Creative Commons By-Attribution License, available at: https://creativecommons.org/licenses/by/4.0/

The full license information is provided in the LICENSE.txt file which is included as part of the same image set.

The dataset is divided into separate subfolders, one for each flower category:

- Daisy

- Dandelion

- Roses

- Sunflowers

- Tulips

Download the data anywhere on your PC and unzip it.

Model Builder requires data to be in a certain format, and this dataset is already in that format: one subfolder per category, each containing that category's images. For more information, see the Microsoft Docs article on loading data with Model Builder.
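If you want to sanity-check the folder layout before loading it, a small console program can count the images in each category subfolder. This is only a sketch: the `DatasetInspector` class, the `flower_photos` default path, and the list of image extensions are my own assumptions for illustration, not something Model Builder defines.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;

public static class DatasetInspector
{
    // Extensions counted as images. This list is an assumption for the sketch,
    // not a rule taken from Model Builder.
    private static readonly HashSet<string> ImageExtensions =
        new HashSet<string>(StringComparer.OrdinalIgnoreCase) { ".jpg", ".jpeg", ".png" };

    // Maps each category (subfolder name) to the number of image files directly inside it.
    public static SortedDictionary<string, int> CountImagesPerCategory(string datasetRoot)
    {
        var counts = new SortedDictionary<string, int>(StringComparer.Ordinal);
        foreach (string categoryDir in Directory.GetDirectories(datasetRoot))
        {
            int imageCount = Directory.EnumerateFiles(categoryDir)
                .Count(file => ImageExtensions.Contains(Path.GetExtension(file)));
            counts[Path.GetFileName(categoryDir)] = imageCount;
        }
        return counts;
    }
}

public static class Program
{
    public static void Main(string[] args)
    {
        // Hypothetical default; pass the unzipped dataset folder as the first argument.
        string root = args.Length > 0 ? args[0] : "flower_photos";
        if (!Directory.Exists(root))
        {
            Console.WriteLine($"Folder not found: {root}");
            return;
        }

        foreach (KeyValuePair<string, int> entry in DatasetInspector.CountImagesPerCategory(root))
        {
            Console.WriteLine($"{entry.Key}: {entry.Value} images");
        }
    }
}
```

A category with zero images, or image files sitting directly in the root folder, would suggest the archive was unzipped into an unexpected layout.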

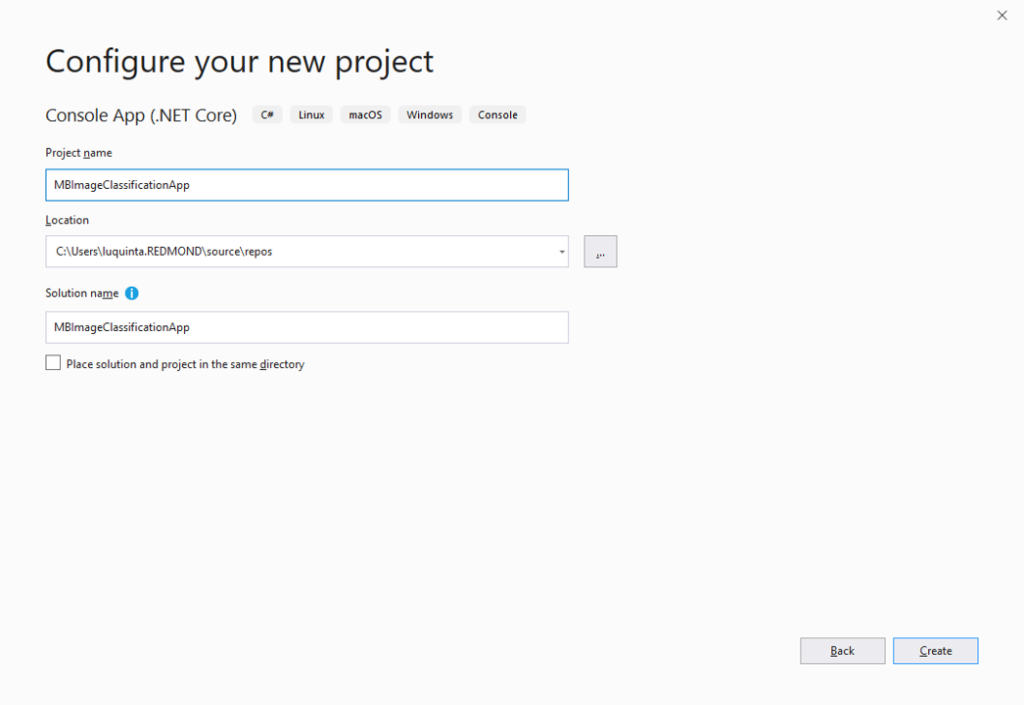

Create a .NET Core console application

The application that consumes the model is a C# .NET Core console application.

Open Visual Studio and create a new C# .NET Core console application. In this case, I’ve called my application MBImageClassificationApp.

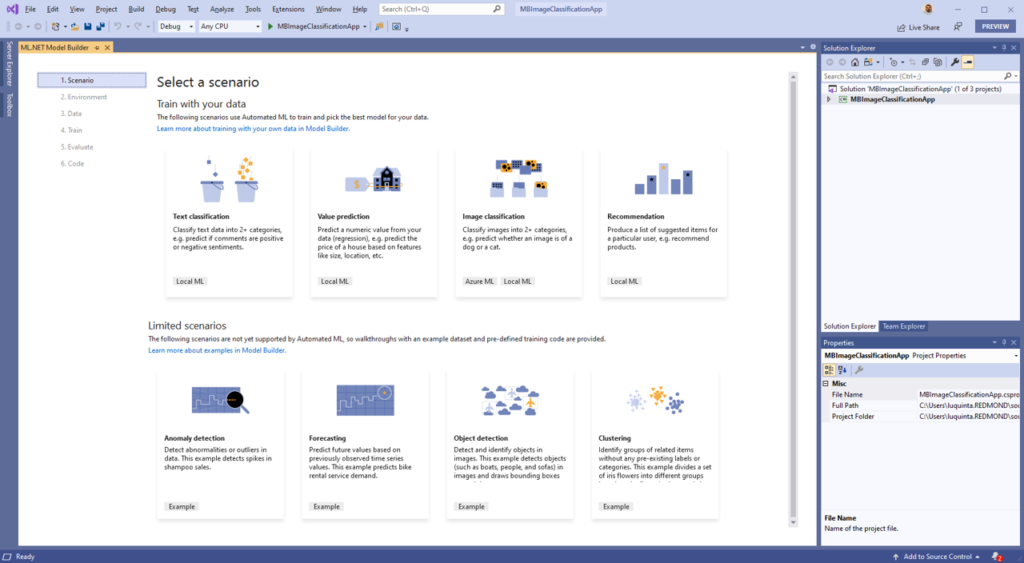

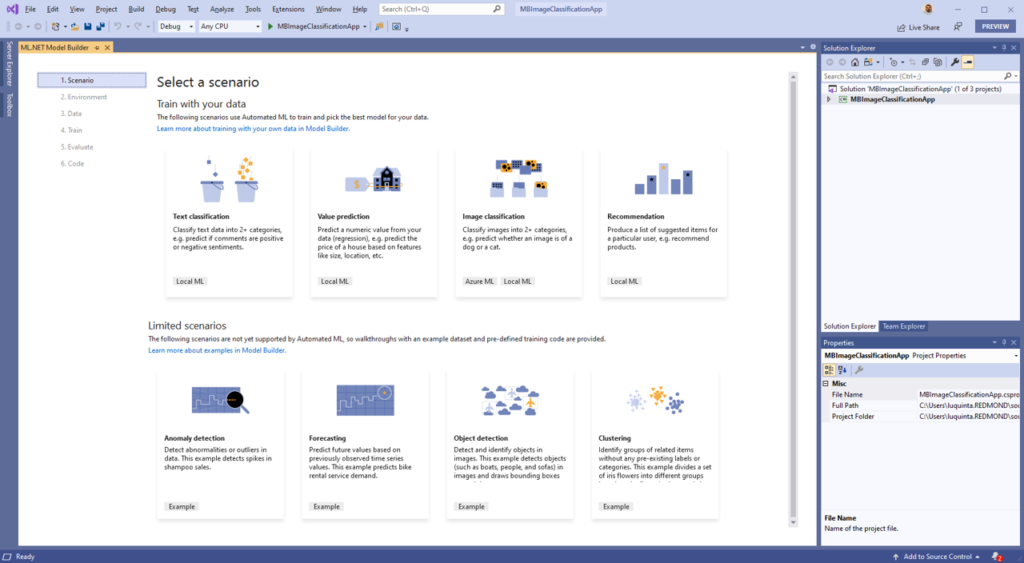

Choose a scenario

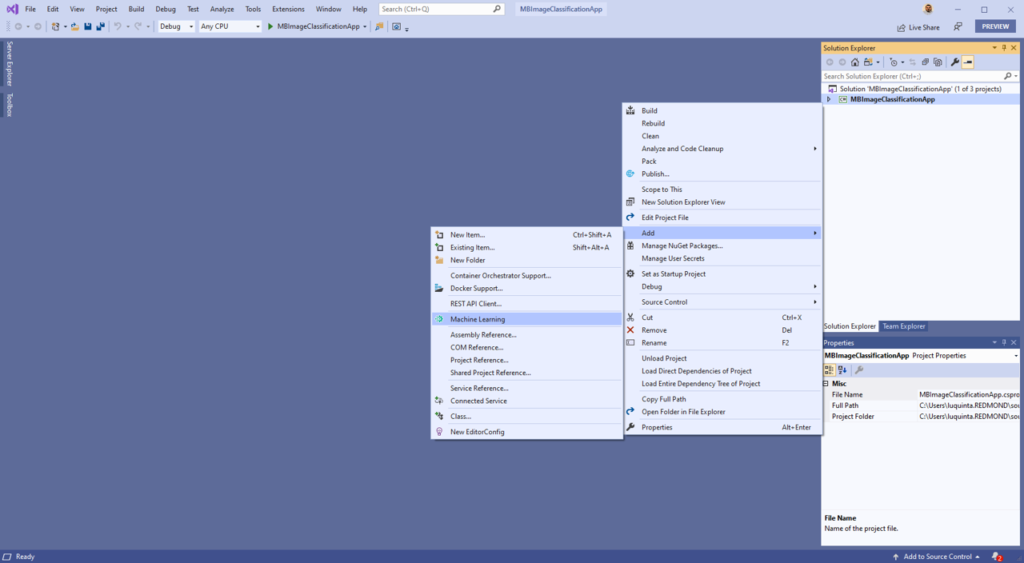

In Solution Explorer, right-click your newly created console application project and select Add > Machine Learning to launch Model Builder.

Once you’re presented with the scenario screen, select Image Classification and proceed to the next step.

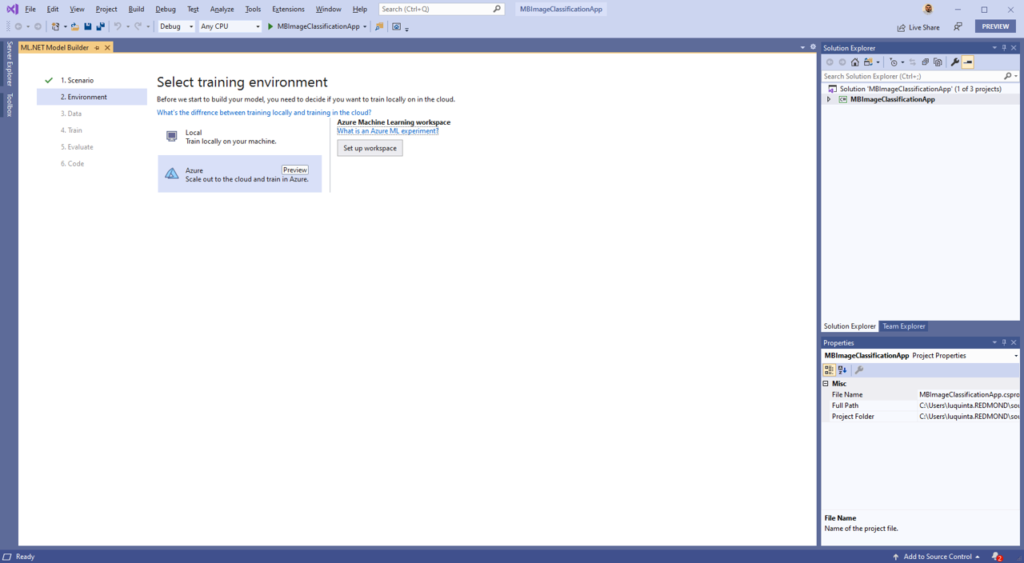

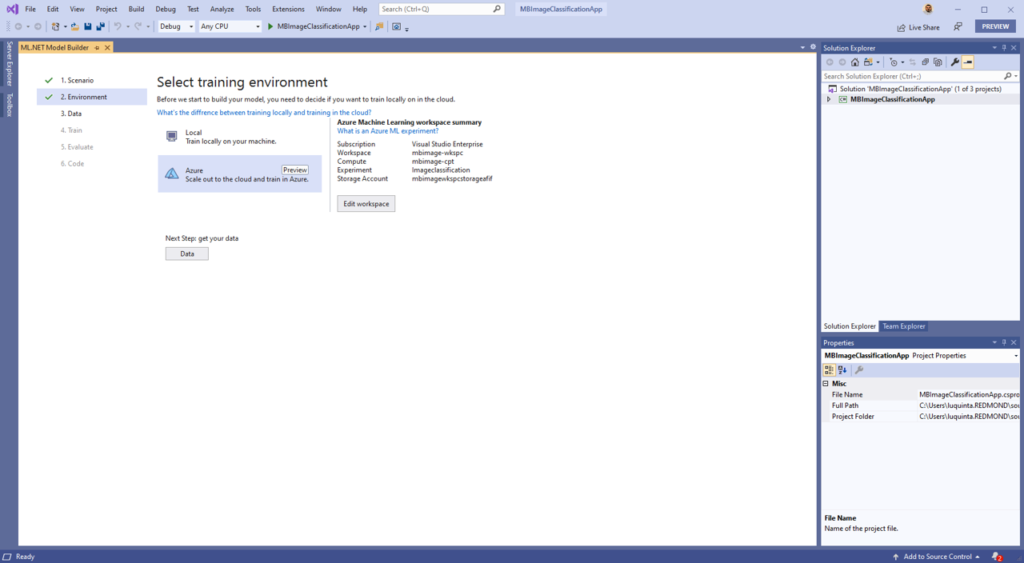

Configure your training environment

For image classification scenarios, you can train locally or in the cloud. For scenarios that train on thousands of images and need a large amount of resources, Azure is recommended because it provides GPU-optimized virtual machines for training. For this sample, we’ll train the model in Azure.

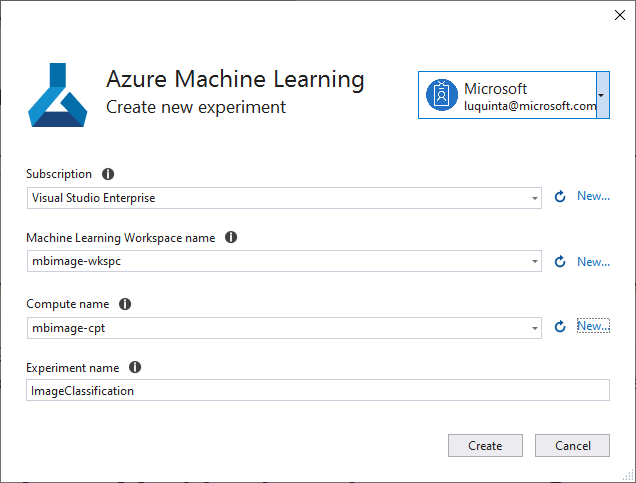

Create Azure Machine Learning experiment

Choose Azure from the list of training environments. Then, select the Set up workspace button to configure your environment.

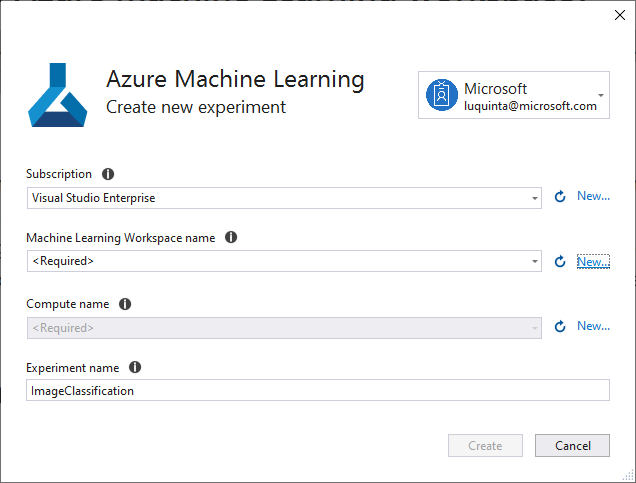

An Azure Machine Learning experiment is a resource that needs to be created before running Model Builder training on Azure. The experiment encapsulates the configuration and results for one or more machine learning training runs.

Set up Azure environment

To create an Azure Machine Learning experiment, you first need to configure your environment on Azure. An experiment needs an Azure subscription, workspace, and compute to run on.

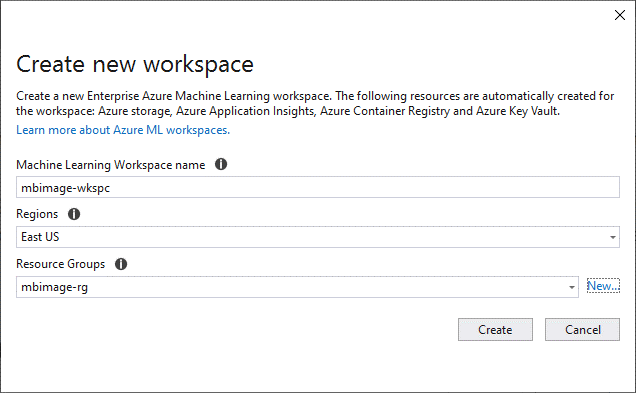

In the Create New Experiment dialog, choose your Azure subscription. Then, select or create a new Azure Machine Learning workspace. A workspace is an Azure Machine Learning resource that provides a central place for all Azure Machine Learning resources and artifacts created as part of a training run.

When you create a new workspace, the following resources are provisioned:

- Azure Machine Learning Enterprise workspace

- Azure Storage

- Azure Application Insights

- Azure Container Registry

- Azure Key Vault

As a result, this process may take a few minutes.

When you choose a region, it’s recommended that you select a location close to where you or your customers are.

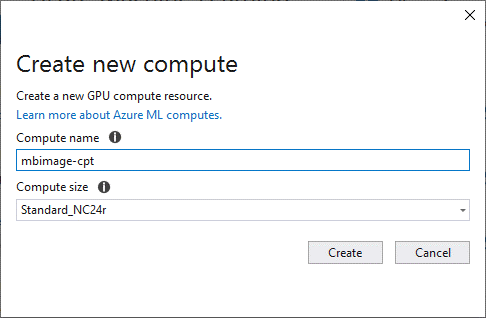

Once you’ve chosen or created your workspace, choose or create a new Azure Machine Learning compute. An Azure Machine Learning compute is a cloud-based Linux VM used for training. Learn more about compute types supported by Model Builder. This process may take a few minutes.

In the Create New Experiment dialog, leave the default experiment name and select Create.

The first experiment is created and its name is registered in the workspace. Any subsequent runs – if the same experiment name is used – are logged as part of the same experiment. Otherwise, a new experiment is created.

If you’re satisfied with your configuration, select the Data button to move to the next step.

Load the data

In the Add data screen, select the button next to the Select a folder text box and use the file explorer to find the unzipped directory that contains the image subdirectories.

Once the data is loaded, select the Train button to train your model.

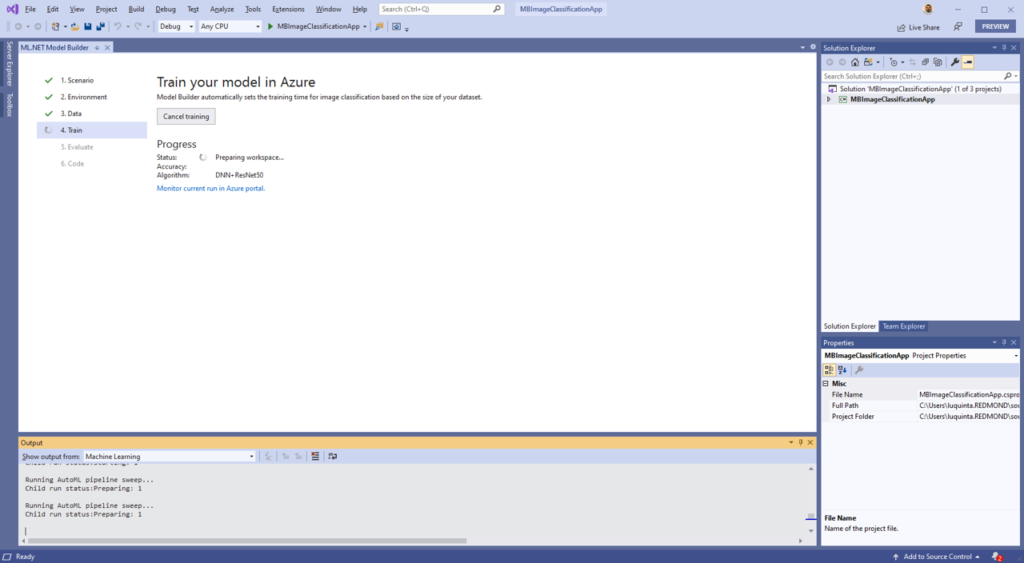

Train the model

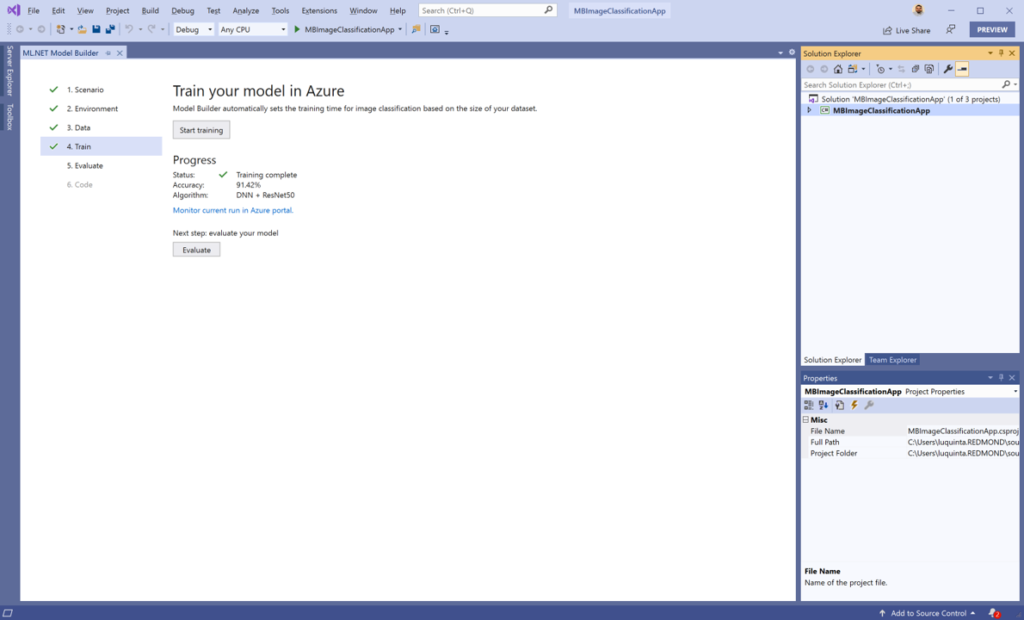

In the Model Builder train screen, select the Start training button to start training your model.

At this point, your data is uploaded to Azure Storage and the training process begins. The algorithm used to train these models is a Deep Neural Network based on the ResNet50 architecture. The training process takes some time and the amount of time may vary depending on the size of compute selected as well as the amount of data.

For this sample of 3670 images, training took about 30 minutes. The first time a model is trained in Azure, you can expect a slightly longer training time because resources have to be provisioned. You can track the progress of your runs in the Azure Machine Learning portal by selecting the “Monitor current run in Azure portal” link in Visual Studio.

Once the model is trained, select the Evaluate button to move to the next step.

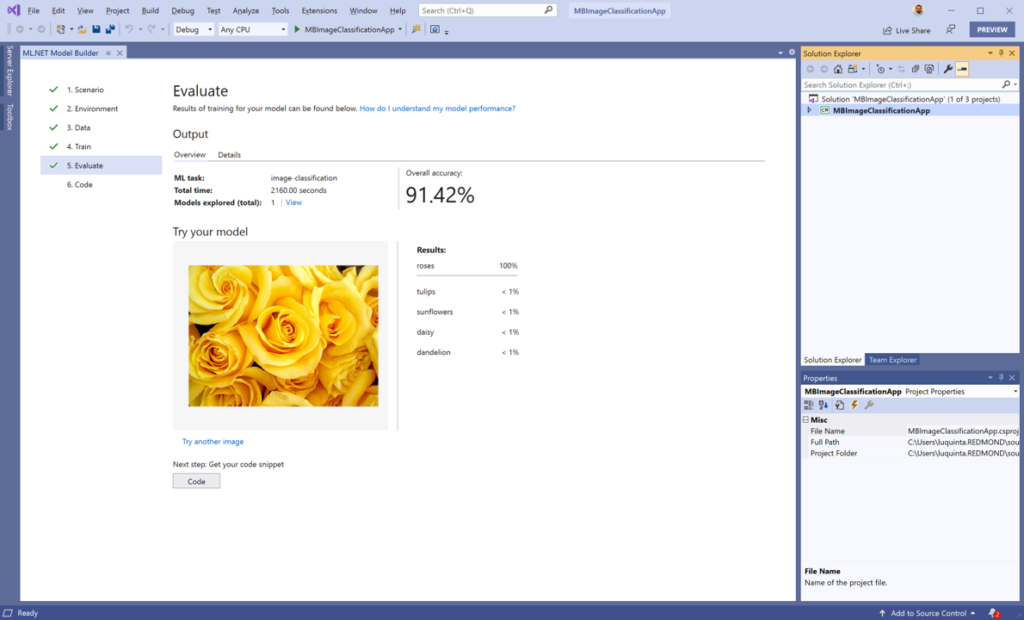

Evaluate your model

In the evaluate screen, you get an overview of the training results, such as how long the model took to train and its accuracy. You can also use the “Try your model” experience to quickly check whether your model performs as expected. All you need to do is provide an image, preferably one that the model did not use during training. The model classifies the image and lists the categories along with their probabilities, with the predicted category at the top of the list.
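To make the ordering in the “Try your model” list concrete, here is a rough sketch of ranking categories by probability. The `PredictionRanking` class and the probabilities in `Main` are made up for illustration; they are not Model Builder output.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class PredictionRanking
{
    // Pairs each category with its probability and sorts from most to least likely.
    public static List<KeyValuePair<string, float>> Rank(
        IReadOnlyList<string> categories, IReadOnlyList<float> probabilities)
    {
        if (categories.Count != probabilities.Count)
        {
            throw new ArgumentException("Each category needs exactly one probability.");
        }

        return categories
            .Select((name, index) => new KeyValuePair<string, float>(name, probabilities[index]))
            .OrderByDescending(pair => pair.Value)
            .ToList();
    }
}

public static class Program
{
    public static void Main()
    {
        // Illustrative values only, not real results from the trained model.
        string[] categories = { "daisy", "dandelion", "roses", "sunflowers", "tulips" };
        float[] probabilities = { 0.02f, 0.01f, 0.10f, 0.02f, 0.85f };

        foreach (KeyValuePair<string, float> pair in PredictionRanking.Rank(categories, probabilities))
        {
            Console.WriteLine($"{pair.Key}: {pair.Value}");
        }
    }
}
```

The first entry of the ranked list plays the role of the predicted category.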

If you’re satisfied with your model, select the Code button to add the code to make predictions.

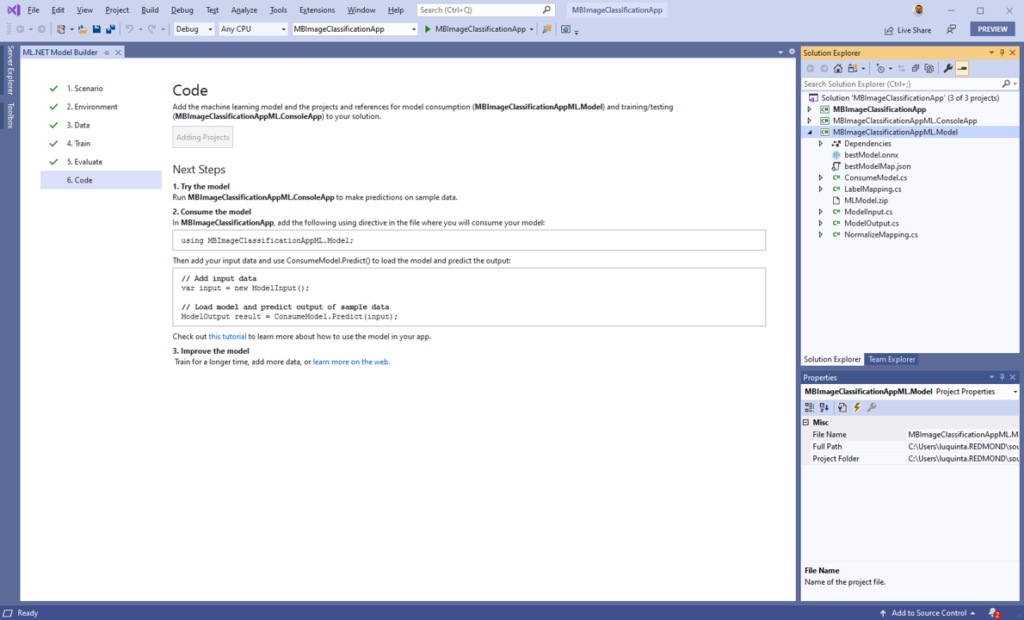

Add the code to make predictions

In the code screen, select the Add Projects button to add the auto-generated projects to your solution.

Two projects are added to your solution with the following suffixes:

- ConsoleApp: A C# .NET Core console application that provides starter code to build the prediction pipeline and make predictions.

- Model: A C# .NET Standard application that contains the data models that define the data schema of input and output model data as well as the following assets:

  - bestModel.onnx: A serialized version of the model in Open Neural Network Exchange (ONNX) format. ONNX is an open-source format for AI models that supports interoperability between frameworks like ML.NET, PyTorch, and TensorFlow.

  - bestModelMap.json: A list of categories used when making predictions to map the model output to a text category.

  - MLModel.zip: A serialized version of the ML.NET prediction pipeline that uses the serialized version of the model bestModel.onnx to make predictions and maps outputs using the bestModelMap.json file.
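To give a feel for what mapping model output to a text category means, here is a minimal sketch. It assumes the label map is a plain JSON array of category names whose order matches the model's score vector; I have not verified the actual layout of bestModelMap.json, so treat that shape, and the `LabelMap` helper itself, as hypothetical.

```csharp
using System;
using System.Text.Json;

public static class LabelMap
{
    // Parses a JSON array of category names, e.g. ["daisy","dandelion"].
    // Assumption: the real file may use a different structure.
    public static string[] Parse(string json)
    {
        return JsonSerializer.Deserialize<string[]>(json) ?? Array.Empty<string>();
    }

    // Returns the label at the position of the highest score.
    public static string LabelForScores(string[] labels, float[] scores)
    {
        if (labels.Length == 0 || labels.Length != scores.Length)
        {
            throw new ArgumentException("Labels and scores must be non-empty and the same length.");
        }

        int best = 0;
        for (int i = 1; i < scores.Length; i++)
        {
            if (scores[i] > scores[best])
            {
                best = i;
            }
        }
        return labels[best];
    }
}

public static class Program
{
    public static void Main()
    {
        // Inline stand-in for the file contents; scores are illustrative only.
        string json = "[\"daisy\",\"dandelion\",\"roses\",\"sunflowers\",\"tulips\"]";
        float[] scores = { 0.02f, 0.01f, 0.10f, 0.02f, 0.85f };

        string[] labels = LabelMap.Parse(json);
        Console.WriteLine(LabelMap.LabelForScores(labels, scores));
    }
}
```

In the generated solution you don't need to do this yourself, because the pipeline in MLModel.zip performs the mapping for you.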

Use the machine learning model

Open the Program.cs file from your console application. In this case, my console application is MBImageClassificationApp.

Then, replace the code with the following:

    using System;
    using MBImageClassificationAppML.Model; // auto-generated Model project

    namespace MBImageClassificationApp
    {
        class Program
        {
            static void Main(string[] args)
            {
                // Point the model input at the image to classify.
                ModelInput image = new ModelInput
                {
                    ImageSource = "Pink_Tulips.jpg"
                };

                // Load the trained model and predict the flower category.
                var prediction = ConsumeModel.Predict(image);

                Console.WriteLine($"Actual: tulips | Predicted: {prediction.Prediction}");
            }
        }
    }

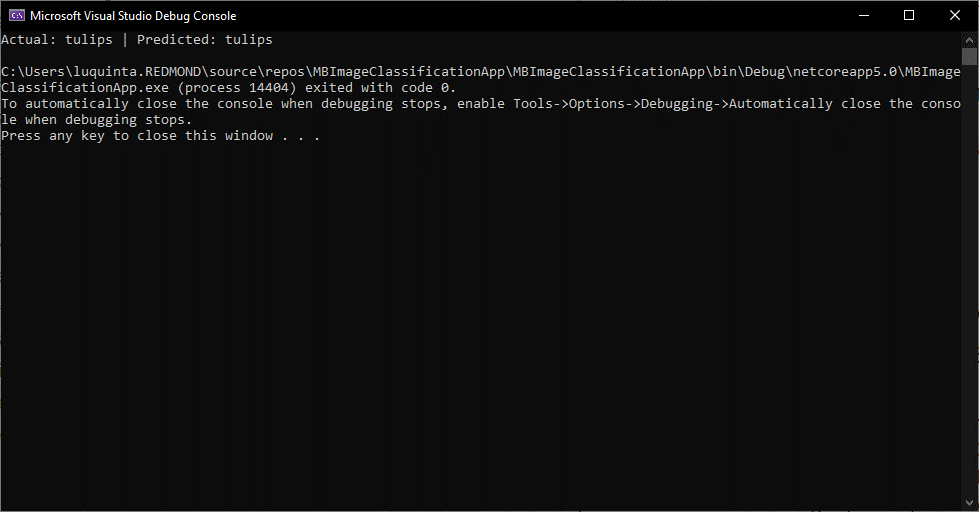

The first thing I’ve done is add a using statement that references the MBImageClassificationAppML.Model project. To test my application, I downloaded an image of tulips called Pink_Tulips.jpg from the internet and included it in my MBImageClassificationApp project. The model has not seen this image before. The code creates a new instance of ModelInput and sets its ImageSource property to the path of the image.

Then, the code calls ConsumeModel.Predict with the sample image as input. ConsumeModel.Predict is a helper method that loads the MLModel.zip file and uses PredictionEngine, a convenience API for making predictions on a single instance of data. Finally, both the actual category of the image and the predicted flower category are printed to the console.
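If you're curious what a helper like ConsumeModel.Predict roughly does, the sketch below loads the serialized pipeline and creates a PredictionEngine by hand. It is not the generated code: `ManualPrediction` is a name I made up, and I'm assuming the generated output type is called `ModelOutput`. It only compiles inside a project that references the Microsoft.ML package and the generated Model project.

```csharp
using System;
using Microsoft.ML;
using MBImageClassificationAppML.Model; // generated Model project from this post

public static class ManualPrediction
{
    // Loads the serialized pipeline and scores one input.
    // ModelOutput is assumed to be the generated output class.
    public static ModelOutput Predict(string modelPath, ModelInput input)
    {
        var mlContext = new MLContext();

        // Load the ML.NET pipeline; the input schema it returns is not needed here.
        ITransformer model = mlContext.Model.Load(modelPath, out DataViewSchema _);

        // PredictionEngine scores a single instance at a time and is not thread-safe,
        // so this sketch creates one per call and disposes it afterwards.
        using var engine = mlContext.Model.CreatePredictionEngine<ModelInput, ModelOutput>(model);
        return engine.Predict(input);
    }
}

public static class ManualPredictionDemo
{
    public static void Run()
    {
        var input = new ModelInput { ImageSource = "Pink_Tulips.jpg" };
        ModelOutput result = ManualPrediction.Predict("MLModel.zip", input);
        Console.WriteLine($"Predicted: {result.Prediction}");
    }
}
```

Creating the engine on every call keeps the sketch short; for repeated predictions you would probably want to create it once and reuse it.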

When you run the application, the console prints a single line in the format Actual: tulips | Predicted: <category>, where <category> is the flower category the model predicted.

Clean up resources

Azure Machine Learning Enterprise workspaces are currently in preview. Therefore, there is no additional surcharge at the moment. Training this model cost less than $0.50. Your costs may vary depending on the amount of data and the size of your compute. For more information on pricing, see the Linux Virtual Machine Scale Sets pricing page.
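As a back-of-the-envelope check, compute cost is roughly the VM's hourly rate multiplied by the time it runs. The sketch below uses a placeholder rate of $0.90 per hour, which is not an actual Azure price, together with the roughly 30 minutes of training mentioned earlier. Look up the real rate for your VM size on the pricing page.

```csharp
using System;
using System.Globalization;

public static class TrainingCost
{
    // Estimated cost in US dollars: hourly rate times hours used, rounded to cents.
    public static decimal Estimate(decimal hourlyRateUsd, TimeSpan trainingTime)
    {
        return Math.Round(hourlyRateUsd * (decimal)trainingTime.TotalHours, 2);
    }
}

public static class Program
{
    public static void Main()
    {
        // Placeholder rate for illustration only, not a real Azure price.
        decimal hypotheticalHourlyRate = 0.90m;
        TimeSpan trainingTime = TimeSpan.FromMinutes(30);

        decimal cost = TrainingCost.Estimate(hypotheticalHourlyRate, trainingTime);
        Console.WriteLine(cost.ToString("0.00", CultureInfo.InvariantCulture));
    }
}
```

The estimate ignores provisioning time and storage, so actual charges may be somewhat different.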

If you no longer plan to use the Azure resources you’ve created, delete them. This prevents you from being charged for unutilized resources that are still running.

- Navigate to the Azure portal and select Resource groups in the portal menu.

- From the list of resource groups, select the resource group you created.

- Select Delete resource group and follow the instructions in the prompt to delete your resource group.

Share your feedback

Since Model Builder is still in Preview, your feedback is super important in driving the direction of this tool and ML.NET in general. We would love to hear your feedback!

If you run into any issues, please let us know by creating an issue in our GitHub repo.

Virtual ML.NET Conference

Join the community May 29-30 for a free online conference.

The conference will have a full-day hands-on workshop, followed by another day of sessions.

Sign up to attend or submit a talk.

See you there!

Additional resources

Watch this video to see how quick it is to train and consume an image classification model to recognize clothing garments and accessories in a Blazor web application.

Check out this end-to-end sample that uses satellite images to determine land use.

Learn more about Model Builder from Microsoft Docs.

Not currently using Visual Studio? Try out the ML.NET CLI.

Hi, I'm using VS2019 Pro version 16.6.1, so I assume I can use ML.NET. I've also downloaded the ML.NET sample code from GitHub and was able to compile and run it. However, going through this article, I could not find the Model Builder option when I right-clicked my console project and chose Add. I saw from your screen capture that it falls after "REST api client" and before "COM reference", but in my case I only see "REST api client" followed by "COM reference", no "Model Builder". I went into tools/options/Environment/Preview features to check "enable ML.net...

Sorry, I only just realised I have to install the Model Builder extension in VS to see that menu item. I'm able to train now 😀

Do we need a paid subscription in Azure, or is the free trial enough?

same question as mekki ahmedi

Nice to see image classification models becoming easier to create. Will object detection be available too in the future?

I’m just a simple C# developer. Looking at the tutorials and docs on how to use Tensorflow/Pytorch, Python, all the algorithms and the different types of datasets makes me avoid using ML in my applications for now.

Hi, Luis.

How can I use a URL instead of a file name when creating an instance of ModelInput:

new ModelInput { ImageSource = "Pink_Tulips.jpg" }

Thank you

Hi,

So this particular model only supports image files as input. What you can do is use HttpClient to download the image from the web and save it to a temp file. Then, use the temp file path for the ImageSource property.
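Here's a rough sketch of that approach. `ImageDownloader` is a helper name I made up, and falling back to a `.jpg` extension when the URL has none is an assumption. The call into the generated ConsumeModel class is left as a comment so the sketch runs on its own.

```csharp
using System;
using System.IO;
using System.Net.Http;
using System.Threading.Tasks;

public static class ImageDownloader
{
    // Downloads the image at imageUri into a new temp file and returns the file path.
    public static async Task<string> DownloadToTempFileAsync(HttpClient client, Uri imageUri)
    {
        // Keep the URL's extension if it has one; otherwise assume .jpg.
        string extension = Path.GetExtension(imageUri.AbsolutePath);
        if (string.IsNullOrEmpty(extension))
        {
            extension = ".jpg";
        }

        string tempPath = Path.Combine(
            Path.GetTempPath(), Guid.NewGuid().ToString("N") + extension);

        using HttpResponseMessage response = await client.GetAsync(imageUri);
        response.EnsureSuccessStatusCode();

        byte[] bytes = await response.Content.ReadAsByteArrayAsync();
        await File.WriteAllBytesAsync(tempPath, bytes);
        return tempPath;
    }
}

public static class Program
{
    public static async Task Main(string[] args)
    {
        if (args.Length == 0)
        {
            Console.WriteLine("Pass an image URL as the first argument.");
            return;
        }

        using var client = new HttpClient();
        string path = await ImageDownloader.DownloadToTempFileAsync(client, new Uri(args[0]));
        Console.WriteLine($"Saved image to {path}");

        // In the app from this post, the temp path would then be used like this:
        // var prediction = ConsumeModel.Predict(new ModelInput { ImageSource = path });
        // Delete the temp file once the prediction has been made.
    }
}
```

Passing the HttpClient in as a parameter lets you reuse one client across downloads instead of creating a new one each time.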