DirectML at GDC 2019

Introduction

Last year at GDC, we shared our excitement about the many possibilities for using machine learning in game development. If you’re unfamiliar with machine learning or neural networks, I strongly encourage you to check out our blog post from last year, which is a primer for many of the topics discussed in this post.

This year, we’re furthering our commitment to enable ML in games by making DirectML publicly available for the first time. We continuously engage with our customers and heard the need for a GPU-inferencing API that gives developers more control over their workloads to make integration with rendering engines easier. With DirectML, game developers write code once and their ML scenario works on all DX12-capable GPUs – a hardware agnostic solution at the operator level. We provide the consistency and performance required to integrate innovations in ML into rendering engines.

Additionally, Unity announced plans to support DirectML in their Unity Inference Engine that powers Unity ML Agents. Their decision to adopt DirectML was driven by the available hardware acceleration on Windows platforms while maintaining control of the data locality and the execution flow. By utilizing the regular graphics pipeline, they are saving on GPU stalls and have full integration with the rendering engine. Unity is in the process of integrating DirectML into their inference engine to allow developers to take advantage of metacommands and other optimizations available with DirectML.

We are very excited about our collaboration with Unity and the promise this brings to the industry. Providing developers fast inferencing across a broad set of platforms democratizes machine learning in games and improves the industry by proving out that ML can be integrated well with rendering work to enable novel experiences for gamers. With DirectML, we want to ensure that applications run well across all Windows hardware and empower developers to confidently ship machine learning models on lightweight laptops and hardcore gaming rigs alike. From a single model to a custom inference engine, DirectML will give you the most out of your hardware.

Why DirectML

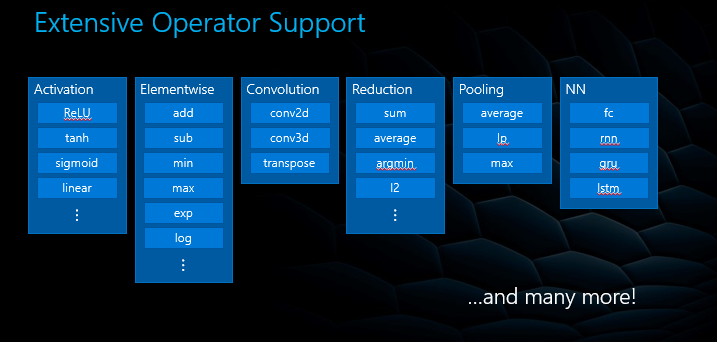

Many new real-time inferencing scenarios have been introduced to the developer community over the last few years through cutting edge machine learning research. Some examples of these are super resolution, denoising, style transfer, game testing, and tools for animation and art. These models are computationally expensive but in many cases are required to run in real-time. DirectML enables these to run with high-performance by providing a wide set of optimized operators without the overhead of traditional inferencing engines.

To further enhance performance on the operators that customers need most, we work directly with hardware vendors, like Intel, AMD, and NVIDIA, to directly to provide architecture-specific optimizations, called metacommands. Newer hardware provides advances in ML performance through the use of FP16 precision and designated ML space on chips. DirectML’s metacommands provide vendors a way of exposing those advantages through their drivers to a common interface. Developers save the effort of hand tuning for individual hardware but get the benefits of these innovations.

DirectML is already providing some of these performance advantages by being the underlying foundation of WinML, our high-level inferencing engine that powers applications outside of gaming, like Adobe, Photos, Office, and Intelligent Ink. The API flexes its muscles by enabling applications to run on millions of Windows devices today.

Getting Started

DirectML is available today in the Windows Insider Preview and will be available more broadly in our next release of Windows. To help developers learn this exciting new technology, we provided a few resources below, including samples that show developers how to use DirectML in real-time scenarios and exhibit our recommended best practices.

Documentation: https://docs.microsoft.com/en-us/windows/desktop/direct3d12/dml

Samples: https://github.com/microsoft/DirectML-Samples

If you were unable to attend our GDC talk this year, slides containing more in-depth information about the API and best practices will be available here in the coming days. We will be releasing the super-resolution demo featured in this deck as an open source sample, coming soon. Stay tuned to the GitHub account above.

Light

Light Dark

Dark

1 comment

Will Intel 4th and 5th generations also support DirectML? Actually, those architecture are stuck with WDDM 2.0 drivers, so will DML require updated drivers to support the last driver model?