Secure software supply chain with Azure Pipelines artifact policies

We are announcing a preview capability for Azure Pipelines that lets you define artifact policies, which are enforced before deploying to critical environments such as production. You can define custom policies that are evaluated against all the deployable artifacts in a given pipeline run and that block the deployment if the artifacts don’t comply. At launch, we support container images and Kubernetes environments; support for other artifact types and target environment resources will be added in the coming months.

This feature is currently in private preview, and if you’d like to participate, please drop us a note.

Teams know how valuable and sensitive production environments are, and changes to them usually require following multiple checklists from the security, operations and engineering teams, among others. However, sometimes the protocol is not fully respected. When that happens, audit teams raise red flags, and after some root-cause analysis and soul searching, teams settle on an updated process that prevents the lapse from recurring but most likely increases their mean time to deliver (MTD).

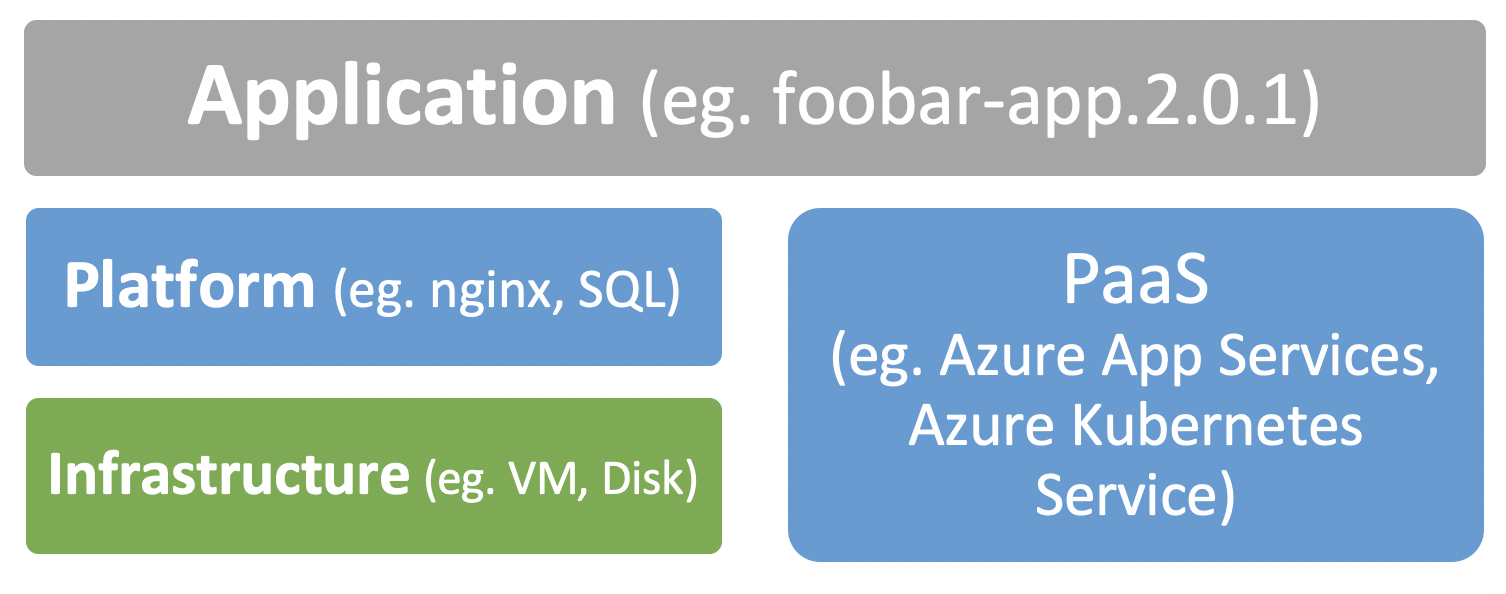

At a high level, an application environment can be described as three layers: infrastructure, application platform, and application.

Each of these layers can be described as code, and each has its own set of best practices to follow. This article focuses on the application layer, which is updated most frequently and is the interface through which external systems and users interact.

So, when it comes to checklists in the context of an application update, what are the usual suspects?

For example:

- Allow application binaries only from trusted sources

- Application binaries have been built from a trusted source control repository

- Allow production deployment only when an application has been deployed and tested in a staging environment

- Analysis tools such as code coverage and lint have been run (with acceptable thresholds)

- Specific tests have been run against the application bits (with acceptable thresholds)

- No known vulnerabilities found above severity level: medium

- Green light from a custom tool

This is a supply chain problem: the goods (the application artifact) to be delivered (to the production system) go through various waypoints (build, test, analysis), and the shipment is tracked in a waybill (a record of where the artifact originated and the processes it’s been through). Not surprisingly, the term “software supply chain” has been picking up in recent years. Let’s say we have an artifact with all the attributions related to the build, tests and other processes it’s been through, and there are policies defined by the teams collectively: can we now eliminate the manual intervention before production deployment? Here’s how artifact policies can be put to work.

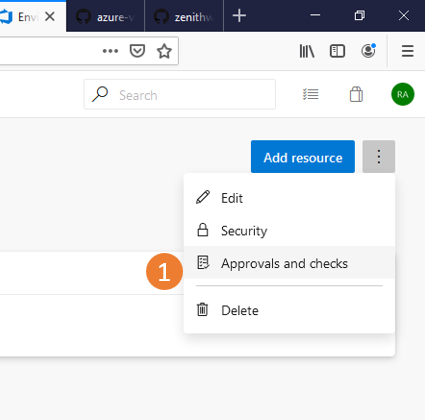

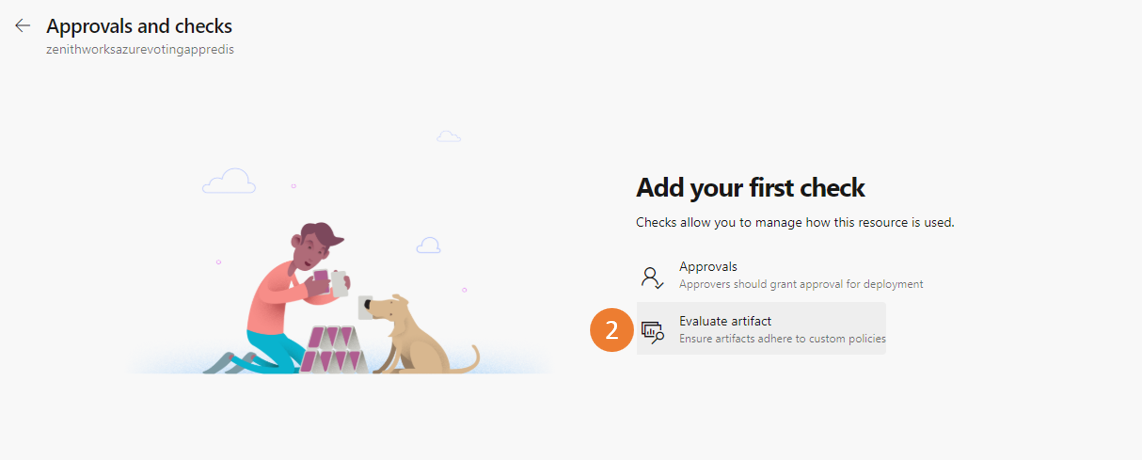

The artifact policy is configured as a Check on an Environment.

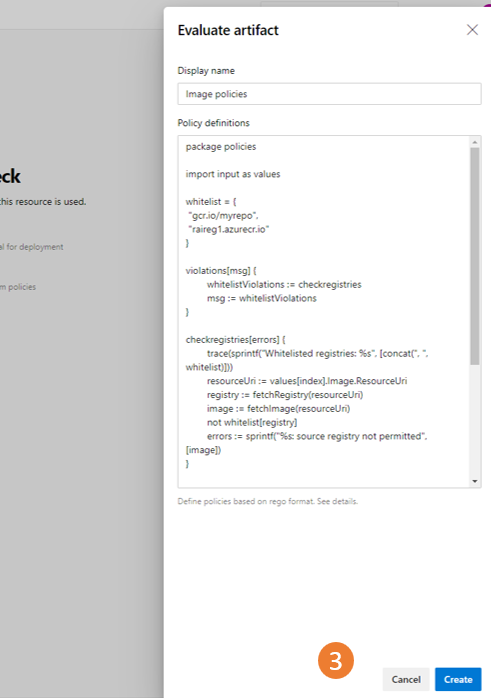

The Check configuration lets you specify custom policies to enforce; we have a set of examples to help you get started. Policies are evaluated with the Open Policy Agent.

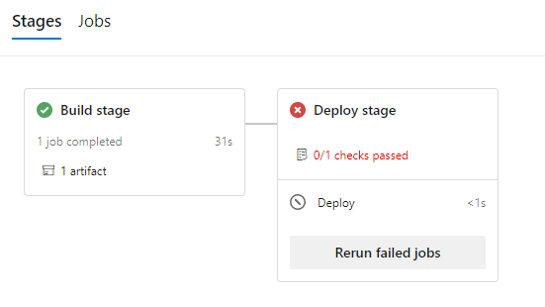

After you define a policy, metadata is automatically attached to the resulting artifact (the container image) whenever a container image is built, tested or deployed; you can also add custom metadata if desired. When a run reaches an environment with the Artifact policy Check configured, the custom policies are evaluated before the deployment stage runs. As part of the evaluation, metadata for all the pipeline images (images either consumed via resources or built in any previous stage) is retrieved, and the policy is evaluated for every deployable image. If the images comply, they can be deployed; otherwise the pipeline halts with an error.
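The evaluation flow described above can be approximated with a short Python sketch. It is not Azure Pipelines code, and it stands in for the real policy engine (the Open Policy Agent) with a plain Python callable. The names `run_artifact_gate`, `DeploymentBlocked`, `fetch_metadata` and `policy` are hypothetical and exist only for illustration.

```python
from typing import Callable, Iterable


class DeploymentBlocked(Exception):
    """Raised when at least one pipeline image fails the policy."""

    def __init__(self, failures: dict):
        self.failures = failures
        super().__init__(
            f"artifact policy failed for: {', '.join(sorted(failures))}"
        )


def run_artifact_gate(
    consumed_images: Iterable[str],
    built_images: Iterable[str],
    fetch_metadata: Callable[[str], dict],
    policy: Callable[[dict], list],
) -> list:
    """Evaluate `policy` for every pipeline image before deployment starts.

    Returns the images cleared for deployment, or raises DeploymentBlocked
    carrying every violation found, so nothing is deployed on failure.
    """
    # Pipeline images are those consumed via resources plus those built earlier.
    images = [*consumed_images, *built_images]
    failures = {}
    for image in images:
        violations = policy(fetch_metadata(image))
        if violations:
            failures[image] = violations
    if failures:
        raise DeploymentBlocked(failures)
    return images
```

The sketch checks every image before raising, rather than stopping at the first failure, so a single blocked run reports all non-compliant images at once.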

And there it is: Azure Pipelines captured the artifact’s provenance and provided a mechanism to enforce custom policies before the artifact could be deployed to a production environment – instantly, without you having to manually validate it every single time!

You can learn more by looking at the artifact policy check documentation and reading about writing a custom policy. Additionally, you can check out sample policies.

If you have any feedback, get in touch by posting on our Developer Community or reaching out on Twitter at @AzureDevOps.
