Microsoft announced the new Azure Data Lake services for analytics in the cloud, which include a hyper-scale repository; a new analytics service built on YARN that allows data developers and data scientists to analyze all data; and HDInsight, a fully managed Hadoop, Spark, Storm, and HBase service. Azure Data Lake Analytics includes U-SQL, a language that unifies the benefits of SQL with the expressive power of your own code. U-SQL’s scalable distributed query capability enables you to efficiently analyze data in the store and across relational stores such as Azure SQL Database. In this blog post I will outline the motivation for U-SQL, some of our inspiration, and the design philosophy behind the language, and show you a few examples of its major aspects.

Why U-SQL?

If you analyze the characteristics of Big Data analytics, several requirements arise naturally for an easy-to-use yet powerful language:

- Process any type of data. From analyzing BotNet attack patterns from security logs to extracting features from images and videos for machine learning, the language needs to enable you to work on any data.

- Use custom code easily to express your complex, often proprietary business algorithms. The example scenarios above may all require custom processing that is not easily expressed in standard query languages, ranging from user-defined functions to custom input and output formats.

- Scale efficiently to any size of data without you focusing on scale-out topologies, plumbing code, or limitations of a specific distributed infrastructure.

How do existing Big Data languages stack up to these requirements?

SQL-based languages (such as Hive and others) provide you with a declarative approach that natively does the scaling, parallel execution, and optimizations for you. This makes them easy to use, familiar to a wide range of developers, and powerful for many standard types of analytics and warehousing. However, their extensibility model and support for non-structured data and files are often bolted on and harder to use. For example, even if you just want to quickly explore your data in a file or remote data source, you need to create catalog objects to schematize file data or remote sources before you can query them, which reduces your agility. And although SQL-based languages often have several extensibility points for custom formatters, user-defined functions, and aggregators, they are rather complex to build, integrate, and maintain, with varying degrees of consistency in the programming models.

Programming language-based approaches to processing Big Data, for their part, provide an easy way to add your custom code. However, a programmer often has to explicitly code for scale and performance, often down to managing the execution topology and workflow, such as the synchronization between the different execution stages or the scale-out architecture. This code can be difficult to write correctly and to optimize for performance. Some frameworks support declarative components such as language-integrated queries or embedded SQL support. However, the SQL may be integrated as strings and thus lack tool support, and the extensibility integration may be limited or, because procedural code does not guard against side effects, hard to optimize and hard to reuse.

Taking the issues of both SQL-based and procedural languages into account, we designed U-SQL from the ground up as an evolution of the declarative SQL language with native extensibility through user code written in C#. This unifies both paradigms, unifies structured, unstructured, and remote data processing, unifies the declarative and custom imperative coding experience, and unifies the experience around extending your language capabilities.

U-SQL is built on the learnings from Microsoft’s internal experience with SCOPE and existing languages such as T-SQL, ANSI SQL, and Hive. For example, we base our SQL and programming language integration and the execution and optimization framework for U-SQL on SCOPE, which currently runs hundreds of thousands of jobs each day internally. We also align the metadata system (databases, tables, etc.), the SQL syntax, and language semantics with T-SQL and ANSI SQL, the query languages most of our SQL Server customers are familiar with. And we use C# data types and the C# expression language so you can seamlessly write C# predicates and expressions inside SELECT statements and use C# to add your custom logic. Finally, we looked to Hive and other Big Data languages to identify patterns and data processing requirements and integrate them into our framework.

In short, basing the U-SQL language on these existing languages and experiences should make it easy for you to get started and powerful enough for the hardest problems.

Show me U-SQL!

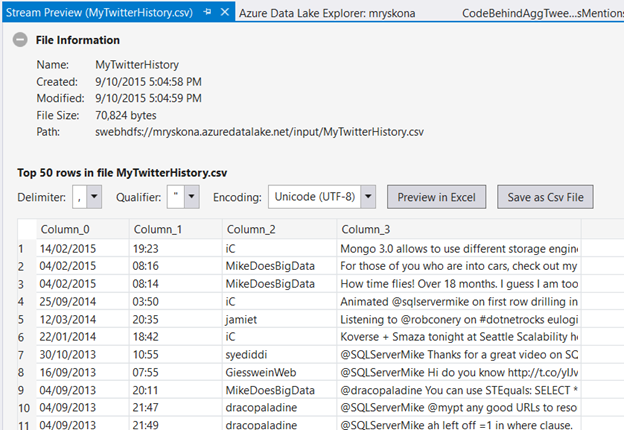

Let’s assume that I have downloaded my Twitter history of all my tweets, retweets, and mentions as a CSV file and placed it into my Azure Data Lake Store.

In this case I know the schema of the data I want to process, and for starters I want to just count the number of tweets for each of the authors in the tweet “network”:

    @t = EXTRACT date string
               , time string
               , author string
               , tweet string
         FROM "/input/MyTwitterHistory.csv"
         USING Extractors.Csv();

    @res = SELECT author
                , COUNT(*) AS tweetcount
           FROM @t
           GROUP BY author;

    OUTPUT @res TO "/output/MyTwitterAnalysis.csv"
    ORDER BY tweetcount DESC
    USING Outputters.Csv();

The above U-SQL script shows the three major steps of processing data with U-SQL:

- Extract data from your source. Note that you just schematize it in your query with the EXTRACT statement. The data types are based on C# data types, and I use the built-in Extractors library to read and schematize the CSV file.

- Transform using SQL and/or custom user-defined operators (which we will cover another time). In the example above, it is a familiar SQL expression that does a GROUP BY aggregation.

- Output the result either into a file or into a U-SQL table to store it for further processing.

Note that U-SQL’s SQL keywords have to be upper-case to differentiate them syntactically from C# expressions that use the same keywords with a different meaning.

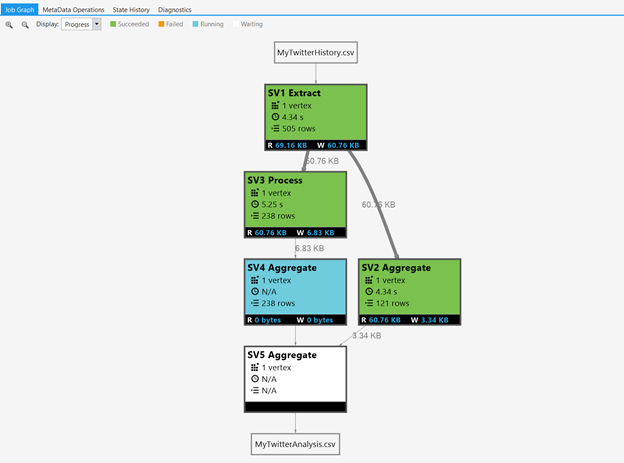

Also notice that each of the expressions is assigned to a variable (@t and @res). This allows U-SQL to transform and combine data step by step, expressed as an incremental expression flow using functional lambda composition (similar to what you find in the Pig language). The execution framework then composes the expressions into a single expression. That single expression can be globally optimized and scaled out in a way that isn’t possible if expressions are executed line by line. The following picture shows you the graph generated for the next query in this blog post:

Going back to our example, I now want to add additional information about the people mentioned in the tweets and extend my aggregation to return how often people in my tweet network are authoring tweets and how often they are being mentioned. Because I can use C# to operate on the data, I can use an inline C# LINQ expression to extract the mentions into an ARRAY. Then I turn the array into a rowset with EXPLODE and apply the EXPLODE to each row’s array with a CROSS APPLY. I union the authors with the mentions, but need to drop the leading @-sign to align it with the author values. This is done with another C# expression where I take the Substring starting at position 1.

    @t = EXTRACT date string
               , time string
               , author string
               , tweet string
         FROM "/input/MyTwitterHistory.csv"
         USING Extractors.Csv();

    @m = SELECT new SQL.ARRAY<string>(
                    tweet.Split(' ').Where(x => x.StartsWith("@"))) AS refs
         FROM @t;

    @t = SELECT author, "authored" AS category
         FROM @t
         UNION ALL
         SELECT r.Substring(1) AS r, "mentioned" AS category
         FROM @m CROSS APPLY EXPLODE(refs) AS Refs(r);

    @res = SELECT author
                , category
                , COUNT(*) AS tweetcount
           FROM @t
           GROUP BY author, category;

    OUTPUT @res TO "/output/MyTwitterAnalysis.csv"
    ORDER BY tweetcount DESC
    USING Outputters.Csv();
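To make the roles of EXPLODE, CROSS APPLY, and UNION ALL in the script above more concrete, here is a rough in-memory analogue written in plain C# LINQ. It is only a sketch of what the script computes on a handful of rows, not how the service executes or scales the query. The class name `TweetNetworkSketch`, the method `Analyze`, and the sample tweets are all made up for illustration, and the date and time columns are left out because the query never uses them.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class TweetNetworkSketch
{
    // Hypothetical helper: mirrors the @m, @t and @res steps of the script on in-memory rows.
    public static List<(string Name, string Category, int TweetCount)> Analyze(
        IEnumerable<(string Author, string Tweet)> rows)
    {
        var rowList = rows.ToList();

        // First branch of the UNION ALL: one "authored" row per tweet.
        var authored = rowList.Select(t => (Name: t.Author, Category: "authored"));

        // @m plus CROSS APPLY EXPLODE: SelectMany yields one row per mention in each tweet;
        // Substring(1) drops the leading @ so the name lines up with the author values.
        var mentioned = rowList
            .SelectMany(t => t.Tweet.Split(' ').Where(x => x.StartsWith("@")))
            .Select(r => (Name: r.Substring(1), Category: "mentioned"));

        // Concat keeps duplicates, like UNION ALL; then GROUP BY name, category with COUNT(*),
        // sorted by count descending as in the OUTPUT statement.
        return authored.Concat(mentioned)
            .GroupBy(x => x)
            .Select(g => (Name: g.Key.Name, Category: g.Key.Category, TweetCount: g.Count()))
            .OrderByDescending(x => x.TweetCount)
            .ToList();
    }

    public static void Main()
    {
        // Made-up sample data standing in for MyTwitterHistory.csv.
        var rows = new[]
        {
            (Author: "alice", Tweet: "@bob nice talk"),
            (Author: "alice", Tweet: "reading about U-SQL with @bob and @carol"),
            (Author: "bob", Tweet: "thanks @alice"),
        };

        foreach (var r in Analyze(rows))
        {
            Console.WriteLine($"{r.Name},{r.Category},{r.TweetCount}");
        }
    }
}
```

The mapping is the point here: EXPLODE applied through CROSS APPLY behaves like `SelectMany`, UNION ALL like `Concat`, and the final aggregation like `GroupBy` followed by `Count`.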

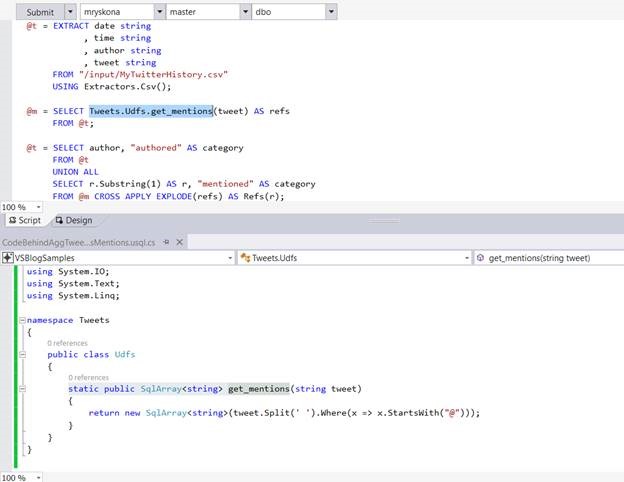

As a next step I can use the Azure Data Lake Tools for Visual Studio to refactor the C# code into C# functions using the tool’s code-behind functionality. When I then submit the script, it automatically deploys the code to the service.

I can also deploy and register the code as an assembly in my U-SQL metadata catalog. This allows me and other people to use the code in future scripts. The following script shows how to refer to the functions, assuming the assembly was registered as TweetAnalysis:

    REFERENCE ASSEMBLY TweetAnalysis;

    @t = EXTRACT date string
               , time string
               , author string
               , tweet string
         FROM "/input/MyTwitterHistory.csv"
         USING Extractors.Csv();

    @m = SELECT Tweets.Udfs.get_mentions(tweet) AS refs
         FROM @t;

    @t = SELECT author, "authored" AS category
         FROM @t
         UNION ALL
         SELECT Tweets.Udfs.cleanup_mentions(r) AS r, "mentioned" AS category
         FROM @m CROSS APPLY EXPLODE(refs) AS Refs(r);

    @res = SELECT author
                , category
                , COUNT(*) AS tweetcount
           FROM @t
           GROUP BY author, category;

    OUTPUT @res TO "/output/MyTwitterAnalysis.csv"
    ORDER BY tweetcount DESC
    USING Outputters.Csv();

Because I noticed that I need to do a bit more cleanup around the mentions besides just dropping the @ sign, the assembly also contains a cleanup_mentions function that does additional processing beyond dropping the @.
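The post does not show the C# behind these two functions, so the following is only a sketch of what such a class could look like. The namespace `Tweets` and class `Udfs` follow the names used in the script; the method bodies are assumptions. In particular, trimming trailing punctuation is just one plausible example of "additional processing", not necessarily what the registered assembly does. The sketch assumes the project references the U-SQL type library that provides `SqlArray<T>`, the C# type behind `SQL.ARRAY`.

```csharp
using System.Linq;
using Microsoft.Analytics.Types.Sql; // U-SQL type library; provides SqlArray<T>

namespace Tweets
{
    public static class Udfs
    {
        // Assumed body: same logic as the inline LINQ expression shown earlier,
        // returned as SqlArray<string> so the script can EXPLODE it.
        // A null tweet is treated as empty and yields an empty array.
        public static SqlArray<string> get_mentions(string tweet)
        {
            return new SqlArray<string>(
                (tweet ?? string.Empty).Split(' ').Where(x => x.StartsWith("@")));
        }

        // Assumed cleanup: drops leading @ characters and, as an illustrative extra,
        // trailing punctuation such as the ':' in "@bob:".
        public static string cleanup_mentions(string mention)
        {
            return mention.TrimStart('@').TrimEnd(':', ',', '.', '!', '?');
        }
    }
}
```

With a body like this, a token such as `@bob:` would be counted under the same name as the author value `bob`, which plain `Substring(1)` would not achieve.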

This is why U-SQL!

I hope you got a glimpse of why we think U-SQL makes it easy to query and process Big Data and that you understand our thinking behind the language. Over the next couple of weeks we will expand on the language design philosophy and provide more sample code and scenarios over at our Big Data topic in the Azure blog. We’ll also dive deeper into many of the additional capabilities, such as:

- Operating over sets of files with patterns

- Using (Partitioned) Tables

- Federated Queries against Azure SQL DB

- Encapsulating your U-SQL code with Views, Table-Valued Functions, and Procedures

- SQL Windowing Functions

- Programming with C# User-defined Operators (custom extractors, processors)

- Complex Types (MAP, ARRAY)

- Using U-SQL in data processing pipelines

- U-SQL in a lambda architecture for IoT analytics

U-SQL makes Big Data processing easy because it:

- Unifies declarative queries with the expressiveness of your user code

- Unifies querying structured and unstructured data

- Unifies local and remote queries

- Increases productivity and agility from Day 1 for YOU!

Not Just U-SQL – Azure Data Lake provides Productivity on All Your Data

U-SQL is just one of the ways that we are working to make Azure Data Lake the most productive environment for authoring, debugging, and optimizing analytics at any scale. With rich support for authoring and monitoring Hive jobs, a C#-based authoring model for building Storm jobs for real-time streaming, and support for every stage of the job lifecycle from development to operationalization, the Azure Data Lake services let you focus on the questions you want to answer rather than on debugging distributed infrastructure. Our goal is to make big data technology simpler and more accessible to the greatest number of people possible: big data professionals, engineers, data scientists, analysts, and application developers.

Get started with Big Data processing in Azure using HDInsight, sign up to join the Azure Data Lake Analytics Preview, and give us your feedback!

Michael Rys (@MikeDoesBigData)

Michael has been doing data processing and query languages since the 1980s. Among other things, he has been representing Microsoft on the XQuery and SQL design committees and has taken SQL Server beyond relational with XML, Geospatial, and Semantic Search. Currently he is working on Big Data query languages such as SCOPE and U-SQL when he is not enjoying time with his family under water or at autocross.
