Summary: Microsoft PFE, Georges Maheu, optimizes the Windows PowerShell script he presented yesterday.

Microsoft Scripting Guy, Ed Wilson, is here. Our guest blogger today is Georges Maheu. Georges presented a script yesterday to gather Windows services information in an Excel spreadsheet. Although the script did an adequate job for a small number of computers, it does not scale well for hundreds of computers.

Note: All of the files from today, in addition to files for the entire week are in a zip file in the Script Repository. Georges’ team blog can be found at PFE Blog OpsVault.

Take it away Georges…

Today, we look at scaling up yesterday’s script. As seen, the script makes an inventory of all services running on your servers and identifies those that are using non-standard accounts. The inventory is presented in an Excel spreadsheet.

The first version of the script was linear and simple but somewhat slow; it works well for a small to mid-size environment, but it does not scale well for larger environments.

When doing security assessments, most of the time, only a subset of computers is considered. The objective of an assessment is to locate a few examples of items to correct, not to make a complete inventory. However, when doing remediation planning, a complete inventory is required to evaluate the potential impact of applying modifications to the environment. The script presented yesterday is fine for doing assessments or complete inventory of smaller environments. To make a complete inventory of a larger environment, the script performance needs to be optimized.

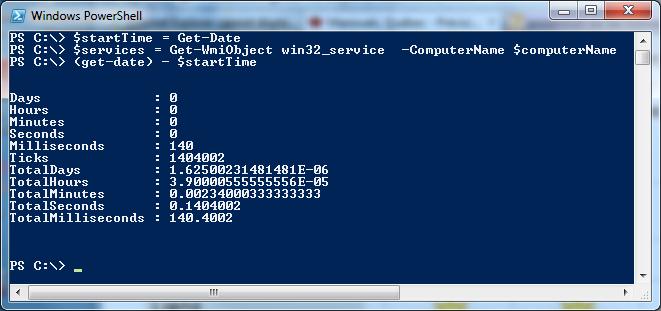

Unfortunately, I am not aware of profiler tools for Windows PowerShell. A profiler is a tool that enables you to compute the time spent in each line of code when running a script. There are other ways of estimating where most of your script spends its time. One of them is to create your own timers and track time at various locations.

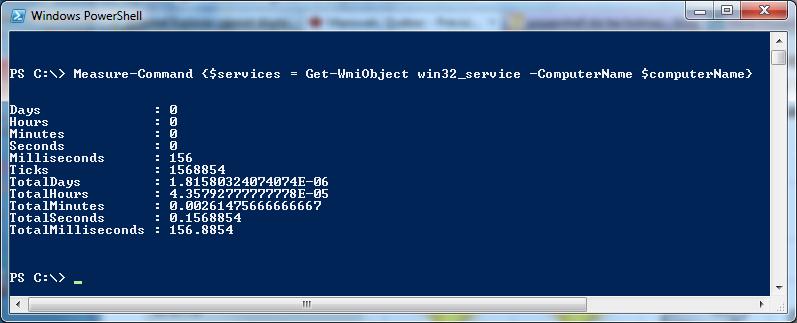

Another option is to use the Measure-Command cmdlet.

After you instrument the original script, the following statistics are generated:

WMI query: ~ 0.25 seconds for responding local computer

WMI query: ~ 1.00 seconds for responding remote computer

WMI query: ~ 20.00 seconds for non-responding computer

Ping dead computer: ~2.5 seconds

Writing sheet headers: ~ 1.5 seconds

Writing sheet data: ~ 120 seconds

The two main pain points are making a WMI query on a non-responding , which takes about 20 seconds, and writing the data to Excel. If the target computers are servers, these should be few and far apart. However, if these are workstations, investing more time may be needed to address the non-responding computer issues.

In any case, the big gain is obviously with writing data to Excel. After doing some research, the fastest way to write to Excel seems to be to write a range of data rather than writing it cell by cell. Several techniques could be used to achieve this data transfer. Let’s explore two of them…

First method: CSV files

The result of the WMI query can be exported into a CSV file with the Export-CSV cmdlet, and then read directly from Excel with the $excel.workbooks.open() method. Although this looks simple, there is some gymnastics and plumbing involved to get this working. We will look into this further later this week.

Second method: Clipboard

The result of the WMI query can be copied to the clipboard and then pasted into each spreadsheet. This method also avoids having to write the spreadsheet header.

The first step is to load the clipboard class:

Add-Type -Assembly PresentationCore

Warning: The PresentationCore class is pretty fussy about its housing. It needs to know in which state its apartment is!

if ($host.Runspace.ApartmentState -notlike “STA”)

{

powershell -STA -file $myInvocation.myCommand.definition

}

Then the original code is modified with the following lines:

$services = Get-WmiObject win32_service `

-ComputerName $computerName

if (($error.count -eq 0))

{

$data = ($services `

| Select-Object $properties `

| ConvertTo-Csv -Delimiter “`t” -NoTypeInformation) `

-join “`r`n”

[Windows.Clipboard]::setText($data)

#Const xlPasteAll = -4104

$computerSheet.range(‘a1’).pasteSpecial(-4104)

[Windows.Clipboard]::setText(””) #clear the buffer

$computerSheet.usedRange.entireColumn.autoFit() `

| Out-Null

. . .

The WMI query is the same as before. The new element is the $data transformation. This step is required to allow pasting the services data to an appropriate format for Excel. If $services is pasted directly as follows:

[Windows.Clipboard]::setText($services)

Only partial data is copied and not parsed into columns. Excel understands the CSV format; therefore, the $service data is converted into the CSV format with the ConvertTo-CSV cmdlet. The CSV data is then converted into one big string with the –join method. Et voilà, the script is done. Well almost…

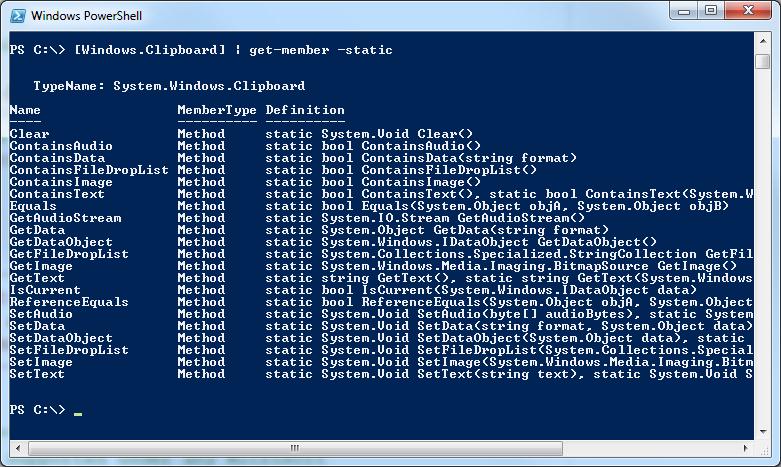

The static method SetText from the Windows.Clipboard class is used to copy data to the clipboard and then the office automation PasteSpecial method is used to paste it into Excel.

A neat trick that my good friend, Ed Wilson, showed me some time ago is how to find static methods with the Get-Member cmdlet. If [Windows.Clipboard] is sent to get-member, only a subset of the methods available is obtained. But if the Static parameter is used; the following additional methods will be displayed:

The Select-Object cmdlet is used to change the order in which the columns are output. If you remember, in the original script, variables like $columnCaption were used to determine column position.

$computerSheet.cells.item($row,$columnCaption) = $service.Caption

In this script, an array combined with the Select-Object cmdlet is used. Thereby, another modification has to be made:

$properties = `

“Name”,`

“StartName”,`

#removed some properties for brevity

“WaitHint”

Using an array this way avoids printing the headers as needed in the previous version of the script. To change the column order, simply change the order of the properties in the array.

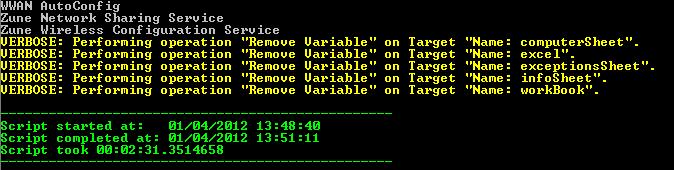

As you may recall, a test run in my home lab gathered data from 50 computers in 90 minutes with the original script. This new and improved version does the same 50 computers in less than three minutes, and it is 43 lines shorter!!

But wait, there is more…

The next challenge will be to scale this script from 50 servers to thousands of servers. Tomorrow, we will explore how we can again dramatically improve the performance of this script from three minutes down to 45 seconds by leveraging some Windows PowerShell 2.0 features.

~ Georges

Once again, thank you, Georges, for sharing with us today. The zip file you will find in the Script Repository has all the files and scripts from Georges this week. Please join us tomorrow for Part 3 in the series.

I invite you to follow me on Twitter and Facebook. If you have any questions, send email to me at scripter@microsoft.com, or post your questions on the Official Scripting Guys Forum. See you tomorrow. Until then, peace.

Ed Wilson, Microsoft Scripting Guy

0 comments