Premier Developer Consultant Sana Noorani shares data migration strategies and considerations for Dynamics 365.

In this second post of my Dynamics 365 implementation series (see first post), I discuss the data migration effort that the implementation process requires. The purpose of this series is to review my client's process and experiences in implementing an enterprise ERP system for their 50-year-old, multibillion-dollar company.

Throughout the past year, I have been able to see the company grow and implement an ERP solution called Dynamics 365 for Finance and Operations (previously known as Dynamics AX). The company first implemented the Finance portion of the product in release 1 (R1). Now, during release 2 (R2), they are working on implementing the Operations segment of Dynamics.

Environments

Throughout a typical Dynamics project, several environments are set up so that changes pushed to one environment do not affect the others. This is the model most firms follow once they have mastered the release sequence in their CI/CD pipeline.

The data migration effort begins in one environment. Once the data is loaded correctly, it is tested by the data migration team. We have a Microsoft resource working alongside a resource from the client’s side to test the data jointly. In this example, the data is loaded into an environment we call Convdat. This is a staging environment in which the groundwork is laid. After the data migration team validates the data, the business stakeholders are expected to test it.

Once this work is completed, the changes are pushed to other environments including the Master environment.

Data Migration Process

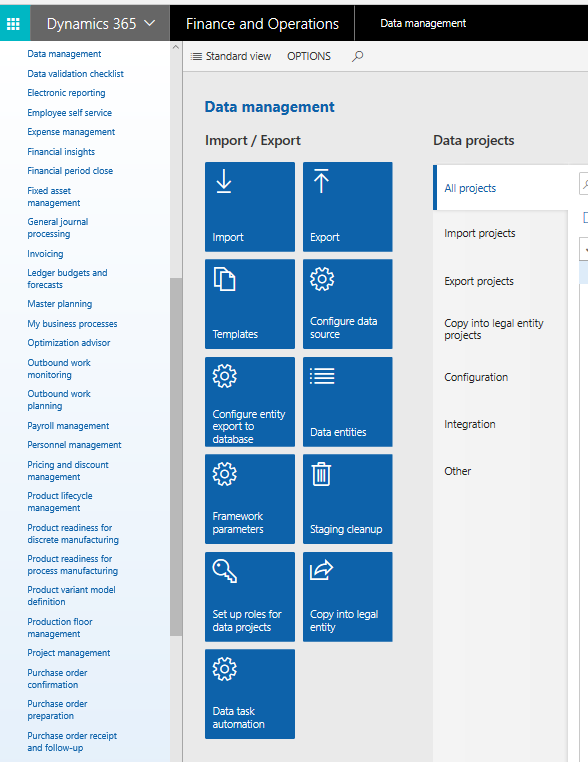

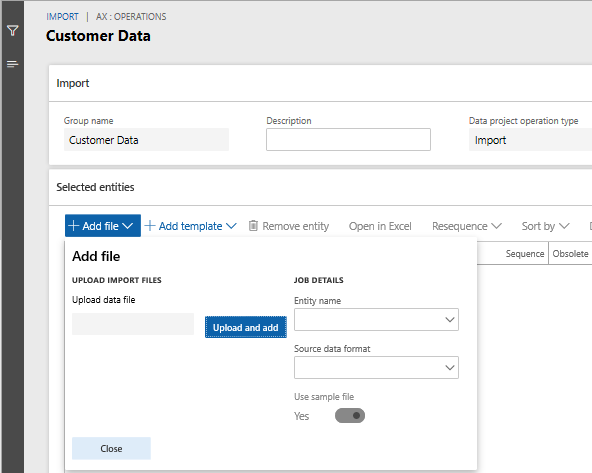

I sat down with our data migration lead, Christian Cabrera, and worked with him to better understand the process. The bulk of the data migration work is done in the workspace called “Data management.”

As solution modeling takes place, integration design documents are written in order to identify parts of the solution that can be sectioned off into a deliverable unit. For example, the client might have a piece they need to integrate into the transportation module of D365. Therefore, the integration design document will serve as a guide to address what part of the need is being met in the solution. Additionally, the design document serves as a guide to the development team that then needs to customize D365 to meet the needs of the client.

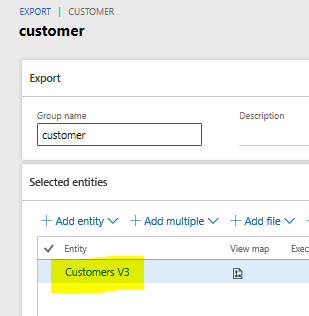

Export an Entity

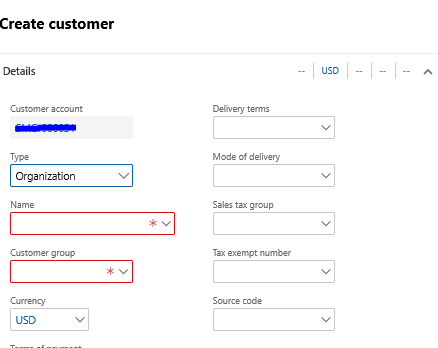

Before the data is analyzed, the fields in D365 can be exported based on the entity that is being analyzed. For instance, we may begin in the Customer module. Within the Customer module, there are a few entities we want to analyze. One of them is the Customer V3 entity.

I can export the native D365 fields from the Customer module into an Excel document, so that I get a better idea of which fields I can expect to map to in the Customer module. Below is a snippet:

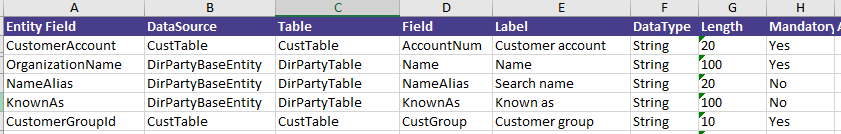

The exported fields give you a better idea of which D365 fields the client can map their data to. Additionally, we typically take sample Contoso data and provide it to the client so that they can see what kind of data is expected in a particular field. You can think of this as an XML sample. This is particularly important for the next exercise: field-to-field mapping.

Field to Field Mapping

The first step for the field-to-field mapping is to have an exported list of all the available fields in D365 for that particular entity. In this case, we are looking at the Customer V3 entity.

The list of fields that is generated by D365 is a list of the frontend and backend fields. An example of a backend field would be a computed field. An example of a frontend field would be a value you would see on the D365 UI. See the snippet below:

The client’s customer fields are compared to the D365 entity fields. The information dissected includes the entity field, the data type, the length, and whether the field is mandatory or optional in native D365.
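The comparison described above can be sketched in a few lines of Python. This is a minimal illustration, not the tooling used on the project: the `FieldSpec` class, the `compare_field` helper, and the field names and lengths in the usage note are all hypothetical and do not reflect the actual Customer V3 metadata.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class FieldSpec:
    """One row of a field-to-field mapping sheet (hypothetical structure)."""
    name: str
    data_type: str             # e.g. "string", "int", "date"
    max_length: Optional[int]  # None when length does not apply
    mandatory: bool


def compare_field(source: FieldSpec, target: FieldSpec) -> List[str]:
    """Return the issues found when mapping a client field onto a D365 field."""
    issues = []
    if source.data_type != target.data_type:
        issues.append(f"type mismatch: {source.data_type} -> {target.data_type}")
    if (source.max_length is not None and target.max_length is not None
            and source.max_length > target.max_length):
        issues.append(
            f"possible truncation: {source.max_length} > {target.max_length}")
    if target.mandatory and not source.mandatory:
        issues.append("target is mandatory but source may be empty")
    return issues
```

For example, mapping an optional 100-character client field onto a mandatory 60-character target field (both made up for illustration) would flag a possible truncation and a mandatory-field gap, which are the kinds of findings to raise with the business stakeholders.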

Microsoft asks the business stakeholders some important questions to help reach a consensus on whether a field needs to be migrated:

- Is this field needed?

- Why is it needed?

- What is it used for?

- Does the D365 field provided work for the way your system functions?

If the client answers no to the last question, an alternative field is considered. If the alternative field can be repurposed, the client can use it. Otherwise, a new field needs to be added to D365 through customization.
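The questions and the fallback path above amount to a small decision procedure. The sketch below is only one way to write it down; the `Decision` enum and the `decide_field` function are hypothetical names, and in practice the answers come out of workshops with the stakeholders rather than boolean flags.

```python
from enum import Enum


class Decision(Enum):
    SKIP = "do not migrate"
    USE_NATIVE = "map to the native D365 field"
    REPURPOSE = "repurpose an alternative D365 field"
    CUSTOMIZE = "add a new field to D365"


def decide_field(needed: bool, native_fits: bool,
                 alternative_available: bool) -> Decision:
    """Turn the stakeholders' answers into a migration decision."""
    if not needed:
        return Decision.SKIP
    if native_fits:
        return Decision.USE_NATIVE
    if alternative_available:
        return Decision.REPURPOSE
    # No native or alternative field works: customization is required.
    return Decision.CUSTOMIZE
```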

Import an Entity

Once the fields that are needed are determined, the sample Contoso data is then replaced with the client’s data. One of the ways to upload this data is through Excel. All the data is provided by the client, prepped, and then added to the spreadsheet.

The rule of thumb is to upload around 10 lines of data first to confirm that the system accepts the data without errors. If there are errors, they must be resolved before uploading additional data. Once the errors are resolved and the 10 records upload successfully, you can increase the scope to perhaps 50k lines at a time and mass import them into D365.
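The pilot-then-bulk approach can be sketched as follows. This is an illustration under stated assumptions: `staged_import` is a hypothetical helper, and `upload` is a placeholder callback standing in for whatever import mechanism you use (it is not a D365 API). It is assumed to return a list of error messages, empty on success.

```python
from itertools import islice
from typing import Callable, Iterable, List


def staged_import(rows: Iterable[dict],
                  upload: Callable[[List[dict]], List[str]],
                  pilot_size: int = 10,
                  batch_size: int = 50_000) -> int:
    """Upload a small pilot batch first, then the rest in large batches.

    Stops at the first batch that reports errors so they can be
    resolved before any more data is sent. Returns the number of
    rows uploaded successfully.
    """
    it = iter(rows)
    uploaded = 0
    size = pilot_size  # the first batch is the small pilot
    while True:
        batch = list(islice(it, size))
        if not batch:
            return uploaded
        errors = upload(batch)
        if errors:
            raise RuntimeError(
                f"upload failed after {uploaded} rows: {errors}")
        uploaded += len(batch)
        size = batch_size  # pilot succeeded, switch to bulk batches
```

Stopping on the first error keeps a bad mapping from being repeated across tens of thousands of rows.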

An important tip: D365 must be configured to accept the data in some of the entities. For instance, if there’s an enum field type called address types, the enum is empty until it is populated with the possible values your client would want to see. Only after this can data be uploaded successfully into this field.
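One way to catch this before an import fails is to check the client's spreadsheet values against the values already configured in the target. The sketch below assumes you have the configured values as a plain Python collection; `unconfigured_values`, the `AddressType` column name, and the sample values in the usage note are hypothetical.

```python
from typing import Iterable, List


def unconfigured_values(rows: Iterable[dict], field: str,
                        configured: Iterable[str]) -> List[str]:
    """List the values used in `field` that are not yet configured.

    Empty or missing values are ignored; the result is sorted so the
    list can be handed to whoever maintains the configuration.
    """
    seen = {row[field] for row in rows if row.get(field)}
    return sorted(seen - set(configured))
```

If the client's rows use "Delivery", "Invoice", and "Warehouse" but only the first two are configured, the function returns `["Warehouse"]`, which is the value to set up before uploading.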

Business Validation

After the client’s data is imported, the client must look over the data and test it. They have expectations of how their data should behave. By having them test the data in D365, they are able to get hands-on experience working with the system, and they are able to catch issues, if any exist.

Conclusion

Now that we have looked at how data migration is typically handled in D365, my next post will discuss how integral testing is to the integration effort.

Very helpful.

How do you know the field is mandatory or not? Where does the information come from?

Could you share the field to field mapping?

Looking forward to your reply.

Thank you.

Did you learn whether this information is available? I was after the data types of the fields along with their size and whether they could be null and if they were constrained by a list of values.

Thank You

Hi,

thanks for your blog.

Could you give me a quick hint how to export the native fields?

Thank you.

Regards Marcus