How Can I Calculate CO2eq Emissions for My Azure VM?

In this post, we will look at two different Azure virtual machines, estimate the energy consumption of software running on them and calculate the resulting CO2eq emissions. In previous posts, I have written about how to measure the power consumption of a backend service; this post puts those tools to work. If you have not read my previous post on power consumption, I recommend reading it first and then coming back here, as we will extend the concepts presented there. Let's go!

Power Consumption

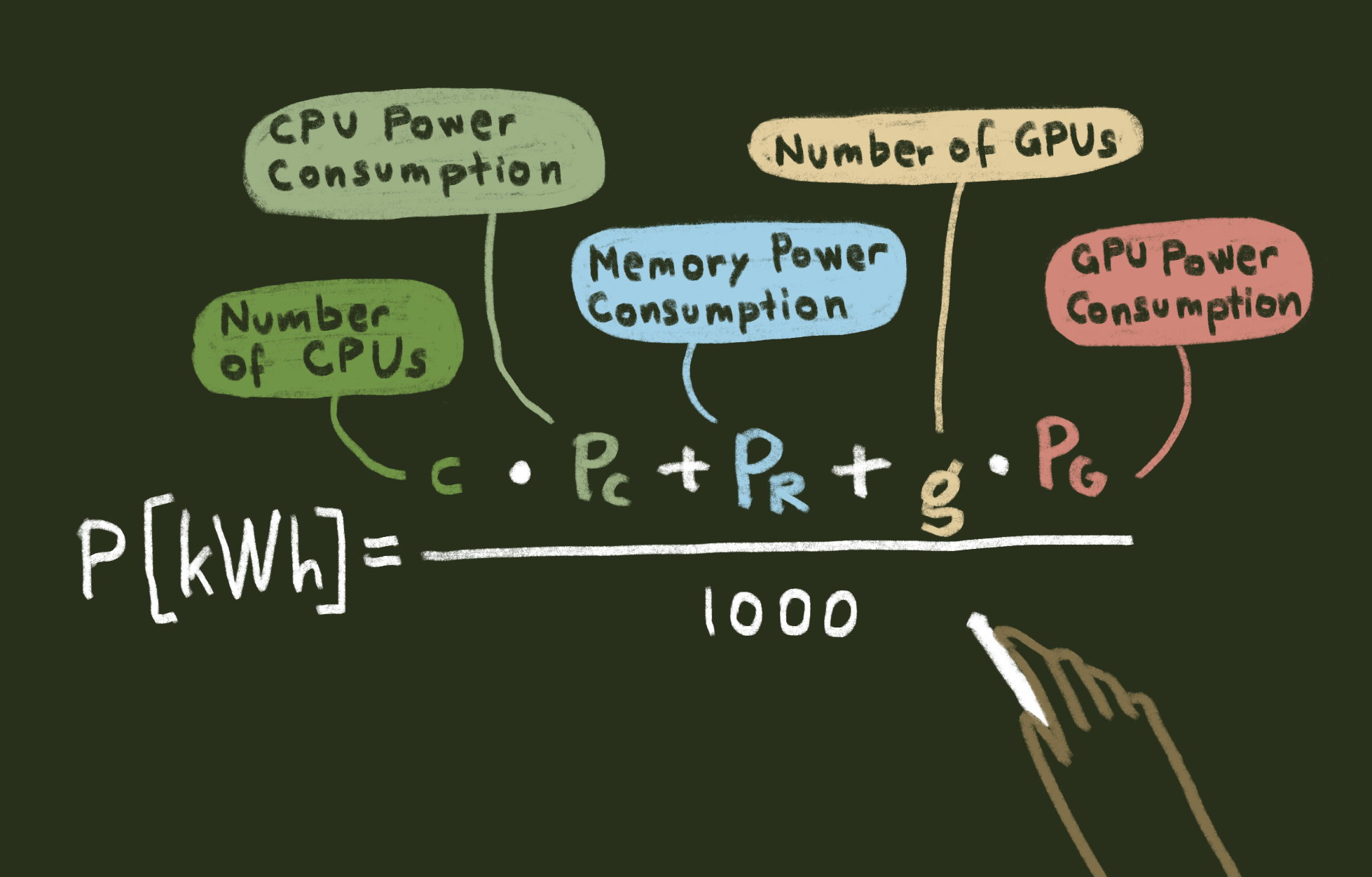

We will use the following formula to estimate the power consumption P of a VM in kW:

P [kW] = (c · P_c + P_r + g · P_g) / 1000

Here c is the number of CPU cores, P_c is the power consumption per CPU core in W, P_r is the power consumption of the memory in W, g is the number of GPUs and P_g is the power consumption per GPU in W. Dividing by 1000 converts watts to kilowatts. I will estimate the power consumption of each component from its thermal design power (TDP) multiplied by its load. Remember that this is an estimate: measuring the power consumption directly is more accurate, but not always possible. To get the energy consumption E in kWh, multiply P by the number of hours you are interested in. For example, for a 4-hour period the energy consumption is:

E [kWh] = 4 · (c · P_c + P_r + g · P_g) / 1000
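The formula translates directly into code. Below is a minimal Python sketch; the function names `power_kw` and `energy_kwh` are hypothetical, and each component's power is passed in watts, estimated as TDP multiplied by load as in the examples that follow.

```python
def power_kw(c: int, p_c: float, p_r: float, g: int, p_g: float) -> float:
    """Estimated power P in kW: (c * P_c + P_r + g * P_g) / 1000.

    c   -- number of CPU cores
    p_c -- power per CPU core in W (e.g. TDP * load)
    p_r -- memory power in W
    g   -- number of GPUs
    p_g -- power per GPU in W (e.g. TDP * load)
    """
    return (c * p_c + p_r + g * p_g) / 1000


def energy_kwh(hours: float, c: int, p_c: float, p_r: float, g: int, p_g: float) -> float:
    """Energy E in kWh when the power P stays constant for `hours` hours."""
    return hours * power_kw(c, p_c, p_r, g, p_g)


# Hypothetical 4-hour interval: 2 cores at 50 % of a 165 W TDP, memory and GPU ignored.
e = energy_kwh(hours=4, c=2, p_c=165 * 0.5, p_r=0, g=0, p_g=0)
print(f"E = {e:.3f} kWh")  # prints: E = 0.660 kWh
```

Keeping power and energy in separate functions mirrors the distinction between P in kW and E in kWh.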

To keep the example simple, we will not run real software but assume synthetic loads on the various components. The same method applies to real software. You can measure the load of the different components, for example, by using this method for measuring power consumption presented by my colleague Scott Chamberlin.

Example 1 – A General Purpose Machine

For the first example, I chose a general-purpose Azure VM that would be suitable for small to medium-sized business applications: Standard_D2s_v3 from the Dsv3 series. It has 2 vCPUs and uses an Intel Xeon Platinum 8171M (Skylake) CPU with a TDP of 165 W.

Since there is no GPU, the GPU term g · P_g is 0. The memory power consumption is much smaller than the CPU power consumption, so we neglect it (P_r = 0). This leaves P ≈ 2 · P_c / 1000, a slight underestimate since memory is left out.

Let’s assume the following load on the CPU for a 24-hour period:

| Time | 00.00-04.00 | 04.00-08.00 | 08.00-12.00 | 12.00-16.00 | 16.00-20.00 | 20.00-00.00 |

| P_g | 0 | 0 | 0 | 0 | 0 | 0 |

| P_r | 0 | 0 | 0 | 0 | 0 | 0 |

| TDP of CPU [W] | 165 | 165 | 165 | 165 | 165 | 165 |

| Load of CPU [%] | 30% | 50% | 80% | 90% | 50% | 30% |

| E1 = P_c * hours = (2 * TDP * load * hours)/1000 [kWh] | 0.369 | 0.66 | 1.056 | 1.188

|

0.66 | 0.369 |

This means we get: E1 = 4.302 kWh for a 24-hour period.

This is a measure of how much energy is consumed by the software over this specific time period.

Example 2 – A Machine for GPU Intense Workloads

For the second example, let us consider a VM that would be more suitable for a GPU intense workload, like a machine learning application or experimentation. I picked the Standard_NC6s_v3 from the NCv3 series. This VM is powered by NVIDIA Tesla V100 GPUs that has a TDP of 250 W.

For this VM type there are no CPU cores initially, which yields P_c = 0 and once again, the memory energy consumption is much smaller than the GPU consumption, which essentially means P >= P_g.

Let’s assume the following load on the GPU for a 24-hour period:

| Time | 00.00-04.00 | 04.00-08.00 | 08.00-12.00 | 12.00-16.00 | 16.00-20.00 | 20.00-00.00 |

| P_c | 0 | 0 | 0 | 0 | 0 | 0 |

| P_r | 0 | 0 | 0 | 0 | 0 | 0 |

| TDP of GPU [W] | 250 | 250 | 250 | 250 | 250 | 250 |

| Load of GPU [%] | 30% | 50% | 80% | 90% | 50% | 30% |

| E2 = P_g * hours = (1 * TDP * load * hours)/1000

[kWh] |

0.3 | 0.5 | 0.8 | 0.9 | 0.5 | 0.3 |

This means we get: E2 = 3.3 kWh for a 24-hour period.

What about cooling?

Now you may wonder, what about the cooling of a data center? For this example, we can assume that all data centers have a Power Usage Effectiveness (PUE) value. The PUE is a ratio that determines how effective a datacenter is at utilizing its energy. For example, a PUE of 2.0 means for every 1 kWh of electricity that reached the server, the data center needs 2 kWh to account for waste and other services like cooling. In 2015, Microsoft’s average PUE for its new datacenters was 1.125 so that is the number we use, even though it may not be 100% accurate. This number changes slowly over time with new improvements to data center design, so you can generally consider it constant when measuring power consumption over time.

To adjust the power consumption based on the PUE we need to multiply E1 and E2 with the PUE. Our new E1 is then 4.83975 kWh and our new E2 is then 3.7125 kWh.

Going from kWh to CO2eq

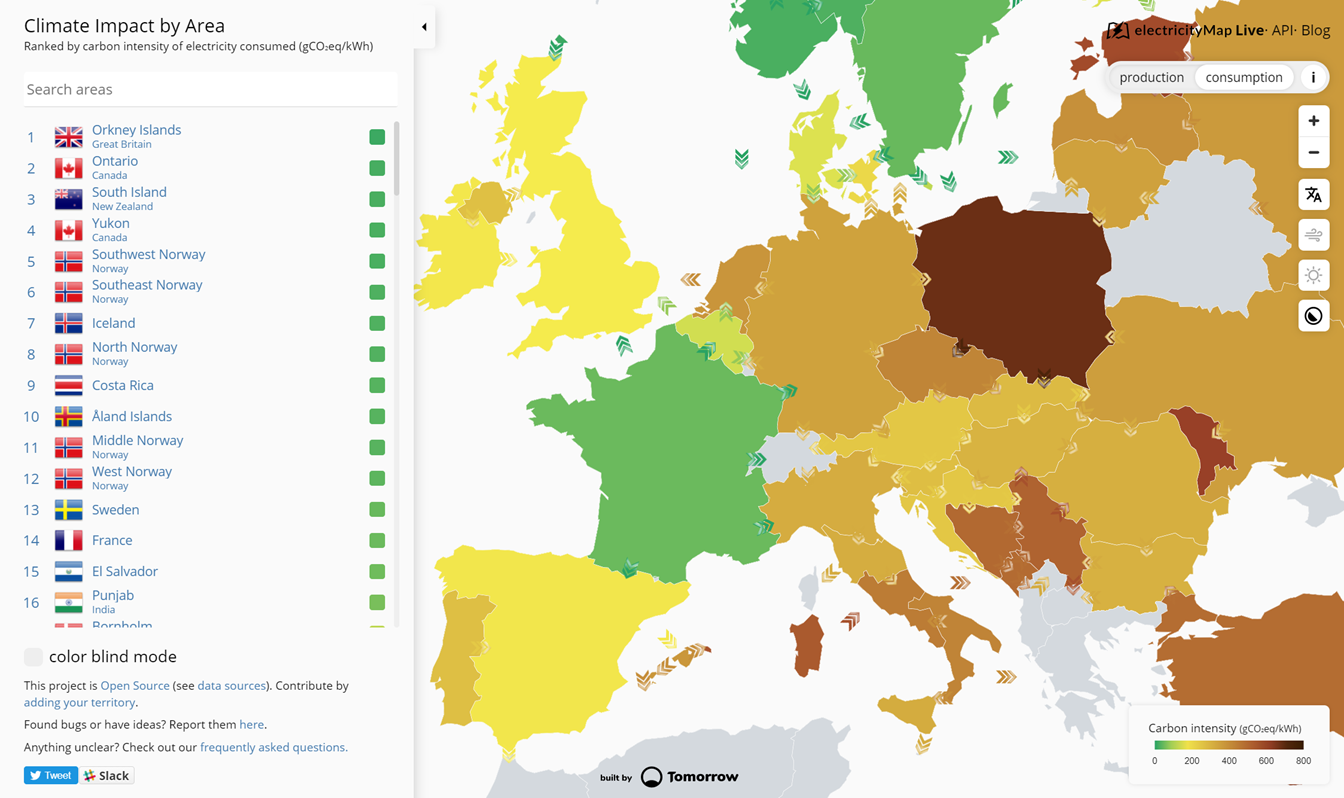

Now we know the amount of energy our software consumes! Next, we need to multiply the amount of energy by the carbon intensity, which is a measure of the grams of CO2eq emitted per kWh. Azure has been carbon neutral since 2012, meaning the emissions are offset, which is great for our customers, but not a very fun example when calculating. To make this an end-to-end example let’s take the general energy mix of the region in question as our carbon intensity. I’m living in Norway, so I’ll assume we are using the Azure region Norway East. According to electricitymap.org, which uses EU data, the carbon intensity is approximately 30 grams per kWh right now. Choosing Norway also makes our math easier as the carbon intensity is fairly constant over the day thanks to the use of hydropower.

Multiplying E1 = 4. 83975 kWh with 30 grams/kWh gives a total of 145.1925 grams of CO2eq emissions for our general-purpose machine over a 24-hour period. For our GPU intense machine, we multiply E2 = 3.7125 kWh with 30 grams/kWh to get 111.375 grams of CO2eq emissions over the same 24-hour period. This is roughly the same as driving Tesla model 3 for 22 km, according to their charging estimator.
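The whole calculation, from component loads to grams of CO2eq, fits in a short Python sketch. The load profile, TDP values, PUE of 1.125 and carbon intensity of 30 g/kWh are the assumptions used in this post; the helper names are hypothetical, and memory power is neglected as in both examples.

```python
from __future__ import annotations

# Assumptions from this post: 4-hour intervals, memory power neglected (P_r = 0).
HOURS_PER_INTERVAL = 4
LOADS = [0.30, 0.50, 0.80, 0.90, 0.50, 0.30]  # same load profile in both examples
PUE = 1.125              # Microsoft's 2015 average for new datacenters
CARBON_INTENSITY = 30.0  # g CO2eq per kWh, Norway East (approximate)


def daily_energy_kwh(units: int, tdp_w: float, loads: list[float]) -> float:
    """Sum of units * TDP * load * hours / 1000 over all intervals (before PUE)."""
    return sum(units * tdp_w * load * HOURS_PER_INTERVAL / 1000 for load in loads)


def emissions_g(energy_kwh: float) -> float:
    """Apply the PUE, then convert the energy to grams of CO2eq."""
    return energy_kwh * PUE * CARBON_INTENSITY


# Example 1: Standard_D2s_v3, 2 vCPUs with a 165 W TDP.
e1 = daily_energy_kwh(units=2, tdp_w=165, loads=LOADS)
# Example 2: Standard_NC6s_v3, 1 V100 GPU with a 250 W TDP (CPU left out).
e2 = daily_energy_kwh(units=1, tdp_w=250, loads=LOADS)

for name, e in [("E1", e1), ("E2", e2)]:
    print(f"{name}: {e:.3f} kWh, {e * PUE:.4f} kWh with PUE, {emissions_g(e):.3f} g CO2eq")
```

Because the PUE and carbon intensity are treated as constants, they are applied once to the daily total; with an hourly carbon intensity you would instead multiply each interval separately before summing.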

Take Action

Running on serverless can bring further sustainability gains, which I have not covered in this post. To learn more, read my colleague Srinivasan's blog post about adopting a serverless architecture to help reduce CO2 emissions.

