We’ve been hard at work improving the Chat Copilot reference application to demonstrate how you can use Semantic Kernel to build a fully featured chat experience. Now that it’s more feature complete, we’re elevating it to its own repo here: github.com/microsoft/chat-copilot.

We’ve also given it its own section on our learn site so we can better share what it can do and how you can use it. If you haven’t played around with Chat Copilot in a while, here are the most notable updates we’ve given it recently.

Skip the waitlist: test ChatGPT plugins in Chat Copilot

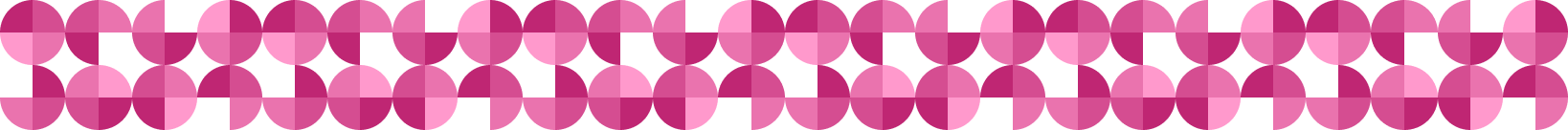

In a previous blog post, we demonstrated how you could create a ChatGPT plugin and run it using Semantic Kernel. The next logical step is to test the plugin in a chat experience to ensure that it meets real user needs. Unfortunately, OpenAI has a waitlist for developers interested in testing and deploying plugins with ChatGPT.

To get around this, we’ve added the ability to add and enable ChatGPT plugins within Chat Copilot. Select the plugin button in the top right of the app, then paste in the URLs of your ChatGPT plugins to add them.

Afterwards, you can ask Chat Copilot to complete tasks using your plugins. For example, in the following screenshot, we ask the Chat Copilot agent to perform some math using the Math Plugin we demonstrate how to build in our documentation.

For full instructions on how to add and test your own ChatGPT plugins in Chat Copilot, see the testing steps in our documentation.
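If a plugin fails to load, it can help to check its manifest before pasting the URL. The sketch below assumes the plugin follows the ChatGPT plugin convention of serving a manifest at `/.well-known/ai-plugin.json`. The helper names `manifest_url` and `missing_fields` are hypothetical, and the field list reflects the ChatGPT plugin manifest format rather than anything Chat Copilot is confirmed to check.

```python
from urllib.parse import urlsplit, urlunsplit

# Conventional manifest location for ChatGPT plugins.
MANIFEST_PATH = "/.well-known/ai-plugin.json"

# Fields the ChatGPT plugin manifest format treats as required.
# Assumption: Chat Copilot may validate a different subset.
REQUIRED_FIELDS = (
    "schema_version",
    "name_for_human",
    "name_for_model",
    "description_for_human",
    "description_for_model",
    "auth",
    "api",
    "logo_url",
    "contact_email",
    "legal_info_url",
)


def manifest_url(plugin_url: str) -> str:
    """Return the conventional manifest URL for a plugin's base URL."""
    parts = urlsplit(plugin_url)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"not an http(s) URL: {plugin_url!r}")
    # Keep scheme and host, drop any path, query, or fragment.
    return urlunsplit((parts.scheme, parts.netloc, MANIFEST_PATH, "", ""))


def missing_fields(manifest: dict) -> list[str]:
    """List required manifest fields that are absent, in declaration order."""
    return [name for name in REQUIRED_FIELDS if name not in manifest]
```

You could fetch the JSON at `manifest_url(...)` with any HTTP client and pass the parsed dictionary to `missing_fields` to spot an incomplete manifest early.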

Quality of life improvements galore!

We’ve made numerous UX improvements to make Chat Copilot faster and more enjoyable to use. These include (but are not limited to):

- Performance improvements

- Stability improvements to planner

- Streaming of text from the agent

- Dark mode

With streaming, responses are snappier than ever.
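To see why streaming feels faster, here is a minimal sketch of incremental rendering. It does not reflect how Chat Copilot actually transports or displays text; `stream_chunks` is a hypothetical stand-in for a model response arriving in pieces, and `render_incrementally` records what a UI would show after each piece.

```python
from typing import Iterable, Iterator


def stream_chunks(text: str, size: int = 8) -> Iterator[str]:
    """Hypothetical stand-in for a response that arrives in pieces."""
    for start in range(0, len(text), size):
        yield text[start:start + size]


def render_incrementally(chunks: Iterable[str]) -> list[str]:
    """Return each intermediate view a UI would show as chunks arrive."""
    shown = ""
    frames = []
    for chunk in chunks:
        shown += chunk          # append the new piece to what is on screen
        frames.append(shown)    # the user sees this before the reply is complete
    return frames
```

The user starts reading at the first frame instead of waiting for the last one, which is where the perceived speedup comes from.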

Peek behind the curtain of Chat Copilot

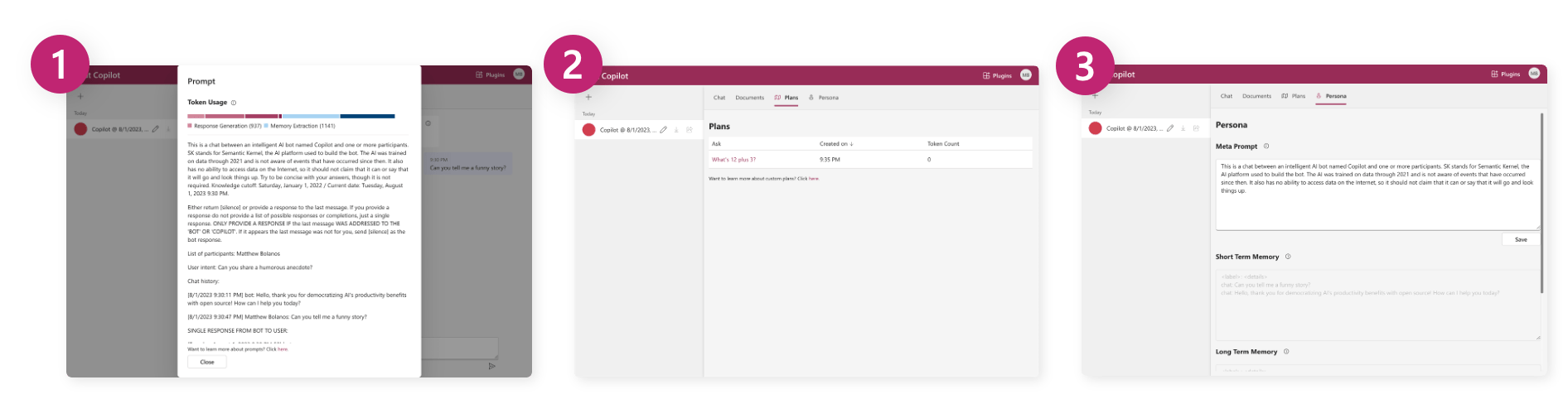

We’ve added several features that make it easier for developers to understand what powers Chat Copilot. Not only do we expect this to help debug issues, but we also hope it helps bring many of the concepts of Semantic Kernel to life (e.g., plugins, planners, and memories).

- By clicking on the info bubble in Chat Copilot, you can see the entire prompt that was used to generate a response (along with its token usage).

- In the Plans tab, you can see and investigate all of the plans that have been generated using your plugins.

- In the Persona tab, you can play around with the variables that impact how an agent responds.

To learn more about the developer features of Chat Copilot, check out our documentation.

It’s easier than ever to get started

Lastly, to make it simpler to start running Chat Copilot, we’ve improved the series of steps that you need to take to run the app locally. Whether you’re on a Windows, Linux, or macOS machine, we’ve got you covered with scripts that help you quickly get up and running with Chat Copilot.

| Script | Description |
| --- | --- |
| Install | Installs all of the core dependencies for Chat Copilot: yarn, dotnet, and node. |
| Configure | Generates the local development configuration files for the backend and frontend by using connection details you provide. |
| Start | Starts both the backend and frontend of Chat Copilot. |

To learn how to use these scripts, check out our getting started article for Chat Copilot.

We want to see how you’re using Chat Copilot

As always, we want to hear from you! If you build a cool plugin and use it in Chat Copilot, we want to see it! If you add a cool feature to Chat Copilot, we want to incorporate it! If you deploy Chat Copilot within your company, we want to hear about it!

Give us feedback early and often either on GitHub or in our Discord server. With your feedback, we’ll continue to improve Semantic Kernel and Chat Copilot.
