Summary: Microsoft PFE, Dan Sheehan, shares Windows PowerShell scripting best practices for a shared environment.

Microsoft Scripting Guy, Ed Wilson, is here. Today I would like to welcome a new guest blogger, Dan Sheehan.

Dan recently joined Microsoft as a senior premier field engineer in the U.S. Public Sector team. Previously he served as an Enterprise Messaging team lead, and was an Exchange Server consultant for many years. Dan has been programming and scripting off and on for 20 years, and he has been working with Windows PowerShell since the release of Exchange Server 2007. Overall Dan has over 15 years of experience working with Exchange Server in an enterprise environment, and he tries to keep his skillset sharp in the supporting areas of Exchange, such as Active Directory, Hyper-V, and all of the underlying Windows services.

Here's Dan…

I have been working and scripting (using various technologies) in enterprise environments where code is shared, updated, and copied by others for over 20 years. Even though I don’t consider myself a Windows PowerShell expert, I find myself assisting others with their Windows PowerShell scripts with best practices and speed improvement techniques, so I thought I would share them with the community as a whole.

This blog post is centered on the best practices I find myself sharing and championing the most in shared environments (I include all enterprise environments). In my next blog post, I will be discussing some Windows PowerShell script speed improvement techniques.

But before we try to speed up our script, it’s a good idea to review and implement coding best practices as a form of a code cleanup. Although some of these best practices can apply to any coding technology, they are all relevant to Windows PowerShell. For another good source of best practices for Windows PowerShell, see The Top Ten PowerShell Best Practices for IT Pros.

The primary benefit of these best practices is to make it easier for others who review your script to understand and follow it. This is especially important for the ongoing maintenance of production scripts as people change jobs, get sick, or get hit by a bus (hopefully never). They also become important when people post scripts in online repositories, such as the TechNet Gallery, to share with the community.

Some of these best practices may not provide a lot of value if the script is small or will only be used by one person. However, even in that scenario, it is a good idea to get into a habit of using best practices for consistency. You never know when you might revisit a script you wrote years ago, and these best practices can help you save time refamiliarizing yourself with it.

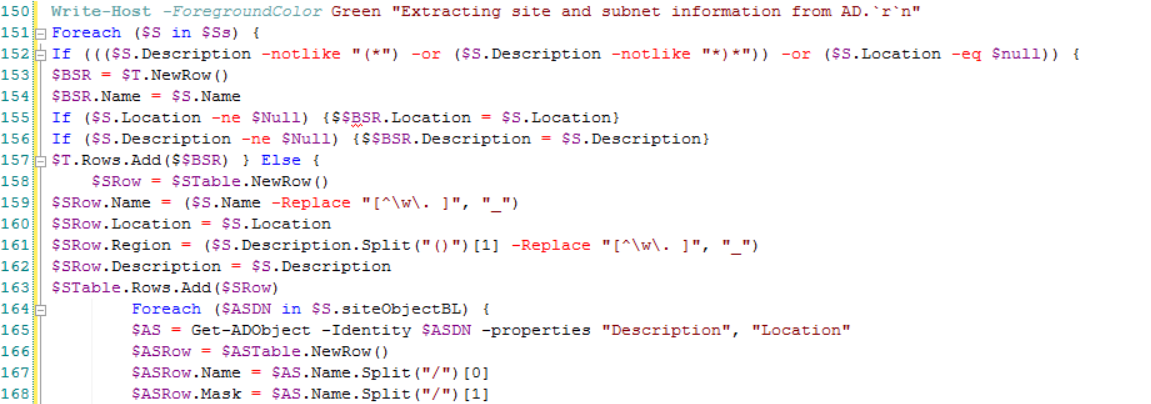

Ultimately, the goal of the best practices I discuss in this post is to help you take messy script that looks like this:

…and turn it into a functionally identical, but much more readable, version, like this:

Note I format my Windows PowerShell script for landscape-mode printing. It is my personal opinion that portrait-mode causes excessive line wraps in script, which makes the script harder to read. This is a personal preference, and I realize most people stick to keeping their script to within 85 characters on a line, which is perfectly fine if that works for them. Just be consistent about wherever you choose to wrap your script.

Keep it simple (or less is more)

The first best practice, which really applies to all coding, is to try to keep the script as simple and streamlined as possible. The first thing to remember is that most humans think in a very linear fashion, in this case from the top to the bottom of a script, so you want to try to keep your script as linear as possible. This means you should avoid making someone else jump around your script to try to follow the logical outcome.

Also during the course of learning how to do new and different things, IT workers have a tendency to make script more complex than it needs to be because that’s how some of us experiment with and learn new techniques. Even though learning how to do new and different things in Windows PowerShell scripting is important, learning exercises should be separate from production script that others will have to use and support.

I’m going to use Windows PowerShell functions as an example of a scenario where I see authors unnecessarily overcomplicating script. For example, if a small, simple block of code will accomplish what needs to occur, don’t go out of your way to turn that script into a function and move it somewhere else in the script where it is later called…just because you can. Unnecessarily breaking the linear flow of the script just to use a function makes it harder for someone else to review your script linearly.

I was discussing the use of functions with a coworker recently. He argued that modularizing his script into functions and then calling all the functions at the end of the script made the script progression easier for him to follow.

I see this type of modularization behavior from those who have been full-on programming (or taught by a programmer)—all the routines, voids, or whatever in the code are modularized. Although I appreciate that we all have different coding styles, and ultimately you need to write the script in the way that works best for you, the emphasis in this blog post is writing your script so others can read and follow it as easily as possible.

Although using a couple of single-purpose functions in a script may not initially seem to make it hard for you to follow the linear progression of the script, I have also seen script that calls functions inside of other functions, which compounds the issue. This nesting of functions makes it exceedingly difficult for someone else to follow the progression of events because they have to jump around the script (and script logic) quite a bit.

To be clear, I am not picking on all uses of functions because there is definitely a time and place for them in Windows PowerShell. A good justification for using a function in your script is when you can avoid listing the same block of code multiple times in your script and instead store that code in a multiple use function. In this case, reducing the amount of code people have to review will hopefully make it easier for them to understand.

For example, in the Mailbox Billing Report Generator script I wrote at a previous job, I used a function to generate Excel spreadsheets because I was going to be reusing that block of code in the script multiple times. It made more sense to have the code listed once and then called multiple times in the script. I also tried to locate the function close to the script where it was going to be called, so other people reviewing the script didn’t have to go far to find it.
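As a minimal sketch of that justification (this is not the billing script itself), the hypothetical function Format-SizeReportLine below is defined once, directly above the code that uses it, and is then called several times instead of repeating the same formatting code. The function name and the sample sizes are assumptions made up for illustration.

```powershell
# Hypothetical helper: defined once, right before the code that calls it.
Function Format-SizeReportLine {
    Param (
        [string]$Name,
        [long]$SizeInBytes
    )
    # Convert bytes to GB and round to two decimal places.
    $SizeInGB = [math]::Round($SizeInBytes / 1GB, 2)
    "{0}: {1} GB" -f $Name, $SizeInGB
}

# The same block of code is reused three times without being repeated.
$ReportLines = @(
    Format-SizeReportLine -Name "Sales" -SizeInBytes 5GB
    Format-SizeReportLine -Name "Finance" -SizeInBytes 3GB
    Format-SizeReportLine -Name "IT" -SizeInBytes 2GB
)
$ReportLines
```

Because the function sits immediately above its first use, a reader still moves through the script from top to bottom.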

Let's take the focus off of functions and back to Windows PowerShell scripting techniques in general…

Ultimately, when you are thinking about using a particular scripting technique, try to determine if it is really beneficial. A good way to do this is by asking yourself if the technique is adding value and functionality to the script, and if it could unnecessarily confuse another person reading it. Remember that just because you can use a certain technique doesn’t mean you should.

Use consistent indentation

Along with keeping the script simple, it should be consistently organized and formatted, including indentations when new loop or conditional check code constructs are used. Lack of indentation, or even worse, inconsistent use of indentation makes script much harder to read and follow. One of the worst examples that I have seen is when someone pasted examples (including the example indentation level) from multiple sources into their script, and the indentation seemed to be randomly chosen. I had a really hard time following that particular script.

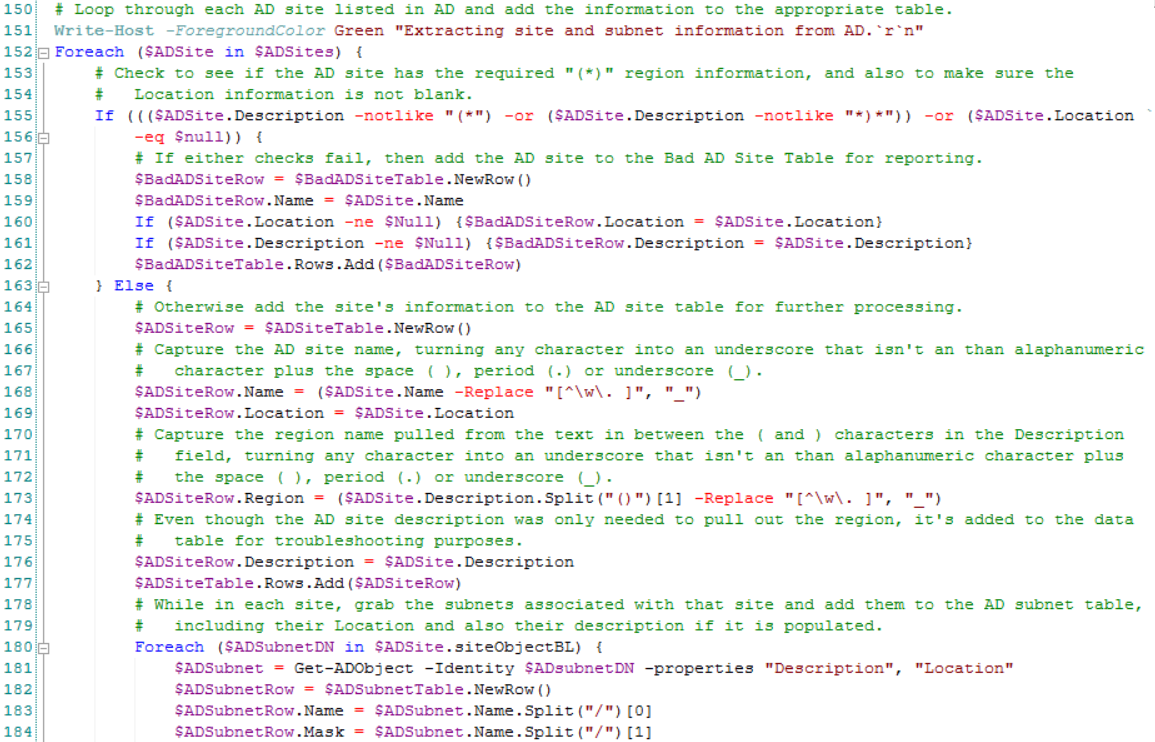

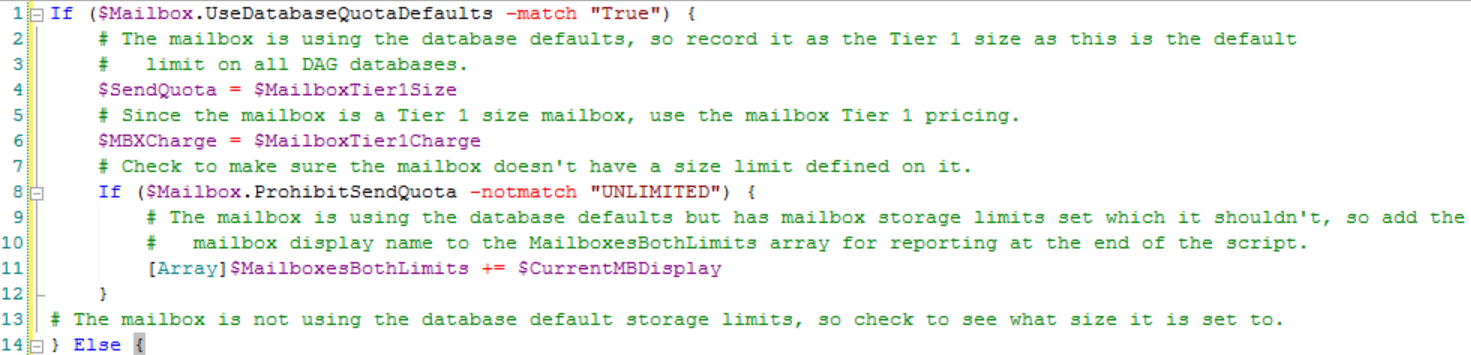

The following example uses the Tab key to indent the script each time a new If condition check construct is used. This is used to represent that the script following that condition check is executed only if the outcome of the condition check is met. The Else statement is returned to the same indentation level as the opening If condition check, because it represents closure of the original condition check outcome and the beginning of the alternate outcome (the condition check wasn’t met). Likewise, the final closing curly brace is returned to the same level of indentation as the opening condition check because the condition check is now completely finished.

If you add another condition check inside of an existing condition check (referred to as “nesting”), then you should begin indenting the new condition check at the current indentation level to show it is nested inside a “parent” condition check. The previous example shows a second If condition check on line #6, which is nested inside a parent If condition check where everything is already indented one level. The nested If condition check then indents a second level on line #7 for its condition check outcome, but then it returns to the first indentation level when the condition check outcome is complete.
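The indentation pattern described here can be sketched as follows. This is not the author's original example; the variable names and values are hypothetical, and four spaces stand in for each Tab. The sketch is laid out so that the nested If falls on the sixth line and its outcome on the seventh, in line with the description.

```powershell
# Hypothetical free-space value, used only for illustration.
$FreeSpaceGB = 12
$DiskStatus = "Unknown"
If ($FreeSpaceGB -lt 50) {
    $DiskStatus = "Low"
    If ($FreeSpaceGB -lt 20) {
        $DiskStatus = "Critical"
    }
} Else {
    $DiskStatus = "OK"
}
$DiskStatus
```

Each closing curly brace lines up with the statement that opened its block, so the reader can see at a glance where each condition check begins and ends.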

Indentations should be used any time you have open and close curly braces around a block of code, so the person reading your script knows that block of code is part of a construct. This would apply to ForEach loops, Do…While condition check loops, or any block of code in between the opening and closing curly braces of a construct.

The use of indentation isn’t limited to constructs, and it can be used to show that a line of script is a continuation of the line above it. For example as a personal preference, whenever I use the back tick character ( ` ) to continue the same Windows PowerShell command on the next line in a script, I indent that next line so that as I am reviewing the script, I can easily tell that line is a part of the command on the previous line.
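A small sketch of that preference, using made-up sample values: the back tick ends the first line, and the continued part of the command is indented on the next line.

```powershell
# Hypothetical mailbox sizes, used only to give the command something to sort.
$MailboxSizesMB = 512, 2048, 128, 4096, 1024

# The back tick must be the last character on the line it continues.
$LargestThree = $MailboxSizesMB | Sort-Object -Descending `
    | Select-Object -First 3
$LargestThree
```

The indented second line signals that it belongs to the command above it rather than starting a new one.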

Note Different Windows PowerShell text editors can record indentations differently, such as a Tab being recorded as a true Tab in one editor and multiple spaces in another editor. It’s a good idea to check your indentations if you switch editors and you aren’t sure they use the same formatting. Otherwise, viewing your script in other programs (such as cutting and pasting the script into Microsoft Word) can show your script with inconsistent indentations.

Use Break, Continue, and other looping controls

Normally, if I want to execute a large block of code only if certain conditions are met, I would create an If condition check in the script with the block of code indented (following the practices I discussed previously). If the condition wasn’t met, the script would jump to the end of the condition check where the indentation was returned back to the level of the original condition check.

Now imagine you have a script where you only want the bulk of the script to execute if certain condition checks are met. Further imagine you have multiple nested condition checks or loops inside of that main condition check. Although this may not seem like an issue because it works perfectly fine as a scripting method, nesting multiple condition checks and following proper indentation methods can cause many levels of indenting. This, in turn, causes the script to get a little cramped, depending on where you chose to line wrap.

I refer to excessive levels of nested indentation as “indent hell.” The script is so indented that the left half of the screen is wasted on white space and the real script is cramped on the right side of the screen. To avoid “indent hell,” I started looking for another method to control when I executed large blocks of code in a script without violating the indentation practice.

I came across the use of Break and Continue, and after conferring with a colleague infinitely more versed in Windows PowerShell than myself, I decided to switch to using these loop processing controls instead of making multiple gigantic nested condition checks.

In the following example, I have a condition check that is nested inside of a ForEach loop. If the first two condition checks aren’t met, the Windows PowerShell script executes the Continue loop processing control, which tells it to skip the rest of the ForEach loop.
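A minimal sketch of this pattern, assuming hypothetical sample objects in place of real query results (the property names Enabled and SizeMB are made up for illustration and are not taken from the original script):

```powershell
# Hypothetical sample data standing in for real query results.
$GatheredMailboxes = @(
    [PSCustomObject]@{ Name = "Alice"; Enabled = $true;  SizeMB = 2048 }
    [PSCustomObject]@{ Name = "Bob";   Enabled = $false; SizeMB = 4096 }
    [PSCustomObject]@{ Name = "Carol"; Enabled = $true;  SizeMB = 0 }
    [PSCustomObject]@{ Name = "Dave";  Enabled = $true;  SizeMB = 512 }
)
$ProcessedMailboxes = @()

ForEach ($Mailbox in $GatheredMailboxes) {
    # Skip disabled mailboxes and move on to the next item in the loop.
    If (-not $Mailbox.Enabled) {
        Continue
    }
    # Skip empty mailboxes as well.
    If ($Mailbox.SizeMB -eq 0) {
        Continue
    }
    # The main body stays at one indentation level instead of being nested
    #   inside both condition checks.
    $ProcessedMailboxes += $Mailbox.Name
}
$ProcessedMailboxes
```

Continue ends only the current pass through the loop, so the remaining items are still processed. Break, by contrast, exits the loop entirely.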

Using these capabilities in your script isn’t ideal for every situation, but they can help reduce “indent hell” by streamlining and simplifying some of your script.

For more information about these Windows PowerShell commands, see:

Use clear and intelligently named variables

Too often I come across scripts that use variables such as $j. This name has nothing to do with what the variable is going to be used for, and it doesn’t help distinguish its purpose later in the script from another variable, such as $i.

You may know the purpose of $j and $i at the time you are writing the script, but don’t assume someone else will be able to pick up on their purposes when they are reviewing your script. Years from now, you may not remember the variables’ purposes when you are reviewing your script, and you will have to backtrack in your own script to reeducate yourself.

Ideally, variables should be clearly named for the data they represent. If the variable name contains multiple words, it’s a good idea to capitalize the first letter of each word so the name is easier to read because there are no spaces in a Windows PowerShell variable name. For example, the variable name of $GatheredMailboxes is easier to read quickly and understand than $gatheredmailboxes.

Providing longer and more intelligently named variables does not adversely affect Windows PowerShell performance or memory utilization from what I have seen. So there should be no arguments for saving memory space or improving speed to impede the adoption of this practice.

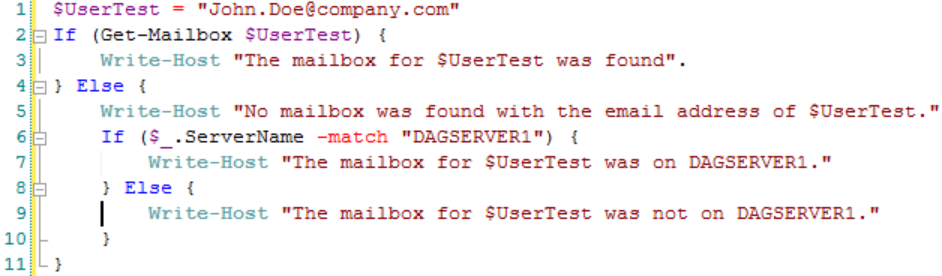

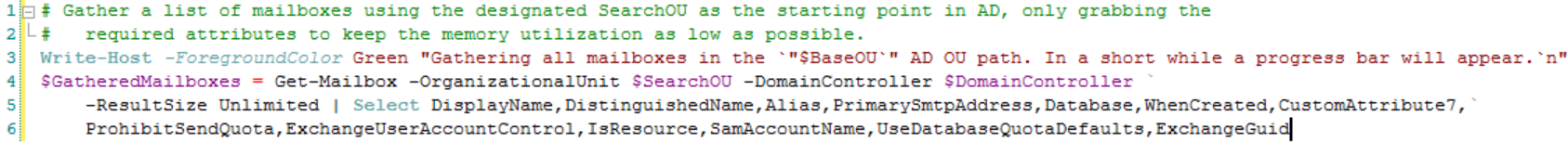

In the following example, all mailbox objects gathered by a large Get-Mailbox query are stored in a variable named $GatheredMailboxes, which should remove any ambiguity as to what the variable has stored in it.

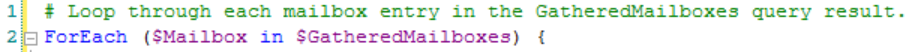

Building on this example, if we wanted to process each individual mailbox in the $GatheredMailboxes variable in a ForEach loop, we could additionally use a clear purpose variable with the name of $Mailbox like this:
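A sketch of both variables in use. So that it runs without the Exchange cmdlets, a hypothetical array of sample objects stands in for the results of the Get-Mailbox query; the property values are made up.

```powershell
# Hypothetical stand-in for the results of a large Get-Mailbox query, so the
#   sketch runs without the Exchange cmdlets.
$GatheredMailboxes = @(
    [PSCustomObject]@{ DisplayName = "Alice Smith"; Database = "DB01" }
    [PSCustomObject]@{ DisplayName = "Bob Jones";   Database = "DB02" }
)

# $Mailbox clearly represents one item from $GatheredMailboxes.
$MailboxSummaries = ForEach ($Mailbox in $GatheredMailboxes) {
    "{0} is stored on {1}" -f $Mailbox.DisplayName, $Mailbox.Database
}
$MailboxSummaries
```

Compare this with the same loop written as ForEach ($j in $i): the logic is identical, but the reader has to work out what each variable holds.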

Using longer variable names may seem unnecessary to some people, but it will pay off for you and others working with your scripts in the long run.

Leverage comment-based Help

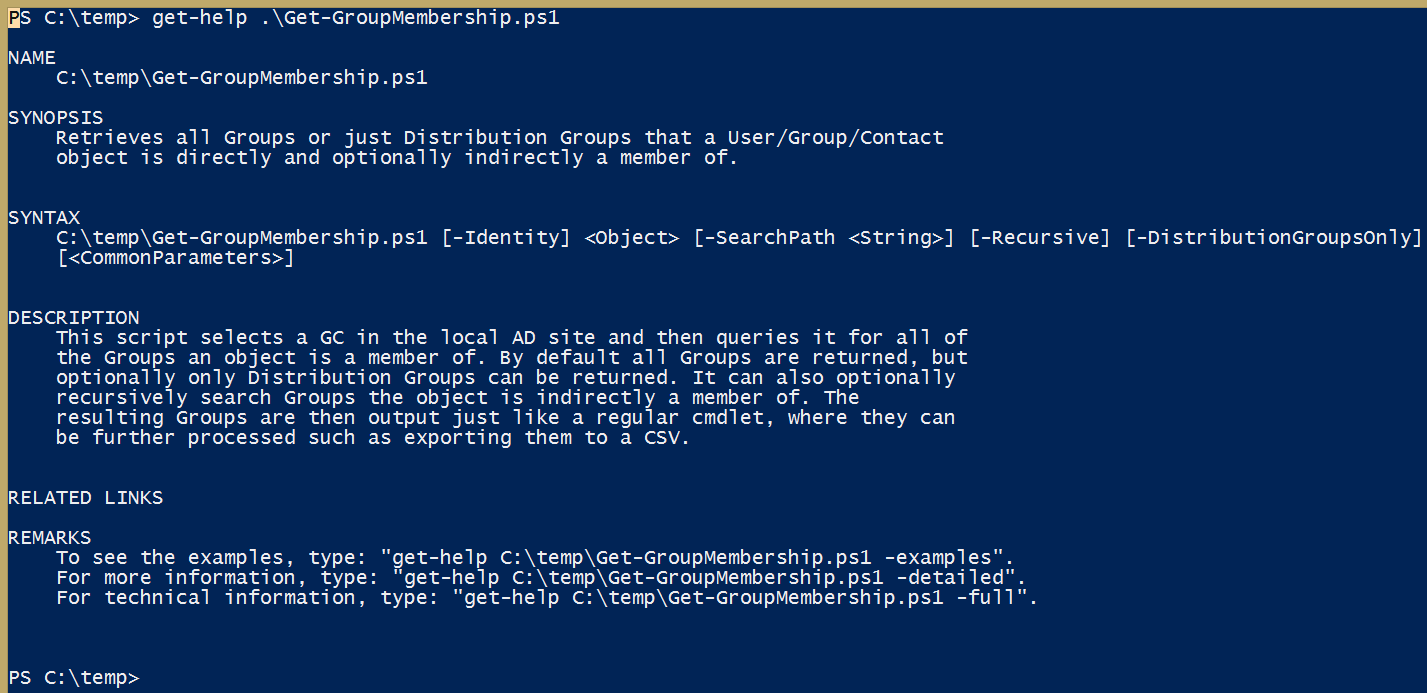

Sometimes known as the “header” in Windows PowerShell scripts, a block of text called comment-based Help allows you to provide important information to readers in a consistent format, and it integrates into the Help function in Windows PowerShell. Specifically, if the proper tags are populated with information, and a user runs Get-Help YourScriptName.ps1, that information will be returned to the user.

Although a header isn’t necessary for small scripts, it is a good idea to use the header to track information in large scripts, for example, version history, changes, and requirements. The header can also provide meaningful information about the script’s parameters. It can also provide examples, so your script users don’t have to open and review the script to understand what the parameters are or how they should use them.

For example, this is the Get-Help output from a Get-GroupMembership script I wrote:

If the -Detailed or -Full switches are used with the Get-Help cmdlet, even more information is returned.

For more information about standard header formatting, see WTFM: Writing the Fabulous Manual.
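As a minimal sketch of what such a header can look like, the hypothetical function ConvertTo-SizeInGB below carries a comment-based Help block. It is shown on a function so the sketch is self-contained; the same kind of block placed at the top of a .ps1 file is what Get-Help YourScriptName.ps1 reads. The function name and Help text are assumptions for illustration.

```powershell
Function ConvertTo-SizeInGB {
    <#
    .SYNOPSIS
    Converts a size in bytes to gigabytes.

    .DESCRIPTION
    Hypothetical example function used only to demonstrate comment-based Help.

    .PARAMETER SizeInBytes
    The size, in bytes, to convert.

    .EXAMPLE
    ConvertTo-SizeInGB -SizeInBytes 2147483648

    .NOTES
    Version 1.0 - Initial release.
    #>
    Param (
        [Parameter(Mandatory = $true)]
        [long]$SizeInBytes
    )
    $SizeInBytes / 1GB
}

# Get-Help reads the tags above and returns them to the user.
Get-Help ConvertTo-SizeInGB -Detailed
```

The .NOTES tag is one place to keep the version history and requirements mentioned earlier.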

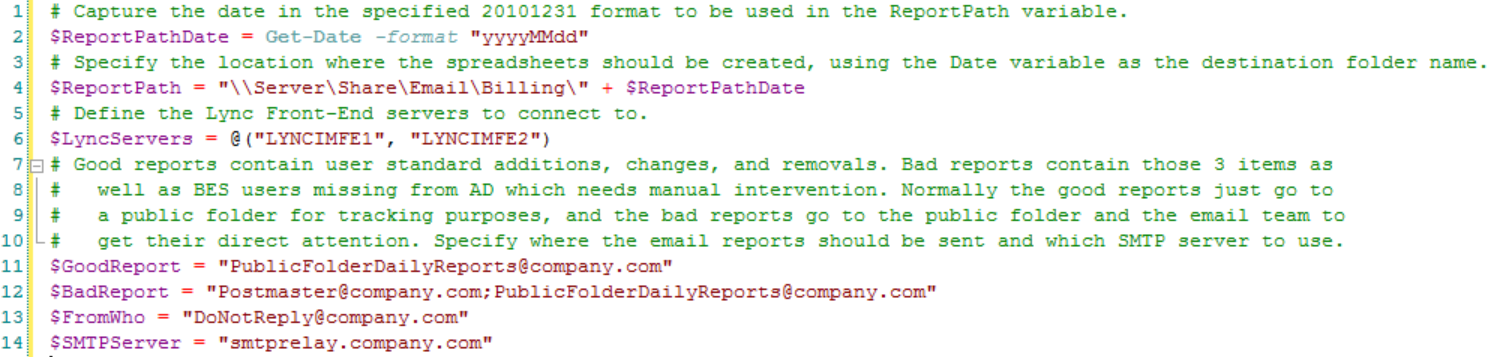

Place user-defined variables at top of script

Ideally, as the script is being written, but definitely before the script is “finished,” variables that are likely to be changed by a user in the future should be placed at the top of the script directly under the comment-based Help. This makes it easier for anyone making changes to those script variables, because they don’t have to go hunting for them in your script. This should be obvious to everyone, but even I occasionally find myself forgetting to move a user-defined variable to the top of my script after I get it working.

For example, a user might want to change the date and time format of a report file, where that file should be stored, who an email is to be sent to, and the grouping of servers to be used in the script:
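A sketch of such a block, with every value hypothetical (the folder, email address, and server names are placeholders, not values from the original script):

```powershell
# ----- User-defined variables (hypothetical values; adjust as needed) -----
# Date and time format used in the report file name.
$ReportDateFormat = "yyyy-MM-dd_HHmm"
# Folder where the report file should be stored.
$ReportFolder = "C:\Reports"
# Recipient of the report email.
$ReportRecipient = "admin@example.com"
# Servers the script should process.
$TargetServers = "SERVER01", "SERVER02", "SERVER03"
# ----- End of user-defined variables -----

# Later in the script, the values are only referenced, never redefined.
$ReportFileName = "MailboxReport_{0}.csv" -f (Get-Date -Format $ReportDateFormat)
$ReportFilePath = "{0}\{1}" -f $ReportFolder, $ReportFileName
$ReportFilePath
```

Anyone who needs a different folder or recipient can change one clearly marked line without reading the rest of the script.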

There are no concrete rules as to when you should place a variable at the top of a script or when you should leave it in the middle of the script. If you are unsure whether you should move the variable to the top, ask yourself if another person might want or need to change it in the future. When in doubt, and if moving the variable to the top of the script won’t break anything, it’s probably a good idea to move it.

Comment, comment, comment

Writing functional script is important because, otherwise, what is the point of the script, right? Writing script with consistent formatting and clearly labeled variables is also important; otherwise, your script will be much harder for someone else to read and understand. Likewise, adding detailed comments that explain what you are doing and why will further reduce confusion as other people (and your future self) try to figure out how and, sometimes more importantly, why specific script was used.

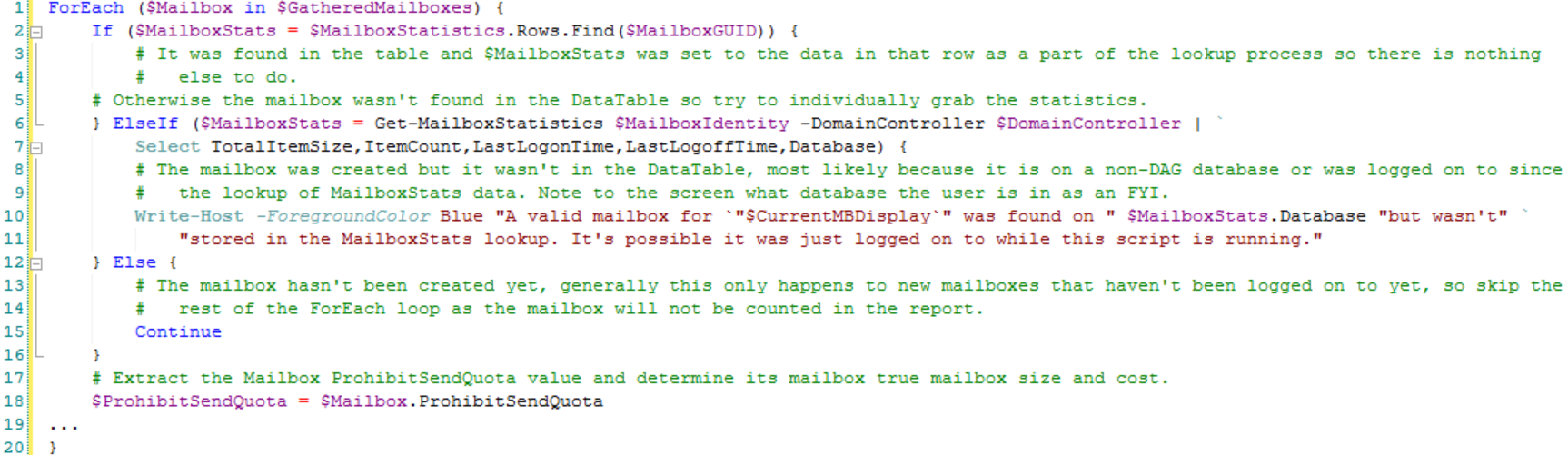

In the following detailed comment example, we are figuring out if a mailbox is using the default database mailbox size limits, and we are taking multiple actions if it is True. Otherwise we launch into an Else statement, which has different actions based on the value of the mailbox send limit.
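A sketch of this level of commenting. It is not the original script: a hypothetical object stands in for a mailbox returned by Get-Mailbox, and the array names and the 2 GB threshold are assumptions made up for illustration.

```powershell
# Hypothetical mailbox object standing in for one returned by Get-Mailbox.
#   UseDatabaseQuotaDefaults and ProhibitSendQuota mirror Exchange property
#   names, but the values and the 2GB threshold are made up for this sketch.
$Mailbox = [PSCustomObject]@{
    Name                     = "Alice"
    UseDatabaseQuotaDefaults = $false
    ProhibitSendQuota        = 3GB
}
$MailboxesDefaultLimits = @()
$MailboxesLargeLimits = @()
$MailboxesSmallLimits = @()

# Check whether the mailbox inherits its size limits from the database.
If ($Mailbox.UseDatabaseQuotaDefaults -eq $true) {
    # The mailbox uses the database defaults, so record it for the defaults
    #   section of the report.
    $MailboxesDefaultLimits += $Mailbox.Name
} Else {
    # The mailbox has custom limits, so sort it by the size of its send limit.
    If ($Mailbox.ProhibitSendQuota -gt 2GB) {
        $MailboxesLargeLimits += $Mailbox.Name
    } Else {
        $MailboxesSmallLimits += $Mailbox.Name
    }
}
$MailboxesLargeLimits
```

Each comment states both what the next block does and why, so a reviewer does not have to infer the intent from the condition alone.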

This level of detailed commenting of what you are doing and why can seem like overkill until you get into a habit of doing it. But it pays off in unexpected ways, such as not having to sit with a co-worker and explain your script step-by-step, or having to remember why a year ago you made an array called $MailboxesBothLimits. This is especially true if you are doing any complex work in the script that you have not done before, or you know others will have a hard time figuring it out.

I prefer to err on the side of caution, so I tend to over comment versus under comment in my script. When in doubt, I pretend I am going to publish the script in the TechNet Gallery (even if I know I won’t), and I use that as a gauge as to how much commenting to add. Most Windows PowerShell text editors will color code comments in a different color than the real script, so users who don’t care about the comments can skip them if they don’t need them.

When it comes to inline commenting, where comments are added at the end of a line of script, my advice is to avoid the practice. When people skim script, they don’t always look to the end to see if there was a comment. Also, if others start modifying your script, you could end up with old or invalid comments in places where you didn’t expect them, which could cause further confusion.

Note There are different personal styles of Windows PowerShell commenting, from starting each line with # to using <# and #> to surround a block of comment text. One way is as good as another, and you should use a personal style that makes sense to you (be consistent about it). For example, in my scripts, the first line of a new block of commenting always gets a # followed by one space. Each additional line in the continued comment block gets a # followed by three spaces. You can see this demonstrated in the second and third lines of script in the previous example. I like using this method because it shows me when I have multiple separate comments next to each other in the script. The important point is that you are putting comments in your script.

Avoid unnecessary temporary data output and retrieval

Occasionally, I come across a script where the author is piping the results of one query to a file, such as a CSV file, and then later reading that file information back into the script as a part of another query. Although this certainly works as a method of temporarily storing and retrieving information, doing so takes the data out of the computer’s extremely fast memory (in nanoseconds) and slows down the process because Windows PowerShell incurs a file system write and read I/O action (in milliseconds).

The more efficient method is to temporarily store the information from the first query in memory, for example, inside an array of custom Windows PowerShell objects or a data table, where additional queries can be performed against the in-memory storage mechanism. This skips the 2x file system I/O penalty because the data never leaves the computer’s fast memory where it was going to end up eventually.

This may seem like a speed best practice, but keeping data in memory if at all possible avoids unnecessary file system I/O headaches such as underperforming file systems and interference from file system antivirus scanners.
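The difference can be sketched as follows, assuming hypothetical sample objects in place of the first query's results. The file-based pattern appears only as comments, and $TempFile is a placeholder name.

```powershell
# Hypothetical query results; in a real script these might come from a cmdlet.
$GatheredMailboxes = @(
    [PSCustomObject]@{ Name = "Alice"; SizeMB = 2048 }
    [PSCustomObject]@{ Name = "Bob";   SizeMB = 128 }
    [PSCustomObject]@{ Name = "Carol"; SizeMB = 4096 }
)

# Slower pattern (shown only as comments): write to disk, then read it back.
#   $GatheredMailboxes | Export-Csv -Path $TempFile -NoTypeInformation
#   $ReloadedMailboxes = Import-Csv -Path $TempFile

# In-memory pattern: run the second query directly against the stored objects.
$LargeMailboxes = $GatheredMailboxes | Where-Object { $_.SizeMB -gt 1024 }
$LargeMailboxes.Name
```

A side effect of the round trip through a CSV file is that Import-Csv returns every property as a string, so numeric comparisons such as the one above would also need a conversion step.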

~Dan

Thank you, Dan, for a really helpful guest post. Join us tomorrow when Dan will continue his discussion.

I invite you to follow me on Twitter and Facebook. If you have any questions, send email to me at scripter@microsoft.com, or post your questions on the Official Scripting Guys Forum. See you tomorrow. Until then, peace.

Ed Wilson, Microsoft Scripting Guy

I have many friends who are software devs and I've been leaning on them for much of my advice. I do have a question: why does everyone leave the opening brace { of a block at the end of the line they wrote, rather than put it on a new line with the correct indent? Writing the code in this fashion makes it much easier to track the open and close of each block.

Example:

It seems coders prefer this,

if (Test-Path $outfile){
    Remove-Item $outfile
}

My developer friends suggest this:

if (Test-Path $outfile)
{
    Remove-Item $outfile
}

Just curious, Thanks!

Edit:...