Sanjeev Gogna and Charles Ofori, Senior Application Development Managers, discuss the importance of testing and spotlight the resources available to our customers as part of a testing engagement.

This is the second post in a three-part series on the importance of testing and the resources available through Premier Support and our IT Support Management (ITSM) team. In the first blog post we introduced the features of Premier testing services. In this post, we focus on the stages of a lab engagement: planning, execution, and post-lab activities.

Planning

This phase typically lasts one to two months, depending on complexity. In this phase we work with our customers to clearly define the objectives and goals of the lab engagement. We also determine their physical and software topology. The customer provides an initial test plan, which becomes the starting point for conversations with a Microsoft Test Architect.

- Determine lab goals and objectives

- Determine hardware and software requirements for the testing topology

- Determine the high-level test plan and application architecture

- Determine which Microsoft subject matter experts are needed to help execute the test engagement

Here are some of the questions that help us to properly scope out the items listed above. This will help you see the level of detail we need to capture and understand for a successful lab engagement.

I Background

This is where the customer provides some basics about the engagement itself, including:

- Customer Background (Market segment, etc.)

- Business Need (Marketing numbers, hardware requirements, porting, etc.)

- Engagement Objectives (Goal statement—2-3 sentence description of goals)

- Success Criteria (Listing of metrics for success—concurrent users, pages/sec, etc.)
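
Success criteria are easiest to check later if they are written down as explicit, measurable targets. The following is a minimal sketch of one way to record them, not a format used by the lab team. The `Criterion` class, the `evaluate` helper, the metric names, and all numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One measurable success criterion (hypothetical structure)."""
    name: str
    target: float
    higher_is_better: bool = True  # False for metrics such as response time

    def is_met(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


def evaluate(criteria, measured):
    """Return {criterion name: met?}; a missing measurement counts as not met."""
    results = {}
    for criterion in criteria:
        value = measured.get(criterion.name)
        results[criterion.name] = value is not None and criterion.is_met(value)
    return results


# Illustrative targets and measurements only; real values come from the customer.
criteria = [
    Criterion("concurrent_users", 5000),
    Criterion("pages_per_sec", 200),
    Criterion("avg_response_ms", 800, higher_is_better=False),
]
measured = {"concurrent_users": 5200, "pages_per_sec": 185, "avg_response_ms": 640}

print(evaluate(criteria, measured))
# {'concurrent_users': True, 'pages_per_sec': False, 'avg_response_ms': True}
```

Recording the direction of each metric (higher or lower is better) alongside the target avoids ambiguity when throughput and latency goals are listed together.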

II Architecture

- What is the architecture of the application you will be testing? How many tiers (Web Application 3-tier, Client Server 2-tier, Database Application 1-tier, Web Services n-tier)? Is the database SQL Server or Oracle? Will you test using DBLib, ODBC, OLE-DB, or OCI?

- Is the middle tier using MTS, IIS, ASP.NET, COM, JSP, ISAPI, DCOM, or ASP? Is it written in VB, C++, or Java?

- What application protocols are you testing (HTTP, HTTP Secure Sockets, FTP, .NET, RMI (Java), EJB, POP3, SMTP, LDAP etc…)?

- Is the front end thin or fat? Using IE, Netscape, DHTML, ActiveX, Java Script, VB Script, VB, VC++ or Java?

- Which tier do you wish to test? Will this be a db (ODBC, OLEDB) benchmark or a middle tier (IIS) benchmark? Or a functional front-end test (GUI)?

- Will Wide Area Network (WAN) connectivity be simulated? If so, what are the metrics for the WAN links to be simulated (e.g. packet loss, latency, speed)?

III Testing Requirements

- Do you have scenarios identified that you want to test? How many scripts do you expect to run?

- Will you need actual physical clients (one instance per client machine) or virtual clients (multiple instances per client machine)?

- Have you tested this application before? If so, what was used for testing (e.g. third-party software)? Have you used Mercury LoadRunner/WinRunner, Segue SilkPerformer/SilkTest, or IBM Rational Software for any testing?

IV Hardware Requirements

- What kind of hardware is required for this test? How many servers will be needed?

- How many client machines will you need?

- What are the configuration requirements for each type of machine? DB server (disks, disk configuration, RAM, processors); web servers (how many, disks, disk configuration, RAM, processors); application servers (how many, disks, disk configuration, RAM, processors); client machines (how many, disks, disk configuration, RAM, processors).

- Are there any external (SAN or SCSI) disk requirements for the servers, e.g. the database server? If so, what type and what is the configuration?

- Do you have any load balancing requirements? If so, what type (software or hardware)?

- What OS and IE version need to be loaded on which machines (database server, web servers, application servers, client machines, etc.)? Would you like all critical Windows updates installed on all of the machines? Note: all software beyond the OS and IE (SQL, BackOffice, Exchange, etc.) must be installed by the Engagement Owner or the Customer.

- Will you be bringing any equipment? If so, we need lots of details on this (power, footprint, etc.)

- How many people will be attending? (Please list their names and role if possible)

- How will you be bringing your data? (CD, DVD, DLT Tape, Laptop, or Hard drive)? If you are bringing a DLT tape, what type will it be? If you are bringing a hard drive, what type will it be?

V Knowledge Resources Required

- Are any technical resources required to attend during the engagement for the purposes of consultation? We will need a detailed test plan identifying which days you need what type of resource so that they can be scheduled accordingly.

Based on the information above, our consultants will create the environment needed and install the appropriate Microsoft software.

Execution Phase

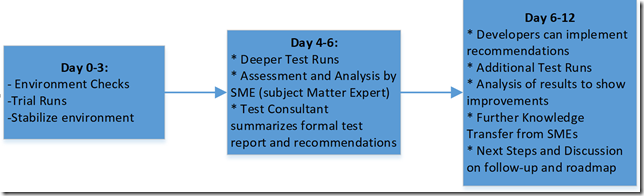

This phase typically lasts 2-3 weeks, again depending on complexity. In most cases the engagement is delivered onsite at Microsoft labs.

- Customers will be given remote access to the lab environment so that they can install any non-Microsoft software and run a smoke test validation.

- Customers will then travel to our lab facilities and work with their assigned Microsoft test architect to set up a plan for daily test runs. They can also conduct testing remotely. This involves an extra step: understanding the impact of latency on the test scenarios and any mitigations that need to be incorporated.

- Establish a baseline run to compare against. Once the baseline has been established, each iteration is measured against it to gauge whether the iteration exceeded the baseline metrics. The baseline run serves as the Performance Acceptance Criteria.

- After the baseline is established, we test each scenario in the test plan one by one. A typical two-week execution follows the schedule below:

In a three-week engagement, the final week is used for additional summary reports and for setting up the final closing call, which may include remote stakeholders and developers who can directly benefit from the engagement's outcome. During the closing call, additional recommendations may be discussed, along with action plans for applying what was learned from the engagement with Microsoft Premier Developer.
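
The baseline comparison described in the execution steps can be sketched as a simple percent-change calculation per metric. This is only an illustration under stated assumptions, not the tooling used in the lab: the `compare_to_baseline` function, the metric names, and the sample numbers are all hypothetical.

```python
def compare_to_baseline(baseline, run, higher_is_better):
    """Compare one test iteration against the baseline run.

    Returns {metric: (percent change vs. baseline, improved?)}.
    Metrics missing from the run, or with a zero baseline, are skipped.
    """
    report = {}
    for metric, base in baseline.items():
        if metric not in run or base == 0:
            continue
        change_pct = (run[metric] - base) / base * 100.0
        # For throughput-style metrics an increase is an improvement;
        # for latency-style metrics a decrease is an improvement.
        if higher_is_better.get(metric, True):
            improved = change_pct > 0
        else:
            improved = change_pct < 0
        report[metric] = (round(change_pct, 1), improved)
    return report


# Illustrative numbers only.
baseline = {"pages_per_sec": 200.0, "avg_response_ms": 800.0}
iteration = {"pages_per_sec": 230.0, "avg_response_ms": 720.0}
direction = {"pages_per_sec": True, "avg_response_ms": False}

print(compare_to_baseline(baseline, iteration, direction))
# {'pages_per_sec': (15.0, True), 'avg_response_ms': (-10.0, True)}
```

Keeping the same comparison for every daily run makes it straightforward to see which change in the environment or the application moved a metric relative to the baseline.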

Premier Support for Developers provides strategic technology guidance, critical support coverage, and a range of essential services to help teams optimize development lifecycles and improve software quality. Contact your Application Development Manager (ADM) or email us to learn more about what we can do for you.
