This post is provided by App Dev Manager Dave Harrison based on an interview with Aaron Bjork, Principal Group Program Manager for VSTS (Visual Studio Team Services) at Microsoft.

The following content is shared from an interview conducted in January with Aaron Bjork, Principal Group Program Manager for the VSTS (Visual Studio Team Services) product at Microsoft. Many people ask us how Microsoft accomplished our transformation with DevOps. Our interview with him opened up some valuable lessons that could be applied to any large enterprise trying to transform the way they deliver value and get feedback faster.

I just want to stress that you can’t follow what we did on the Visual Studio Team Services (VSTS) team like a prescription. There’s not another product in the world like ours; it would be foolish for me to say, you should exactly do it our way.

That being said, I do see some common elements in teams that successfully make the jump to DevOps:

- Have a single cadence across all your teams. I haven’t seen a single place yet where that won’t apply. Your teams within that cadence can have significant freedom and autonomy, but we want everyone to be dancing to the same beat.

- Ship at the end of each sprint. The saying we live by goes – “You can’t cheat shipping.” If you deliver working software to your users at the end of every iteration, you’ll learn what it takes to do that and which pieces you’ll need to automate. If you don’t ship at the end of each iteration, human nature kicks in and we start to delay, to procrastinate. Shipping at the end of a sprint is comfy and righteous and produces the right behaviors.

- We same-size our teams. Every team has a consistent size and shape – about 8-12 people, working across the stack all the way to production support. This helps not just with delivering value faster in incremental sizes, but gives us a common taxonomy so we can work across teams at scale. Whenever we break that rule – teams that are smaller than that, or bloat out to 20 people for example – we start to see anti-patterns crop up; resource horse-trading and things like that. I love the “two pizza rule” at Amazon; there’s no reason not to use that approach, ever.

- Have each team own their features as a product. Our teams own their features in production. If you start having siloed support or operations teams running things in production, almost immediately you start to see disruption in continuity and other bad behaviors. It doesn’t motivate people to ship quality and deliver end to end capabilities to users; instead it becomes a “not it” game.

In handling support, each sprint our teams are split into an “F” team and an “L” team. The F team is focused on new features; the L team is focused on disruptions and lifecycle. We rotate these people, so every sprint a different pair of engineers handles bug fixes and interruptions while the other 10 do new feature work. This helps people schedule their lives when they’re on call.
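To make the rotation concrete, here is a minimal sketch of one way an F/L split could be computed per sprint. It is an illustration only, not the VSTS team's actual tooling: the function name `split_team`, the round-robin order, and the engineer names are all assumptions.

```python
from typing import List, Tuple


def split_team(engineers: List[str], sprint: int, l_size: int = 2) -> Tuple[List[str], List[str]]:
    """Return (f_team, l_team) for a given sprint number.

    Assumption: the L team is picked round-robin, so each sprint the
    next `l_size` engineers in the list take the interrupt duty.
    """
    n = len(engineers)
    start = (sprint * l_size) % n
    l_team = [engineers[(start + i) % n] for i in range(l_size)]
    f_team = [e for e in engineers if e not in l_team]
    return f_team, l_team


# Hypothetical 12-person team: 2 on the L team, 10 on the F team.
team = [f"eng{i}" for i in range(12)]
f_team, l_team = split_team(team, sprint=1)
print(l_team)       # ['eng2', 'eng3']
print(len(f_team))  # 10
```

With 12 engineers and a pair on the L team, everyone comes up for interrupt duty once every six sprints, which is what makes the on-call schedule predictable.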

We’ve gone through a big movement in the past few years where we took our entire test bed, which was largely focused on automated UI tests with not a lot of unit testing, and flipped it on its head. Now we are running far fewer automated UI tests and a ton of what we call L1 and L2 tests, which are essentially unit tests at the lowest levels checking components and end to end capabilities. This allows us to run through our test cycle much faster, as often as every commit. I think you still have to do some level of acceptance testing; just determine what level works for your software base and helps drive quality.

We started to deploy every 3 weeks instead of twice a year. Another thing was, we moved everyone into the same building, reporting up to the same structure/org. The folks that run our ops are a part of our leadership team just like our engineering and program management team – all under the same umbrella. This started getting everyone bought into the shared goals we have. We have monthly business reviews, where we talk about more than just the technical goals: financials, operations, bug health, not just code. This helps us align on the same goal, bringing people under the same umbrella so we are invested in the other side, if you will.

Our teams own features in production – we hire engineers who write code, test code, deploy code, and support code. In the end that’s DevOps. Now our folks have a relationship with the people handling support – they have to. If you start with that setup, the rest falls into place. If you have separate groups, each responsible for a piece of the puzzle, that’s a recipe for not succeeding, in my view.

Branching is similar, in that we don’t have long-lived branches at all. We do have a release branch; our engineers check out their work from mainline, though, and they check in their short-lived branches directly to main. In general, I’d say people are checking their changes into their user branch every day; every other day they submit a pull request to integrate their user branch back to main. The team handles all merge issues internally; everything is validated to work before it’s checked in.

When I think about how we handle releases, a couple things come to mind. First, we want to minimize the time that any code is written in isolation. We used to have the mindset that, at the beginning of each sprint, teams would check their code into a feature branch and then integrate back at the end of the sprint. The problem with this is, the longer you stay away from master, the harder it is to integrate, and you pay a massive tax with merge issues. We want to check into master continuously; that’s a very important construct for us. Second, we wanted to get into the mindset that when a feature is ready, it’s easy to put it into production. Instead of the idea that we will put a new feature into production when it’s 100% ready, move to where the feature is ALWAYS being put into prod. We were trying to get out of managing engineering mechanics; it was something we were constantly having to manage, where I felt it should be more of a consistent, without-thinking kind of mechanical movement. Now our mechanics are the same whether something is a bug, a critsit incident or a new feature – and we do it without thinking. Getting to that model and thinking that way required some change – but now, we’re always writing code, always deploying code. Feature flags were a big help to us, because we can turn on access to a new feature when we’re ready – it’s safe, controlled.
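The idea of shipping code continuously while controlling exposure with feature flags can be sketched roughly as follows. This is a generic, in-memory illustration and not the flag system VSTS uses; the `FeatureFlags` class, the `render_dashboard` function, and the flag name `new-dashboard` are hypothetical.

```python
class FeatureFlags:
    """Tiny in-memory flag store (assumption: real systems persist
    flags and can scope them to users, accounts, or rings)."""

    def __init__(self) -> None:
        self._enabled = set()

    def enable(self, name: str) -> None:
        self._enabled.add(name)

    def disable(self, name: str) -> None:
        self._enabled.discard(name)

    def is_enabled(self, name: str) -> bool:
        return name in self._enabled


def render_dashboard(flags: FeatureFlags) -> str:
    # The new code path is already deployed; the flag only decides
    # whether users can reach it.
    if flags.is_enabled("new-dashboard"):
        return "new dashboard"
    return "current dashboard"


flags = FeatureFlags()
print(render_dashboard(flags))   # current dashboard
flags.enable("new-dashboard")    # turn on access when the team is ready
print(render_dashboard(flags))   # new dashboard
flags.disable("new-dashboard")   # and back off again without a redeploy
print(render_dashboard(flags))   # current dashboard
```

The point of the pattern is that deployment and release become separate decisions: unfinished work can merge to the mainline and ship dark, and turning it on or off is a configuration change rather than a new deployment.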

Pair programming is accepted widely as a best practice; it’s also a culture that shapes how we write code. The interesting thing here is we don’t mandate pair programming. We do teach it; some of our teams have embraced pair programming and it works great for them, always writing in tandem. Other teams have tried it, and it just hasn’t fit. We do enforce consistency on some things across our 40 different teams; others we let the team decide. Pair programming and XP practices are one thing we leave up to the devs; we treat them as adults and don’t shove one way of thinking down their throats.

Another big help to us is a kind of team of teams meeting, which we have once every sprint. This is not a “get everybody in the room” type of meeting; it’s very focused, with about 4-6 people in the room, each representing their team. We don’t talk about what we’re doing now, but what we’re working on three sprints ahead. It always amazes me how many “A-Ha!” moments we have during these meetings. It really helps expose points of dependency that we weren’t aware of; “Hmm, we should probably sync up and make sure we have a shared point of view”. In my view this is very agile; it’s lightweight, just enough to accomplish the purpose.

We do track one metric that is very telling – the number of defects a team has. We call this the bug cap. You just take the number of engineers and multiply it by 4 – so if your team has 10 engineers, your bug cap is 40. We operate under a simple rule – if your bug count is above this bug cap, then in the next sprint you need to slow down and pay down that debt. This helps us fight the tendency to let technical debt pile up and become a boat anchor you’re dragging everywhere and having to fight against. With continuous delivery, you just can’t let that debt creep up on you like that. We have no dedicated time to work on debt – but we do monitor the bug cap and let each team manage it as they see best. I check this number all the time, and if we see that number go above the limit we have a discussion and find out if there’s a valid reason for that debt pileup and what the plan is to remedy it. Here we don’t allow any team to accrue a significant debt, but we pay it off like you would a credit card – instead of making the minimum payment, though, we’re paying off the majority of the balance every pay period. It’s often not realistic to say “Zero bugs” – some defects may just not be that urgent or shouldn’t come ahead of hot new feature work in priority. This allows us to keep technical debt to a reasonable number and still focus on delivering new capabilities.
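The bug cap arithmetic is simple enough to write down. The sketch below uses the multiplier of 4 and the "above the cap" rule from the interview; the function names and the sample team are assumptions for illustration.

```python
BUGS_PER_ENGINEER = 4  # multiplier quoted in the interview


def bug_cap(engineers: int) -> int:
    """Bug cap = number of engineers x 4."""
    return engineers * BUGS_PER_ENGINEER


def must_pay_down_debt(engineers: int, open_bugs: int) -> bool:
    """True when the open bug count is above the cap, meaning the team
    should slow down next sprint and pay down debt."""
    return open_bugs > bug_cap(engineers)


# Hypothetical team of 10 engineers.
print(bug_cap(10))                 # 40
print(must_pay_down_debt(10, 38))  # False
print(must_pay_down_debt(10, 47))  # True
```

Note that a team sitting exactly at the cap (40 open bugs for 10 engineers) is not over it under this reading, since the rule as stated triggers only when the count is above the cap.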

We have an engineering scorecard that’s visible to everyone but we’re very careful about what we put on that. Our measurements are very carefully chosen and we don’t give teams 20 things to work on – that’s overwhelming. With every metric that you start to measure, you’re going to get a behavior – and maybe some bad ones you weren’t expecting. We see a lot of companies trying to track and improve everything, which seems to be overburdening teams – no one wants to see a scorecard with 20 red buttons on it!

Agile is a culture more than anything else but – I’m going to be frank – too many people have turned it into a religion, a stone tablet with a bunch of “thou shalts” on it. Some organizations we’ve worked with, for example, bring in multiple rounds of expensive consultants and agile trainers, and they’re given an audit. “Oh, you’re not doing DSUs, your sprint planning meeting doesn’t have the right amount of ceremony, blah blah.” This makes me laugh a little. Do I think daily standups are good practice? Yes, I do. But I’m not going to measure a team’s efficiency by these things. If the team is struggling to produce business value, then we might bring in some of these practices. But it is SO shortsighted to say that if you follow these practices, following this recipe, you’ll be successful. I don’t allow people to start telling me “we need to do things Agile.” There’s just no such thing. Talk to me about what you want to achieve, the business value you want to drive, and that’s our starting point.

Just because you have a DSU doesn’t mean you’re making the right decisions. Just because you’re using containers or have adopted microservices doesn’t mean you’re doing DevOps. Maybe you’re better set up to do Agile or DevOps because of these tools, but nothing really has changed. Agile’s very simple and beautiful as a mindset – we are going to deploy as frequently as we can. Too often we turn it into a set of rules you have to follow.

Note: if you want more on this story and how Microsoft went about their transformation, check out Aaron’s presentation here – 41 minutes that could very well change your whole view of how to go about your own transformation. It remains one of the best real-world encapsulations of DevOps that I’ve ever seen. Some more DevOps stories from the Visual Studio team are here, including our understanding of what DevOps is and Munil Shah’s excellent thoughts on “shifting Left” with our test infrastructure. These and other interviews and case studies will form the backbone of our upcoming book “Achieving DevOps” from Apress, due out in late 2018. Contact me if you’d like an advance copy!

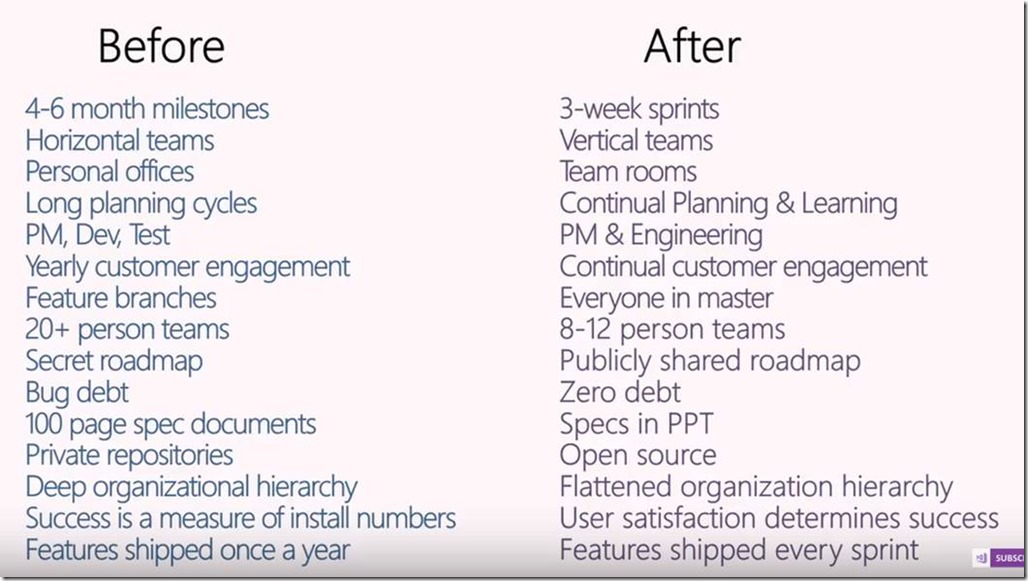

(from Aaron’s presentation deck on “Agile at Microsoft”, https://www.youtube.com/watch?v=-LvCJpnNljU )

Premier Support for Developers provides strategic technology guidance, critical support coverage, and a range of essential services to help teams optimize development lifecycles and improve software quality. Contact your Application Development Manager (ADM) or email us to learn more about what we can do for you.
