You may have already read about the suite of open-source Accessibility Insights products in Mark Reay’s post from August. In this blog post, we are going to talk about one of the tools in the suite: Accessibility Insights for Windows, which enables users to inspect and test Windows applications to find and fix accessibility issues. The tool draws inspiration from legacy Windows accessibility testing tools from Microsoft, building on their features to offer additional automated and assisted testing functionality wrapped up in a modern user interface. Unlike many of these earlier tools, Accessibility Insights for Windows is built on the .NET Framework using WPF and leverages Microsoft’s UI Automation framework.

Let’s dive into the core functionality of Accessibility Insights for Windows.

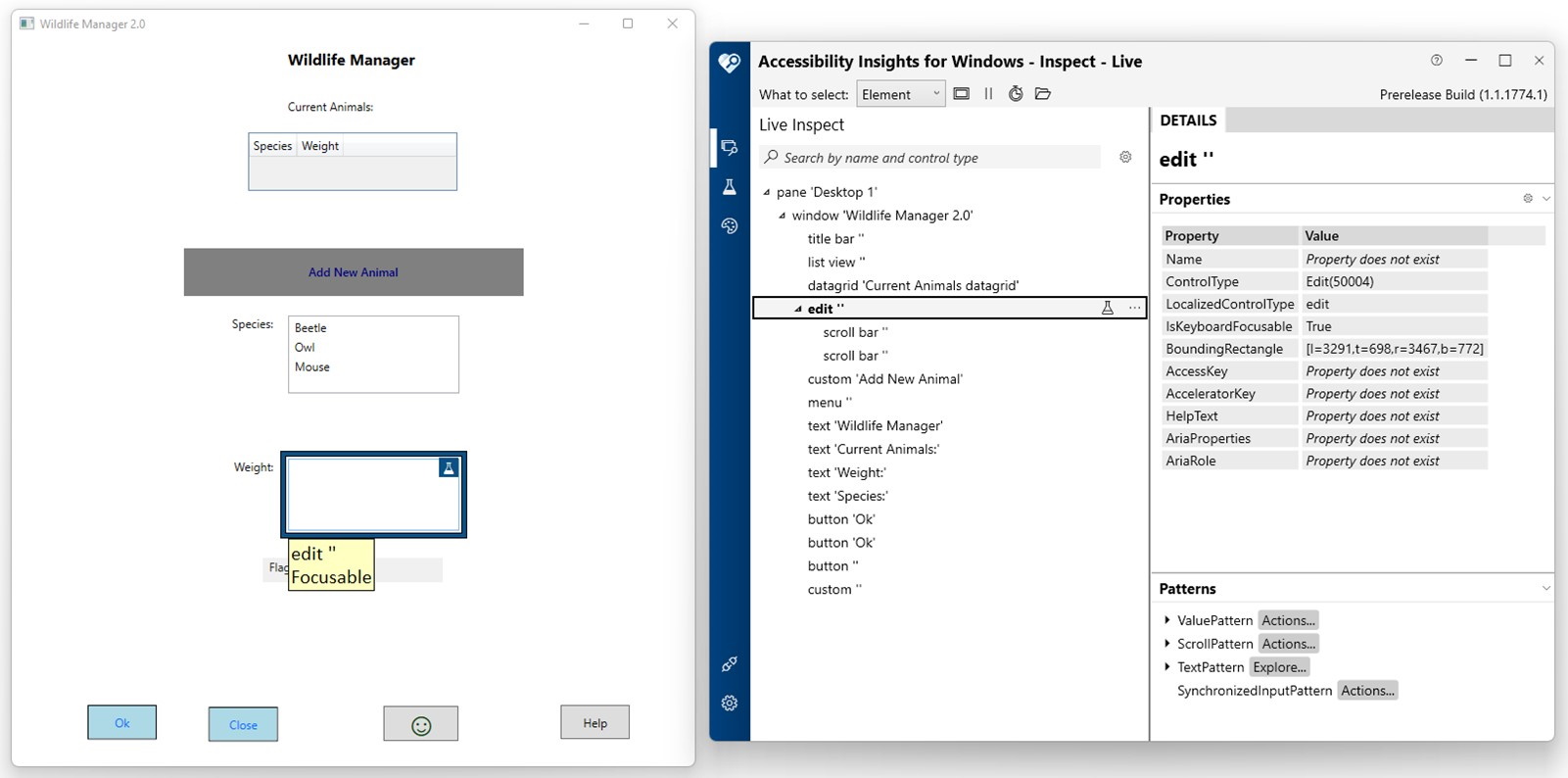

Inspect Mode

Inspect mode in Accessibility Insights for Windows

When you want to better understand what raw accessibility information will be available to assistive technologies, Accessibility Insights for Windows’s Inspect mode is the way to go. As you select elements using the mouse or keyboard, Accessibility Insights for Windows exposes the element’s position in the UI Automation tree alongside its properties and patterns. As an example, imagine a screen reader is narrating something unexpected for a particular button on your application. If you open Accessibility Insights for Windows in Inspect mode, you can quickly view what text is associated with that button’s UI Automation element, as well as information about neighboring elements. Since this is the same information a screen reader has access to, the data can be vital to diagnose and fix your issue.

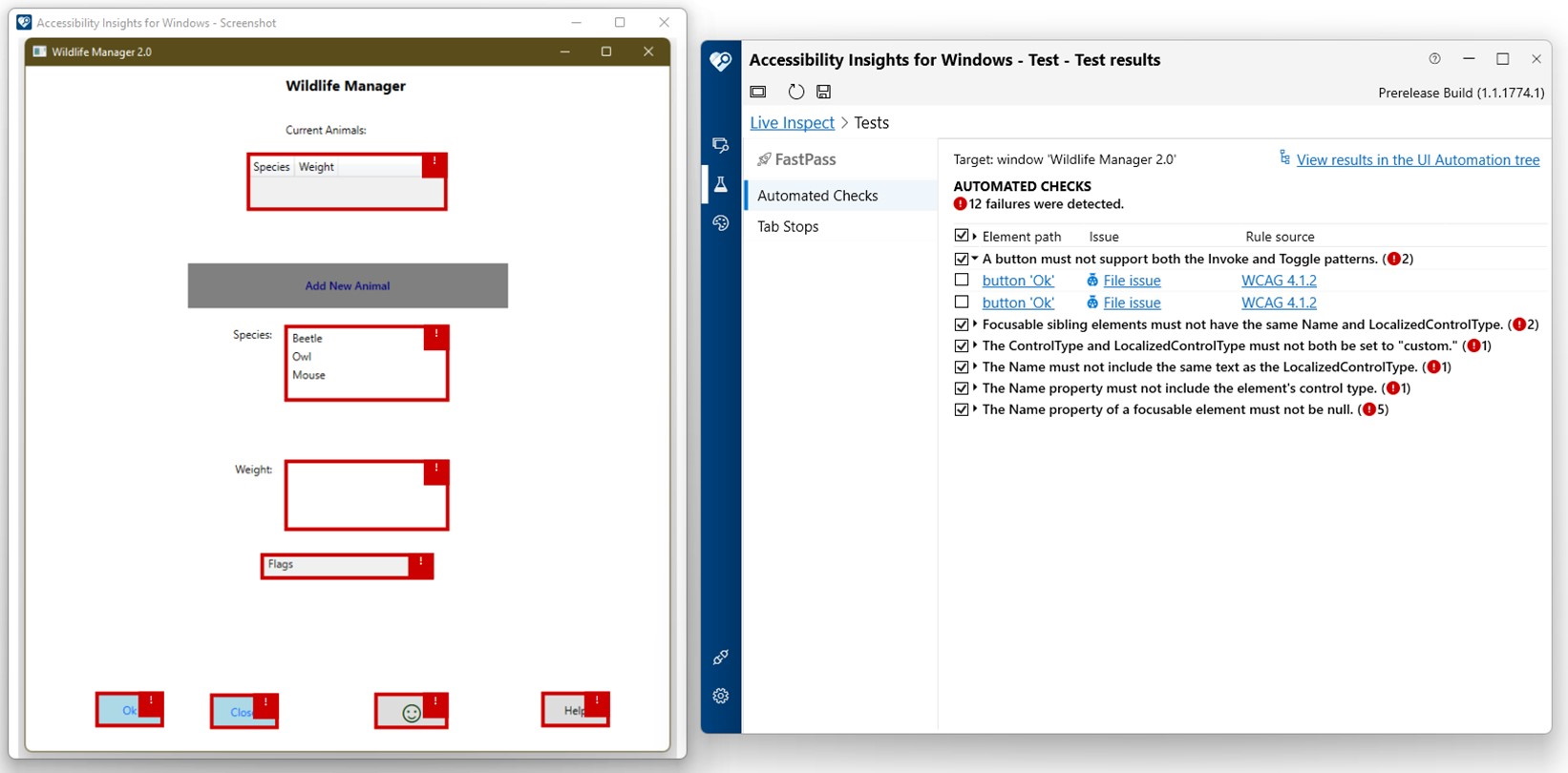

FastPass: Automated Checks and Tab Stops

FastPass, which is also available in our Web and Android products, is a lightweight, two-step process that helps developers identify common, high-impact accessibility issues in less than five minutes. In the first step, users run Automated Checks, a test that checks compliance with dozens of accessibility rules, highlights results on a screenshot of the tested application, explains how to fix each issue, and links to sample code for potential fixes. You can find these code examples and more on Microsoft’s technical documentation website.

Automated checks

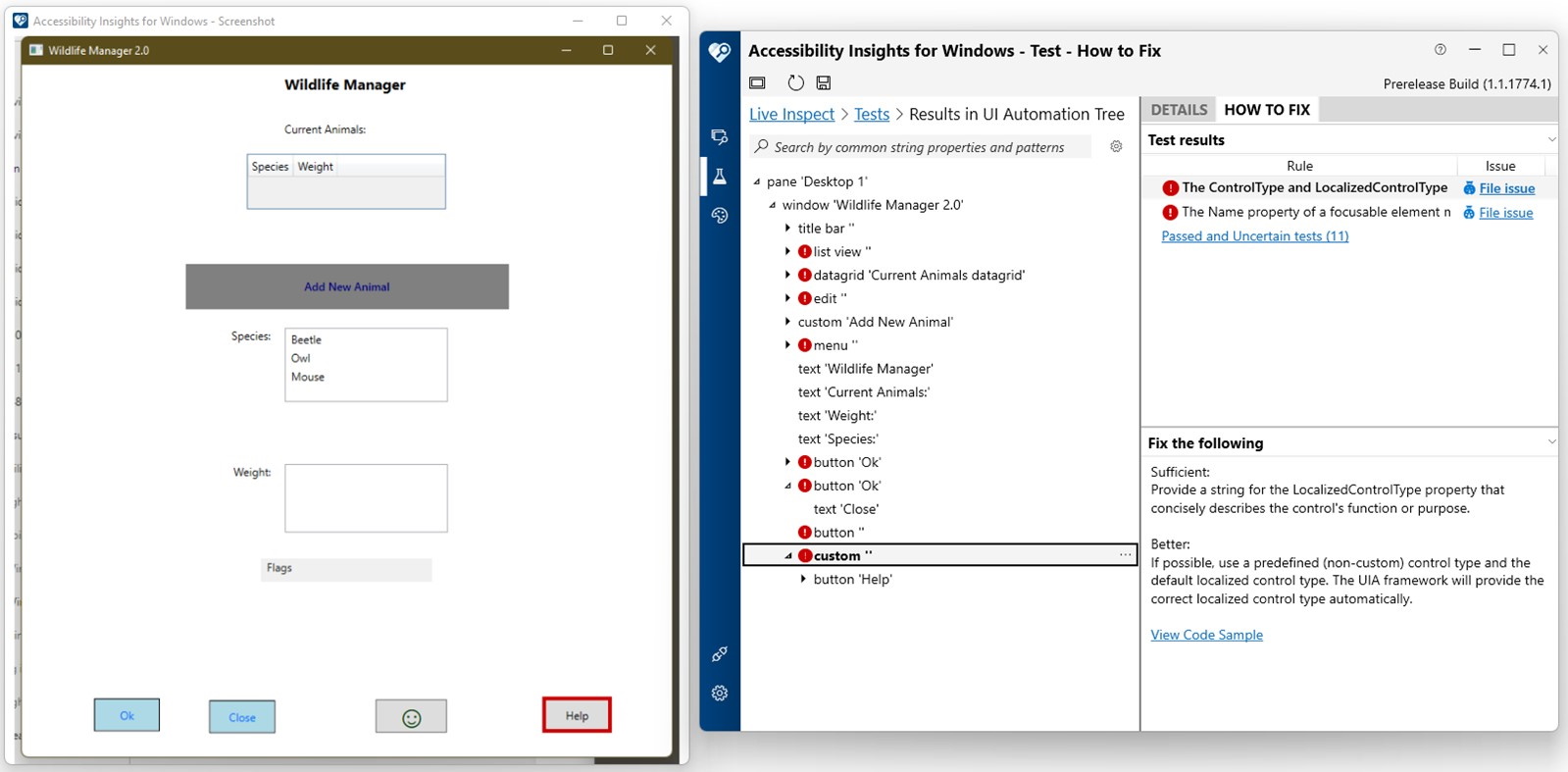

To better understand a particular result, users can navigate to a snapshot of the Inspect view at the time of scanning to view relevant UI Automation properties and patterns.

Inspected rule results and how-to-fix information

The results from Automated Checks can be saved, shared, and loaded with a .a11ytest file, which includes Automated Checks results, the accompanying UI Automation snapshot data, and the screenshot of the tested application. You can file issues to either GitHub or Azure DevOps directly from Accessibility Insights for Windows. For both platforms, relevant information about the rule result is automatically populated in the issue. For Azure DevOps, we automatically attach a screenshot and .a11ytest file to the issue once it is created.

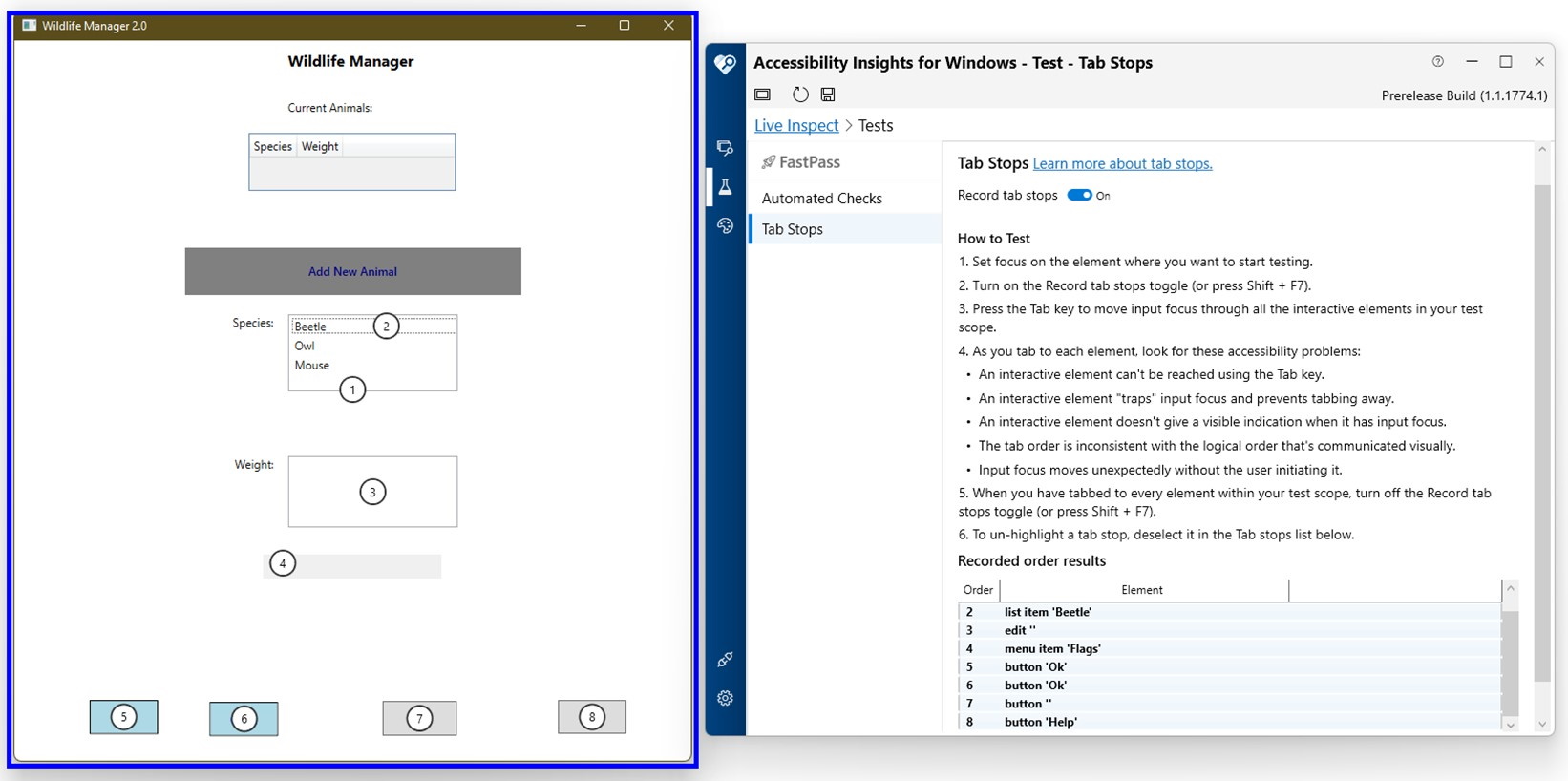

The next step is the Tab Stops assisted test. As you navigate through your target application using the keyboard, Accessibility Insights for Windows highlights and lists any elements that receive focus. It gets this information by handling UI Automation FocusChanged events, just as a screen reader does. Using this data, users can quickly identify accessibility issues such as elements missing from the tab order.

Tab stops mode
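To make the idea behind the Tab Stops test concrete, here is a minimal Python sketch of the comparison it supports. It is not how Accessibility Insights for Windows is implemented: the function name `missing_from_tab_order` and the plain lists of element names are assumptions for illustration, standing in for the elements you expect to be reachable and the elements that actually raised FocusChanged events while tabbing.

```python
from typing import List


def missing_from_tab_order(expected: List[str], focused: List[str]) -> List[str]:
    """Return expected elements that never received keyboard focus.

    `expected` is a hypothetical list of interactive element names;
    `focused` is the sequence of element names recorded from focus events.
    """
    seen = set(focused)
    # Preserve the order of `expected` so the report reads top to bottom.
    return [name for name in expected if name not in seen]


# Illustrative data only: tabbing wrapped back to "Name" without reaching "Submit".
expected_elements = ["Name", "Email", "Submit"]
recorded_focus = ["Name", "Email", "Name"]
print(missing_from_tab_order(expected_elements, recorded_focus))
```

In the tool itself this comparison is done visually: you watch the highlighted elements and the recorded list, and spot the control that never appears.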

Event Mode

Inspect mode is great for viewing the same data from your application as assistive technologies (ATs). Often, though, this data only offers a partial view of what ATs access and report. For example, imagine you noticed during the Tab Stops test that a button didn’t show up in the tab order despite visually receiving focus. Upon further investigation, you discover that a screen reader doesn’t seem to realize that there is a button at all. In that situation, Event mode might help you find answers. With Event mode, you can monitor and inspect the same UI Automation events that ATs observe. In this button-missing scenario, listening to events on the button could reveal that no UI Automation FocusChanged event occurs when the button appears to receive focus. If the screen reader can’t observe this event, it won’t attempt to read the element, regardless of how well populated its properties and patterns are. Similar to Automated Checks, Accessibility Insights for Windows allows users to save, share, and load recorded events and their accompanying UI Automation data in .a11yevent files.

Color Contrast Analyzer

Accessibility Insights for Windows offers Color Contrast Analyzer mode to allow users to manually test for color contrast accessibility issues. In this mode, users can select any two colors on screen using an eyedropper tool or manually via hex code and color palette and view their color contrast ratio alongside information about whether that ratio meets WCAG color contrast requirements.

Using Color contrast analyzer
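For readers curious about the number behind the ratio, the following Python sketch computes a contrast ratio using the relative luminance definition from WCAG 2.1 and compares it against the Level AA thresholds (4.5:1 for normal text, 3:1 for large text). The function names are our own, and this is an illustration of the published formula rather than the tool's actual code.

```python
from typing import Tuple


def parse_hex(color: str) -> Tuple[int, int, int]:
    """Parse a '#RRGGBB' string into 8-bit red, green, and blue values."""
    value = color.lstrip("#")
    if len(value) != 6:
        raise ValueError(f"expected #RRGGBB, got {color!r}")
    return int(value[0:2], 16), int(value[2:4], 16), int(value[4:6], 16)


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.1 definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(color: str) -> float:
    r, g, b = parse_hex(color)
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """Return the contrast ratio, from 1.0 (identical) up to 21.0 (black on white)."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def meets_wcag_aa(color_a: str, color_b: str, large_text: bool = False) -> bool:
    """Check the ratio against the WCAG Level AA minimum for text."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(color_a, color_b) >= threshold


print(round(contrast_ratio("#000000", "#FFFFFF"), 2))  # 21.0
print(meets_wcag_aa("#767676", "#FFFFFF"))
```

The order of the two colors does not matter, since the lighter one is always placed in the numerator.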

Axe.Windows

Now that we’ve covered Accessibility Insights for Windows’s main features, let’s take a moment to highlight an interesting element behind the scenes: Axe.Windows. Available as a NuGet package, Axe.Windows takes the data provided by UI Automation and compares it to a set of rules that identify cases where the given data would create issues for users of assistive technologies. These rules consider data such as an element’s properties, its patterns, and other elements in the UI Automation tree. The results of these rules are then displayed in the Automated Checks section of Accessibility Insights for Windows. In its initial iteration, what is now Axe.Windows was a part of Accessibility Insights for Windows. Once Axe.Windows was separated into its own package, we developed a command-line interface that enables you to run our rules as part of your own tests and CI/CD pipelines.
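The rule-based approach can be pictured with a small, self-contained Python sketch. Everything here is hypothetical: the `Element` and `Rule` types, the `scan` function, and the single example rule are our own illustration of "walk the tree and test each element against rules", not the Axe.Windows API or its real rule set.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterator, List, Tuple


@dataclass
class Element:
    """Hypothetical stand-in for a UI Automation element snapshot."""
    control_type: str
    name: str = ""
    children: List["Element"] = field(default_factory=list)


@dataclass
class Rule:
    """A rule applies to some elements and passes or fails on each of them."""
    rule_id: str
    applies_to: Callable[[Element], bool]
    passes: Callable[[Element], bool]


def walk(root: Element) -> Iterator[Element]:
    """Yield every element in the tree, depth first."""
    yield root
    for child in root.children:
        yield from walk(child)


def scan(root: Element, rules: List[Rule]) -> List[Tuple[str, Element]]:
    """Return (rule_id, element) pairs for every rule violation found."""
    return [
        (rule.rule_id, element)
        for element in walk(root)
        for rule in rules
        if rule.applies_to(element) and not rule.passes(element)
    ]


# Illustrative rule: a button with no name gives a screen reader nothing to read.
button_has_name = Rule(
    rule_id="ButtonNameNotEmpty",
    applies_to=lambda e: e.control_type == "Button",
    passes=lambda e: bool(e.name.strip()),
)

window = Element("Window", "Demo", [Element("Button", "OK"), Element("Button", "")])
for rule_id, element in scan(window, [button_has_name]):
    print(rule_id, element.control_type, repr(element.name))
```

Separating "does this rule apply?" from "does the element pass?" keeps each rule small and lets a scan report only the elements a rule is actually about.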

With that, we wrap up our introduction to Accessibility Insights for Windows! You can learn more and download the tool at https://accessibilityinsights.io or check out its repo on GitHub at https://github.com/Microsoft/accessibility-insights-windows. Stay tuned for more posts about our Accessibility Insights tools for Android and Web in the next few months!
