Azure Cosmos DB is a fully managed, multi-tenant, distributed, shared-nothing, horizontally scalable database that provides planet-scale NoSQL capabilities, offering APIs for Apache Cassandra, MongoDB, Gremlin, Tables, and Core (SQL). A core component of the Azure Cosmos DB service is the API Gateway, which handles request parsing and routing across all supported APIs and now runs on .NET 6. The API Gateway is the front door for the service and accepts and processes trillions of requests daily! Given the high throughput and scalability demands on the API Gateway, we continually invest in optimizing the performance of the service, including by relying on new .NET features.

The API Gateway was initially developed on .NET Framework. On .NET Framework, we were limited in our ability to improve the performance of the API Gateway, and over the years, we moved the API Gateway to .NET Core. Moving to .NET Core (and .NET 6) unlocked numerous features and optimizations that we eagerly leveraged to enhance the service. Currently, this critical piece of infrastructure is powered by .NET 6 and utilizes multiple .NET performance and scalability features to achieve low latency, high throughput request processing end to end.

A service like Azure Cosmos DB requires using low-level platform features, and we were glad that .NET 6 included a new set for us to use. Our changes led to lower overall CPU utilization and improved the end-to-end P90 latency of core requests by up to 1500%! This post covers Azure Cosmos DB’s path with .NET and the numerous benefits that using the framework brought to the service.

Azure Cosmos DB Architecture

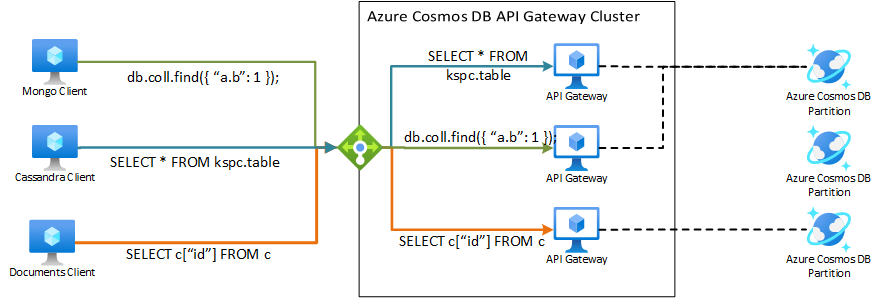

Azure Cosmos DB has a tiered architecture, consisting of a cluster of partitioned multi-tenant storage nodes that contain the user data and handle the distribution, replication, and processing of requests within each partition. The data is stored and processed in an API-agnostic manner in these storage nodes. In addition to the storage nodes, there are additional clusters that act as API Gateway nodes. These API Gateways accept requests across all APIs, parse/translate/process the requests, efficiently route the requests across distributed storage nodes, and dispatch the post-processed responses to the client.

Figure 1: Overall Architecture of the Azure Cosmos DB Service

API Gateway nodes are multi-tenant and host multiple .NET processes, each one handling requests for a given API. Each API Gateway process uses a subset of the node's resources, and these resources are load balanced across tenants as the cluster load and state change over time. Premium offerings are also available, where customers can purchase dedicated Gateway capacity to enable advanced capabilities such as write-through caching and data transformation. The premium offerings enable reserving resources in the service, such as cores and memory, that can handle semi-stateful workloads for that customer.

High throughput and predictable, low latency overhead for network-intensive scenarios are key requirements of the gateway. Because requests are processed first by the API Gateway and then by the storage nodes, the request processing overhead on the Gateway needs to be ‘effectively invisible’ to the end user. As we strive for minimal processing overhead, the behavior and functionality of the underlying framework become crucial to understand and build on. We rely heavily on .NET features to meet those performance goals.

Leveraging .NET for the API Gateway

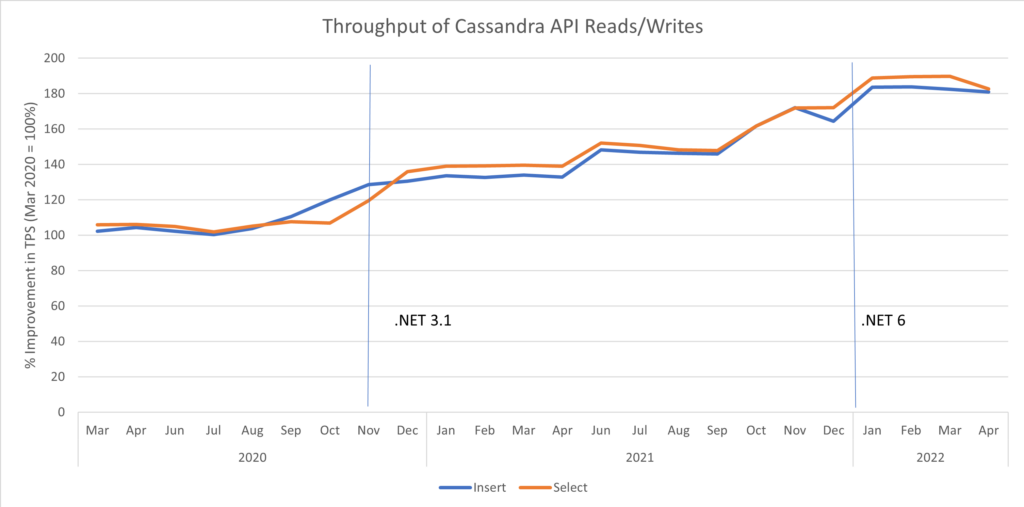

Building highly scalable, performant services requires a deep understanding of the underlying framework, and Azure Cosmos DB leverages several features of the .NET runtime to achieve its performance goals within Azure. Numerous places in the request pipeline use specific performance features of .NET to improve the allocation profile or reduce the processing overhead of a request. Over the years, each .NET upgrade has provided a noticeable improvement in performance. These gains came from changes in the Azure Cosmos DB codebase made while adopting the new framework, as well as from improvements within the runtime itself. The graph in Figure 2 shows the relative increase in throughput from each framework upgrade over the years (higher is better).

Figure 2: Relative Throughput of Simple Insert/Read queries on Cassandra API over the years (Baselined on March 2020)

HTTP Protocol Performance

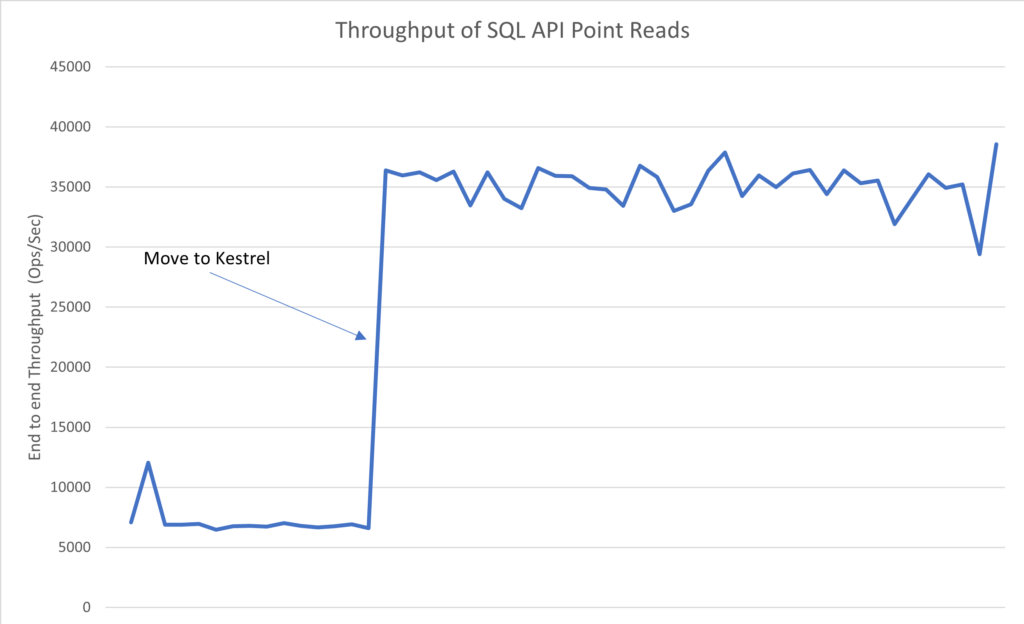

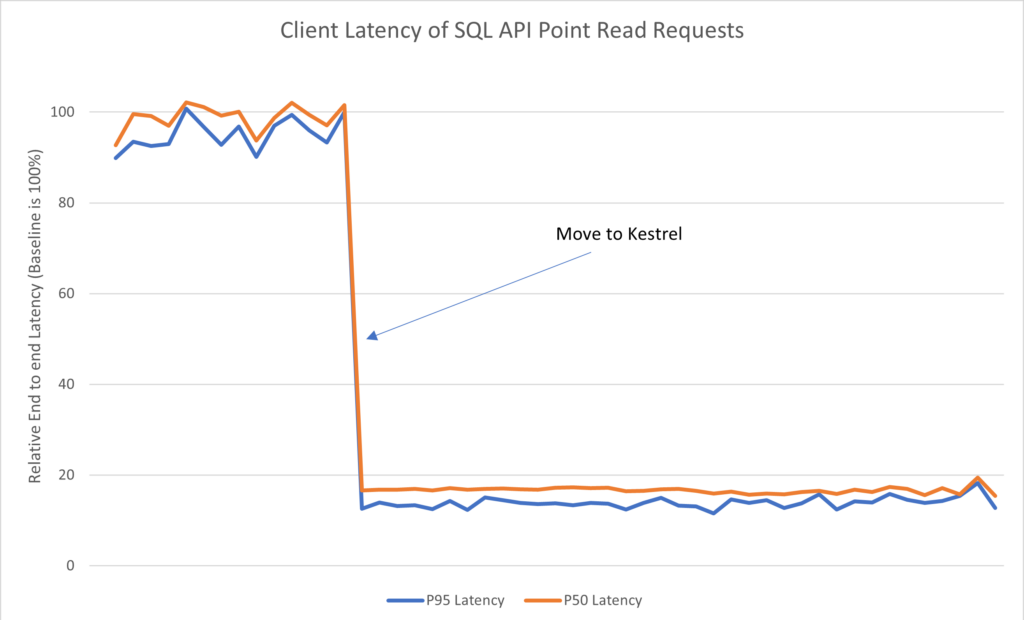

The transport protocol for the Documents (SQL), Table, and Gremlin APIs is based on HTTP. .NET Framework provides a default implementation using HttpListener, which leverages the HTTP.sys implementation in Windows to process requests. This API wraps the native callbacks and gives services a request/response-based model for HTTP. However, it also leaves significant performance benefits on the table: it cannot cache state pertinent to a user connection, and reading a given request incurs multiple user/kernel transitions. The API Gateway moved from the default HttpListener stack to the ASP.NET Core Kestrel stack, which implements the entire HTTP protocol in user mode with in-process parsing of the protocol and request state. This also allowed connection-bound state to be cached in process within the highly extensible and performant Kestrel stack. Currently, Azure Cosmos DB’s HTTP framework is entirely based on Kestrel, and the performance improvements from this move are shown in Figures 3 and 4.

Figure 3: Throughput improvement on a single Gateway Process post-Kestrel migration

Figure 4: End to end relative improvement in latencies for point read requests post-Kestrel migration (lower is better)

Kestrel benefits include much easier configuration management for SSL connections, caching of connection state for networking and account configurations, and much better buffer management due to using shared memory pools between the Kestrel transport layer and the API Gateway infrastructure.

Buffer Management and Low Allocation APIs

As a network intensive service, Azure Cosmos DB’s API Gateway deals with lots of byte buffers. A significant portion of processing entails copying/processing buffers from a source to a sink (be it from the customer socket to the target storage node, or vice versa). On top of this, the request must be partially parsed to understand the necessary routing information and tenant details. Minimizing the allocation and processing overhead of these workflows is critical in the pipeline of a request.
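The source-to-sink copy described above can be sketched with a pooled buffer. This is a minimal illustration under stated assumptions, not the Gateway's actual code: the helper name `CopyWithPooledBufferAsync`, the 4 KB buffer size, and the in-memory streams that stand in for the client socket and the storage node are all hypothetical.

```csharp
using System;
using System.Buffers;
using System.IO;
using System.Threading.Tasks;

// Copies everything from source to sink through a single pooled buffer,
// so the copy loop allocates no new byte arrays per read.
static async Task<long> CopyWithPooledBufferAsync(Stream source, Stream sink, int bufferSize = 4096)
{
    // Rent returns a buffer of at least bufferSize bytes; disposing the owner
    // hands the buffer back to the shared pool.
    using IMemoryOwner<byte> owner = MemoryPool<byte>.Shared.Rent(bufferSize);
    Memory<byte> buffer = owner.Memory;
    long total = 0;
    int read;
    while ((read = await source.ReadAsync(buffer)) > 0)
    {
        await sink.WriteAsync(buffer.Slice(0, read));
        total += read;
    }
    return total;
}

// Usage: in-memory streams stand in for the network endpoints (an assumption).
byte[] payload = new byte[10_000];
new Random(42).NextBytes(payload);
using var clientStream = new MemoryStream(payload);
using var storageStream = new MemoryStream();
long copied = await CopyWithPooledBufferAsync(clientStream, storageStream);
Console.WriteLine($"Copied {copied} bytes");   // Copied 10000 bytes
```

The buffer is rented once per copy rather than once per read, which is the property that keeps allocation pressure off the request path.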

Over the years, Azure Cosmos DB has heavily leveraged the Span, Memory, and MemoryPool implementations in the .NET libraries, including the MemoryExtensions APIs for string manipulation, to avoid allocations in the request path. Replacing string-based URI path extraction with MemoryExtensions APIs on ReadOnlyMemory, and parsing the protocol directly from UTF-8 spans, has significantly reduced the overhead of routing requests in the API Gateway. Using pooled, memory-backed buffers in the I/O path reduced network buffer allocations in the request path to almost zero, which in turn reduced the impact of garbage collections on P99 latencies.
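As an illustration of allocation-free path extraction, the sketch below slices a request path with span operations instead of `string.Split`. The `GetPathSegment` helper and the example path layout are assumptions made for this example; the post does not show the Gateway's real routing code.

```csharp
using System;

// Returns the n-th (0-based) segment of a URI path as a slice of the input.
// No substring or string[] is allocated; the result points into the same memory.
static ReadOnlyMemory<char> GetPathSegment(ReadOnlyMemory<char> path, int index)
{
    ReadOnlySpan<char> span = path.Span;
    int start = 0;
    if (!span.IsEmpty && span[0] == '/') start = 1;   // skip a leading '/'

    for (int current = 0; start <= span.Length; current++)
    {
        int length = span.Slice(start).IndexOf('/');    // MemoryExtensions.IndexOf
        if (length < 0) length = span.Length - start;    // last segment
        if (current == index) return path.Slice(start, length);
        start += length + 1;
    }
    return ReadOnlyMemory<char>.Empty;                   // index out of range
}

// Hypothetical request path, used only for this example.
ReadOnlyMemory<char> requestPath = "/dbs/orders/colls/items/docs/42".AsMemory();
ReadOnlyMemory<char> database = GetPathSegment(requestPath, 1);
ReadOnlyMemory<char> collection = GetPathSegment(requestPath, 3);

// Comparisons also work on spans without materializing a string.
bool isDbs = GetPathSegment(requestPath, 0).Span.Equals("dbs", StringComparison.OrdinalIgnoreCase);
Console.WriteLine($"{database} / {collection} / {isDbs}");   // orders / items / True
```

Only the final `Console.WriteLine` materializes strings; the routing decision itself works entirely on slices of the original buffer.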

Similarly, in-memory caches built on dictionaries are common. Adopting the newer ConcurrentDictionary overload of AddOrUpdate, which accepts a caller-supplied argument that is passed to the add and update delegates, removed the need for capturing lambdas and by itself reduced delegate allocation overhead in the request path to zero.
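A minimal sketch of that pattern follows, using the `AddOrUpdate` overload that takes a `factoryArgument`. The per-tenant counter is a hypothetical cache shape chosen for brevity, not something described in the post.

```csharp
using System;
using System.Collections.Concurrent;

var requestCounts = new ConcurrentDictionary<string, long>();

// Records request units for a tenant. The lambdas are marked 'static', so the
// compiler guarantees they capture nothing; 'units' travels through the
// factoryArgument parameter instead of a closure object.
long Record(string tenant, long units) =>
    requestCounts.AddOrUpdate(
        tenant,
        static (_, arg) => arg,                        // add: first value for the key
        static (_, existing, arg) => existing + arg,   // update: combine with existing value
        units);                                        // factoryArgument

Record("tenant-a", 5);
Record("tenant-a", 7);
Record("tenant-b", 1);
Console.WriteLine($"{requestCounts["tenant-a"]} {requestCounts["tenant-b"]}");   // 12 1
```

With the older overloads, a lambda that referenced `units` would allocate a closure and a delegate on every call; static lambdas can be cached by the compiler and reused.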

The impact of these improvements was visible when we moved from .NET Framework to .NET Core: one customer’s latencies improved by a factor of 5 after the migration.

NUMA Affinity and Process Scaling

As modern CPU architectures trend towards multiple cores and Non-Uniform Memory Access (NUMA), services need to adapt to that architecture. Azure Cosmos DB’s Gateway runs on VMs with hundreds of cores and multiple NUMA nodes. Running large processes that span multiple NUMA nodes yields sub-optimal performance because of cross-NUMA interactions in the GC and thread pool. This is also a fairly common phenomenon in processes that run with more than 64 cores (across processor groups in Windows).

Consequently, Azure Cosmos DB affinitizes processes with Job Objects to specific cores within a NUMA node and runs multiple such processes on a given node. This allows for better memory locality, better utilization of caches, and much better predictability of performance. It also helps in improving the governance of rogue queries, tenants, and requests as the scope of managing such errant requests is limited to a subset of cores in a VM. Based on discussions with the .NET team, processor affinity was recommended as a generally good approach to mitigate the negative impact of memory access patterns with NUMA.
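The idea of pinning a process to a subset of cores can be illustrated with a short sketch. Note the substitution: the post describes Windows Job Objects, while this example uses the simpler `Process.ProcessorAffinity` property, which covers at most 64 cores in one mask. The core layout and helper names are assumptions.

```csharp
using System;
using System.Diagnostics;

// Builds a bitmask selecting 'count' consecutive cores starting at 'firstCore'.
static long BuildAffinityMask(int firstCore, int count)
{
    if (firstCore < 0 || count <= 0 || firstCore + count > 64)
        throw new ArgumentOutOfRangeException(nameof(count), "A single mask covers at most 64 cores.");
    long mask = 0;
    for (int core = firstCore; core < firstCore + count; core++)
        mask |= 1L << core;
    return mask;
}

// Pins the current process to the selected cores.
// Process.ProcessorAffinity is supported on Windows and Linux only.
static void PinCurrentProcess(long mask)
{
    if (OperatingSystem.IsWindows() || OperatingSystem.IsLinux())
        Process.GetCurrentProcess().ProcessorAffinity = (IntPtr)mask;
}

// Hypothetical layout: NUMA node 0 owns cores 0-7 and this process gets cores 4-7.
long gatewayMask = BuildAffinityMask(firstCore: 4, count: 4);
Console.WriteLine($"0x{gatewayMask:X}");   // 0xF0

// Only apply the mask when explicitly requested, since the machine
// running this sketch may not have these cores.
if (Array.IndexOf(Environment.GetCommandLineArgs(), "--pin") >= 0)
    PinCurrentProcess(gatewayMask);
```

Running several such processes, each with a disjoint mask inside one NUMA node, keeps each process's threads and memory close together.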

However, while several such processes exist in a VM, dynamic load balancing of connections across these processes presents a challenge. To resolve this, Azure Cosmos DB leverages .NET 5 and 6 features that enable dynamic connection multiplexing within a node. For TCP based protocols, .NET supports serializing connection state and ‘resuming’ the connection in another process. Similarly, Kestrel introduced the capability to load balance connections across multiple processes in .NET 6. Given these features, Azure Cosmos DB gateway processes can dynamically monitor load metrics and rebalance incoming connections across a large VM.
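One way to sketch the TCP hand-off is with `Socket.DuplicateAndClose`, a Windows-only API that serializes a socket into a `SocketInformation` for a target process. To stay self-contained, the sketch below hands the connection back to its own process. In a real deployment the `SocketInformation` would be sent to another process over an IPC channel, which is not shown, and the load balancing policy is left out entirely. The function name is hypothetical.

```csharp
using System;
using System.Net;
using System.Net.Sockets;
using System.Text;

// Accepts a loopback connection, serializes it with DuplicateAndClose,
// rebuilds it from the SocketInformation, and reads one message from it.
static string HandOffAndEcho()
{
    if (!OperatingSystem.IsWindows()) return "unsupported";   // API is Windows-only

    using var listener = new Socket(AddressFamily.InterNetwork, SocketType.Stream, ProtocolType.Tcp);
    listener.Bind(new IPEndPoint(IPAddress.Loopback, 0));     // port 0: let the OS pick
    listener.Listen(1);

    using var client = new Socket(AddressFamily.InterNetwork, SocketType.Stream, ProtocolType.Tcp);
    client.Connect(listener.LocalEndPoint!);
    Socket accepted = listener.Accept();

    // Serialize the connection for the target process; the local handle is closed.
    // The current process id is used here only to keep the example in one file.
    SocketInformation info = accepted.DuplicateAndClose(Environment.ProcessId);

    // In the target process: rebuild the socket and continue the conversation.
    using var resumed = new Socket(info);
    client.Send(Encoding.UTF8.GetBytes("ping"));
    byte[] buffer = new byte[16];
    int read = resumed.Receive(buffer);
    return Encoding.UTF8.GetString(buffer, 0, read);
}

string echoed = HandOffAndEcho();
Console.WriteLine(echoed);
```

The client side is unaware of the hand-off: it keeps using the same connection while a different socket object, and potentially a different process, services it.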

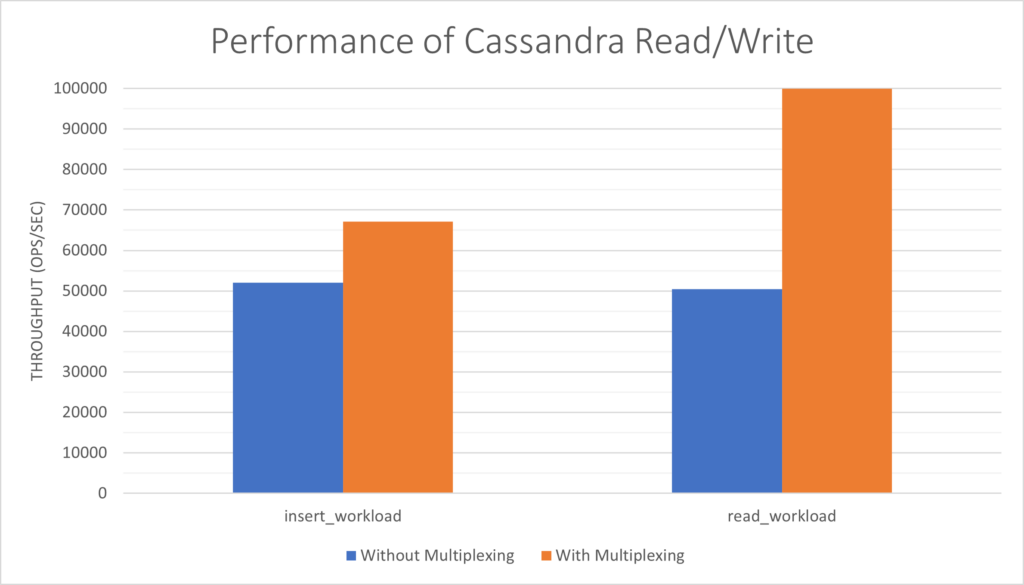

Figure 5: Throughput on a single process vs multiple processes with connection multiplexing for Cassandra API (higher is better)

As Figure 5 illustrates, introducing connection multiplexing yields near-linear scaling in throughput, because the strong affinity between processes and cores leads to better thread and memory locality. For write workloads, we did notice bottlenecks due to saturation outside of the API Gateway, and we’re working to resolve those bottlenecks soon.

Asynchronous Code Processing

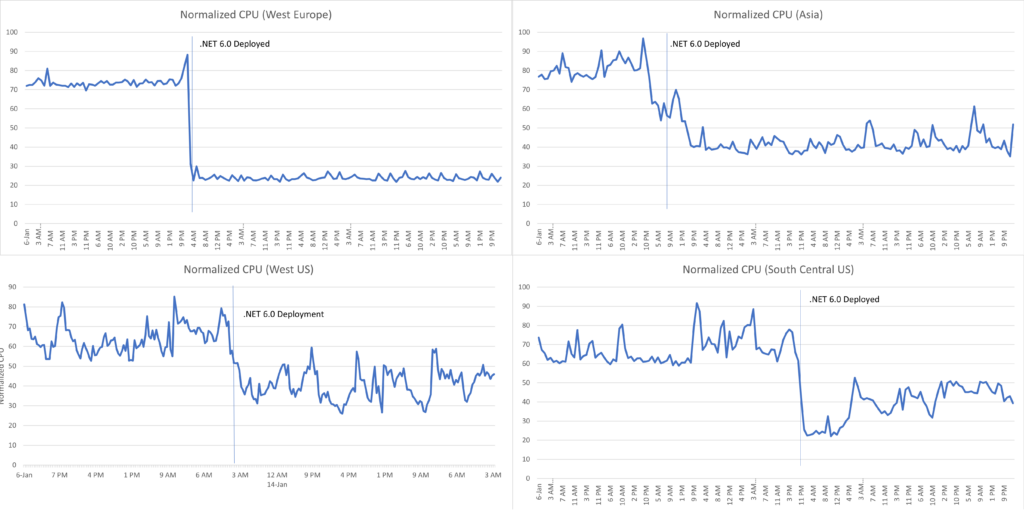

The API Gateway service is highly asynchronous. Improvements in async code APIs in .NET have delivered among the biggest performance improvements for Azure Cosmos DB. Numerous improvements due to additions such as ValueTask and optimizations in the framework around async state machine management have led to increased performance end to end with minimal changes in the application code. These were observed in every single .NET upgrade in the Azure Cosmos DB Gateway (from .NET Core 2.2 to 3.1, and again from .NET Core 3.1 to .NET 6.0).
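To illustrate why `ValueTask` helps in heavily asynchronous code, the sketch below shows a lookup that usually completes synchronously from a cache. The account-configuration scenario, the method names, and the simulated backend delay are assumptions made for this example.

```csharp
using System;
using System.Collections.Generic;
using System.Threading.Tasks;

var cache = new Dictionary<string, string>();
int slowLookups = 0;

// Simulated asynchronous lookup (hypothetical stand-in for a backend call).
async Task<string> LoadFromBackendAsync(string account)
{
    slowLookups++;
    await Task.Delay(10);
    return $"config-for-{account}";
}

async Task<string> LoadAndCacheAsync(string account)
{
    string value = await LoadFromBackendAsync(account);
    cache[account] = value;
    return value;
}

// Returns ValueTask<string>: a cache hit wraps the result directly,
// so no Task object is allocated on the hot path.
ValueTask<string> GetConfigAsync(string account)
{
    if (cache.TryGetValue(account, out var cached))
        return new ValueTask<string>(cached);
    return new ValueTask<string>(LoadAndCacheAsync(account));
}

string first = await GetConfigAsync("contoso");    // miss: goes to the backend
string second = await GetConfigAsync("contoso");   // hit: completes synchronously
Console.WriteLine($"{first} {second} lookups={slowLookups}");
// config-for-contoso config-for-contoso lookups=1
```

Each `ValueTask` is awaited exactly once here, which is the usage pattern the type is designed for. The plain `Dictionary` is adequate only because this example is single-threaded.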

Figure 6: Cluster level CPUs following the deployment of .NET 6.0

Summary

Azure Cosmos DB’s API Gateway is a low latency Azure service. It utilizes .NET in numerous ways to achieve its performance and latency requirements. Over the years, each .NET upgrade has yielded multiple benefits, both from new APIs that provide better ways of managing performance and from improvements to existing APIs and runtime behavior within the framework. We are actively working with the .NET team on adopting .NET 7 and look forward to even more high-impact performance features in upcoming .NET releases.

Thanks to Mei-Chin Tsai as co-author on this post. Mei-Chin was previously the engineering manager of the .NET Runtime team.

Thanks for the details. When you upgraded to .NET 6, did you also move to Linux?

Your reference to HTTP.sys indicates your servers are Windows. But I just want to confirm.

We did not move to Linux as part of our move to .NET 6. However, we have considered Linux with .NET 6 for some other parts of our product.

Thank you for the excellent case study!

I would like to ask you more about this. I could not find any information about this in the release notes or documentation.

What specific functions/settings are available for “the capability to load balance connections across multiple processes in .NET 6”?

At a high level, the .NET Socket.DuplicateAndClose API allows us to take a client socket and dispatch the serialized socket to a target process. When a standard TcpListener accepts a new socket, that socket can be dispatched to one of a set of other processes with DuplicateAndClose, using whatever load balancing policy you choose.

Additionally, ASP.NET Core's Kestrel implementation has an extensibility point for injecting a custom factory that supplies TCP sockets, which can be used to load balance Kestrel connections across processes as well.

Thank you, I understand. I did not know about the DuplicateAndClose API, so this was very helpful. 👍

Cool,

how about client side?

Will it reduce costs?

Congratulations! This is a great achievement! .NET 6 is awesome 😁🎉

It's awesome to learn about these results of moving to the newer version of .NET! Congratulations!