In this post, we’ll discuss the improvements we’ve been making to the Windows Console’s internal text buffer, enabling it to better store and handle Unicode and UTF-8 text.

Posts in the Windows Command-Line series:

This list will be updated as more posts are published:

- Command-Line Backgrounder

- The Evolution of the Windows Command-Line

- Inside the Windows Console

- Introducing the Windows Pseudo Console (ConPTY) API

- Unicode and UTF-8 Output Text Buffer [this post]

[Source: David Farrell’s “Building a UTF-8 encoder in Perl”]

The most visible aspect of a Command-Line Terminal is that it displays the text emitted from your shell and/or Command-Line tools and apps, in a grid of mono-spaced cells – one cell per character/symbol/glyph. Great, that’s simple. How hard can it be, right – it’s just letters? Noooo! Read-on!

Representing Text

Text is text is text. Or is it?

If you’re someone who speaks a language that originated in Western Europe (e.g. English, French, German, Spanish, etc.), chances are that your written alphabet is pretty homogeneous – 10 digits, 26 separate letters – upper & lower case = 62 symbols in total. Now add around 30 symbols for punctuation and you’ll need around 95 symbols in total. But if you’re from East Asia (e.g. Chinese, Japanese, Korean, Vietnamese, etc.) you’ll likely read and write text with a few more symbols … more than 7000 in total!

Given this complexity, how do computers represent, define, store, exchange/transmit, and render these various forms of text in an efficient, and standardized/commonly-understood manner?

In the beginning was ASCII

The dawn of modern digital computing was centered in the UK and the US, and thus English was the predominant language and alphabet used.

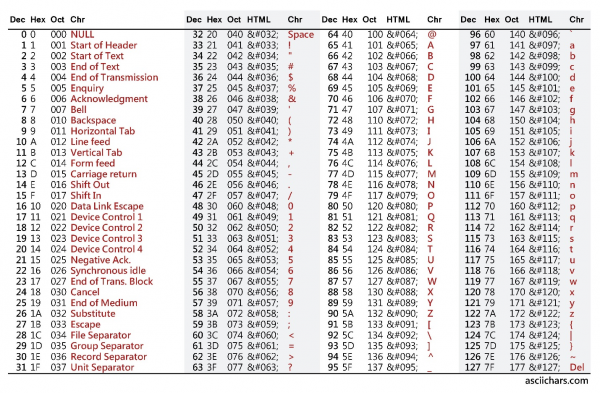

As we saw above, the ~95 characters of the English alphabet (and necessary punctuation) can be individually represented using 7-bit values (0-127), with room left-over for additional non-visible control codes.

In 1963, the American Standards Association (ASA), the body that later became the American National Standards Institute (ANSI), published the X3.4-1963 standard for the American Standard Code for Information Interchange (ASCII) – this became the basis of what we now know as the ASCII standard.

… and Microsoft gets a bad rap for naming things 😉

The initial X3.4-1963 standard left 28 values undefined and reserved for future use. Seizing the opportunity, the International Telegraph and Telephone Consultative Committee (CCITT, from French: Comité Consultatif International Téléphonique et Télégraphique) proposed a change to the ANSI layout which caused the lower-case characters to differ in bit pattern from the upper-case characters by just a single bit. This simplified character case detection/matching and the construction of keyboards and printers.
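The single-bit relationship between upper and lower case is easy to check for yourself. The following is only an illustrative Python sketch; the helper name `toggle_case` is made up for this example.

```python
# In ASCII, an upper-case letter and its lower-case partner differ only in bit 5 (0x20).
CASE_BIT = 0x20


def toggle_case(ch: str) -> str:
    """Flip the case of one ASCII letter by toggling a single bit (hypothetical helper)."""
    if not (ch.isascii() and ch.isalpha()):
        return ch  # leave digits, punctuation and non-ASCII text untouched
    return chr(ord(ch) ^ CASE_BIT)


# Print the 7-bit patterns side by side so the single differing bit is visible.
for upper, lower in zip("AZ", "az"):
    print(f"{upper}: {ord(upper):07b}   {lower}: {ord(lower):07b}")

print(toggle_case("a"), toggle_case("Q"))
```

Because only one bit changes, a case-insensitive comparison of two ASCII letters can simply mask that bit out before comparing.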

Over time, additional changes were made to some of the characters and control codes, until we ended up with the now well-established ASCII table of characters which is supported by practically every computing device in use today.

The rapid adoption of Computers in Europe presented a new challenge though: How to represent text in languages other than English. For example, how should letters with accents, umlauts, and additional symbols be represented?

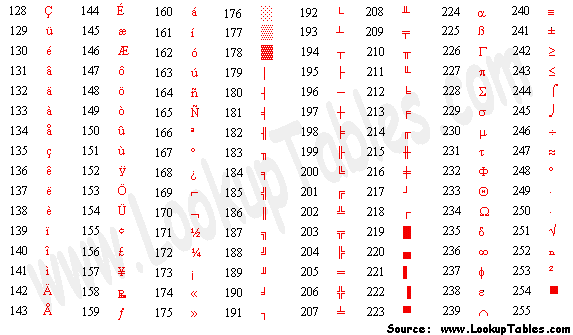

To accomplish this, the ASCII table was extended with the addition of an extra bit, making characters 8-bits long and adding 128 “extended characters” (values 0x80 to 0xFF).

But that still didn’t provide enough room to represent all the characters, glyphs and symbols required by computer users across the globe, many of whom needed to represent and display additional characters / glyphs.

So, Code pages were introduced.

Code Pages – a partial solution

Code pages define sets of characters for the “extended characters” from 0x80 – 0xff (and, in some cases, a few of the non-displaying characters between 0x00 and 0x1F). By selecting a different Code page, a Terminal can display additional glyphs for European languages and some block-symbols (see above), CJK text, Vietnamese text, etc.
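To see what “selecting a different Code page” means in practice, here is a small Python sketch that decodes the same byte under three code pages. The three codec names are Python's own and were picked only as examples. Character names are printed rather than glyphs so the output does not depend on your terminal's font.

```python
import unicodedata

# One byte from the "extended" range 0x80-0xFF.
raw = bytes([0xE9])

# Python codec names for three single-byte code pages (example choices only).
CODE_PAGES = ("cp437", "cp1252", "cp866")

for code_page in CODE_PAGES:
    ch = raw.decode(code_page)
    print(f"{code_page}: U+{ord(ch):04X} {unicodedata.name(ch)}")

# Bytes below 0x80 are plain ASCII and decode identically in all three.
ascii_bytes = b"Hello"
decoded = {cp: ascii_bytes.decode(cp) for cp in CODE_PAGES}
print(decoded)
```

The byte never changes; only the table used to interpret it does, which is why text written under one code page turns into the wrong glyphs when read under another.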

However, writing code to handle/swap Code pages, and the lack of any standardization for Code pages in general, made text processing and rendering difficult, error prone, and presented major interop & user-experience challenges.

Worse still, 128 additional glyphs don’t even come close to providing enough characters to represent some languages: For example, high-school level Chinese uses 2200 ideograms, with several hundred more in everyday use, and in excess of 7000 ideograms in total.

Clearly, code pages – additional sets of 128 chars – are not a scalable solution to this problem.

One approach to solving this problem was to add more bits – an extra 8-bits, in fact!

The Double Byte Character Set (DBCS) code-page approach uses two bytes to represent a single character. This gives an addressable space of 2^16 == 65,536 characters. However, despite attempts to standardize the Japanese Shift JIS encoding, and the variable-length ASCII-compatible EUC-JP encoding, DBCS code-page encodings were often riddled with issues and did not deliver a universal solution to the challenge of encoding text.
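As a quick illustration of a double-byte code page, the Python sketch below encodes an ASCII letter and a Hiragana letter with Python's `shift_jis` codec. Shift JIS is used here only because it is named above; the sample characters are arbitrary.

```python
# Sample characters: LATIN CAPITAL LETTER A and HIRAGANA LETTER A.
samples = ["A", "\u3042"]

sizes = {}
for ch in samples:
    encoded = ch.encode("shift_jis")
    sizes[ch] = len(encoded)
    print(f"U+{ord(ch):04X} -> 0x{encoded.hex()} ({len(encoded)} byte(s))")
```

Note that the ASCII letter still takes one byte while the Hiragana letter takes two, so code that walks such text has to inspect each lead byte to know where the next character starts.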

What we really needed was a Universal Code for text data.

Enter, Unicode

Unicode is a set of standards that defines how text is represented and encoded.

The design of Unicode was started in 1987 by engineers at Xerox and Apple. The initial Unicode-88 spec was published in August 1988, and has been continually refined and updated ever since, adding new character representations, additional language support, and even emoji 😊

For a great history of Unicode, read this!

Today, Unicode supports up to 1,112,064 valid “codepoints” each representing a single character / symbol / glyph / ideogram / etc. This should provide plenty of addressable codepoints for the future, especially considering that “Unicode 11 currently defines 137,439 characters covering 146 modern and historic scripts, as well as multiple symbol sets and emoji” [source: Wikipedia, Oct 2018]

“1.2 million codepoints should be enough for anyone” – source: Rich Turner, Oct 2018

Unicode text data can be encoded in many ways, each with its own strengths and weaknesses:

| Encoding | Notes | # Bytes per codepoint | Pros | Cons |
| --- | --- | --- | --- | --- |
| UTF-32 | Each valid 32-bit value is a direct index to an individual Unicode codepoint | 4 | No decoding required | Consumes a lot of space |
| UTF-16 | Variable-length encoding, requiring either one or two 16-bit values to represent each codepoint | 2/4 | Simple decoding | Consumes 2 bytes even for ASCII text. Can rapidly end-up requiring 4 bytes |
| UCS-2 | Precursor to UTF-16. Fixed-length 16-bit encoding used internally by Windows, Java, and JavaScript | 2 | Simple decoding | Consumes 2 bytes even for ASCII text. Unable to represent some codepoints |
| UTF-8 | Variable-length encoding. Requires between one and four bytes to represent all Unicode codepoints | 1-4 | Efficient, granular storage requirements | Moderate decoding cost |
| Others | Other encodings exist, but are not in widespread use | N/A | N/A | N/A |

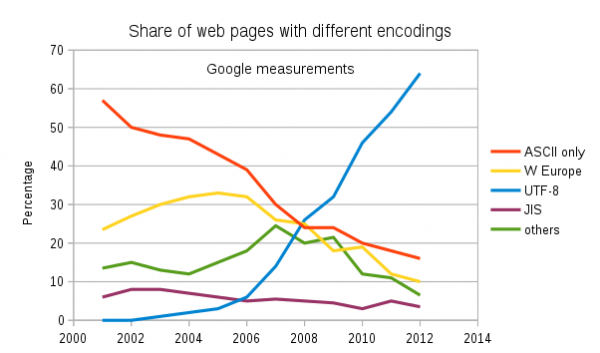
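The byte counts in the table can be checked with a few lines of Python. This is just a sketch using Python's built-in codecs; the sample characters were chosen arbitrarily to cover one, two, three and four UTF-8 bytes.

```python
# Sample characters, written as escapes so the source stays ASCII-only.
samples = {
    "LATIN CAPITAL LETTER A": "A",
    "LATIN SMALL LETTER E WITH ACUTE": "\u00e9",
    "CJK ideograph U+4E2D": "\u4e2d",
    "GRINNING FACE": "\U0001F600",
}


def encoded_sizes(ch: str) -> dict:
    """Return the number of bytes one character needs in each encoding."""
    # The "-le" codecs are used so Python does not prepend a byte-order mark.
    return {enc: len(ch.encode(enc)) for enc in ("utf-8", "utf-16-le", "utf-32-le")}


for label, ch in samples.items():
    print(f"U+{ord(ch):04X} {label}: {encoded_sizes(ch)}")
```

UTF-32 is always 4 bytes, UTF-16 is 2 bytes until a codepoint goes above U+FFFF, and UTF-8 grows one byte at a time from 1 to 4.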

Due largely to its flexibility and storage/transmission efficiency, UTF-8 has become the predominant text encoding mechanism on the Web: As of today (October 2018), 92.4% of all Web Pages are encoded in UTF-8!

It’s clear, therefore, that anything that processes text should at least be able to support UTF-8 text.

To learn more about text encoding and Unicode, read Joel Spolsky’s great writeup here: The Absolute Minimum Every Software Developer Absolutely, Positively Must Know About Unicode and Character Sets (No Excuses!)

Console – built in a pre-Unicode dawn

Alas, the Windows Console is not (currently) able to support UTF-8 text!

Windows Console was created way back in the early days of Windows, back before Unicode itself existed! Back then, a decision was made to represent each text character as a fixed-length 16-bit value (UCS-2). Thus, the Console’s text buffer contains 2-byte wchar_t values per grid cell, x columns by y rows in size.

While this design has supported the Console for more than 25 years, the rapid adoption of UTF-8 has started to cause problems:

One problem, for example, is that because UCS-2 is a fixed-width 16-bit encoding, it is unable to represent all Unicode codepoints.
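A rough way to see which characters a fixed-width 16-bit cell can and cannot hold is to count UTF-16 code units. The Python sketch below does that; both helper names are hypothetical and exist only for this example.

```python
def utf16_unit_count(ch: str) -> int:
    """Number of 16-bit code units needed to store one character as UTF-16."""
    return len(ch.encode("utf-16-le")) // 2


def fits_in_one_unit(ch: str) -> bool:
    """True if the character fits in a single 16-bit value, as UCS-2 requires."""
    return utf16_unit_count(ch) == 1


for ch in ("A", "\u00e9", "\u4e2d", "\U0001F600"):
    print(f"U+{ord(ch):04X}: {utf16_unit_count(ch)} unit(s), fits={fits_in_one_unit(ch)}")
```

Everything up to U+FFFF fits in one unit; anything above it needs two, and a buffer with exactly one 16-bit slot per cell has nowhere to put the second one.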

Another related but separate problem with the Windows Console is that because GDI is used to render Console’s text, and GDI does not support font-fallback, Console is unable to display glyphs for codepoints that don’t exist in the currently selected font!

Font-fallback is the ability to dynamically look-up and load a font that is similar-to the currently selected font, but which contains a glyph that’s missing from the currently selected font.

These combined issues are why Windows Console cannot (currently) display many complex Chinese ideograms and cannot display emoji.

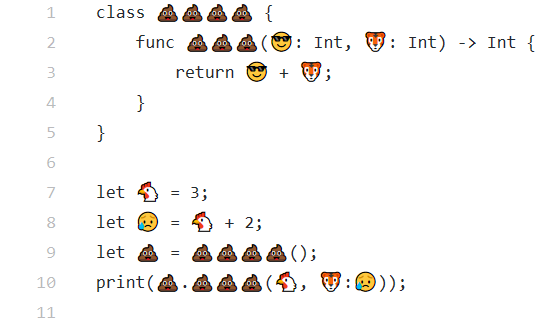

Emoji? SRSLY? This might at first sound trivial but is an issue since some tools now emit emoji to, for example, indicate test results, and some programming languages’ source code supports/requires Unicode, including emoji!

[Source: You can use emoji characters in Swift variable, constant, function, and class names]

But I digress …

Text Attributes

In addition to storing the text itself, a Console/Terminal must store the foreground and background color, and any other per-cell information required.

These attributes must be stored efficiently and quickly – there’s no need to store background and foreground information for each cell individually, especially since most Console apps/tools output pretty uniformly colored text, but storing and retrieving attributes must not unnecessarily hinder rendering performance.
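This post does not spell out the storage scheme, so treat what follows as an assumption rather than a description of the Console's code: one common way to avoid storing colors per cell is to run-length encode each row's attributes. A minimal Python sketch, with made-up attribute values and function names:

```python
from itertools import groupby


def compress_attributes(row_attrs):
    """Collapse a row of per-cell attributes into (attribute, run_length) pairs."""
    return [(attr, len(list(group))) for attr, group in groupby(row_attrs)]


def attribute_at(runs, column):
    """Find the attribute for one column by walking the runs from the left."""
    for attr, length in runs:
        if column < length:
            return attr
        column -= length
    raise IndexError("column is outside the row")


# An 80-column row: grey-on-black text containing one red-on-black word.
row = ["grey/black"] * 10 + ["red/black"] * 5 + ["grey/black"] * 65
runs = compress_attributes(row)
print(runs)
print(attribute_at(runs, 12))
```

Here 80 per-cell entries shrink to three runs, and a uniformly colored row shrinks to one. The trade-off is that a lookup has to walk the runs, which stays cheap as long as rows contain only a handful of color changes.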

Let’s dig in and find out how the Console handles all this! 😊

Modernizing the Console’s text buffer

As discussed in the previous post in this series, the Console team have been busy overhauling the Windows Console’s internals for the last several Win10 releases, carefully modernizing, modularizing, simplifying, and improving the Console’s code & features … while not noticeably sacrificing performance, and not changing current behaviors.

For each major change, we evaluate and prototype several approaches, and measure the Console’s performance, memory footprint, power consumption, etc. to figure-out the best real-world solution. We took the same approach for the buffer improvements work, which was started before 1803 shipped and continues beyond 1809.

The key issue to solve was that the Console previously stored each cell’s text data as UCS-2 fixed-length 2-byte wchar_t values.

To fully support all Unicode characters we needed a more flexible approach that added no noticeable processing or memory overhead for the general case, but was able to dynamically handle additional bytes of text data for cells that contain multi-byte Unicode characters.

We examined several approaches, and prototyped & measured a few, which helped us disqualify some potential approaches that turned out to be ineffective in real-world use.

Adding Unicode Support

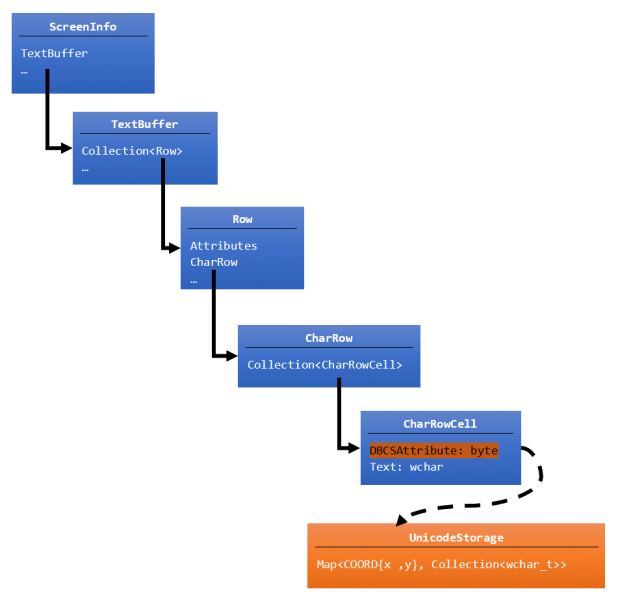

Ultimately, we arrived at the following architecture:

From the top (original buffer’s blue boxes):

- ScreenInfo – maintains information about the viewport, etc., and contains a TextBuffer

- TextBuffer – represents the Console’s text area as a collection of rows

- Row – uniquely represents each CharRow in the console and the formatting attributes applied to each row

- CharRow – contains a collection of CharRowCells, and the logic and state to handle row wrapping & navigation

- CharRowCell – contains the actual cell’s text, and a DbcsAttribute byte containing cell-specific flags


Several key changes were made to the Console’s buffer implementation (indicated in orange in the diagram above), including:

- The Console’s CharRowCell::DbcsAttribute stores formatting information about how wide the text data is for DBCS chars. Not all bits were in use so an additional flag was added to indicate if the text data for a cell exceeds one 16-bit wchar_t in length. If this flag is set, the Console will fetch the text data from the UnicodeStorage.

- UnicodeStorage was added, which contains a map of {row:column} coordinates to a collection of 16-bit wchar values. This allows the buffer to store an arbitrary number of wchar values for each individual cell in the Console that needs to store additional Unicode text data, ensuring that the Console remains impervious to expansion to the scope and range of Unicode text data in the future. And because UnicodeStorage is a map, the lookup cost of the overflow text is constant and fast!

So, imagine if a cell needed to display a Unicode grinning face emoji: 😀

This emoji is the codepoint U+1F600, which is encoded in UTF-8 as the four bytes 0xF0 0x9F 0x98 0x80, and in UTF-16 as the surrogate pair 0xD83D 0xDE00. Clearly, this emoji glyph won’t fit into a single 2-byte wchar_t. So, in the new buffer, the CharRowCell’s DbcsAttribute’s “overrun” flag will be set, indicating that the Console should look up the UTF-16 encoded data for that {row:col} stored in the UnicodeStorage’s map container.
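To make the numbers concrete, here is a small Python sketch that derives the UTF-8 bytes and the two UTF-16 code units for U+1F600. The function name `to_surrogate_pair` is hypothetical; the arithmetic is the standard UTF-16 surrogate calculation.

```python
def to_surrogate_pair(codepoint: int) -> tuple:
    """Split a codepoint above U+FFFF into its UTF-16 high and low surrogates."""
    if not 0x10000 <= codepoint <= 0x10FFFF:
        raise ValueError("only codepoints above U+FFFF need a surrogate pair")
    offset = codepoint - 0x10000       # leaves a 20-bit value
    high = 0xD800 + (offset >> 10)     # top 10 bits
    low = 0xDC00 + (offset & 0x3FF)    # bottom 10 bits
    return high, low


grinning_face = "\U0001F600"
utf8_bytes = grinning_face.encode("utf-8")
high, low = to_surrogate_pair(ord(grinning_face))

print("UTF-8 bytes: ", utf8_bytes.hex())
print("UTF-16 units:", hex(high), hex(low))
```

Either way you count it, the emoji needs 32 bits of storage, which is twice what a single 16-bit cell value offers.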

A key point to make about this approach is that we don’t need any additional storage if a character can be represented as a single 8/16-bit value: We only need to store additional “overrun” text when needed. This ensures that for the most common case – storing ASCII and simpler Unicode glyphs, we don’t increase the amount of data we consume, and don’t negatively impact performance.
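The idea can be sketched in a few lines of Python. This is a toy model, not the Console's actual C++ implementation: the class and field names are invented, and what the real buffer keeps in a cell whose text has overflowed is not described here, so the sketch simply stores 0.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    unit: int = 0x20        # one 16-bit code unit; a space by default
    overrun: bool = False   # stand-in for the extra DbcsAttribute flag


class SketchBuffer:
    """Toy grid of cells plus a map for text that needs more than one code unit."""

    def __init__(self, rows: int, cols: int):
        self.cells = [[Cell() for _ in range(cols)] for _ in range(rows)]
        self.overflow = {}  # {(row, col): [16-bit code units]}

    def write(self, row: int, col: int, ch: str) -> None:
        data = ch.encode("utf-16-le")
        units = [int.from_bytes(data[i:i + 2], "little") for i in range(0, len(data), 2)]
        cell = self.cells[row][col]
        if len(units) == 1:
            # Common case: the text fits in the cell, so no extra storage is used.
            cell.unit, cell.overrun = units[0], False
            self.overflow.pop((row, col), None)  # drop any stale overflow entry
        else:
            # Rare case: flag the cell and keep the code units in the map.
            cell.unit, cell.overrun = 0, True
            self.overflow[(row, col)] = units

    def read(self, row: int, col: int) -> str:
        cell = self.cells[row][col]
        units = self.overflow[(row, col)] if cell.overrun else [cell.unit]
        return b"".join(u.to_bytes(2, "little") for u in units).decode("utf-16-le")


buf = SketchBuffer(rows=2, cols=4)
buf.write(0, 0, "A")
buf.write(0, 1, "\U0001F600")
print(len(buf.overflow), "cell(s) use overflow storage")
```

Only the emoji cell creates a map entry; the ASCII cell and the untouched cells cost nothing beyond their single 16-bit value and flag.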

Great, so when do I get to try this out?

If you’re running Windows 10 October 2018 Update (version 1809), you’re already running this new buffer!

We tested the new buffer prior to including it quietly in Insider builds in the months leading-up to 1809 and made some key improvements before 1809 was shipped.

Are we there yet?

Not quite!

We’re also working to further improve the buffer implementation in subsequent OS updates (and via the Insider builds that precede each OS release).

The changes above only allow for the storage of a single codepoint per CharRowCell. More complex glyphs that require multiple codepoints are not yet supported, but we’re working on adding this capability in a future OS release.

The current changes also don’t cover what is required for our “processed input mode” that presents an editable input line for applications like CMD.exe. We are planning and actively updating the code for popup windows, command aliases, command history, and the editable input line itself to support full true Unicode as well.

And don’t go trying to display emoji just yet – that requires a new rendering engine that supports font-fallback – the ability to dynamically find, load, and render glyphs from fonts other than the currently selected font. And that’s the subject of a whole ‘nother post for another time 😉.

Stay tuned for more posts soon!

We look forward to hearing your thoughts – feel free to sound-off below, or ping Rich on Twitter.

Hi Rich,

I just wanted to share this post:

https://developercommunity.visualstudio.com/content/problem/136180/utf-8-save-as-without-signature-default-request-to.html

Though it is to do with Visual Studio, one of the issues I present is the possible difficulties working in the Pre-installation environment, especially when Australia has the possibility of two different OEM CHCP.