Artificial Intelligence is evolving rapidly. What began as simple prompt-and-response systems is now transforming into fully autonomous, agentic AI architectures capable of reasoning, orchestrating tools, interacting with enterprise data, and invoking external systems dynamically. While these capabilities unlock enormous business potential, they also introduce an entirely new category of security challenges.

Organizations are no longer asking only:

“How do we build AI systems?”

They are now asking:

“How do we build AI systems securely, responsibly, and with governance built into every layer?”

This is where security architecture becomes critical.

Modern AI systems introduce threats that traditional applications were never designed to handle. Prompt injection, data poisoning, over-privileged agents, hidden data exfiltration, unauthorized tool execution, and lack of operational traceability are becoming real concerns as enterprises move toward production-scale AI adoption.

To address these emerging risks, the MAESTRO framework offers a layered threat modeling approach designed specifically for AI and Agentic AI systems. At the same time, Microsoft SQL introduces a set of AI-enabled capabilities that bring AI closer to enterprise data while maintaining strong governance, observability, and security boundaries.

This combination creates an interesting architectural opportunity:

Microsoft SQL is no longer just a database. It becomes a governed execution boundary for enterprise AI systems.

Understanding the MAESTRO Framework

The MAESTRO framework provides a structured way to think about security risks across AI systems. Instead of viewing AI as a single application component, MAESTRO breaks the architecture into multiple operational layers, each with its own attack surface and security concerns.

These layers include:

- Foundation Models

- Data Operations

- Agent Frameworks

- Deployment & Infrastructure

- Evaluation & Observability

- Security & Compliance

- Agent Ecosystem

What makes MAESTRO particularly important is that it recognizes a shift in how applications behave. Traditional threat modeling frameworks such as STRIDE were designed around predictable application behavior, predefined execution paths, and relatively static trust boundaries. Agentic AI systems follow a fundamentally different operating model: they make decisions and execute actions at runtime by combining user input, retrieved data, tools, and external system interactions. As a result, the attack surface is more dynamic and less deterministic than that of traditional applications. Frameworks such as MAESTRO help organizations evaluate these emerging risks across the full AI operational stack rather than focusing solely on conventional application threats.

Understanding the attack surface is only the first step. The next challenge is determining how to reduce risk across these interconnected layers. Because AI systems span data, models, agents, infrastructure, and external services, organizations require security controls that operate across multiple boundaries simultaneously.

Why Defense-in-Depth Matters for AI Systems

One of the biggest misconceptions in AI security is the idea that a single security control can “solve” AI risk. In reality, AI systems require layered protection strategies because attacks can occur across multiple boundaries simultaneously.

An attacker may manipulate prompts, poison retrieval data, abuse delegated agent permissions, or exploit infrastructure misconfigurations. Preventing every attack entirely may not always be possible.

Instead, modern AI security focuses on:

- reducing blast radius

- enforcing least privilege

- maintaining observability

- constraining execution pathways

- preserving accountability

This is the core principle behind Defense-in-Depth.

In AI systems, Defense-in-Depth means applying security controls across:

- data access

- model interaction

- execution pathways

- infrastructure

- telemetry

- governance

- compliance

The goal is not simply prevention. The goal is resilience.

This is precisely where modern data platforms begin to play a much larger role in AI security architecture. As AI systems move closer to enterprise data, the database itself becomes a critical enforcement boundary for governance, observability, and controlled execution.

Microsoft SQL and the Rise of Agentic AI

Microsoft SQL introduces several capabilities that position it as a strong platform for AI-enabled and Agentic AI solutions.

Historically, AI systems have often required organizations to move enterprise data into external AI platforms or standalone vector databases. Microsoft SQL changes this model by bringing AI capabilities directly into the data platform itself.

New capabilities such as VECTOR support, DiskANN-based vector indexing and search, external model integration, REST endpoint invocation, and native SQL AI functions allow organizations to build Retrieval-Augmented Generation (RAG) systems and agent-driven workflows while keeping governance close to the data layer.

More importantly, Microsoft SQL applies decades of enterprise-grade security investment directly to AI-enabled workflows.

Rather than treating AI as a disconnected external system, Microsoft SQL allows organizations to govern AI interactions using:

- encryption

- auditing

- row-level security

- least-privilege execution

- telemetry

- compliance controls

- tamper-evident ledgers

This applies across multiple deployment models including:

- Microsoft SQL Server 2025

- Microsoft SQL in Azure

- Microsoft SQL in Azure MI

- Microsoft SQL in Fabric

- Microsoft SQL in Azure VM

Some capabilities such as Microsoft Defender for SQL, Azure Arc-enabled SQL Server, and Microsoft Purview are ecosystem services rather than core SQL engine capabilities, but they extend the same defense-in-depth model into hybrid and cloud-connected environments.

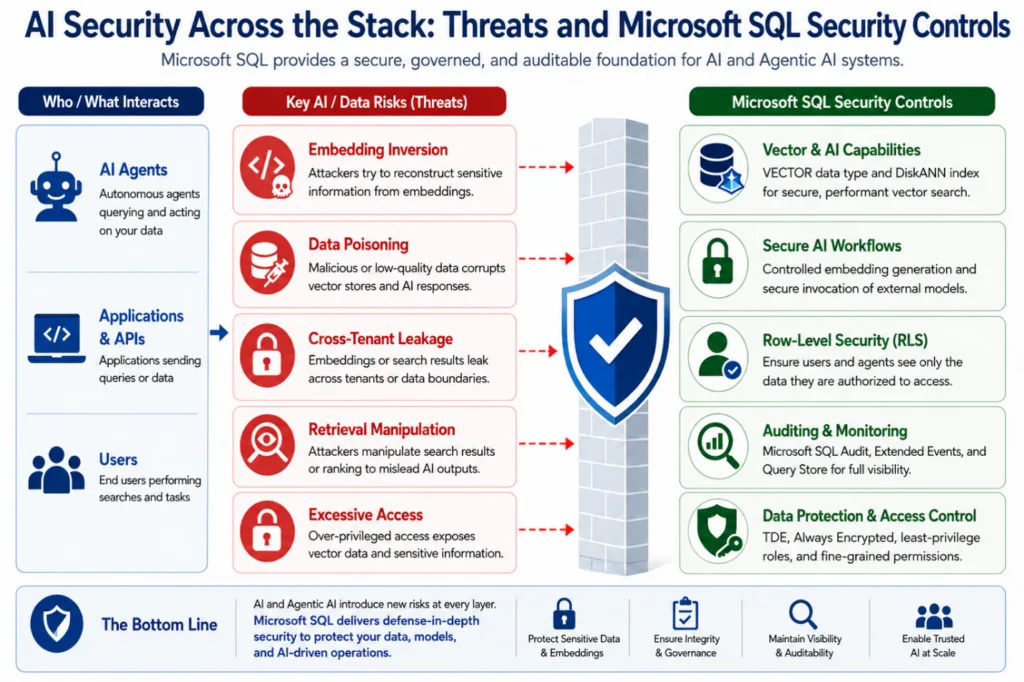

To better understand how Microsoft SQL aligns with defense-in-depth principles for AI systems, we can map Microsoft SQL security capabilities across each layer of the MAESTRO stack. This helps illustrate how database-native controls contribute to securing modern AI and Agentic AI architectures.

Microsoft SQL Security Across the MAESTRO Stack

The diagram below provides a high-level view of how Microsoft SQL participates in securing modern AI and Agentic AI architectures. As AI systems interact with enterprise data, vector search, external models, and autonomous agents, Microsoft SQL becomes a critical enforcement boundary for security, governance, observability, and controlled execution.

In the following sections, we examine the security threats associated with each MAESTRO layer, why they matter in AI systems, and how Microsoft SQL helps mitigate risk through built-in security and governance capabilities.

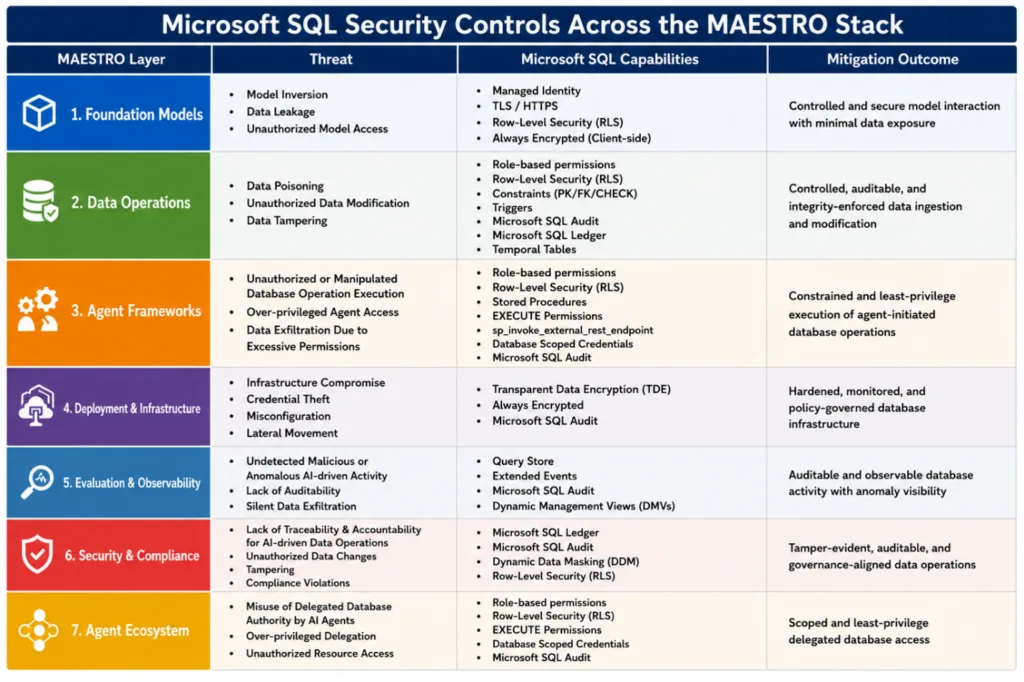

Foundation Models: Protecting Sensitive Data Interactions

Foundation model interactions frequently involve highly sensitive enterprise information including prompts, embeddings, retrieval data, and generated outputs. Without proper controls, these interactions can introduce risks such as data leakage, unauthorized model access, and exposure of sensitive information.

Microsoft SQL helps mitigate these risks by integrating model interactions directly into governed database workflows.

Capabilities such as CREATE EXTERNAL MODEL allow organizations to integrate model endpoints, including locally hosted models, into SQL-based workflows, while sp_invoke_external_rest_endpoint provides controlled and auditable outbound model invocation. Combined with encryption technologies such as Always Encrypted and Transparent Data Encryption (TDE), Microsoft SQL helps ensure that sensitive enterprise data remains protected throughout the AI interaction lifecycle.

Row-Level Security and Dynamic Data Masking further restrict exposure of sensitive data to only authorized users and applications.
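To make the effect of these two controls concrete, here is a minimal conceptual sketch in Python. It is not how the SQL engine implements either feature. The `Row` and `Caller` types, the `tenant` column, and the `can_unmask` flag are all hypothetical names introduced for illustration. The sketch filters rows by the caller's tenant (the idea behind a row-level filter predicate) and masks email values for callers without the unmask permission (the idea behind data masking) before any text is assembled into a model prompt.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Row:
    tenant: str      # hypothetical column used by the row filter
    customer: str
    email: str       # hypothetical sensitive column


@dataclass(frozen=True)
class Caller:
    tenant: str
    can_unmask: bool = False  # assumed permission flag, for illustration only


def mask_email(email: str) -> str:
    """Keep the first character and the top-level suffix, hide the rest."""
    local, _, domain = email.partition("@")
    suffix = domain.rsplit(".", 1)[-1] if "." in domain else ""
    masked = f"{local[:1]}XXX@XXXX"
    return f"{masked}.{suffix}" if suffix else masked


def visible_rows(rows: list[Row], caller: Caller) -> list[Row]:
    """Apply the tenant filter first, then mask emails if not permitted."""
    result = []
    for row in rows:
        if row.tenant != caller.tenant:  # rows of other tenants stay invisible
            continue
        email = row.email if caller.can_unmask else mask_email(row.email)
        result.append(Row(row.tenant, row.customer, email))
    return result


def build_prompt_context(rows: list[Row], caller: Caller) -> str:
    """Only filtered and masked values ever reach the prompt text."""
    return "\n".join(f"{r.customer} <{r.email}>" for r in visible_rows(rows, caller))


ROWS = [
    Row("contoso", "Ana", "ana@contoso.com"),
    Row("fabrikam", "Ben", "ben@fabrikam.org"),
]
```

The point of the design is ordering: filtering and masking happen before prompt construction, so a manipulated prompt cannot ask the model to reveal values it never received.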

Data Operations: Reducing the Risk of Data Poisoning and Tampering

AI systems are only as trustworthy as the data they consume.

One of the most significant threats in AI systems is data poisoning — the introduction of malicious or misleading data designed to manipulate downstream model behavior or retrieval results. Unlike traditional corruption attacks, poisoned data often appears legitimate, making detection difficult.

Microsoft SQL does not inherently understand semantic correctness or identify poisoned embeddings directly. However, it provides strong governance and integrity controls that significantly reduce the likelihood and impact of unauthorized data modification.

Role-based permissions, Row-Level Security, constraints, triggers, and audit logging help ensure that only authorized entities can insert or modify data. SQL Ledger adds cryptographically verifiable integrity guarantees, while temporal tables preserve historical versions of records for forensic analysis and recovery.
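The general idea behind tamper evidence can be illustrated with a simplified hash chain. The sketch below is a conceptual Python example under stated assumptions, not the actual SQL Ledger design: the record layout, the `GENESIS` starting value, and the function names are all hypothetical. Each record's hash commits to the previous hash, so changing an earlier record breaks verification from that point on.

```python
from __future__ import annotations

import hashlib
import json

GENESIS = "0" * 64  # assumed starting value for the chain


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the previous digest together with a canonical form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append(chain: list[dict], payload: dict) -> None:
    """Append a record whose hash commits to every earlier record."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"payload": payload, "hash": entry_hash(prev, payload)})


def verify(chain: list[dict]) -> int | None:
    """Return the index of the first inconsistent record, or None if intact."""
    prev = GENESIS
    for index, record in enumerate(chain):
        if record["hash"] != entry_hash(prev, record["payload"]):
            return index
        prev = record["hash"]
    return None
```

A chain like this only detects tampering if a trusted copy of the latest hash is kept somewhere the attacker cannot rewrite. Otherwise the whole chain could be recomputed after a modification.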

VECTOR support and DiskANN indexing enable scalable vector search capabilities while maintaining governance within the database boundary itself.

Agent Frameworks: Constraining AI Execution Boundaries

Agentic AI systems introduce a fundamentally different execution model compared to traditional applications. AI agents can dynamically invoke tools, generate queries, and orchestrate workflows autonomously.

This flexibility creates new risks including unauthorized database operations, over-privileged agent access, and unintended data exfiltration.

Microsoft SQL helps constrain these risks through strict execution boundaries.

Rather than allowing unrestricted query execution, organizations can expose controlled database operations through stored procedures and permission-scoped execution pathways. Role-based permissions, Row-Level Security, EXECUTE permissions, and Database Scoped Credentials help ensure that agents operate only within explicitly authorized boundaries.

Even if an agent is manipulated through prompt injection or tool misuse, Microsoft SQL helps reduce blast radius by enforcing least-privilege access controls and auditable execution pathways.
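The pattern of exposing named operations instead of free-form queries can be sketched as follows. This is a conceptual Python illustration, not database code: the two functions stand in for stored procedures, and the role names, the `GRANTS` mapping, and the `AUDIT_LOG` list are hypothetical. Every request is checked against an explicit grant and recorded, including denied attempts.

```python
from __future__ import annotations

from typing import Callable


# Hypothetical operations standing in for stored procedures.
def get_order_status(order_id: int) -> str:
    return f"order {order_id}: shipped"


def delete_customer(customer_id: int) -> str:
    return f"customer {customer_id} deleted"


PROCEDURES: dict[str, Callable[..., str]] = {
    "get_order_status": get_order_status,
    "delete_customer": delete_customer,
}

# Assumed role-to-procedure grants; the support agent cannot delete anything.
GRANTS: dict[str, frozenset[str]] = {
    "support_agent": frozenset({"get_order_status"}),
    "admin": frozenset({"get_order_status", "delete_customer"}),
}

AUDIT_LOG: list[tuple[str, str, bool]] = []


class PermissionDenied(Exception):
    """Raised when a role requests a procedure it was not granted."""


def execute(role: str, procedure: str, **params) -> str:
    """Run a named procedure only if the role was explicitly granted it."""
    allowed = procedure in PROCEDURES and procedure in GRANTS.get(role, frozenset())
    AUDIT_LOG.append((role, procedure, allowed))  # denied attempts are logged too
    if not allowed:
        raise PermissionDenied(f"{role} may not execute {procedure}")
    return PROCEDURES[procedure](**params)
```

With this shape, a prompt-injected request for `delete_customer` from the `support_agent` role fails at the boundary and leaves an audit entry, regardless of what the model was persuaded to ask for.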

Deployment and Infrastructure: Extending Security Beyond the Database

AI-enabled systems often span hybrid infrastructure, cloud services, APIs, vector indexes, and distributed execution environments. Infrastructure compromise, credential theft, misconfiguration, and lateral movement remain serious operational concerns.

Microsoft SQL contributes to infrastructure defense through encryption, auditing, and governance capabilities that help protect sensitive enterprise data even if underlying systems are compromised.

Transparent Data Encryption (TDE) and Always Encrypted reduce exposure of sensitive information: TDE encrypts data at rest, while Always Encrypted keeps selected columns encrypted in transit and during query processing. Microsoft SQL Audit provides operational traceability across database activity.

In hybrid and cloud-connected deployments, ecosystem services such as Microsoft Defender for SQL and Azure Arc-enabled SQL Server extend monitoring, policy governance, and anomaly detection capabilities across distributed environments.

Evaluation and Observability: Maintaining Visibility into AI-Driven Activity

One of the most important principles in AI security is visibility.

AI systems may generate unexpected queries, anomalous access patterns, or hidden execution behavior that traditional monitoring solutions were never designed to detect.

Microsoft SQL provides extensive telemetry and observability capabilities that help organizations monitor AI-driven database activity.

Query Store preserves historical execution behavior, Extended Events provide detailed runtime telemetry, and Dynamic Management Views expose operational state and execution characteristics. Microsoft SQL Audit adds traceability for security-relevant actions and operational analysis.

Together, these capabilities allow organizations to investigate suspicious behavior, identify anomalous database operations, and maintain observability across AI-enabled workflows.
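As one small example of what such an investigation might look like once telemetry has been exported, the Python sketch below flags accesses that fall outside a principal's historical baseline. The `(principal, object)` pair is a hypothetical, simplified record shape chosen for illustration, not the actual audit record schema, and the object names are made up.

```python
from __future__ import annotations

from collections import defaultdict


def build_baseline(history: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Collect the set of objects each principal has touched historically."""
    baseline: dict[str, set[str]] = defaultdict(set)
    for principal, obj in history:
        baseline[principal].add(obj)
    return dict(baseline)


def flag_unfamiliar_access(
    baseline: dict[str, set[str]], recent: list[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Return recent events where a principal touches an object outside its baseline."""
    return [(p, o) for p, o in recent if o not in baseline.get(p, set())]
```

A principal with no history at all is flagged on every access, which is usually the desired behavior for a newly introduced agent identity.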

Security and Compliance: Enforcing Accountability and Trust

Enterprise AI systems require more than operational security controls. They also require accountability, governance, traceability, and integrity assurance.

Microsoft SQL provides strong compliance and governance capabilities that align naturally with these requirements. It includes SQL Ledger, which introduces tamper-evident records to support integrity verification and non-repudiation. In addition, Microsoft SQL Audit enables operational traceability, while Row-Level Security and Dynamic Data Masking enforce controlled data visibility policies.

In larger enterprise environments, Microsoft Purview extends governance capabilities through lineage tracking, classification, and policy management.

Together, these capabilities help organizations ensure that AI-driven data operations remain observable, attributable, and governance-aligned.

Agent Ecosystem: Securing Delegated Authority

As AI systems become increasingly autonomous, agents frequently operate using delegated permissions on behalf of users, applications, or external systems.

Improperly scoped access can lead to over-privileged agents and unintended resource access.

Microsoft SQL helps constrain delegated authority through fine-grained permission models including Row-Level Security, EXECUTE permissions, Database Scoped Credentials, and audit logging.

These controls help ensure that AI agents only access explicitly authorized resources while maintaining traceability across delegated operations.
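One way to reason about delegated authority is that an agent's effective permissions are the intersection of what the user holds and what the agent has been scoped to. The Python sketch below illustrates that rule under stated assumptions: the `Delegation` type, the permission names, and the trail format are hypothetical and do not correspond to any SQL Server object.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Delegation:
    """An agent acting on behalf of a user, with an explicit scope."""

    user: str
    agent: str
    user_permissions: frozenset[str]
    agent_scope: frozenset[str]

    def effective_permissions(self) -> frozenset[str]:
        # The agent can never exceed the user, and never exceed its own scope.
        return self.user_permissions & self.agent_scope


def authorize(delegation: Delegation, permission: str, trail: list[dict]) -> bool:
    """Decide one request and record who asked on whose behalf."""
    allowed = permission in delegation.effective_permissions()
    trail.append(
        {
            "user": delegation.user,
            "agent": delegation.agent,
            "permission": permission,
            "allowed": allowed,
        }
    )
    return allowed
```

Recording both the user and the agent on every decision keeps delegated operations attributable: a denied request shows which identity chain attempted it, not just that something was refused.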

Microsoft SQL Security Controls Across the MAESTRO Stack — Summary

The following summary provides a consolidated view of the threats across each MAESTRO layer and the Microsoft SQL capabilities that help enforce security, governance, observability, and controlled execution boundaries for AI systems.

Building Trusted AI Starts with the Data Platform

As organizations move toward Agentic AI architectures, security, governance, and observability can no longer be optional. AI systems must be built on platforms that not only enable intelligence, but also enforce trust, accountability, and controlled execution.

Microsoft SQL brings AI closer to enterprise data while extending the same enterprise-grade security capabilities that organizations already rely on for mission-critical workloads. From vector search and external model integration to auditing, encryption, least-privilege access, and tamper-evident controls, Microsoft SQL provides a strong foundation for building secure and governed AI solutions.

Whether organizations deploy on premises, in hybrid environments, or in the cloud with Microsoft SQL in Azure, Microsoft SQL enables them to adopt AI confidently without compromising on security or compliance.

Ready to explore secure AI with Microsoft SQL?
