Reed Robison explores techniques to reduce SNAT port consumption in Azure App Services.

Azure App Services provides a powerful platform for building scalable web applications and conveniently abstracts many of the details that can make architecting such solutions a challenge. App Services are configured under an App Service Plan that makes it exceptionally easy to Scale Up, Scale Out, and choose the instance size and count to scale your solution for whatever workload you need to handle. Azure goes to great lengths to handle all the difficult pieces for you, but like any online service, there are scale limits you occasionally need to consider to optimize your performance and cost.

When you start looking at availability and performance of an App Service, a good place to start is memory, CPU, and response time. Memory and CPU both reflect high level metrics that can impact performance, but slow and failed responses may take a little more work to fully understand. I usually start by looking at slow or failed responses and ask the question “what are these requests doing and what could slow them down?” – then follow the data. I dig into the code behind those requests to understand if there might be a source of resource contention slowing them down. That could be a slow SQL command, a dependent REST call, file operation, etc. Application Insights may be helpful here. Process dumps can also be helpful to understand what an application is doing if you can manage to dump it at exactly the right time a problem is occurring. The Diagnose and Solve problems tool, provided by App Services, can also be really helpful to isolate what might be going on.

If everything looks good but you still see requests that are slow or failing, you might want to look at Outbound Connections. If your App Service is making dependent outbound connections to fulfill incoming requests, there are scale limits that are important to understand. Outbound requests coming off your App Service are often (but not always) subject to Source Network Address Translation (aka SNAT) and this can become very important under load. There’s a finite number of SNAT ports available to make outbound calls. Azure actually does a really good job of making sure you never have to think about this, but if you exhaust these ports you may start seeing slow or failed requests on your service. If you open your App Service and drill into Diagnose and Solve Problems->Availability and Performance->SNAT Port Exhaustion, this is where the clues start to add up. If you see SNAT Ports Pending or SNAT Ports Failed metrics in here, that’s a good indication you are dealing with SNAT issues. The occasional “SNAT Ports Pending” warning may not be too problematic; that just means you periodically wait for ports to be allocated. If you are seeing SNAT Ports Failed, however, this is probably going to result in errors in your app.

If you are making calls to other Azure services, those calls typically use one of the outbound IP addresses associated with your App Service and are routed like any other outbound call (i.e., they are not treated as internal traffic).

This article does a really good job of explaining how SNAT works and some details about the limits. Again, Azure does a good job of doing everything it can to get you ports – even when you go beyond the “guaranteed” limit. However, if you exhaustively make unique outbound connections in a short period of time, you can eventually hit a hard limit. This post is focused on why that might happen and some techniques to solve this problem.

There is no simple way to know exactly which outbound connections your SNAT ports are associated with, but you can dump your process and get some clues. You can also collect a memory dump and either use the online analysis or something like DebugDiag Analysis to check the pooled database connections in order to get an idea of how many connections may be active. You may have to dig around in the .NET objects to determine if there are other non-database connection objects being held.

There are a handful of reasons people generally run into this issue, but they are almost always the result of not re-using connections efficiently.

- If you are connecting to the same resource over and over, re-use the connection. If you are making heavy use of outbound calls to something like a REST API and not re-using the connection, this will consume excessive SNAT ports. See the linked article for code examples that demonstrate how to re-use connections with .NET HttpClient and HttpWebRequest.

- If you are making database calls, make sure you are re-using connections (see ADO.NET). This will ensure efficient re-use of outbound connections to SQL and avoid excessive SNAT port consumption.
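To make the effect of connection re-use concrete, here is a minimal sketch in Python (not the .NET code the linked article covers). It starts a throwaway local HTTP server and counts how many distinct client source ports the server sees. The function name `count_source_ports` and the local server are assumptions made for illustration; on App Service, each distinct outbound source port to a public endpoint would roughly correspond to a SNAT port.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

seen_ports = []          # client source port of every request the server receives
lock = threading.Lock()


class Handler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # lets the server keep connections alive

    def do_GET(self):
        with lock:
            seen_ports.append(self.client_address[1])
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo quiet


def count_source_ports(reuse, requests=5):
    """Send `requests` GETs and return how many distinct source ports were used."""
    server = ThreadingHTTPServer(("127.0.0.1", 0), Handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    host, port = server.server_address
    seen_ports.clear()

    shared = http.client.HTTPConnection(host, port) if reuse else None
    for _ in range(requests):
        # Either re-use the one shared connection or open a new one per call.
        conn = shared if reuse else http.client.HTTPConnection(host, port)
        conn.request("GET", "/")
        conn.getresponse().read()  # drain the response before the next request
        if not reuse:
            conn.close()
    if shared is not None:
        shared.close()

    server.shutdown()
    server.server_close()
    return len(set(seen_ports))


if __name__ == "__main__":
    print("re-used connection:", count_source_ports(reuse=True))
    print("new connection per call:", count_source_ports(reuse=False))
```

With a shared connection every request arrives from the same source port; opening a new connection per call uses a new port each time, which is the pattern that drains SNAT ports under load.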

If you are leaking connections, fix this first. If you are confident connections are not leaking and you are doing everything you can to minimize the unique connections coming off your App Service, there are some other approaches. For example, suppose you have many unique database connections (e.g., a database for every customer) resulting in connection pool fragmentation. This can result in a very large number of unique database connections where pooling isn’t very effective. One approach is to use a shared connection, then set the database on the command object. That may be neither ideal nor trivial, depending on the complexity of your code. Another option is to consider VNets and Service Endpoints.
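The fragmentation problem can be illustrated with a toy model. The sketch below is not a real database driver: `PoolSimulator`, the server name, and the customer names are all hypothetical, and it only assumes that a driver keeps one pool (and so at least one outbound connection) per distinct connection string. Whether your database platform allows switching databases on an open connection is something to verify before adopting the shared approach.

```python
class PoolSimulator:
    """Toy stand-in for a driver that keeps one pool per distinct connection string."""

    def __init__(self):
        self.pools = {}  # connection string -> number of commands served

    def execute(self, connection_string, database=None):
        # The first time a connection string is seen, a new pool (and at
        # least one new outbound connection) comes into existence.
        self.pools[connection_string] = self.pools.get(connection_string, 0) + 1
        # In the shared case the target database is chosen per command.
        return database

    @property
    def outbound_connections(self):
        return len(self.pools)


customers = [f"customer_{i}" for i in range(200)]  # hypothetical tenants

# One connection string per customer database: pooling cannot help.
fragmented = PoolSimulator()
for name in customers:
    fragmented.execute(f"Server=sql.example.internal;Database={name}")

# One shared connection string; the database is set on each command.
shared = PoolSimulator()
for name in customers:
    shared.execute("Server=sql.example.internal;Database=master", database=name)

print(fragmented.outbound_connections, shared.outbound_connections)
```

In this model the per-customer strings need 200 pools while the shared string needs one, which is why consolidating connection strings can reduce SNAT pressure.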

SNAT ports are tied to outbound connections, so if everything you are connecting to exists within Azure you can also optimize these routes by using VNet and Service Endpoints. While VNet and Service Endpoints are frequently used for finer control of secure communications, there’s another benefit: this configuration uses direct connectivity to Azure services over an optimized route on the Azure backbone network. You effectively bypass the need for SNAT.

The configuration is pretty straightforward:

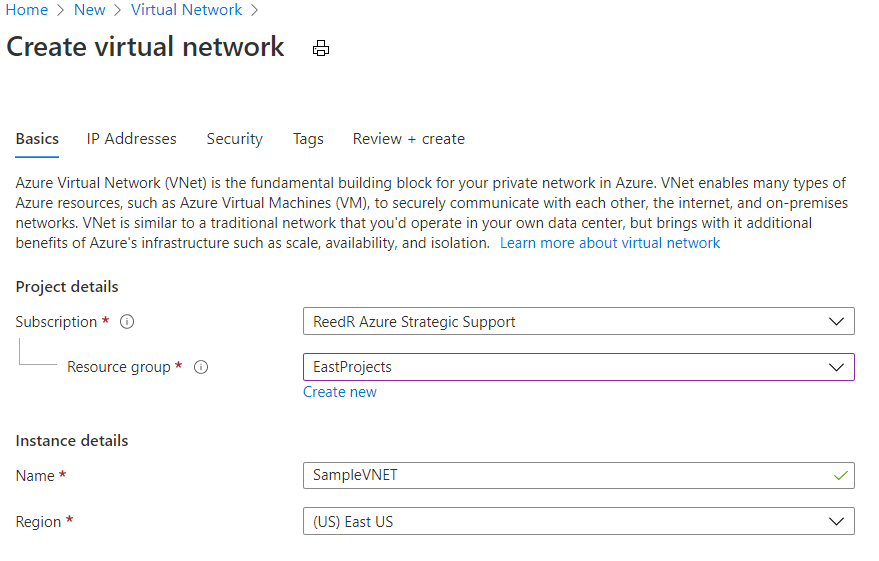

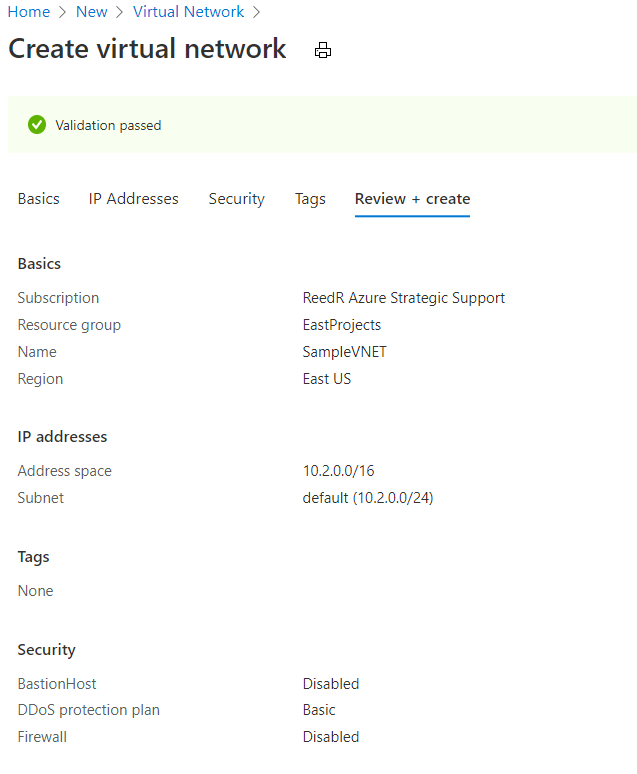

- Create a new Virtual Network

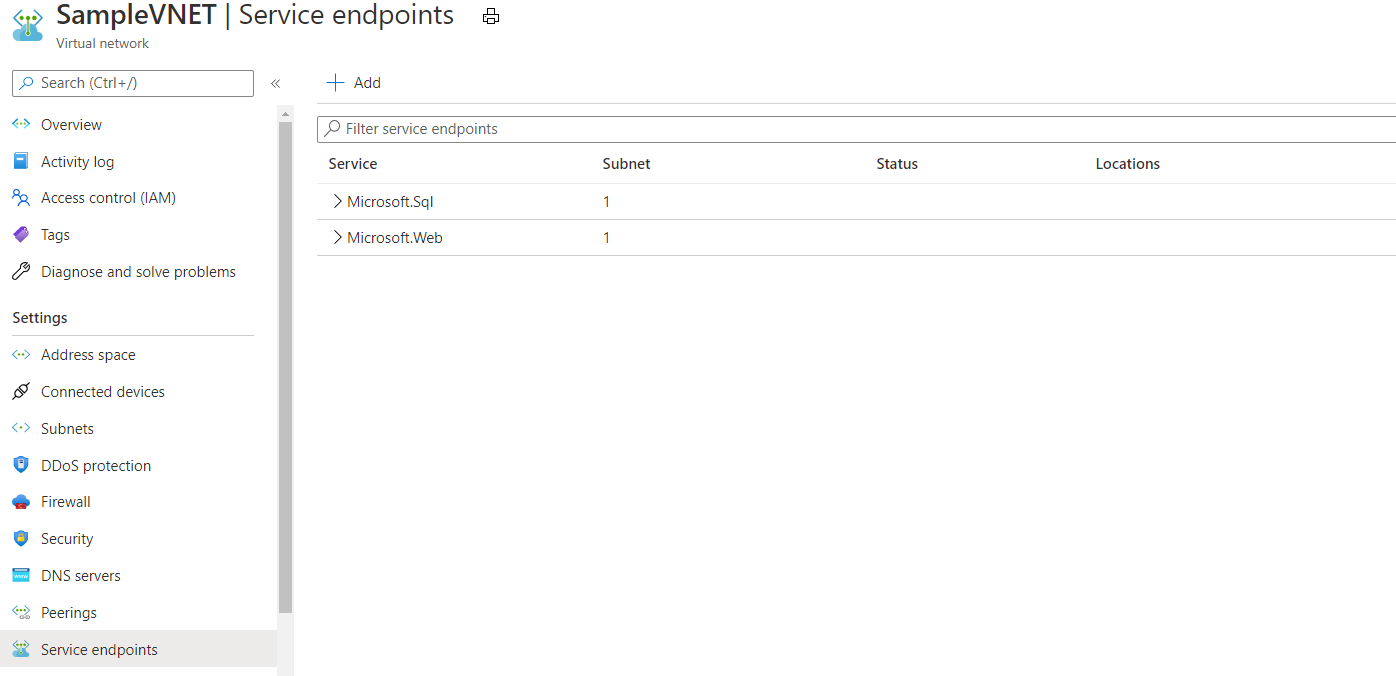

- Add Service Endpoints to your VNet (I’ll add Web and SQL)

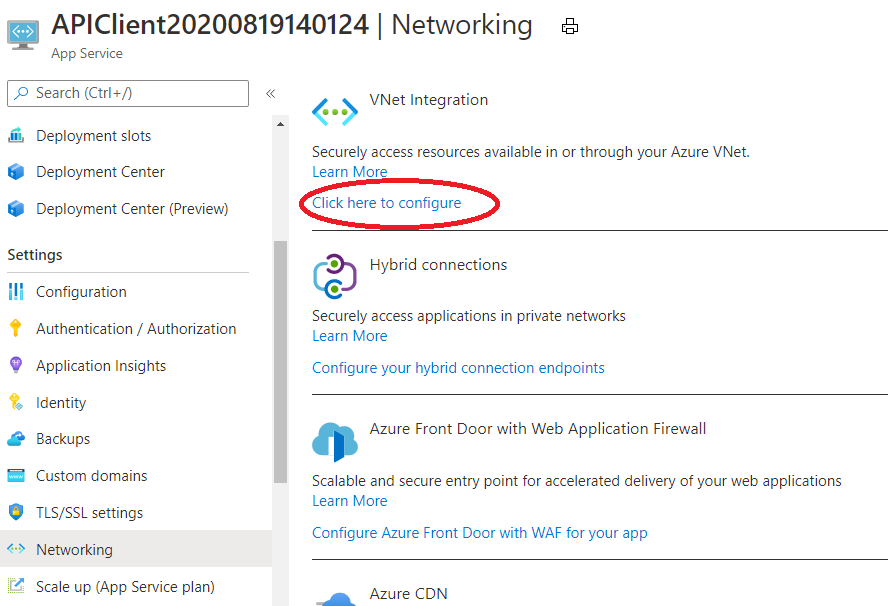

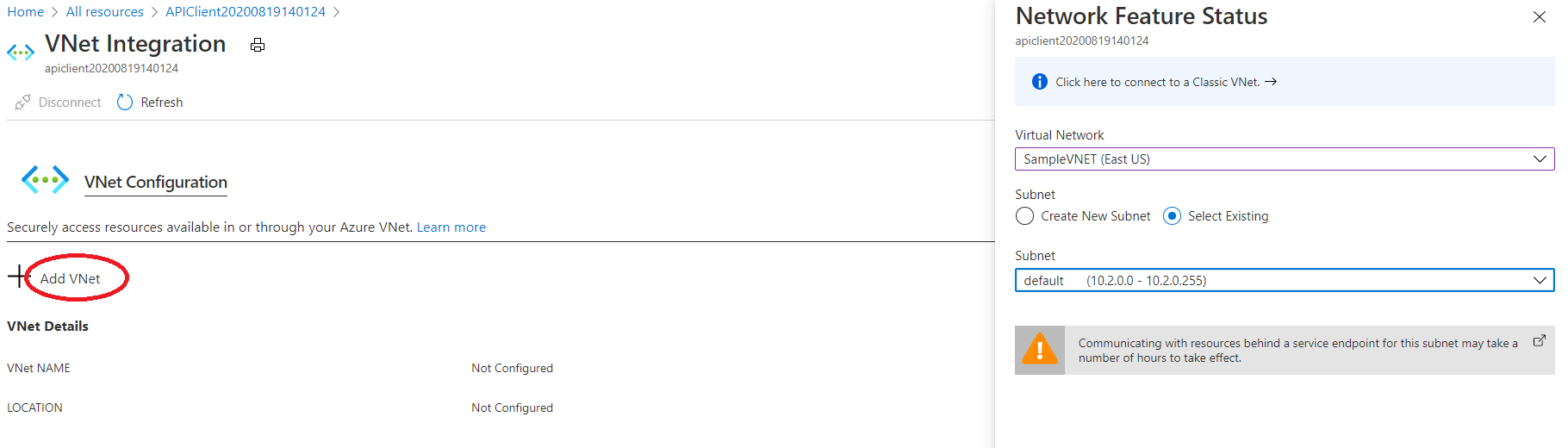

- Attach the VNet to your App Service

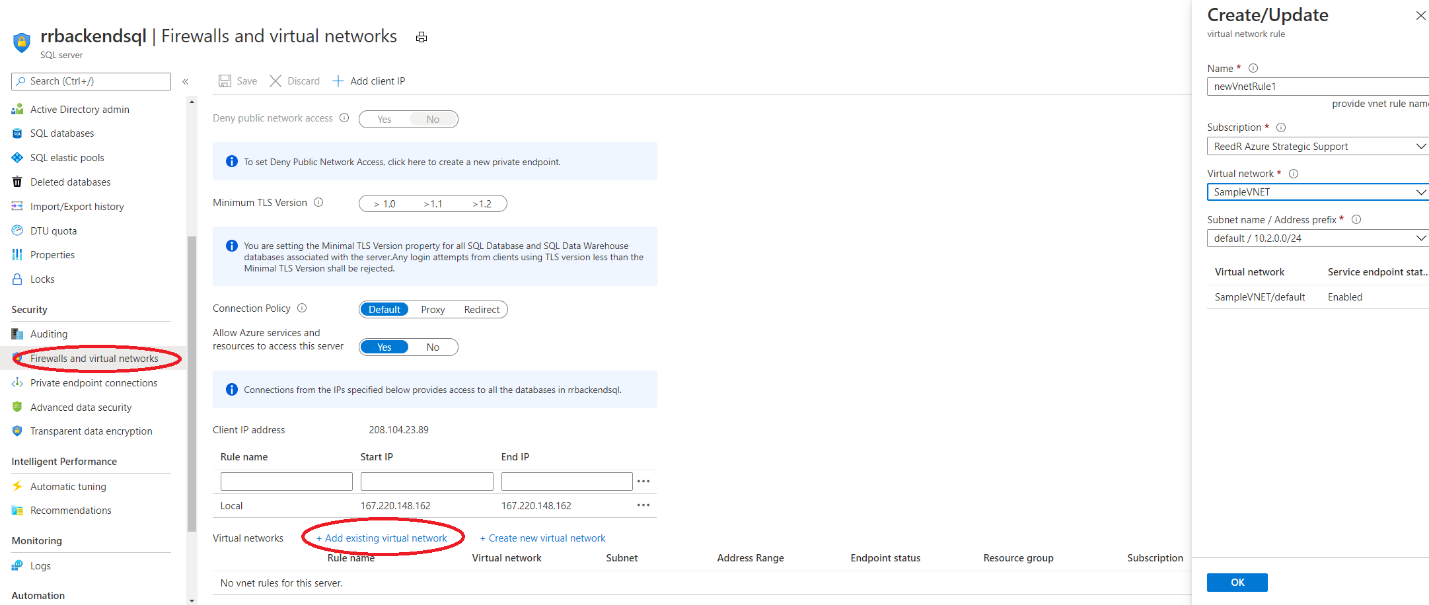

- Attach the VNet to SQL Server

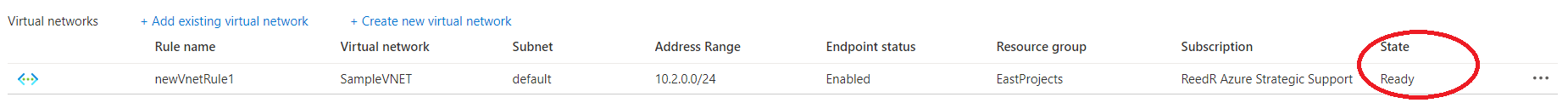

Once configured, you should see the state change to Ready.

Once the VNET is properly configured, if you audit the traffic coming into the dependent services (for example the IIS logs on a downstream service or the Audit logs for SQL calls), you’ll notice that the requests start using an internal IP, and not the external (outbound) IPs associated with your App Service. That’s an indication that requests are now routing internally and therefore no longer require a SNAT port to successfully make the call.
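If you have a list of client IPs pulled from those logs, a quick check like the following can separate internal from external callers. This is a minimal sketch that assumes your VNet uses private (RFC 1918) address space; the function name `split_by_origin` and the sample addresses are made up for illustration.

```python
import ipaddress


def split_by_origin(client_ips):
    """Split client IPs into (internal, external) based on private address ranges."""
    internal, external = [], []
    for raw in client_ips:
        ip = ipaddress.ip_address(raw)
        # Assumption: VNet traffic arrives from private address space.
        (internal if ip.is_private else external).append(raw)
    return internal, external


# Hypothetical client IPs, as they might appear in IIS or SQL audit logs.
sample = ["10.1.0.4", "52.160.10.20", "172.16.5.9"]
internal, external = split_by_origin(sample)
print("internal:", internal)
print("external:", external)
```

If calls from your App Service still show up in the external list after the VNet is attached, they are probably still leaving through the outbound IPs and consuming SNAT ports.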

If you find yourself struggling with SNAT ports using Azure App Services and your destination is an Azure service that supports service endpoints, regional VNET integration with Service Endpoints or Private Endpoints can provide a fairly simple way to allow these requests to use an internal, optimized route and avoid SNAT port limitations.

Good article, especially the tip on service endpoints. However, I don’t believe you can use the tip on setting the database in the command object with Azure SQLDB, as ‘USE’ is not supported. Still useful for SQL Server 20xx & SQL Managed Instance, though.

Hi,

Any tips for setting up settings in httpClient ? (Net core, in linux container), like max connections, lifetime, etc?

Should we use internal ip addresses or is it fine to use http://service.azurewebsites.com url?

Any other tips to make sure connections between services inside VNET do not cause SNAT issues?

Cheers

Thanks for sharing this informative post.