Introduction

As I wrap up my internship with Microsoft this summer, I have discovered the value in being confused. The more I learned, the more some things became clearer and the more other things became cloudier. In the end, I am left with a headache of confusion on how to tell time, something I thought I mastered in 2nd grade. Three months ago, I started with a seemingly simple task of investigating the quality of garbage collection information in Java Flight Recorder (JFR) and Garbage Collection (GC) logging. On accident, I discovered that the times these tools reported for corresponding garbage collection events not only differed but diverged over time. This led me into a deep dive on how GC logging and JFR both acquire and report times. What follows is a discussion of what I found.

JFR Files vs. GC Logs

First on my journey, was to understand Java’s garbage collection tools. JFR files and GC logs can both be a great source of information on Java’s garbage collection events, however, their approach and format are slightly different. A GC log is a text-based file that reports specific garbage collection events line by line with a time stamp at the very beginning of every line.

[2.311s][info][gc,start ] GC(0) Pause Young (Allocation Failure)In contrast, a JFR file holds binary that correlates to specific Java Objects called a RecordedEvent. These events can be retrieved through an API call and cover a variety of information. For this topic, I am primarily concerned with the RecordedEvents that deal with garbage collection and their corresponding startTime field.

Although both JFR files and GC logs both hold equivalent information about garbage collection, there are a few differences on how they gather and report this information. Most of these differences do not end up affecting the data received by the end user, except, of course, for timing.

The Recorded Time Delay

How do you define the start time of an event? I thought this would have one simple answer but turns out JFR and GC logging do not agree. JFR defines the start time as the beginning of the event, storing the time to be used later when the event is reported out to the JFR file. GC logs, on the other hand, record the start time as the event is being printed. For this reason, the start times recorded by GC logs tend to lag behind JFR files by about a fourth of a second. However, this lag is only consistent if they are using the same clock.

The Clock Drift

Just when things got clearer, I learned that Java could use more than one clock. These clocks are the Read Time-Stamp Counter (RDTSC) clock and the OS clock. The OS clock is guaranteed to be used, while RDTSC will be used if Java determines that you have reliable x86 hardware to support this clock.

OS Clock

The OS clock is the appropriate system clock that your machine supports. This clock will always be used by GC logs.

RDTSC Clock

The RDTSC clock is the most precise clock available on the x86 architecture as it is a counter that increments for every clock cycle and stores this number in a register. The RDTSC clock has much lower overhead and is far faster in retrieving a time stamp than the OS clock. For this reason, if Java finds that there is a reliable RDTSC clock then Java will use this clock for JFR time stamps. JFR files collects information on nearly every event in the JVM, not just garbage collection, so it needs this low overhead and speed when collecting time stamps.

If this clock is more precise and faster, why doesn’t GC logs also use the RDTSC clock? Well this clock comes with a host of its own problems, that may have been deemed worth it for the needed speed in JFR but not worth it with GC logging. These problems include dynamic clock frequency, the halt of the RDTSC clock when the machine sleeps, and the lack of synchronization across multiple cores and processors. This dynamic clock frequency and irregular halt of the clock causes a lack of stability in comparing times. The most reliable and recent hardware that resolves these two issues is called the Invariant TSC. With the Invariant TSC there has been more of an effort to also sync the RDTSC value among multiple cores and processors. Although, this issue is a little more complicated and not a guaranteed fix.

Again, just as things started to get clearer, I learned that a machine often lies about its capabilities and whether it can handle these uncertainties with the RDTSC clock. Java can only do its best with guessing whether the machine has a reliable TSC and most of this guess is dependent on the machine’s honesty.

OS Clock vs. RDTSC Clock in Java

If your machine decides that it does not have a reliable TSC, then the OS clock is always used and your only concern between GC logs and JFR files is the quarter of a second delay. Otherwise, you might be experiencing some very large drifts in your two clocks and subsequently in the times reported out by GC logging versus JFR.

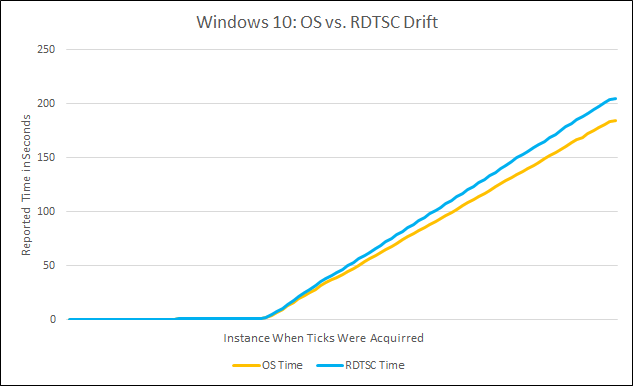

Every time Java collects clock ticks it will attempt to collect both OS ticks and RDTSC ticks. If there is no reliable TSC then it will only collect OS ticks. Otherwise it will collect both and theoretically the two clock ticks when converted to seconds should be overwhelmingly close. So, let us look at a Windows 10 machine with amd64 architecture running a 3-minute Java application. This machine picks up ticks from both clocks, but over the 3-minute time period the two clocks begin to drift apart. At the end, the OS clock reports a total of 184.59 seconds and RDTSC clock reports 205.10 seconds. Considering that 3 minutes is 180 seconds, it seems appropriate to say that the OS clock is reporting more accurate times in this case. Not to mention these clocks are only 0.08 seconds off at the start of the application and over 20 seconds off by the end.

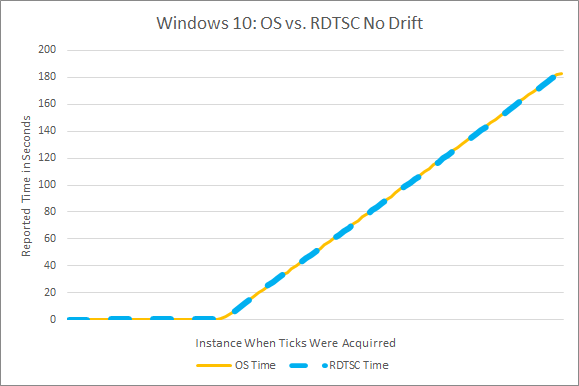

To continue the trend of confusion, I discovered there is nothing specific about Windows 10 that causes these clocks to drift. Here is another Windows 10 machine with amd64 architecture which reports very similar times between the two clocks with the OS reporting an end time of 182.89 seconds and the RDTSC clock reporting 182.42 seconds.

After running this 3-minute application on various machines, it seems like Linux might be more likely to be transparent with its TSC capabilities, but that is no guarantee. Results have varied among Mac, Linux and Windows machines from no reliable TSC discovered, to a drift between the two clocks, to no drift between the two clocks. This exposes the lack of portability and reliability of hardware specific clocks.

Going Forward

At the end of my journey I am left with more questions than answers. My first instinct is to grasp for a fix. Based off my discoveries there is not a great solution to the time delay, other than being aware that this delay exists and accounting for it accordingly. Regarding this issue, the times reported by GC logs and JFR files might be different, but time deltas are a constant and you may be able to find value in this. As for the potential drift in clocks, one easy fix is to add the following arguments to your command line to force your machine to use the OS clock for both JFR files and GC logs.

-XX:+UnlockExperimentalVMOptions -XX:-UseFastUnorderedTimeStampsAt the end of the day, this still leaves many unanswered questions and confusion. Consequently, I think this is an appropriate time to follow Segal’s Law:

“A person with a watch knows what time it is.

A person with two watches is never sure.”

We may not be dealing with watches, but clocks are close enough. Having one clock is clear but provides an ignorant view, having two clocks is confusing but provides more details. So, should the two tools be using the same clock? If so, which one? If we choose RDTSC, how do we convince machines to be more transparent to Java about their capabilities? On the other hand, if we choose OS, are we willing to sacrifice the low overhead in JFR? And ultimately, which tool and its clock represents the most accurate concept of time?

For further guidance feel free to read this thread on Java’s GC Dev mailing group.

Ana,

Awesome write up. You should get hired once you are graduated!

We do really need more Dig Dive on JVM content from Microsoft. We know that .NET has lots of content from channel9.

But right now Microsoft is not only about .NET. It’s open for any developers. Especially JVM community!

It’s also a really great time for Java Developers!

Rock on!