The landscape of AI development has evolved dramatically. Modern intelligent agents require real-time web access to provide accurate, grounded responses. With the retirement of direct Bing Search API access, developers must transition from direct API integration to agent-based architectures.

This article explores how to implement a robust search solution by orchestrating a communication channel between a Semantic Kernel agent and an Azure AI Foundry agent using the Agent-to-Agent (A2A) protocol.

The Challenge

The deprecation of raw Bing Search API endpoints necessitates a new integration pattern. Currently, Bing Grounding capabilities are encapsulated within Azure AI Foundry agents. This creates a service boundary where external agents—such as those built with Semantic Kernel or LangChain—cannot directly invoke Bing search capabilities as a standalone tool.

Instead of a direct API call, search functionality must be consumed through an intermediary agent hosted within the Azure AI Foundry ecosystem. This requires a reliable protocol for inter-agent communication.

Solution Architecture: Multi-Agent Orchestration

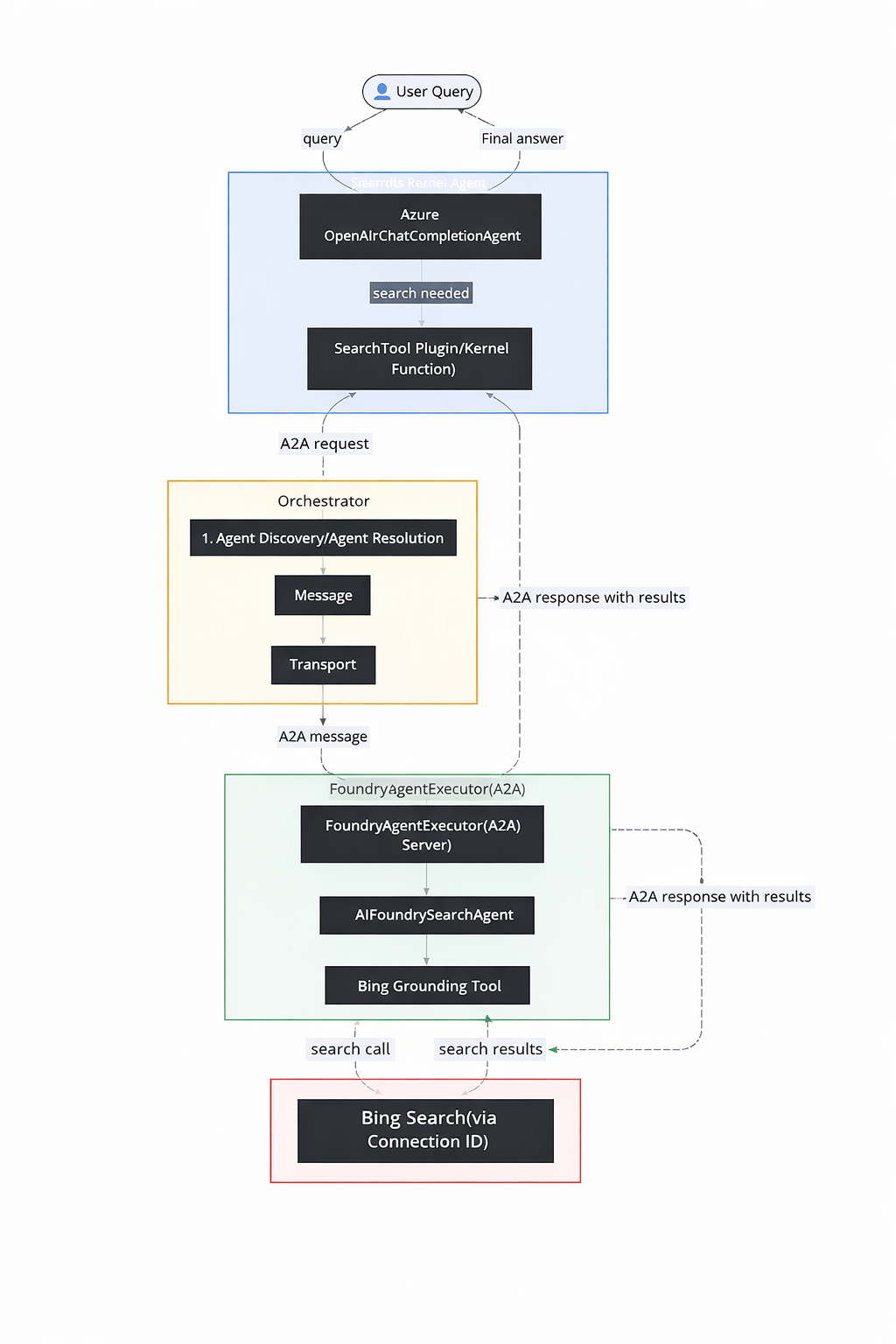

To address this constraint, we implemented a multi-agent pattern that separates concerns between orchestration and information retrieval:

- Search Specialist (Azure AI Foundry): A hosted agent configured with the Bing Grounding tool. This agent handles query formulation, execution, and result parsing.

- Orchestrator (Semantic Kernel): A primary agent that manages user interaction, maintains conversation context, and delegates search tasks to the specialist agent when external information is required.

By using the A2A (Agent-to-Agent) communication protocol, we establish a standardized interface for the Orchestrator to dispatch requests to the Search Specialist, effectively treating the remote agent as a native tool.
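The idea of treating a remote agent as a native tool can be illustrated without either framework. The sketch below is a toy, not the implementation used in this article: `RemoteAgentTool`, `Orchestrator`, and the `needs_search` flag are hypothetical names, and the transport is stubbed with an in-process coroutine where the real system would use an A2A client.

```python
import asyncio
from dataclasses import dataclass
from typing import Awaitable, Callable

# Hypothetical transport signature: takes a query, returns the remote agent's reply.
Transport = Callable[[str], Awaitable[str]]


@dataclass
class RemoteAgentTool:
    """Wraps a remote agent so the orchestrator can call it like a local function."""
    name: str
    transport: Transport

    async def __call__(self, query: str) -> str:
        return await self.transport(query)


@dataclass
class Orchestrator:
    """Owns the conversation and delegates to the tool only when asked to."""
    search: RemoteAgentTool

    async def handle(self, user_input: str, needs_search: bool) -> str:
        # In the real system the LLM decides whether to call the tool;
        # here the decision is passed in explicitly to keep the sketch small.
        if needs_search:
            return await self.search(user_input)
        return f"(answered locally) {user_input}"


async def fake_search_specialist(query: str) -> str:
    # Stand-in for the Foundry-hosted agent reached over A2A.
    return f"search results for: {query}"


orchestrator = Orchestrator(RemoteAgentTool("web_search", fake_search_specialist))
delegated = asyncio.run(orchestrator.handle("latest Python release", needs_search=True))
local = asyncio.run(orchestrator.handle("hello", needs_search=False))
```

Because the orchestrator depends only on the callable, the stub can be swapped for a real A2A-backed transport without changing `Orchestrator`.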

Setting Up Bing Grounding in Azure

Establishing the connection between your AI Foundry environment and Bing Search requires careful configuration through the Azure portal.

Step-by-Step Configuration Process:

- Access Azure AI Foundry: Navigate to the Azure AI Foundry portal (formerly Azure AI Studio) from your Azure portal account

- Project Selection: Either create a new AI project or select an existing project from your workspace

- Connection Management: Locate the “Connections” section in the project’s navigation panel

- Service Integration: Select “New connection” and choose “Bing Search” from the available service catalog

- Authentication Setup: Input your Bing Search API key (obtained from your Azure Bing Search resource)

- Retrieve Connection Identifier: After successful creation, the system assigns a unique Connection ID. This identifier links your code to the Bing Search service, so store it securely in your environment configuration (typically as BING_CONNECTION_ID)
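Since a missing connection ID only surfaces when the agent is created, it can help to validate configuration at startup. The following is a minimal sketch: the variable names are the ones read by the server-side code later in this article, while `load_settings` and `REQUIRED_VARS` are hypothetical helpers introduced here for illustration.

```python
import os
from typing import Mapping

# Variable names taken from the article's server-side code; the helper is hypothetical.
REQUIRED_VARS = ("AZURE_AI_ENDPOINT", "AZURE_OPENAI_DEPLOYMENT_NAME", "BING_CONNECTION_ID")


def load_settings(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Return the required settings, or raise listing every missing variable."""
    missing = [name for name in REQUIRED_VARS if not env.get(name, "").strip()]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name].strip() for name in REQUIRED_VARS}
```

Calling `load_settings()` once before constructing any client reports all missing values in a single error instead of failing on the first one.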

Deep-Dive: A2A Protocol for Foundry Agent Communication with Bing Grounding

Once Bing Grounding is available inside Azure AI Foundry, the remaining challenge is enabling an external agent to use it. The Agent-to-Agent (A2A) protocol, originally introduced by Google, addresses this: it defines a common way for AI agents built on different platforms and frameworks to discover each other and exchange messages.

A2A Protocol Components

The A2A protocol implementation in our system involves several key components:

Agent Discovery and Capability Advertisement

The A2A protocol begins with agent discovery, where agents advertise their capabilities through standardized “agent cards”:

    # Client-side discovery process
    resolver = A2ACardResolver(httpx_client=httpx_client, base_url=base_url)
    agent_card = await resolver.get_agent_card()

The agent card contains metadata about the agent’s capabilities, supported message formats, and communication endpoints. This allows agents to understand what services are available before attempting communication.
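To make the card's contents concrete, here is an illustrative card as a plain Python dictionary, with two small helpers that inspect it. The field names follow the published A2A specification as commonly documented (verify them against the version you target). Every value, and both helper functions, are made up for this example.

```python
# Illustrative agent card; every value here is made up for the example.
example_card = {
    "name": "foundry-search-agent",
    "description": "Web and news search backed by Bing Grounding",
    "url": "http://localhost:10000/",
    "version": "1.0.0",
    "capabilities": {"streaming": False},
    "defaultInputModes": ["text"],
    "defaultOutputModes": ["text"],
    "skills": [
        {
            "id": "web_search",
            "name": "Web search",
            "description": "Searches the web and returns a short answer",
            "tags": ["search", "news"],
        }
    ],
}


def skills_with_tag(card: dict, tag: str) -> list[str]:
    """Return the ids of all skills on the card that carry the given tag."""
    return [s["id"] for s in card.get("skills", []) if tag in s.get("tags", [])]


def supports_text(card: dict) -> bool:
    """True if the card lists plain text among its default input modes."""
    return "text" in card.get("defaultInputModes", [])
```

A client could run checks like these after discovery to decide whether the remote agent is suitable before sending any message.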

Message Structure and Communication Patterns

A2A messages follow a standardized structure that ensures compatibility across different agent implementations:

    # A2A message construction
    request = SendMessageRequest(
        id=str(uuid4()),  # Unique request identifier
        params=MessageSendParams(
            message={
                "messageId": uuid4().hex,  # Message tracking ID
                "role": "user",  # Message sender role
                "parts": [{"text": user_input}],  # Message content parts
                "contextId": str(uuid4()),  # Conversation context
            }
        ),
    )

This structure provides:

- Unique identification for request tracking and debugging

- Role-based messaging to distinguish between user and agent communications

- Multi-part content support for complex message types

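- Context management for maintaining conversation continuity (this requires reusing the same contextId across turns; the example above generates a fresh one for every request)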
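The role of `contextId` is easiest to see with two consecutive messages. The sketch below builds the same message dictionary as the example above using only the standard library. `build_message` is a hypothetical helper, and reusing the first message's `contextId` for the follow-up is an assumption about how a caller would keep both turns in one conversation.

```python
from typing import Optional
from uuid import uuid4


def build_message(text: str, context_id: Optional[str] = None) -> dict:
    """Build an A2A user message; pass context_id to stay in one conversation."""
    return {
        "messageId": uuid4().hex,                  # new id for every message
        "role": "user",
        "parts": [{"text": text}],
        "contextId": context_id or str(uuid4()),   # new conversation if omitted
    }


first = build_message("Who won the match yesterday?")
follow_up = build_message("And the day before?", context_id=first["contextId"])
```

Each message still gets its own `messageId` for tracking, while the shared `contextId` lets the receiving agent associate the follow-up with the earlier turn.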

Communication Flow Analysis

The complete flow, wrapped as a Semantic Kernel plugin, runs in five steps: discover the agent, create a client, build the message, send it, and extract the reply text:

    import os
    from uuid import uuid4

    import httpx
    from a2a.client import A2ACardResolver, A2AClient
    from a2a.types import MessageSendParams, SendMessageRequest
    from semantic_kernel.agents import ChatCompletionAgent
    from semantic_kernel.connectors.ai.open_ai import AzureChatCompletion
    from semantic_kernel.functions import kernel_function

    # base_url is the address of the A2A server that fronts the Foundry agent;
    # it is assumed to be defined elsewhere in the application.


    class SearchTool:
        @kernel_function(
            description="web search using search agent",
            name="web_search",
        )
        async def web_search(self, user_input: str) -> str:
            timeout = httpx.Timeout(120.0, connect=60.0)
            async with httpx.AsyncClient(timeout=timeout) as httpx_client:
                # Step 1: Agent Discovery
                resolver = A2ACardResolver(httpx_client=httpx_client, base_url=base_url)
                agent_card = await resolver.get_agent_card()

                # Step 2: Client Initialization
                client = A2AClient(httpx_client=httpx_client, agent_card=agent_card)

                # Step 3: Message Construction
                request = SendMessageRequest(
                    id=str(uuid4()),
                    params=MessageSendParams(
                        message={
                            "messageId": uuid4().hex,
                            "role": "user",
                            "parts": [{"text": user_input}],
                            "contextId": str(uuid4()),
                        }
                    ),
                )

                # Step 4: Message Transmission
                response = await client.send_message(request)

                # Step 5: Response Processing
                result = response.model_dump(mode="json", exclude_none=True)
                return result["result"]["parts"][0]["text"]


    supervisor_agent = ChatCompletionAgent(
        service=AzureChatCompletion(
            api_key=os.getenv("AZURE_OPENAI_API_KEY"),
            endpoint=os.getenv("AZURE_OPENAI_ENDPOINT"),
            deployment_name=os.getenv("AZURE_OPENAI_DEPLOYMENT_NAME"),
            api_version=os.getenv("AZURE_OPENAI_API_VERSION"),
        ),
        name="SupervisorAgent",
        instructions="You are a helpful general assistant. Use the provided tools to assist users with their ask on latest news and search.",
        plugins=[SearchTool()],
    )

SearchTool is registered as a plugin on the Semantic Kernel ChatCompletionAgent, so the LLM can invoke it automatically whenever a user issues a search-related query.
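Step 5 in the listing indexes straight into `result["result"]["parts"][0]["text"]`, which raises a bare `KeyError` if the server answers with a JSON-RPC error or with a task rather than a message. A more defensive extraction might look like the sketch below. `extract_text` is a hypothetical helper, and the task shape (text parts nested under `artifacts`) is an assumption based on the A2A specification that should be checked against the responses your server actually produces.

```python
def extract_text(payload: dict) -> str:
    """Join all text parts from a dumped A2A response, or raise on an error reply."""
    if "error" in payload:
        err = payload["error"]
        raise RuntimeError(f"A2A error {err.get('code')}: {err.get('message')}")

    result = payload.get("result", {})
    # A direct message reply carries its parts at the top level of the result.
    parts = list(result.get("parts", []))
    # A task reply (assumed shape) carries parts inside its artifacts instead.
    for artifact in result.get("artifacts", []):
        parts.extend(artifact.get("parts", []))

    texts = [p["text"] for p in parts if "text" in p]
    if not texts:
        raise ValueError("response contained no text parts")
    return "\n".join(texts)
```

In the plugin, the last line of `web_search` could then become `return extract_text(result)`, so failures reach the orchestrating agent as descriptive exceptions.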

Server-Side A2A Implementation

The server-side implementation shows how an agent exposes its capabilities through the protocol. It consists of a wrapper around the Foundry agent and an A2A executor that forwards incoming requests to it.
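The server listing declares a `threads` dictionary and calls `search(user_input, context_id)` without showing how the two connect. One plausible reading, offered here as an assumption and not as the article's confirmed implementation, is that each A2A context ID maps to one backend conversation thread, created on first use. `ThreadRegistry` and the stand-in thread factory below are hypothetical.

```python
from typing import Callable


class ThreadRegistry:
    """Maps an A2A context id to a backend thread id, creating threads lazily."""

    def __init__(self, create_thread: Callable[[], str]) -> None:
        # Hypothetical factory that returns the id of a newly created thread.
        self._create_thread = create_thread
        self._threads: dict[str, str] = {}

    def thread_for(self, context_id: str) -> str:
        # Reuse the existing thread so follow-up questions share history.
        if context_id not in self._threads:
            self._threads[context_id] = self._create_thread()
        return self._threads[context_id]


# Stand-in factory; a real one would ask the agent service for a new thread.
counter = iter(range(1, 1000))
registry = ThreadRegistry(lambda: f"thread-{next(counter)}")
```

With a mapping like this, two requests that share a context ID land on the same thread, while a new context ID starts a fresh conversation. The server-side classes from the article follow.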

    import logging
    import os

    from a2a.server.agent_execution import AgentExecutor, RequestContext
    from a2a.server.events import EventQueue
    from a2a.utils import new_agent_text_message, new_task
    from azure.ai.agents import AgentsClient
    from azure.ai.agents.models import BingGroundingTool
    from azure.identity import DefaultAzureCredential

    logger = logging.getLogger(__name__)


    class AIFoundrySearchAgent:
        def __init__(self):
            logger.info("Initializing AIFoundry search agent.")
            try:
                # Load configuration from environment variables
                self.endpoint = os.getenv("AZURE_AI_ENDPOINT")
                self.model = os.getenv("AZURE_OPENAI_DEPLOYMENT_NAME")
                self.conn_id = os.getenv("BING_CONNECTION_ID")
                self.credential = DefaultAzureCredential()
                self.threads: dict[str, str] = {}

                # Create Azure AI Agents client
                agents_client = AgentsClient(
                    endpoint=self.endpoint,
                    credential=self.credential,
                )

                # Initialize Bing grounding tool with connection ID
                bing = BingGroundingTool(connection_id=self.conn_id)

                # Create the specialized search agent
                self.agent = agents_client.create_agent(
                    model=self.model,
                    name="foundry-search-agent",
                    instructions="An intelligent bing search agent powered by Azure AI Foundry. Your capabilities include Latest news and Web search. Return in 1 line only",
                    tools=bing.definitions,
                )
            except Exception as e:
                logger.error(f"Failed to initialize AIFoundrySearchAgent: {e}")
                raise


    class FoundryAgentExecutor(AgentExecutor):
        # self.agent is an AIFoundrySearchAgent instance; its search() method
        # and the executor's constructor are omitted from this excerpt.
        async def execute(self, context: RequestContext, event_queue: EventQueue) -> None:
            user_input = context.get_user_input()
            context_id = context.context_id

            # Process A2A request context
            task = context.current_task
            if not task:
                task = new_task(context.message)
                await event_queue.enqueue_event(task)

            # Execute agent capability
            result = await self.agent.search(user_input, context_id)

            # Return response via A2A event system
            await event_queue.enqueue_event(new_agent_text_message(result))

Testing the Agents using the A2A Inspector

The A2A Inspector is a web-based tool designed to help developers inspect, debug, and validate servers that implement the Google A2A (Agent2Agent) protocol. It provides a user-friendly interface to interact with an A2A agent, view communication, and ensure specification compliance.

You can use the tool to interact with your A2A servers. For more information, see the A2A Inspector project documentation.

Protocol Benefits and Architecture Impact

The A2A protocol provides several critical architectural benefits:

- Platform Independence: Agents built on different frameworks can communicate seamlessly

- Loose Coupling: Changes to one agent don’t affect others as long as A2A compatibility is maintained

- Scalability: New agents can be added to the ecosystem without modifying existing components

- Standardization: Common communication patterns reduce development complexity

- Debuggability: Standardized message formats improve monitoring and troubleshooting

In our architecture, A2A serves as the critical communication backbone that enables our Python-based Semantic Kernel agent to interact naturally with the Azure AI Foundry search agent. This protocol abstraction means that either agent could be replaced or upgraded without affecting the other, as long as they maintain A2A compatibility. This loose coupling is essential for building maintainable, scalable multi-agent systems.

Conclusion

The deprecation of direct Bing Search API access has catalyzed a fundamental shift toward more sophisticated multi-agent architectures. What initially appeared as a technical challenge has evolved into an opportunity to build more robust, scalable, and maintainable AI systems.

Key Technical Achievements

Our implementation demonstrates several critical patterns:

- Specialized Agent Design: The Azure AI Foundry search agent serves a single, well-defined purpose, incorporating robust error handling, observability, and conversation management

- Seamless Orchestration: Semantic Kernel’s plugin architecture enables natural integration of specialized agents into broader workflows

- Standardized Communication: The A2A protocol provides a platform-independent way for agents to discover and interact with each other

- Production-Ready Patterns: Thread management, timeout handling, and state persistence ensure enterprise-grade reliability

The Evolution of A2A

The A2A protocol is still evolving. Future versions will likely support:

- Multi-party agent negotiations and consensus mechanisms

- Streaming data exchanges for real-time collaboration

- Advanced security and authentication frameworks for enterprise environments

- Semantic capability matching for automatic agent discovery and composition

Ecosystem Growth: As adoption increases, we can expect agent marketplaces and cross-platform orchestration tools that will enhance the ecosystem’s maturity and interoperability.

Moving Forward

This multi-agent approach represents more than a workaround for API deprecation—it establishes a foundation for building the next generation of intelligent applications. By treating external capabilities as specialized agents rather than simple API endpoints, we enable systems that are more modular, fault-tolerant, and adaptable to changing requirements.

The architecture patterns demonstrated here—from Azure AI Foundry’s grounding capabilities to Semantic Kernel’s orchestration framework—provide a practical blueprint for developers facing similar integration challenges. As the AI ecosystem continues to evolve, the ability to compose specialized agents through standardized protocols like A2A will become an essential architectural skill.