You slide into your office chair, cup of coffee in hand, and check your email. Another high severity ticket – your servers have crashed, and you have by tomorrow morning to figure out why. Heaving a heavy sigh, you soon find yourself sifting through tens of thousands of lines of code and logs. Morning soon passes to afternoon, fading into dusk. Finally, you find it, push the fix, and reboot the application. Minutes pass. Crash. Your night of the living dead has begun – no matter how many times you try to put this bug down, it just keeps coming back to life.

We’ve all suffered from “zombie bugs” before. There are many static analysis tools on the marketplace today to help you detect bugs, many of which work by searching for a series of syntactic patterns in code. Unfortunately, due to the huge variation and complexity of coding constructs and conventions in different projects, this approach often leads to the high false positive and false negative rates for which static analysis tools are notorious.

In today’s post, we’re going to show you how Infer# can help you put down these zombie bugs, once and for all. Microsoft open-sourced Infer# in 2020, which leverages Meta’s Infer. Infer# takes a different approach from other tools you’ve heard of – it derives summaries of each method’s semantics and reasons over the program’s behavior. It uses incorrectness separation logic, one of the latest advancements in program analysis research, to mathematically prove the presence of bugs in code. Infer# performs cross-assembly analysis to find issues like null pointer dereferences, resource leaks, and thread safety violations, in addition to security vulnerabilities like SQL injections and DLL injections.

Getting started with Infer

With Infer# v1.4 you can identify security and performance issues with a single click, all in VS2022 and VSCode. First, make sure that Windows Subsystem for Linux (WSL) is properly installed. Then, download and install the InferSharp extension from the Visual Studio or Visual Studio Code marketplaces. In this article, we’ll show the VS experience, which is mimicked in VS Code. You can also use Infer# directly in WSL and Docker.

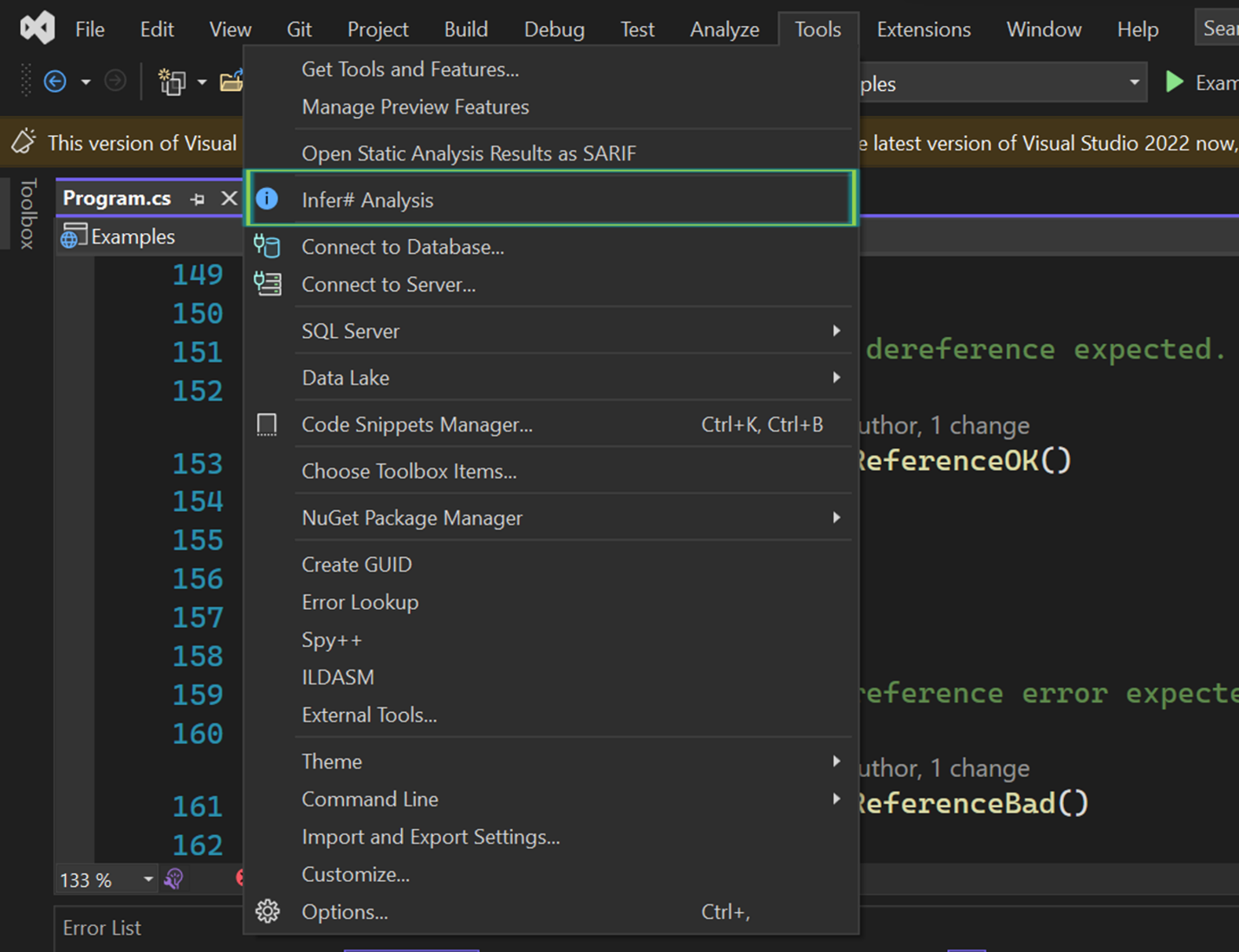

The extension adds an Infer# Analysis menu item to the Tools menu. The first time it’s selected, it will complete setup by downloading and installing the Infer# custom WSL distro from Github.

Analyze your code

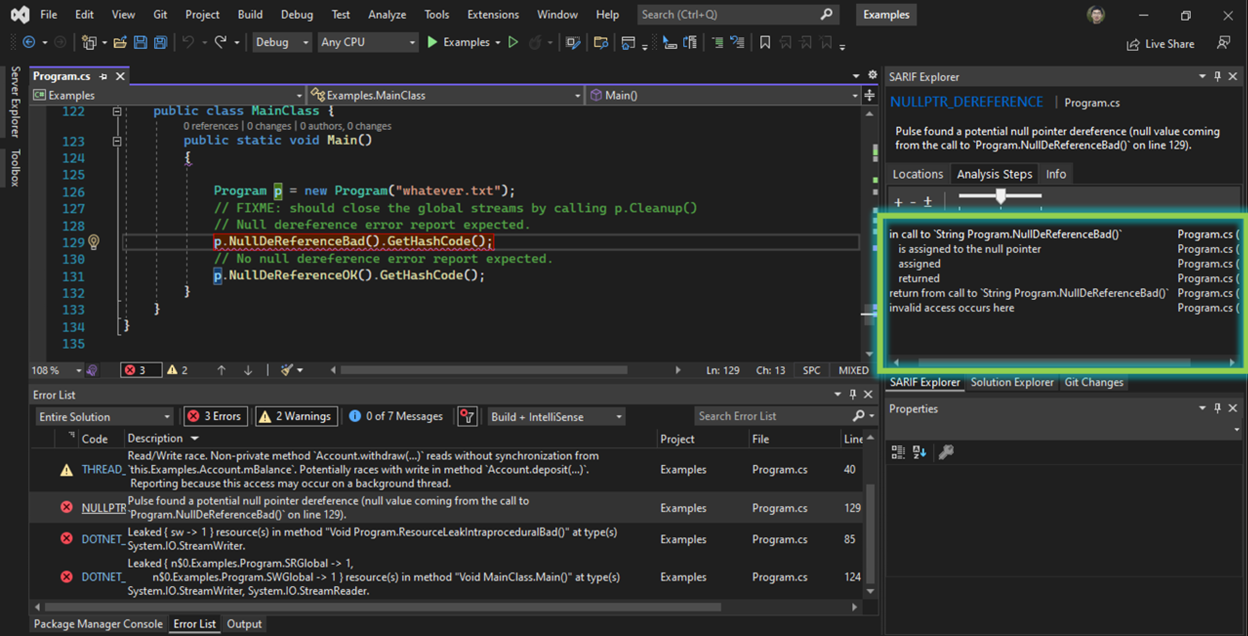

After waiting for setup to complete, selecting the Infer# Analysis menu item again will prompt you to provide a directory tree (defaulting to the solution directory, if it exists) containing the DLLs and PDBs you want to analyze. Your selection is automatically saved for future runs in the .infersharpconfig file created in your project directory. The analysis will then run, displaying the warnings in the Error List pane. Additionally, information about the analysis steps is shown in a pane on the right side of the editor, with clickable links to the relevant lines of code.

Issue or not?

Now that we’ve seen how to use Infer#, let’s look at a couple of examples that demonstrate how Infer# works under the hood. Suppose we created the following IDisposable class OwnsStreamReader:

public class OwnsStreamReader : IDisposable

{

public StreamReader reader;

public OwnsStreamReader(StreamReader input)

{

reader = input;

}

public void Dispose()

{

reader?.Dispose();

}Does the following method contain a resource leak or not?

public static void LeakOrNot()

{

var fileStream = new FileStream("test.txt", FileMode.Create, FileAccess.Write);

var reader = new StreamReader(fileStream);

using (var myClass = new OwnsStreamReader(reader))

{

myClass.reader.Read();

}

}The answer is no. Infer# determines this by producing method summaries that represent the semantics of OwnsStreamReader’s constructor and Dispose(). It determines that the Dispose() method takes care of the StreamReader, whose Dispose() in turn takes care of the FileStream object. The using statement ensures that the Dispose() function is invoked.

Let’s look at another example:

public class OwnsResources : IDisposable

{

private Socket socket;

private FileStream stream { get; set; } = new FileStream("test", FileMode.Create);

public OwnsResources(SocketInformation info)

{

socket = new Socket(info);

stream = new FileStream("test", FileMode.Create);

}

public void Dispose()

{

stream?.Dispose();

socket?.Dispose();

}Is there a resource leak in the following method?

public static void LeakOrNot()

{

using (var myClass = new OwnsResources(new SocketInformation()))

{

myClass.stream.Flush();

}

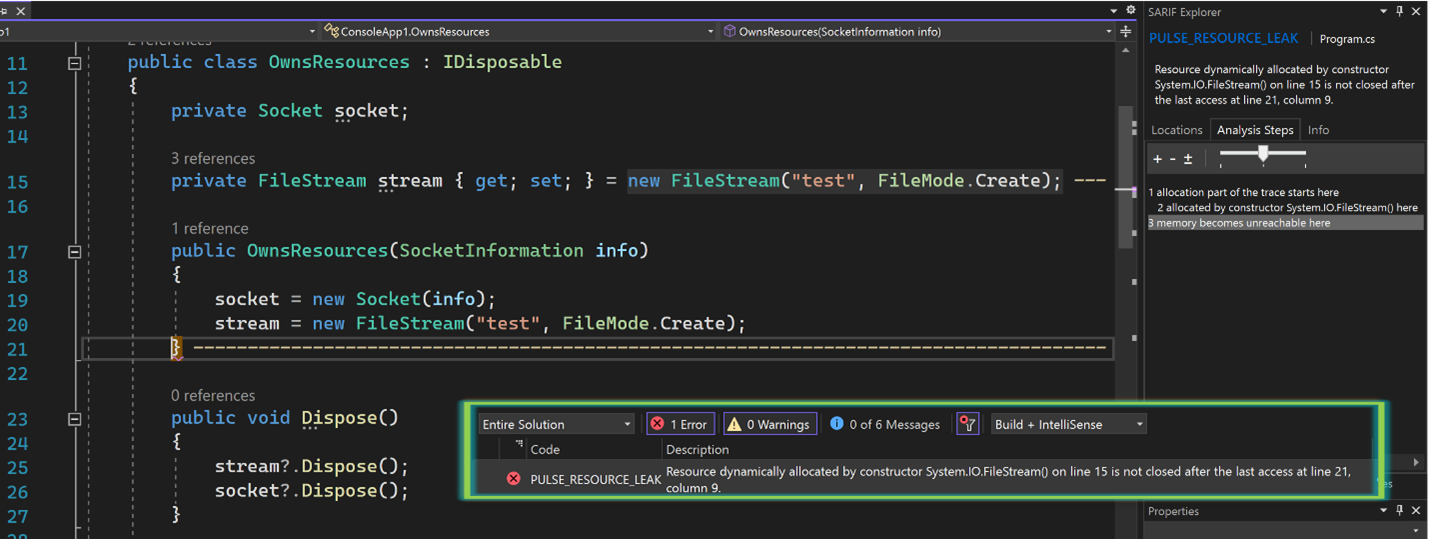

}The answer is yes. Let’s see what Infer# found:

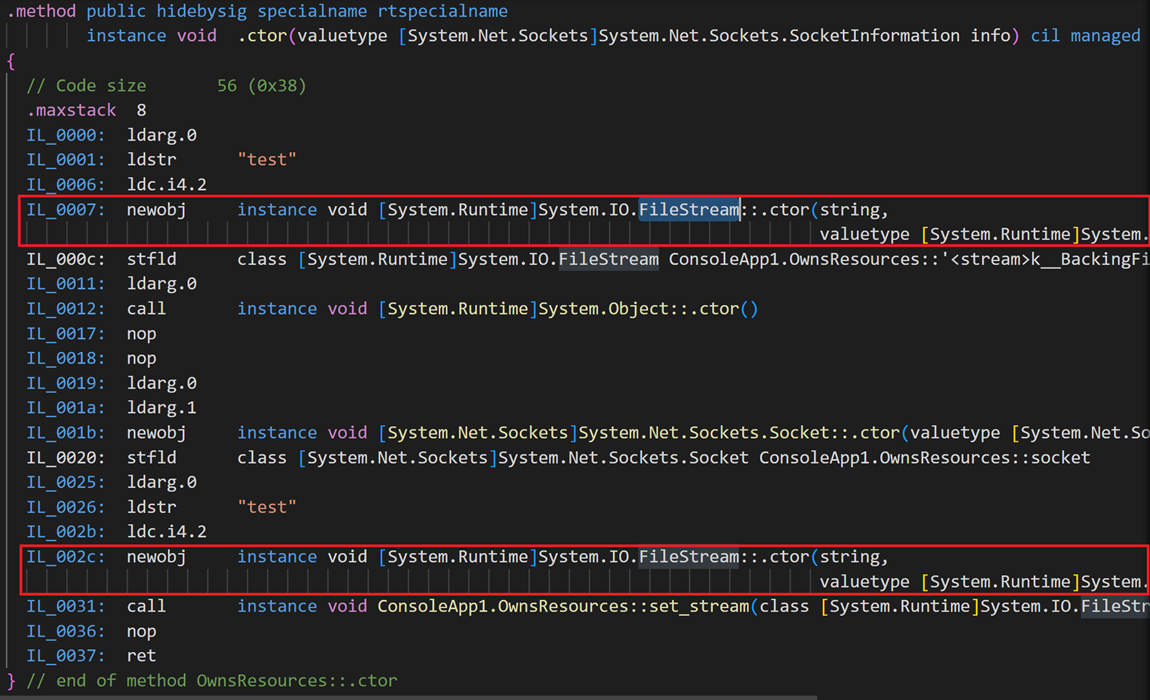

It reports “Resource dynamically allocated by constructor System.IO.FileStream(…)is not closed after the last access at…” The problem lies in the construction of the OwnsResources class itself. The stream field gets initialized twice: once in its field initialization and again in the constructor. This is clear if we look at the bytecode, which is what Infer# uses to produce its analysis*:

*You might notice that the first created object is stored into the stream field, whereas the second one is followed by an invocation of stream’s setter method. A naïve approach might not realize that these both have the ultimate effect of storing the object into the field. Infer#’s semantic analysis of stream’s setter method determines this equivalence.

*You might notice that the first created object is stored into the stream field, whereas the second one is followed by an invocation of stream’s setter method. A naïve approach might not realize that these both have the ultimate effect of storing the object into the field. Infer#’s semantic analysis of stream’s setter method determines this equivalence.

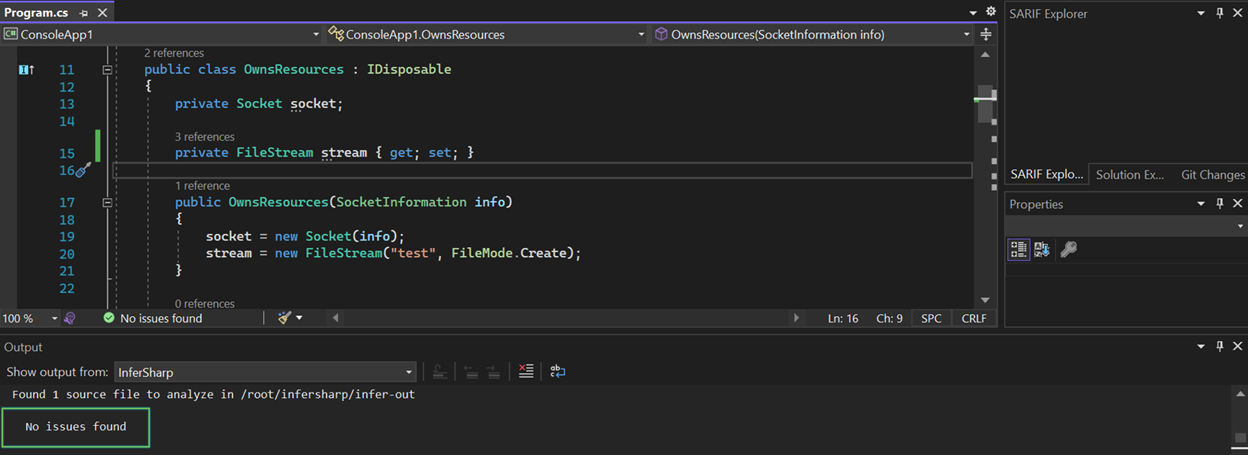

Removing one of the assignments and rerunning Infer# confirms that the issue is fixed:

Finding critical security issues in your code

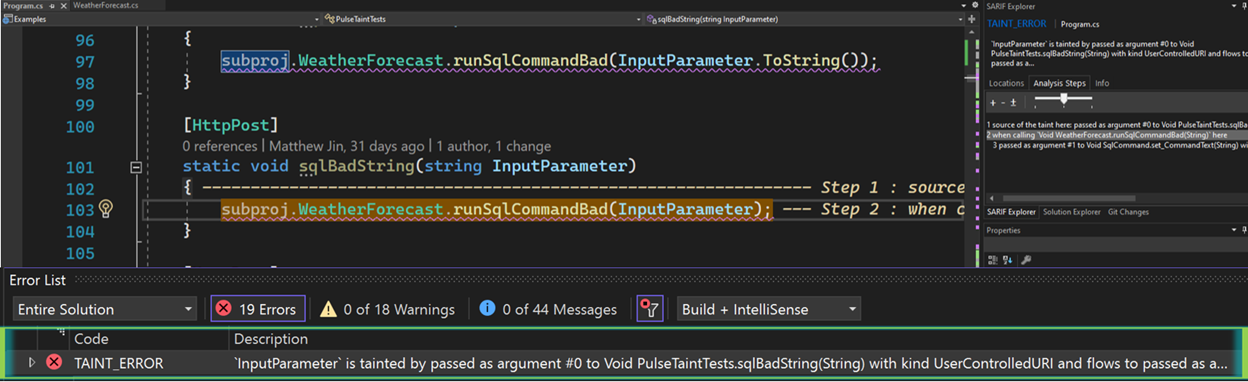

Those of you building ASP.NET web apps might be familiar with issues like SQL injections or using unsafe deserializers like BinaryFormatter, which all involve the flow of information from an untrustworthy source into a sensitive location within the app. We’re excited to announce the initial release of Infer#’s ability to detect security vulnerabilities via taint analysis. For example, suppose we have a project with the following method:

namespace subproj

{

public class WeatherForecast

{

public static void runSqlCommandBad(string input)

{

var command = new SqlCommand()

{

CommandText = "SELECT ProductId FROM Products WHERE ProductName = '" + input + "'",

CommandType = CommandType.Text

};

var reader = command.ExecuteReader();

}On its own, this method doesn’t pose a SQL injection issue, as it is only an issue if it gets invoked with a string input that comes from a user-controlled source. An example of this would be the invocation of this method with input coming as the parameter of a method with an HttpPost annotation. Infer# can detect this issue despite its trace spanning across multiple assemblies:

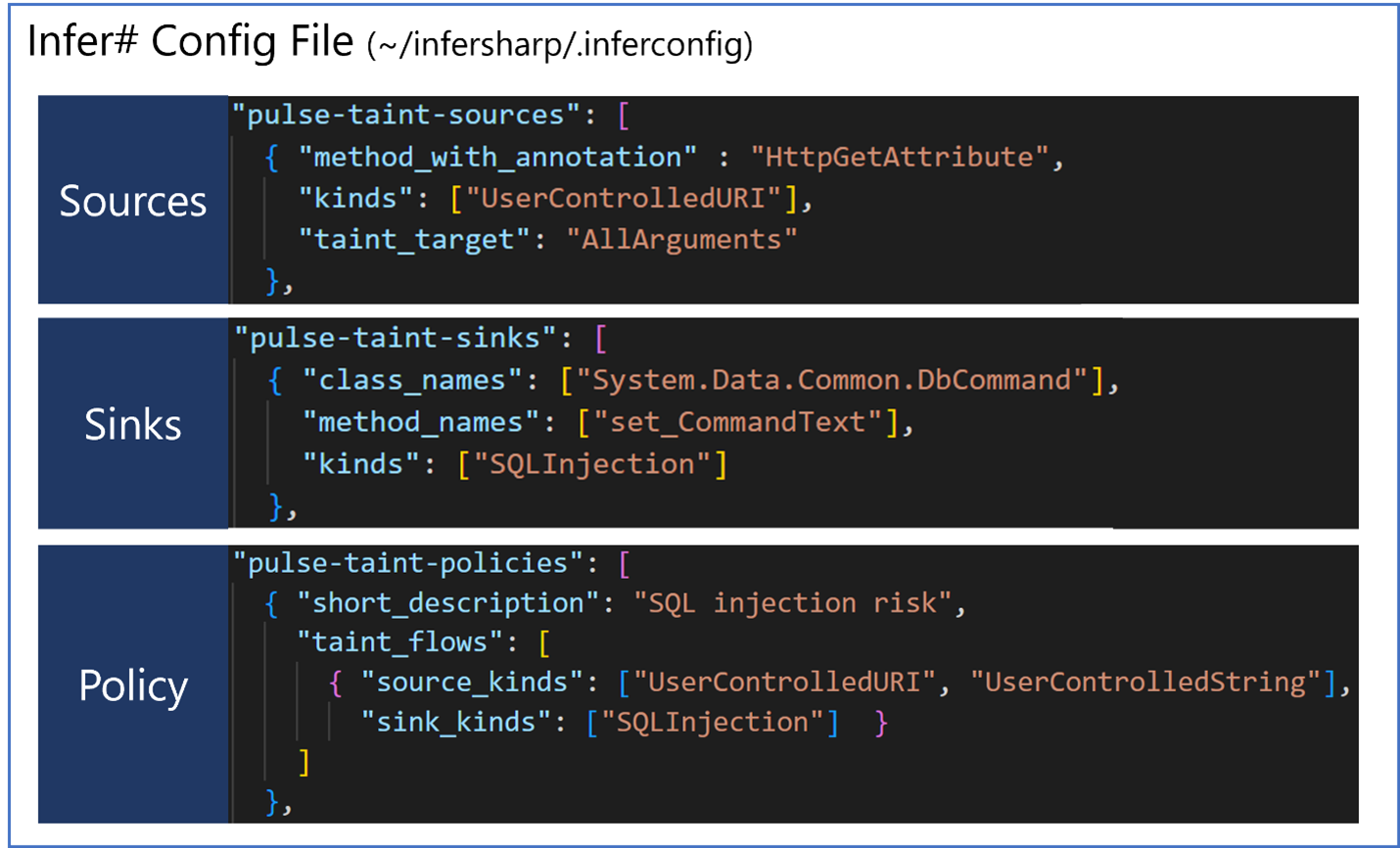

Behind the scenes, Infer#’ starts tracking tainted information when it passes through certain methods, known as “pulse-taint-sources,” specified in the ~/infersharp/.inferconfig file in Infer#’s WSL distro. This JSON specifies parameters to the analysis and is invoked by the extension by default. Infer# alerts when it detects that the tainted information reaches certain methods, known as “pulse-taint-sinks.” Putting it together, just specify the sources and sinks of the taint flow you want to track under “pulse-taint-policies.” Here’s what that looks like for the case above:

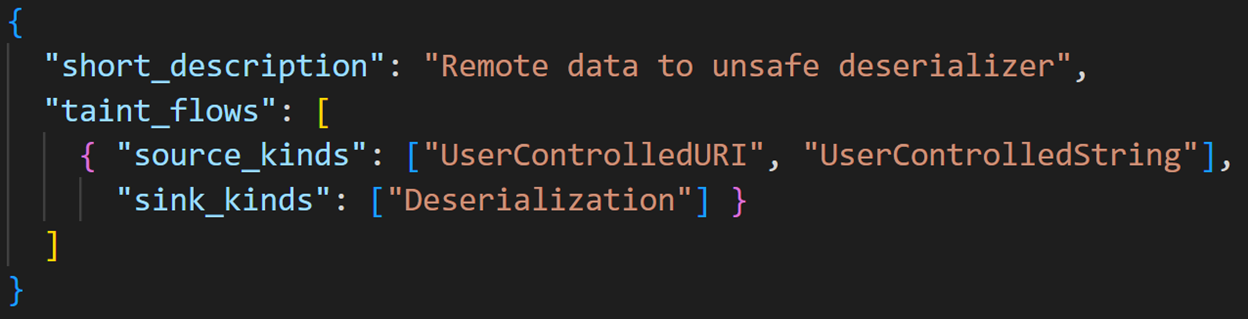

You can easily specify more flows of your own, reusing sources and sinks as needed:

Try it today!

By using a framework whose analysis stretches across all input assemblies, Infer# can detect issues that wouldn’t be easy for a human to spot. We’re excited to continue sharing with you the capabilities of the latest semantic analysis technologies. Become a zombie bug slayer today!

- Come hear our .NET Conf 2022 talk (same title as this article’s) at 7:00 PM PST on November 9

- Questions, feature requests, issue reporting: Infer# GitHub, or email us at ec550f20.microsoft.com@amer.teams.ms

- Developer tool extensions: VS2022 and VSCode

- Build time tools: Github Action, Azure Pipelines

- Configured Environment: WSL and Docker

Hi. Why do we need WSL for Infer#? Does Infer# not work for Windows apps?

We need WSL for Infer# because on the backend we leverage Infer, which is written entirely in OCaml. While OCaml has some Windows support (and according to the link I mention above, in opam 2.2 there will be full support), currently it isn't enough to fully support Infer natively on Windows.

As far as your question as to whether Infer# works for Windows apps: Infer# will analyze any DLLs and PDBs. If you are for example referring to Windows apps which are built on .NET Framework or .NET Core, then Infer# will be able to analyze them. More broadly, we support...

Thanks. You have made your post much clearer.

I thought “Infer” was just a typo for “Infer#,” especially since the article’s first level-2 heading reads “Getting started with Infer.” I concluded that Infer# was something that Meta Platforms, Inc. created but Microsoft later acquired and released as open-source. I was wrong.

For the installation from Visual Studio, can you provide a bit more detail around the expected output of a good install of the WSL portion? I personally have to work on Windows Server 2019, and it seems to hang at “I” …

I can start the WSL command line from a admin powershell window, and tried this install from an admin elevated VS 2022.

The expected timing should be up to a minute for the WSL download to occur, and up to another minute for the installation of the "infersharp1.4" custom distro to complete; the output would just then be a message noting installation success.

The first place I'd look is validating your WSL version/setup. Are you on WSL2? Also, there is the requirement on aka.ms/wslinstall -- "You must be running Windows 10 version 2004 and higher (Build 19041 and higher) or Windows 11."

If that all works, there is can you run

<code>

and examine the output? This validates that infersharp1.4 was correctly setup on your computer.

If...

Surely the LeakOrNot method does have a potential resource leak? If a ThreadAbortException or OutOfMemoryException is thrown between the allocation of the FileStream and the allocation of the OwnsStreamReader class, the resource will never be disposed of.

An out-of-memory exception would normally terminate the process, so that may not be an issue. But a thread abort could happen at any point, without taking the entire process down.

You are correct that there are in general runtime conditions that would cause the failure of the Dispose() to be invoked. Infer# reports when a plausibly predictable/frequent exception can be statically identified among the execution paths of the program. Generally, we try to heavily bias towards high precision in the warnings; Pulse itself reports on "manifest" bugs, whose presence does not depend on certain preconditions that depend on the calling context of the procedure being analyzed (see the paper if you want to learn more on this topic). Things like OutOfMemoryException/ThreadAbortException aren't statically identifiable (except insofar as for example whenever...

May I ask why the

Inferengine doesn’t support windows ?Infer is not native to windows because the whole stack is written in OCaml. Even though there is some compatibility between OCaml and Windows, it isn’t yet mature enough for us to run Infer on Windows — for now, we’ve been trying to mitigate it by invoking Infer via WSL.

Seems like F# might be a good fit to port this then? No idea, but would love to see a native solution. Thank you and the team for your work on this.

Yes — we are hoping that with Opam 2.2 bringing full support for Windows in the coming months that we will be able to leverage that for moving Infer# natively to Windows. Stay tuned!

This is great – not a lot of tools in this space for .NET. I see that this is open source. What metrics do you record if I use this? What data do I need to share to use this?

Hi — we only track start and end events for each of install and analysis. We do not currently track any information whatsoever about the code that was analyzed, nor do we track any warning information.

how do you then continue to improve the analysis and experience?

We gather feedback from users like you and from upvoted issues on our Github. We also regularly evaluate the performance of the analysis against our suite of benchmark repos (both open source repos and repos internal to Microsoft)

It would be very usefull if it is was shipped as a roslyn analysers so there no need to install a VS extensions, and would run in other CIs (like gitlab).

We are looking into further native integrations like that (we are currently limited by the backend Infer being native to Linux, which should change in the future)!

Thanks, I guessed that was the major problem.

Is it possible to have it as a dotnet tool for the time being ? I don’t mind if it use WSL behind the scene like the VS extensions.

That makes sense — we will look into this. Thank you for the feedback! Do you want to open an issue on our github for this? https://github.com/microsoft/infersharp/issues

Yep, I will.

Wow, this is seriously groundbreaking work. I can’t believe I haven’t heard more press about this!