We are improving the caching abstraction in .NET to make it more intuitive and reliable. This blog showcases a sample project with reusable extension methods for Distributed Caching that greatly simplify the repetitive object serialization code needed when setting cached values. It also provides guidance on best practices so developers can focus on business logic. We are actively working on bringing this experience into .NET and our current ETA is post .NET 8, but we wanted to share early progress and get feedback.

Background

Application developers mostly use distributed caches to improve data query performance and to save web application session objects. While the concept of caching sounds straightforward, we heard from .NET developers that it requires a decent amount of experience to use caching properly. The challenges are:

- The required object serialization code is repetitive and error prone.

- It’s still a common error to use sync over async in caching methods with clients such as StackExchange.Redis.

- There is too much overhead from “boilerplate” type of work in .NET caching methods.

Ideally, the developer should only have to specify what to cache, and the framework should take care of the rest. We are introducing a better caching abstraction in .NET to optimize for common distributed caching and session store caching scenarios. We would like to show you a concept of our solution, hoping to solve some immediate problems and receive feedback. The sample extension methods are written by Marc Gravell, the maintainer of the popular StackExchange.Redis. You can copy the DistributedCacheExtensions.cs into your ASP.NET Core web project, configure a distributed cache in Program.cs, and follow the example code to start using the extension methods.

The extension methods sample code is very intuitive if you want to jump directly into it. The rest of the blog dives into the detailed implementation behind the extension methods with an example Web API application for a weather forecast.

Application scenarios for using the new Distributed Cache extension methods

In the extension methods sample code, only GetAsync() methods are exposed. You may wonder where the methods for setting cache values are. The answer is – you don’t need the Set methods anymore. Set methods are abstracted by the GetAsync() implementations, which read the value from the data source upon a cache miss and store it. The GetAsync() methods essentially do the following:

- Automate the Cache-Aside pattern. This means it always attempts to read from cache. In the case of a cache miss, the method executes a user-specified function to return the value and save it in cache for future reads.

- Object serialization. The extension methods allow developers to specify what to cache. No custom serialization code is needed. The sample code uses a JSON serializer, but you can edit the code to use protobuf-net or other custom serializers for performance optimization.

- Thread management. All caching methods are designed to be asynchronous and work reliably with synchronous operations that generate the cache values.

- State management. The user-specified functions can optionally be stateful with simplified coding syntax, using static lambdas to avoid captured variables and per-usage delegate creation overheads.
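The four behaviors above can be sketched in a few lines. The following is a minimal illustration, not the DistributedCacheExtensions.cs sample itself: the class name `CacheAsideSketch` and the method name `GetOrCreateJsonAsync` are hypothetical, and the sketch assumes System.Text.Json for serialization and makes no attempt to handle concurrent misses.

```csharp
using System;
using System.Text.Json;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;

// Hypothetical helper for illustration only; names are assumptions,
// not the API of the sample project.
public static class CacheAsideSketch
{
    // Stateless overload: passes the factory itself as the state so the
    // forwarding lambda can be static (no captured variables).
    public static ValueTask<T> GetOrCreateJsonAsync<T>(
        this IDistributedCache cache, string key,
        Func<CancellationToken, ValueTask<T>> factory,
        DistributedCacheEntryOptions? options = null,
        CancellationToken cancellation = default)
        => cache.GetOrCreateJsonAsync<Func<CancellationToken, ValueTask<T>>, T>(
            key, factory, static (f, ct) => f(ct), options, cancellation);

    // Stateful overload: the caller hands in state explicitly instead of
    // capturing it in a closure.
    public static async ValueTask<T> GetOrCreateJsonAsync<TState, T>(
        this IDistributedCache cache, string key, TState state,
        Func<TState, CancellationToken, ValueTask<T>> factory,
        DistributedCacheEntryOptions? options = null,
        CancellationToken cancellation = default)
    {
        // Cache-aside: always try the cache first.
        byte[]? cached = await cache.GetAsync(key, cancellation);
        if (cached is not null)
        {
            return JsonSerializer.Deserialize<T>(cached)!;
        }

        // Cache miss: run the user-specified function, then store the result.
        T value = await factory(state, cancellation);
        byte[] payload = JsonSerializer.SerializeToUtf8Bytes(value);
        await cache.SetAsync(key, payload,
            options ?? new DistributedCacheEntryOptions(), cancellation);
        return value;
    }
}
```

With a helper of this shape, a call site that needs state could look like `await cache.GetOrCreateJsonAsync(key, city, static (c, ct) => LoadForecastAsync(c, ct))`, where `LoadForecastAsync` stands in for your own data access method.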

Example for using the sample code

The WeatherAPI-DistributedCache demo shows how to easily re-use the extension methods, with Azure Cache for Redis as an example.

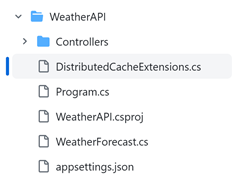

- Copy the DistributedCacheDemo/DistributedCacheExtensions.cs to your ASP.NET Core project in the same folder as the Program.cs file. Per Figure 1, the DistributedCacheExtensions.cs code file is placed alongside Program.cs in the project folder.

Figure 1: Copy and paste the DistributedCacheExtensions.cs file to your web project

- Add the Distributed Cache service in Program.cs by:

  - Adding Microsoft.Extensions.Caching.StackExchangeRedis to your project

  - Adding the following code:

    builder.Services.AddStackExchangeRedisCache(options =>
    {
        options.Configuration = builder.Configuration.GetConnectionString("MyRedisConStr");
        options.InstanceName = "SampleInstance";
    });

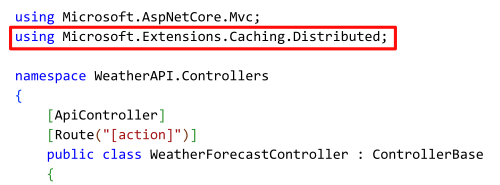

- Use the extension methods in the Weather Controller class that contains the business logic. Per Figure 2, include the using statement to access the extension methods.

Figure 2: add using statement in the class to access the extension methods

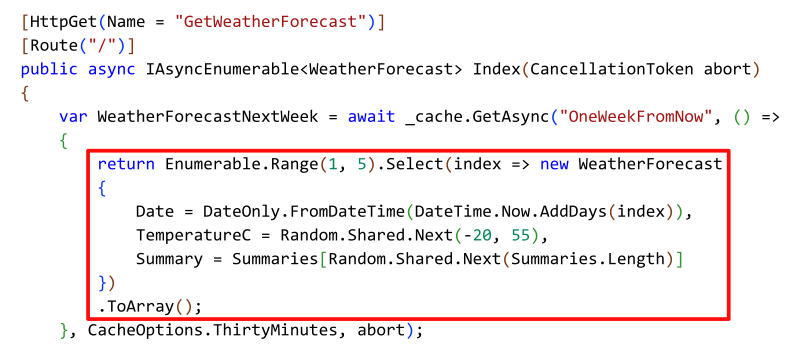

- Refer to the sample code to use the new GetAsync() methods. The only code needed is the business logic for generating weather forecast data. All caching operations are abstracted by the extension methods. Figure 3 shows a user defined method for generating the weather forecast for the next week, which is the only business logic required from developers as the input to use caching methods.

Figure 3: define the business logic to use the new GetAsync() extension methods

Notice that no object serialization code is required to get or set a WeatherForecast object in the cache. In fact, only the caching options and the cache key name are needed as parameters to use the GetAsync() method in a web application. Developers can focus entirely on the business logic without any “boilerplate” effort to get caching operations to work.
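For contrast, here is a rough sketch of the hand-written code that this kind of abstraction replaces, using only the existing IDistributedCache interface. The `WeatherForecast` shape, the `ManualForecastCache` class, the key name, and the five-minute expiration are all assumptions made for illustration; the demo project may differ.

```csharp
using System;
using System.Linq;
using System.Text.Json;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Extensions.Caching.Distributed;

// Hypothetical shape for illustration; the demo's type may differ.
public record WeatherForecast(DateTime Date, int TemperatureC, string? Summary);

public class ManualForecastCache
{
    private readonly IDistributedCache _cache;

    public ManualForecastCache(IDistributedCache cache) => _cache = cache;

    public async Task<WeatherForecast[]> GetForecastAsync(CancellationToken ct = default)
    {
        const string key = "weatherforecast"; // hypothetical key name

        // 1. Read raw bytes and deserialize by hand on a cache hit.
        byte[]? bytes = await _cache.GetAsync(key, ct);
        if (bytes is not null)
        {
            return JsonSerializer.Deserialize<WeatherForecast[]>(bytes)!;
        }

        // 2. The actual business logic.
        WeatherForecast[] forecast = Generate();

        // 3. Serialize by hand and store with explicit options.
        var options = new DistributedCacheEntryOptions
        {
            AbsoluteExpirationRelativeToNow = TimeSpan.FromMinutes(5) // assumed lifetime
        };
        await _cache.SetAsync(key, JsonSerializer.SerializeToUtf8Bytes(forecast), options, ct);
        return forecast;
    }

    // Placeholder forecast generator for the next seven days.
    private static WeatherForecast[] Generate() =>
        Enumerable.Range(1, 7)
            .Select(i => new WeatherForecast(DateTime.UtcNow.Date.AddDays(i), 20 + i, "Mild"))
            .ToArray();
}
```

Only step 2 is specific to the application; steps 1 and 3 are the repetitive parts that would otherwise be repeated for every cached type.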

Next steps

Try out the concept from our sample code today! You can provide feedback by leaving comments on this blog post or filing an issue in the ASP.NET Core repository. If you are looking for guidance on using a distributed cache to improve your cloud application’s performance, we have an example at Improving Web Application Performance Using Azure Cache for Redis.

A bit late to the party, but this looks great! Being able to just add caching without all the gory details is really a nice thing to have, will save time and bugs.

I noticed that there is currently no way to force eviction from the cache. Is this a design decision or just something that was set aside for the demo? Forced eviction has its uses, for instance to quicken eventual consistency after deletion or modification of some data.

If you mean “hard get, ignore cache on fetch, but store new value” – simply not added in demo; for now you could evict and get in turn

Very nice. I'm curious if you have best practices around cache key safety, with respect to multi-tenancy and data isolation.

For context, https://learn.microsoft.com/en-us/azure/architecture/guide/multitenant/service/cache-redis#isolation-models

In the "Cache per tenant" approach, your string cache key is fine, presumably because you would have gotten your IDistributedCache from DI or some factory that has already wired up that tenant's cache settings.

In the "Shared cache, shared database" and "Shared cache, database per tenant" approaches, a string cache key leaves a significant possibility of failure to appropriately prefix your cache key with a tenant discriminator.

Would it make sense to abstract/encapsulate cache key creation, say by only accepting...

Do you mean something beyond the existing RedisCacheOptions.InstanceName?

I’m confused … isn’t the same method on IMemoryCache called GetOrCreateAsync()?

Why was a different name chosen in this case?

Hi MaxiTB,

Thanks a lot for your question! You are right that for IMemoryCache our interface can be much simpler. But using an in-memory cache affects an application’s scalability in a cloud environment, i.e. sticky sessions are needed. The recommended distributed cache does not yet have an abstraction equivalent to IMemoryCache, for several reasons such as object serialization. The sample extension methods are intended to close the gap.

I suppose the question was just about the name chosen for the method: why is it GetAsync when there is an established GetOrCreateAsync naming convention?

Naming is hard; there’s also GetOrAdd[Async] (concurrent dictionary), AddOrGetExisting[Async] (memory cache), etc.

That’s something we’ll consider more acutely in any in-box version.

This is a nice change; a robust means of handling caches would help a lot for deduplicating work across a number of libraries.

I think that we could use another method of the form

<code>

This would allow setting a different expiration depending on the value that gets cached; an example behavior would be to cache error responses for a shorter time period than valid responses (still caching to avoid DoSing a backing service, but not caching as long in order to recover more quickly once the backing service recovers), or setting an expiration of "now" for cases...

Hi Derek,

Thanks a lot for your comments! Good suggestion on expiration time based on conditions. Similar question as I put in previous comments – what would be the conditions you are looking into, returned value type, input parameters, etc?

Hi

Does it handle cache stampede/cache storm when concurrent requests miss the cache?

Madhu

Hi Madhu,

Thanks for the comment. Good question on cache stampede/cache storm. Those are out of scope for the sample code, but those should definitely be supported with future implementations.

This is awesome. It would be great though if time-based caching libraries would start supporting the Stale Cache pattern, and then update these abstractions to support it as well. It can greatly help app fault tolerance and is very easy to understand and implement. Instead of only specifying an Expiry time, you also specify a Stale time. If an item is past the Stale time, then try fetching an updated value, and if you can't because the external service is unavailable then return the stale value for the time being. If you want the traditional functionality, then just set the...

This is great! Currently I have a lot of boilerplate code to fetch/add/remove things from cache.

However, I'd like to propose a new extension method that will test the value retrieved from getMethod and, if the evaluation succeeds, add it to the cache.

Currently my use case consists of a FieldValues record which is:

<code>

The getMethod function will check the database and, if it doesn't find the record, return FieldValues.Empty, which I don't want to be saved to the cache.

In the current extension method, when I call GetAsync again, it'll fetch an empty value from the cache and not call the getMethod again.

Hi Nicolas, thanks a lot for the comment!

We can definitely add a condition for when to cache the returned value. Are there any other conditions you think would be useful, e.g. input parameters?

The sample code is just a starting point. We will add the conditional caching feature when we build the support into .NET. But let us know if there is any urgency in your scenario.