Two months ago, at //Build 2018, we released ML.NET 0.1, a cross-platform, open source machine learning framework for .NET developers. We’ve gotten great feedback so far, and we would like to thank the community for its engagement as we continue to develop ML.NET together in the open.

We are happy to announce the latest version: ML.NET 0.3. This release supports exporting models to the ONNX format, enables creating new types of models with Factorization Machines, LightGBM, Ensembles, and LightLDA, and addresses a variety of issues and feedback we received from the community.

The main highlights of the ML.NET 0.3 release are explained below. For your convenience, here’s a short list of the sections in this blog post:

- Export of ML.NET models to the ONNX-ML format

- Added LightGBM as a learner for binary classification, multiclass classification, and regression

- Added Field-Aware Factorization Machines (FFM) as a learner for binary classification

- Added Ensemble learners enabling multiple learners in one model

- Added LightLDA transform for topic modeling

- Added One-Versus-All (OVA) learner for multiclass classification

Export of ML.NET models to the ONNX-ML format

ONNX is an open and interoperable standard format for representing deep learning and machine learning models, which enables developers to save trained models (from any framework) to the ONNX format and run them on a variety of target platforms.

ONNX is developed and supported by a community of partners such as Microsoft, Facebook, AWS, Nvidia, Intel, AMD, and many others.

With ML.NET 0.3, you can export certain ML.NET models to the ONNX-ML format.

ONNX models can be used to infuse machine learning capabilities into platforms like Windows ML, which evaluates ONNX models natively on Windows 10 devices, taking advantage of hardware acceleration, as illustrated in the following image:

The following code snippet shows how you can convert and export an ML.NET model to an ONNX-ML model file:

Here’s the original C# sample code

Added LightGBM as a learner for binary classification, multiclass classification, and regression

This addition wraps LightGBM and exposes it in ML.NET. LightGBM is a framework that helps you classify something as ‘A’ or ‘B’ (binary classification), classify something into one of multiple categories (multiclass classification), or predict a value based on historical data (regression).

Note that LightGBM can also be used for ranking (predicting the relevance of objects, such as determining which objects have a higher priority than others), but the ranking evaluator is not yet exposed in ML.NET.

In machine learning terms, LightGBM is a high-performance gradient boosting framework based on decision tree algorithms.
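To make “gradient boosting based on decision trees” concrete, here is a minimal Python sketch of the general technique: start from a constant prediction, then repeatedly fit a small tree (here a one-split “stump”) to the current residuals and add a damped copy of it to the model. It is an illustration under simplifying assumptions (squared loss, a single numeric input, depth-1 trees), not LightGBM’s algorithm and not the ML.NET API; the function names `fit_stump` and `gradient_boost` and the toy data are hypothetical.

```python
from typing import Callable, List

Predictor = Callable[[float], float]


def fit_stump(xs: List[float], residuals: List[float]) -> Predictor:
    """Fit a depth-1 regression tree: one threshold, a constant value on each side."""
    best = None
    for threshold in sorted(set(xs)):
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        if not left or not right:
            continue
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        error = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    if best is None:  # every x is identical, so no split exists: predict the mean
        mean = sum(residuals) / len(residuals)
        return lambda x: mean
    _, threshold, left_mean, right_mean = best
    return lambda x: left_mean if x <= threshold else right_mean


def gradient_boost(xs: List[float], ys: List[float], rounds: int = 50,
                   learning_rate: float = 0.3) -> Predictor:
    """Squared-loss gradient boosting: each new tree is fit to the current residuals."""
    base = sum(ys) / len(ys)  # start from a constant prediction
    trees: List[Predictor] = []

    def predict(x: float) -> float:
        return base + learning_rate * sum(tree(x) for tree in trees)

    for _ in range(rounds):
        # For squared loss, the negative gradient is simply the residual y - F(x).
        residuals = [y - predict(x) for x, y in zip(xs, ys)]
        trees.append(fit_stump(xs, residuals))
    return predict


model = gradient_boost([1, 2, 3, 4, 5, 6], [1.0, 1.0, 1.0, 5.0, 5.0, 5.0])
low, high = model(2), model(5)  # close to 1.0 and 5.0 respectively
```

Gradient boosting frameworks such as LightGBM apply the same idea to other loss functions (for example, log loss for classification) and add many optimizations for training speed.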

LightGBM is part of the Distributed Machine Learning Toolkit (DMTK) project at Microsoft.

The LightGBM repository includes comparison experiments showing good accuracy and speed, so it is a great learner to try out. It has also been used in winning solutions in various ML challenges.

See an example of using the LightGBM learner in ML.NET here.

Added Field-Aware Factorization Machines (FFM) as a learner for binary classification

FFM is another learner for binary classification (“Is this A or B?”). It is especially effective for training on very large, sparse datasets: because it is a streaming learner, it does not require the entire dataset to fit in memory.

FFM has been used to win various click prediction competitions such as the Criteo Display Advertising Challenge on Kaggle. You can learn more about the winning solution here.

Click-through rate (CTR) prediction is critical in advertising. Models based on Field-aware Factorization Machines (FFMs) outperform previous approaches such as Factorization Machines (FMs) in many CTR prediction tasks. In addition to click prediction, FFM is also useful in areas such as recommendations.
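The core of an FFM is how it scores a pair of features: each feature keeps a separate latent vector for every field (for example publisher, advertiser, or gender), and a pair (j1, j2) contributes the dot product of j1’s vector for j2’s field with j2’s vector for j1’s field, multiplied by both feature values. The Python sketch below computes that pairwise term and turns it into a click probability with a sigmoid. It illustrates the FFM model equation only, assuming hand-picked weights: there is no training loop, the linear and bias terms that practical implementations typically add are omitted, and the feature names, fields, and numbers are hypothetical. It is not the ML.NET API.

```python
import math
from typing import Dict, List

# latent[feature][field] is that feature's latent vector for interacting with `field`.
Latent = Dict[str, Dict[str, List[float]]]


def ffm_pairwise_score(features: Dict[str, float], latent: Latent,
                       field_of: Dict[str, str]) -> float:
    """Sum over feature pairs (j1, j2) of <w[j1][field(j2)], w[j2][field(j1)]> * x_j1 * x_j2."""
    items = list(features.items())
    total = 0.0
    for a in range(len(items)):
        j1, x1 = items[a]
        for b in range(a + 1, len(items)):
            j2, x2 = items[b]
            v1 = latent[j1][field_of[j2]]  # j1's vector for j2's field
            v2 = latent[j2][field_of[j1]]  # j2's vector for j1's field
            total += sum(p * q for p, q in zip(v1, v2)) * x1 * x2
    return total


def ffm_click_probability(features: Dict[str, float], latent: Latent,
                          field_of: Dict[str, str]) -> float:
    """Squash the pairwise score into a probability with the logistic function."""
    return 1.0 / (1.0 + math.exp(-ffm_pairwise_score(features, latent, field_of)))


# Hypothetical example: one ad impression with three active one-hot features.
field_of = {"ESPN": "publisher", "Nike": "advertiser", "Male": "gender"}
latent = {
    "ESPN": {"advertiser": [0.5, 0.1], "gender": [0.2, 0.0]},
    "Nike": {"publisher": [0.4, 0.2], "gender": [0.3, 0.3]},
    "Male": {"publisher": [0.1, 0.5], "advertiser": [0.2, 0.1]},
}
impression = {"ESPN": 1.0, "Nike": 1.0, "Male": 1.0}
score = ffm_pairwise_score(impression, latent, field_of)  # 0.22 + 0.02 + 0.09 = 0.33
p_click = ffm_click_probability(impression, latent, field_of)
```

Keeping one vector per (feature, field) pair is what distinguishes FFM from a plain factorization machine, where each feature has a single latent vector shared across all interactions.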

Here’s how you add an FFM learner to the pipeline.

For additional sample code using FFM in ML.NET, check here and here.

Added Ensemble learners enabling multiple learners in one model

Ensemble learners enable using multiple learners in one model. Specifically, this update in ML.NET targets binary classification, multiclass classification, and regression learners.

Ensemble methods use multiple learning algorithms to obtain better predictive performance than any of the constituent models could achieve on its own.

As an example, the EnsembleBinaryClassifier learner could train both FastTreeBinaryClassifier and AveragedPerceptronBinaryClassifier and average their predictions to get the final prediction.

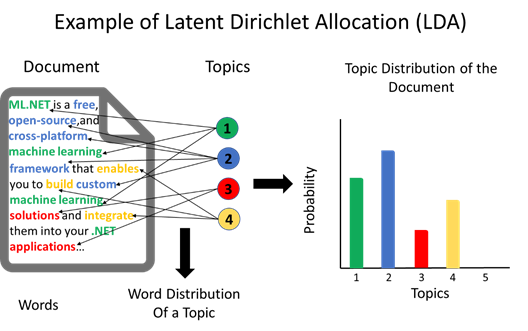
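As a rough illustration of the averaging described above, the following Python sketch combines two binary classifiers by averaging their predicted probabilities and thresholding at 0.5. The two member models are hypothetical hand-written stand-ins (a single threshold rule and a fixed logistic model), not ML.NET’s FastTreeBinaryClassifier or AveragedPerceptronBinaryClassifier, and simple averaging is only one way an ensemble can combine its members.

```python
import math
from typing import Callable, List, Sequence

# A scorer maps a feature vector to P(label = true).
Scorer = Callable[[Sequence[float]], float]


def average_ensemble(members: List[Scorer]) -> Scorer:
    """Return a scorer whose output is the mean of the members' outputs."""
    def score(x: Sequence[float]) -> float:
        return sum(member(x) for member in members) / len(members)
    return score


# Hypothetical stand-in for a tree-based model: one threshold rule.
def stump_model(x: Sequence[float]) -> float:
    return 0.9 if x[0] > 0.5 else 0.2


# Hypothetical stand-in for a linear model with fixed weights.
def linear_model(x: Sequence[float]) -> float:
    return 1.0 / (1.0 + math.exp(-(2.0 * x[0] - 1.0 * x[1])))


ensemble = average_ensemble([stump_model, linear_model])
probability = ensemble([1.0, 1.0])  # (0.9 + sigmoid(1.0)) / 2
prediction = probability >= 0.5     # final binary label
```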

Added LightLDA transform for topic modeling

A transform is a component that transforms data in the dataset used to train the model. For example, you can transform a column from text to a vector of numbers.
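As a minimal example of turning a text column into a vector of numbers, the Python sketch below builds a vocabulary and maps each text to word counts (a “bag of words”). The helper names and sample texts are hypothetical, and real text transforms typically do more (for example n-grams, stop-word removal, and normalization); this only illustrates the idea of a transform.

```python
import re
from collections import Counter
from typing import Dict, List

TOKEN = re.compile(r"[a-z']+")


def build_vocabulary(texts: List[str]) -> Dict[str, int]:
    """Assign each distinct lower-cased word an index, in order of first appearance."""
    vocab: Dict[str, int] = {}
    for text in texts:
        for token in TOKEN.findall(text.lower()):
            vocab.setdefault(token, len(vocab))
    return vocab


def text_to_vector(text: str, vocab: Dict[str, int]) -> List[float]:
    """Turn one text value into word counts; words outside the vocabulary are ignored."""
    vector = [0.0] * len(vocab)
    for token, count in Counter(TOKEN.findall(text.lower())).items():
        if token in vocab:
            vector[vocab[token]] = float(count)
    return vector


vocab = build_vocabulary(["Great movie", "Terrible movie, great cast"])
review_vector = text_to_vector("great great cast", vocab)
```

Here the vocabulary is {great: 0, movie: 1, terrible: 2, cast: 3}, so the sample text becomes the vector [2.0, 0.0, 0.0, 1.0]. Word-count vectors like this are also the kind of input that topic models such as LDA work from.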

The LightLDA transform is an implementation of Latent Dirichlet Allocation (LDA), which infers topical structure from text data.

LDA is a generative statistical model that allows sets of observations to be explained by unobserved groups, which explain why some parts of the data are similar. For example, if observations are words collected into documents, LDA posits that each document is a mixture of a small number of topics and that each word’s presence is attributable to one of the document’s topics.
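LDA is usually described by the generative process it assumes. The Python sketch below runs that process forward for one document: draw the document’s topic mixture from a Dirichlet distribution, then, for each word, pick a topic from that mixture and a word from that topic. It is a simplified illustration under stated assumptions: the two topics and their word probabilities are hypothetical and fixed by hand (full LDA also places a Dirichlet prior on them), and it performs no inference. Inferring topics from observed documents, which is what the LightLDA transform does, means working backwards from the words; LightLDA’s contribution is a fast sampling algorithm for that step, which is not shown here.

```python
import random
from typing import Dict, List, Sequence, Tuple


def sample_dirichlet(alpha: Sequence[float], rng: random.Random) -> List[float]:
    """Draw from Dirichlet(alpha) by normalizing independent Gamma(alpha_k, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def generate_document(
    topic_word: List[Dict[str, float]],  # topic_word[k][w] = P(word w | topic k)
    alpha: Sequence[float],
    length: int,
    rng: random.Random,
) -> Tuple[List[str], List[int], List[float]]:
    theta = sample_dirichlet(alpha, rng)  # this document's mixture of topics
    words: List[str] = []
    topics: List[int] = []
    for _ in range(length):
        # Each word is attributed to one topic drawn from the document's mixture...
        k = rng.choices(range(len(theta)), weights=theta)[0]
        # ...and the word itself is drawn from that topic's word distribution.
        vocabulary = list(topic_word[k])
        word = rng.choices(vocabulary, weights=[topic_word[k][w] for w in vocabulary])[0]
        topics.append(k)
        words.append(word)
    return words, topics, theta


topic_word = [
    {"goal": 0.4, "match": 0.4, "team": 0.2},      # a hypothetical "sports" topic
    {"vote": 0.5, "election": 0.3, "party": 0.2},  # a hypothetical "politics" topic
]
words, topics, theta = generate_document(topic_word, [0.5, 0.5], 10, random.Random(0))
```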

The LightLDA transform can infer a set of topics from the words in the dataset, as the following image illustrates.

The implementation of LightLDA in ML.NET is based on this paper. There is a distributed implementation of LightLDA here.

Added One-Versus-All (OVA) learner for multiclass classification

OVA (sometimes known as One-Versus-Rest) is an approach to using binary classifiers in multiclass classification problems.

While some binary classification learners in ML.NET natively support multiclass classification (e.g. Logistic Regression), others do not (e.g. Averaged Perceptron). OVA makes it possible to use the latter group for multiclass classification as well.
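The following Python sketch shows the OVA idea with a from-scratch averaged perceptron as the binary learner: train one “this class versus everything else” model per class, then predict the class whose model gives the highest score. The helper names and the toy three-cluster data are hypothetical, and this is not how ML.NET’s OVA or AveragedPerceptronBinaryClassifier are implemented or invoked.

```python
from typing import Dict, List, Sequence, Tuple

Model = Tuple[List[float], float]  # (weights, bias)


def train_averaged_perceptron(xs: List[Sequence[float]], ys: List[int], epochs: int = 20) -> Model:
    """Binary averaged perceptron for labels in {-1, +1}."""
    dim = len(xs[0])
    w, b = [0.0] * dim, 0.0
    w_sum, b_sum, steps = [0.0] * dim, 0.0, 0
    for _ in range(epochs):
        for x, y in zip(xs, ys):
            margin = y * (sum(wi * xi for wi, xi in zip(w, x)) + b)
            if margin <= 0:  # mistake: nudge the boundary toward the correct side of x
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
            # Accumulate after every example so the result is the average weight vector.
            w_sum = [s + wi for s, wi in zip(w_sum, w)]
            b_sum += b
            steps += 1
    return [s / steps for s in w_sum], b_sum / steps


def score(model: Model, x: Sequence[float]) -> float:
    w, b = model
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def train_one_versus_all(xs: List[Sequence[float]], labels: List[str],
                         epochs: int = 20) -> Dict[str, Model]:
    """Train one binary model per class: that class (+1) versus all others (-1)."""
    return {
        c: train_averaged_perceptron(xs, [1 if label == c else -1 for label in labels], epochs)
        for c in sorted(set(labels))
    }


def predict_one_versus_all(models: Dict[str, Model], x: Sequence[float]) -> str:
    """Pick the class whose binary model gives the highest score."""
    return max(models, key=lambda c: score(models[c], x))


xs = [(0, 5), (1, 6), (-1, 5), (5, 0), (6, 1), (5, -1), (-5, -5), (-6, -4), (-4, -6)]
labels = ["a", "a", "a", "b", "b", "b", "c", "c", "c"]
models = train_one_versus_all(xs, labels)
predictions = [predict_one_versus_all(models, p) for p in [(0, 6), (6, 0), (-5, -6)]]
```

Picking the highest raw score assumes the binary models’ scores are comparable; when they are not, calibrating each model’s output into a probability first is a common refinement.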

Previous ML.NET releases

For reference, here’s a list of the minor releases we have published so far:

Sample apps at GitHub

Check out the ML.NET samples at GitHub so you can get started faster. This GitHub repo for code examples and sample apps will be growing significantly in the upcoming months, so stay tuned!

Help shape ML.NET for your needs

If you haven’t already, try out ML.NET; you can get started here. We look forward to your feedback and welcome you to file issues with any suggestions or enhancements in the GitHub repo.

https://github.com/dotnet/machinelearning

This blog was authored by Cesar de la Torre.

Thanks,

ML.NET Team
