We talk with many customers who love the command line. Donovan Brown maintains the community VSTeam module for folks who love PowerShell, but we’re pleased to announce that we now have a public preview of the Azure DevOps extension for the Azure CLI, which is available cross-platform.

The extension lets you experience Azure DevOps from the command line, bringing the ability to manage Azure DevOps right to your fingertips! You can work in a streamlined, task- and command-oriented way without having to click through GUI flows, which gives you a faster and more flexible way to interact with the service.

This looks exciting! How do I get started?

- Install Azure CLI: Follow the instructions available on Microsoft Docs to set up Azure CLI in your environment. At a minimum, your Azure CLI version must be 2.0.49. You can use

az -versionto validate. - Add the Azure DevOps extension:

az extension add --name azure-devopsYou can use eitheraz extension listoraz extension show --name azure-devopsto confirm the installation. - Sign in: Run

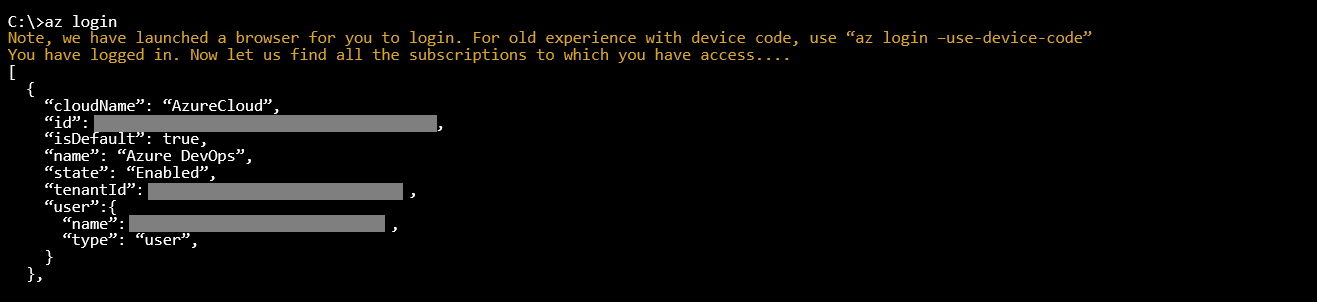

az loginto sign in. - Configure defaults: Although you can provide the organization and project for each command, we recommend you set these as defaults in configuration for seamless commanding.

az devops configure --defaults organization=https://dev.azure.com/contoso project=ContosoWebApp

Now you are all good to go!
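If you set up several machines, the version check and extension install from the steps above can be scripted. The sketch below is an illustration, not an official setup script: the function names are hypothetical, it assumes the first relevant line of `az --version` has the form `azure-cli  <version>`, and it relies on `sort -V` (available in GNU coreutils) to compare versions.

```shell
# Minimum Azure CLI version stated in this post.
MIN_AZ_VERSION="2.0.49"

# version_ge A B: succeeds if version A >= version B (uses `sort -V`).
version_ge() {
  [ "$(printf '%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Read the installed version from the "azure-cli" line of `az --version`.
installed_az_version() {
  az --version | awk '/^azure-cli/ { print $2; exit }'
}

# Fail if the CLI is too old; otherwise add the extension only if it is missing.
ensure_devops_extension() {
  local current
  current="$(installed_az_version)"
  if ! version_ge "$current" "$MIN_AZ_VERSION"; then
    echo "Azure CLI '$current' is older than $MIN_AZ_VERSION; please upgrade." >&2
    return 1
  fi
  if ! az extension show --name azure-devops >/dev/null 2>&1; then
    az extension add --name azure-devops
  fi
}
```

Running `ensure_devops_extension` a second time is harmless, because `az extension show` succeeds once the extension is installed and the add step is skipped.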

Example

Let’s look at an example that uses the Azure DevOps extension to view and trigger builds for an Azure Pipeline.

- Log in to your Azure account

- Configure defaults

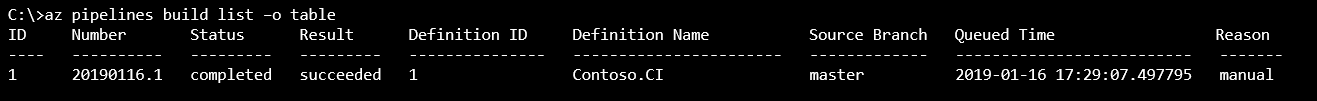

- View the list of builds

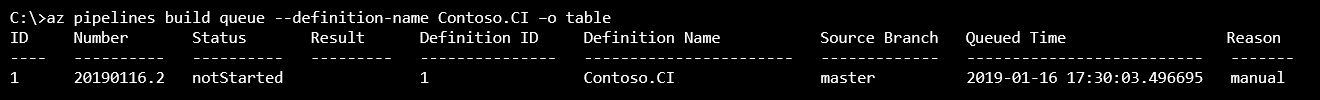

- Queue a build
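The screenshots above carry the commands for these steps, and the final step has none. A sketch of equivalent commands follows, wrapped in small shell functions. The organization and project match the defaults configured earlier; the function names and the pipeline name passed to `queue_build` are hypothetical placeholders.

```shell
# Sketch of the walkthrough above; function names are illustrative only.
ORG_URL="https://dev.azure.com/contoso"
PROJECT="ContosoWebApp"

# Steps 1 and 2: sign in, then store organization and project as defaults.
setup_defaults() {
  az login
  az devops configure --defaults organization="$ORG_URL" project="$PROJECT"
}

# Step 3: show up to five builds from the default project as a table.
list_builds() {
  az pipelines build list --top 5 --output table
}

# Step 4: queue a build of the pipeline (build definition) named in $1.
queue_build() {
  az pipelines build queue --definition-name "$1"
}
```

For example, after `setup_defaults`, running `list_builds` shows recent builds, and `queue_build ContosoWebApp-CI` would queue a run of a pipeline with that (hypothetical) name.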

For documentation and more information on the commands currently supported, take a look at the Azure DevOps extension documentation. If you have changes you’d like to see or suggestions for features, we’d love your feedback in our Azure DevOps extension GitHub repo; we’re taking PRs!

Thanks for adding this feature!


Hello George,

I am trying to run a .vbs file stored on my machine from the Azure DevOps command line through a pipeline, but I am not able to access my machine’s drive.

Could you please help me out? How can we provide a path that Azure DevOps recognizes? For example, if I write “C:\VSTS”, it is not accepted and it searches in the cloud.

Thanks

Amir

This is great, gives a good starting point.

The only issue I have is that you can export (on screen) with `--output table` or `--output yaml` when using pipelines variable-group, but once exported this way, can you use the YAML etc. to create a file that imports the Library groups instead of entering them manually via the Azure DevOps console/website?

If not, what is the best way to import multiple names/values for a variable group? Please don’t say manually. 🙂

Hey Tom,

Thank you for reaching out! I am not sure I understood your question correctly. If it is more about "how do I manage variables and variable groups for a pipeline?", then yes, we have the `az pipelines variable` and `az pipelines variable-group` commands, which let you automate variable management using the CLI.

Feel free to create an issue in our GitHub repo Azure/azure-devops-cli-extension in case the above clarification does not suffice.

Hi George,

Thanks for the reply. It was more about adding a large range of names and values to a new pipeline variable group that you are creating at the same time. Rather than entering these each time, could a YAML file be used, if this exists? Without it, you have to do the following each time.

For example:

az pipelines variable-group create --name Production Backups --group-id 4 -f c:\Azure\testyaml.yaml

az pipelines variable-group create: error: the following arguments are required: --variables

As `--variables` is required, this means entering them every time.

Hope that has made it clearer.

Tom

Thanks Tom, that provides more clarity.

To answer your question on whether you can create a large range of names and values from a file using the `az pipelines variable-group` command or any other command: unfortunately, the answer is no.
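Until such an option exists, one possible workaround for Tom’s scenario is a small wrapper that reads `KEY=VALUE` lines from a file and passes them all to the required `--variables` argument. This is a sketch under assumptions, not a supported import feature: the file format and the function name `create_group_from_file` are hypothetical, and secret values are better added separately with the `az pipelines variable-group variable` commands.

```shell
# Hypothetical helper: create a variable group from a file of KEY=VALUE lines.
# Blank lines and lines starting with '#' are skipped.
create_group_from_file() {
  local group_name="$1" pairs_file="$2" pair
  set --   # reuse the positional parameters as the list of KEY=VALUE arguments
  # `|| [ -n "$pair" ]` keeps a final line that has no trailing newline.
  while IFS= read -r pair || [ -n "$pair" ]; do
    case "$pair" in
      ''|'#'*) continue ;;
    esac
    set -- "$@" "$pair"
  done < "$pairs_file"
  if [ "$#" -eq 0 ]; then
    echo "No KEY=VALUE pairs found in $pairs_file" >&2
    return 1
  fi
  az pipelines variable-group create --name "$group_name" --variables "$@"
}
```

Usage might look like `create_group_from_file "Production Backups" ./backups.env`, where `backups.env` holds one `KEY=VALUE` pair per line.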

Follow-up question: I am assuming you are looking at an automation scenario. Could you confirm this and provide some more detail on your scenario? To give further context to the question, are you

a. Creating the pipelines interactively, where every time you need a pipeline you manually run `az pipelines create` and expect to...

Hello George,

Thank you for the steps; they work fine on my local machine. However, they fail in our Argo CI pipeline.

My intention is to trigger an Azure DevOps pipeline when the Argo pipeline finishes running. Following your steps, I am using except with a service principal, like -

This step succeeds, but when I try or any other command, I get the error -

Can you please help?

Hey Swapnil,

We have a similar issue on our GitHub repo: https://github.com/Azure/azure-devops-cli-extension/issues/905. The Azure DevOps extension for the Azure CLI does not support service principals. If you are using a service principal for automated login, you can instead use `az devops login` with a PAT to access Azure DevOps. This documentation explains more: Log in via PAT.
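For a CI job like the Argo scenario above, the PAT-based sign-in can be scripted roughly as follows. This is a sketch: it assumes the CI system exposes the token in an environment variable named `AZDO_PAT` (a hypothetical name), and it relies on `az devops login` reading the token from standard input when one is piped in. The trigger helper uses `az pipelines run`; both function names are illustrative.

```shell
# Sketch: non-interactive sign-in for a CI job, assuming the PAT is in $AZDO_PAT.
devops_login_with_pat() {
  local org_url="$1"
  if [ -z "${AZDO_PAT:-}" ]; then
    echo "AZDO_PAT is not set" >&2
    return 1
  fi
  # Pipe the token on stdin so it never appears on the command line.
  printf '%s' "$AZDO_PAT" | az devops login --organization "$org_url"
}

# Queue a run of an existing pipeline by name once signed in.
trigger_pipeline() {
  az pipelines run --organization "$1" --project "$2" --name "$3"
}
```

Alternatively, exporting the token as `AZURE_DEVOPS_EXT_PAT` lets the extension pick it up without an explicit `az devops login`.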

So this extends the Azure CLI with Azure DevOps Services; what about on-premises Azure DevOps Server? Is there a CLI available for that?

Apologies for the delayed response. We do not have any plans as of now to extend this capability to Azure DevOps server.

Nice and convenient. The only thing I cannot find is how to deploy to a specific stage. I can set up a stage (triggered after release) and create a release from the CLI, but I do not want this. I would like to deploy to a stage that is manually triggered. Does the CLI support this? Furthermore, can I set up a scheduled release trigger using the CLI? Thank you for the post.

Apologies for the delayed response. We do not have a command to deploy to a specific stage. Feel free to raise a feature request on our GitHub repo, Azure/azure-devops-cli-extension, and we will take a look. You are also welcome to contribute to the effort yourself; we can guide you if you run into any challenges.

I’m using azure-cli 2.0.59. “az -version” gives an error, while “az --version” works as expected.

I already had 2.0.32, per “az -version”. I downloaded and installed 2.0.58, rebooted, and… “az -version” errors for missing _command_package and displays the usage text. The extension command also errors; adding --debug claims I’m still running 2.0.32, although Programs & Features shows 2.0.58. I uninstalled, rebooted, verified that Programs & Features doesn’t show Azure CLI installed, tried the commands anyway, and got the same results. So 2.0.32 is obviously somewhere on my system, although I can’t find any evidence of it other than that running “az” doesn’t give a command-not-found error.

Hi John,

Sorry to hear that the client version is not being refreshed properly using the download and install method. It did work for me when I tried to move from 2.0.48 to 2.0.52. Are you still facing this issue?

You can run the azure devops extension on cloud shell (https://shell.azure.com) as well.

Thanks,

George Verghese.


That’s great!

How about repo check-in and checkout?

Please elaborate.

Thanks

For Git repository operations you should use the Git command line, and for TFVC operations the tf command line; we’re not planning on duplicating that functionality within the Azure CLI. Was there anything specific you needed within the Azure CLI context?

For the previous VSTS CLI, I have a PowerShell module called posh-vsts-cli that integrates it into PowerShell via tab completion and by returning rich objects. I plan to update it to support the new CLI. Watch the repo of the original module for an announcement: https://github.com/bergmeister/posh-vsts-cli

Awesome! Looking forward to this extension. However, Azure CLI does support an interactive mode which places you in an interactive shell with auto-completion, command descriptions, and examples.

Have a look at https://docs.microsoft.com/en-us/cli/azure/interactive-azure-cli?view=azure-cli-latest

Very cool – auto-complete help is a really nice feature to add to the shell.