This blog post was co-authored by Nikisha Reyes-Grange, Senior Product Marketing Manager, Azure Marketing.

UPDATE: Serverless preview support for Azure Cosmos DB APIs for MongoDB, Cassandra, Gremlin, and Table began November 11, 2020.

We are beyond excited to announce that Azure Cosmos DB serverless is now available in preview on the Core (SQL) API, with support for the APIs for MongoDB, Gremlin (graph), Table, and Cassandra coming soon. This new consumption-based model lets you use your containers cost-effectively, without having to provision any throughput.

What is serverless on Azure Cosmos DB?

Announced at the Build conference this past May, serverless radically changes the way you use and pay for your Azure Cosmos DB resources.

Until now, your only option for running a workload on Azure Cosmos DB has been to provision throughput. This throughput, expressed in Request Units per second (RU/s), is dedicated to your usage and available for your database operations to consume. This model comes with low-latency and high-availability SLAs, making it a great fit for high-traffic applications needing guaranteed performance – but not as well-suited to light traffic scenarios.

Serverless is a cost-effective option for databases with sporadic traffic patterns and modest bursts. When your resources sit idle most of the time, it doesn’t make sense to provision and pay for unneeded per-second capacity. As a consumption-based option, serverless eliminates the concept of provisioned throughput and instead charges you for the RUs your database operations consume.

Check this video for a visual comparison between provisioned throughput and serverless:

Differences with provisioned throughput

Serverless containers expose the same capabilities as containers created in provisioned throughput mode. This means that you manage your resources and read, write and query your data in exactly the same way. Serverless accounts and containers differ from those using provisioned throughput in the following ways:

| | Provisioned throughput | Serverless |
| --- | --- | --- |
| Maximum number of Azure regions per account | unlimited | 1 |
| Maximum storage per container | unlimited | 50 GB |
| Throughput burstability | unlimited | moderate |

Note: Some of these limitations may be eased or removed as serverless becomes generally available, and your feedback will help us decide! Please reach out and tell us more about your serverless experience.

When generally available, serverless will also offer different performance characteristics as explained in this article.

Use-cases

Because serverless is much more cost-effective in situations where you deal with light traffic and don’t need provisioned capacity, it naturally fits the following use-cases:

- Getting started with Azure Cosmos DB

- Development, testing and prototyping of new applications

- Running small-to-medium applications with intermittent traffic that is hard to forecast

- Integrating with serverless compute services like Azure Functions

To illustrate the advantages of using serverless for these scenarios, let’s take the example of a workload that is expected to burst to a maximum of 500 RU/s and consume a total of 20,000,000 RUs over a month.

- In provisioned throughput mode, you would have to provision a container with 500 RU/s, billed at $0.008 per 100 RU/s per hour over the 730 hours of a month, for a monthly cost of: $0.008 * 5 * 730 = $29.20

- In serverless mode, you would only pay for the consumed RUs, billed at $0.25 per million RUs: $0.25 * 20 = $5.00

(not accounting for the storage cost, which is the same between the 2 modes)
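The comparison above can be sketched in a few lines of Python. This is only an illustration: the rates are read off the example ($0.008 taken as the hourly price of 100 RU/s, $0.25 as the price of one million RUs, 730 hours per month), the function and constant names are hypothetical, and actual prices vary by region and over time.

```python
# Rates taken from the example above (assumed units, USD).
PROVISIONED_PRICE_PER_100_RUS_PER_HOUR = 0.008
SERVERLESS_PRICE_PER_MILLION_RUS = 0.25
HOURS_PER_MONTH = 730


def provisioned_monthly_cost(provisioned_rus: float) -> float:
    """Monthly cost of keeping `provisioned_rus` RU/s provisioned all month."""
    units_of_100_rus = provisioned_rus / 100
    return PROVISIONED_PRICE_PER_100_RUS_PER_HOUR * units_of_100_rus * HOURS_PER_MONTH


def serverless_monthly_cost(consumed_rus: float) -> float:
    """Monthly cost of consuming `consumed_rus` request units in serverless mode."""
    return SERVERLESS_PRICE_PER_MILLION_RUS * (consumed_rus / 1_000_000)


def break_even_rus(provisioned_rus: float) -> float:
    """Monthly RU consumption at which both modes cost the same."""
    monthly = provisioned_monthly_cost(provisioned_rus)
    return monthly / SERVERLESS_PRICE_PER_MILLION_RUS * 1_000_000


# The workload from the example: bursts to 500 RU/s, 20,000,000 RUs per month.
print(f"Provisioned: ${provisioned_monthly_cost(500):.2f}")
print(f"Serverless:  ${serverless_monthly_cost(20_000_000):.2f}")
print(f"Break-even:  {break_even_rus(500):,.0f} RUs per month")
```

Under these assumed rates, a workload peaking at 500 RU/s would need to consume roughly 116.8 million RUs in a month before provisioned throughput became the cheaper option (storage excluded in both cases).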

Getting started

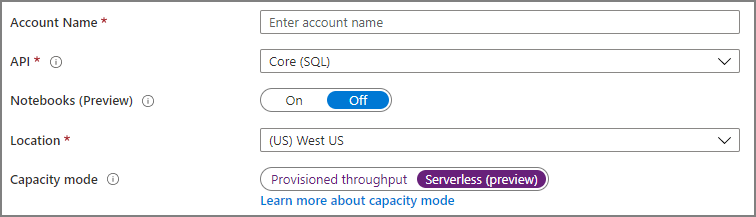

You must create a new Azure Cosmos DB account from the Azure portal to get started with serverless. When creating your new account, select Core (SQL) as the API type, then Serverless (preview) under Capacity mode:

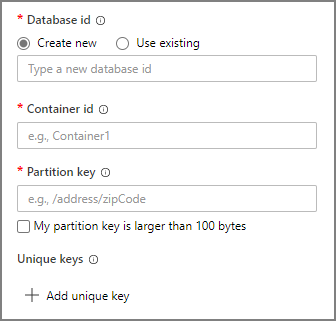

Once your new serverless account is created, you manage your Azure Cosmos DB resources and data just like you would in provisioned throughput mode. The only difference is that you don’t have to specify any throughput when creating a container:
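For readers who prefer code to the portal, here is a minimal sketch of the same idea using the `azure-cosmos` Python SDK (an assumption, since this post only covers the portal). The database name, container name, and partition key path are placeholders. The point is simply that the call to `create_container_if_not_exists` omits the optional `offer_throughput` argument.

```python
from typing import Any


def create_container_without_throughput(
    database: Any, container_id: str, partition_key: Any
) -> Any:
    """Create (or fetch) a container without passing any throughput value.

    `database` is expected to behave like azure.cosmos.DatabaseProxy.
    """
    # No offer_throughput argument here: on a serverless account there is
    # no throughput to provision.
    return database.create_container_if_not_exists(
        id=container_id,
        partition_key=partition_key,
    )


def connect_and_create(endpoint: str, key: str) -> Any:
    """Hypothetical wiring; requires `pip install azure-cosmos`."""
    # Imported lazily so the helper above can be used without the SDK installed.
    from azure.cosmos import CosmosClient, PartitionKey

    client = CosmosClient(endpoint, credential=key)
    database = client.create_database_if_not_exists(id="demo-db")  # placeholder name
    return create_container_without_throughput(
        database,
        "orders",  # placeholder container name
        PartitionKey(path="/customerId"),  # placeholder partition key path
    )
```

Calling `connect_and_create` with the endpoint and key of a serverless account should return a container proxy that you can read from and write to as usual.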

We can’t wait to see what you will build on Azure Cosmos DB serverless! Get started today with the following resources:

Just to check that I’ve understood correctly: the 50 GB limit is per container, so I could have more than one container in the same database, or more than one database, couldn’t I?

My idea would be to split the data by year into different containers so that I wouldn’t reach the 50 GB limit. Would that be possible?

Looking forward to the official release.

Hi Antonio, yes you’re correct, that is possible.

Great news! I love Cosmos DB. One question though: will it be possible to start with serverless to prototype the application and then switch to provisioned throughput later, when the app takes off and leaves the prototype phase? Or do I have to do some manual migration work?

Cheers!

Thanks Marco! For now you would have to migrate your data to a provisioned throughput account. We’re evaluating whether it will be possible to transform a serverless account into a provisioned throughput one at GA; if not, we’ll provide clear guidance on how to easily migrate your data.

Would be really awesome if you could switch seamlessly.

Both directions provisioned -> serverless or serverless -> provisioned

Good suggestion!

Great news! Do you have a date planned for the release version?

We have not announced a GA date.

Are there any cold starts using cosmosdb serverless?

There is no lag due to cold starts.

This is awesome news! When will MongoDB API support be available?

Support for all other APIs is coming very soon – probably in a couple of months or less.

Is Cassandra also coming in a couple of months? Will we be able to use it in production?

(replying here as apparently I can’t reply to your last comment below!)

We plan to extend serverless support to all APIs next month (October).

Yes we’ll support all APIs – including Cassandra – very soon. Like any Azure preview, serverless is currently not covered by our SLA and is not recommended for production workloads until it goes GA.

Thanks, we are waiting for Cassandra. Do you have a tentative date, please?

Are the 5,000 RU/s and 50 GB limits self-imposed, or are they limits of the technology? It would be great to see them removed. You hint that they may be removed.

These are technical limits that we believe are suitable for dev/test activities and small apps. Indeed, we may increase them based on the feedback we’ll get from our users during the preview.