Abstract

Data Collection has become a daunting task with the ever-growing amount of data. The growth of data makes reporting tasks an operational challenge due to the processing time requirements imposed by technical limitations in many business intelligence tools. The paper outlines the incremental refresh capability of Microsoft’s Power BI and its impact on analytics processing and reporting.

Scenario Overview

In this blog post, we want to document a customer engagement we worked on where we learned about a unique feature in Power BI that can be used when using Azure Cosmos DB’s SQL API. The result of the solution here provided performance benefits that were impressive, and we wanted to share our learnings.

We worked with a large IoT customer that was doing daily reports on all of their networks in a NoSQL database. As the NoSQL database grew, it caused massive increases in processing time by the Business Intelligence tools they were using. Not much different from many state-of-the-art BI tools, an incremental refresh was not possible. In short, the complete contents of the database were queried and fetched for daily reporting.

To address this challenge, the recommendation was to leverage Azure Cosmos DB SQL API along with Power BI and implement incremental refresh. Power BI can connect directly to Azure Cosmos DB to build analytics and visualize information. With Power BI, there are 2 modes of connectivity: 1. direct query and 2. import. Each has its pros and cons and depending on your scenario, one might make more sense than the other if you don’t fall neatly into one or the other, then you can leverage a combination of both modes known as composite models.

Import Mode

In this article, we will focus on import mode to unlock all the features of Power BI. The contents below will provide a step-by-step on how to set up Power BI incremental refresh when connecting to an Azure Cosmos DB. Please note, at the time of writing this post the ability to do incremental refresh is only available with the Azure Cosmos DB SQL API. Azure Synapse Link for Azure Cosmos DB is a good use case for overcoming the API limitations plus it can also be used to set up incremental loads to Power BI if needed.

The value of Power BI incremental refresh is that it can avoid doing a full refresh of the data. It will only fetch data that has been changed or added from the previous data load. There are many advantages to implementing the Power BI incremental refresh including:

- More efficient processing and faster refresh operations

- Faster PBI publishing after a refresh

- More reliability since there are fewer long-running connections

Getting started

Let’s begin with a step-by-step incremental refresh setup between Power BI and Azure Cosmos DB.

These are the major steps we will go through:

|

|

Resources

If you want to reproduce the architecture in this blog, you can set up your own incremental refresh with Azure Cosmos DB by following this GitHub Repo that contains files using a demo of Power BI’s Incremental Refresh capability. The following tools were used:

- Data Simulation: mgenerate.js/Chance.js (template to generate this is provided)

- Azure Data Factory: Loaded Azure Cosmos DB with simulated data

- Azure Blob Storage: Staging for data to be loaded into Azure Cosmos DB

- Azure Cosmos DB: Utilized Cosmos SQL API for our Document Model

- Power BI Desktop: Created Data Model

- Power BI System: Deployed Data Model, could be used for Data Flow

- DAX Studio: Used to display partitions proving incremental

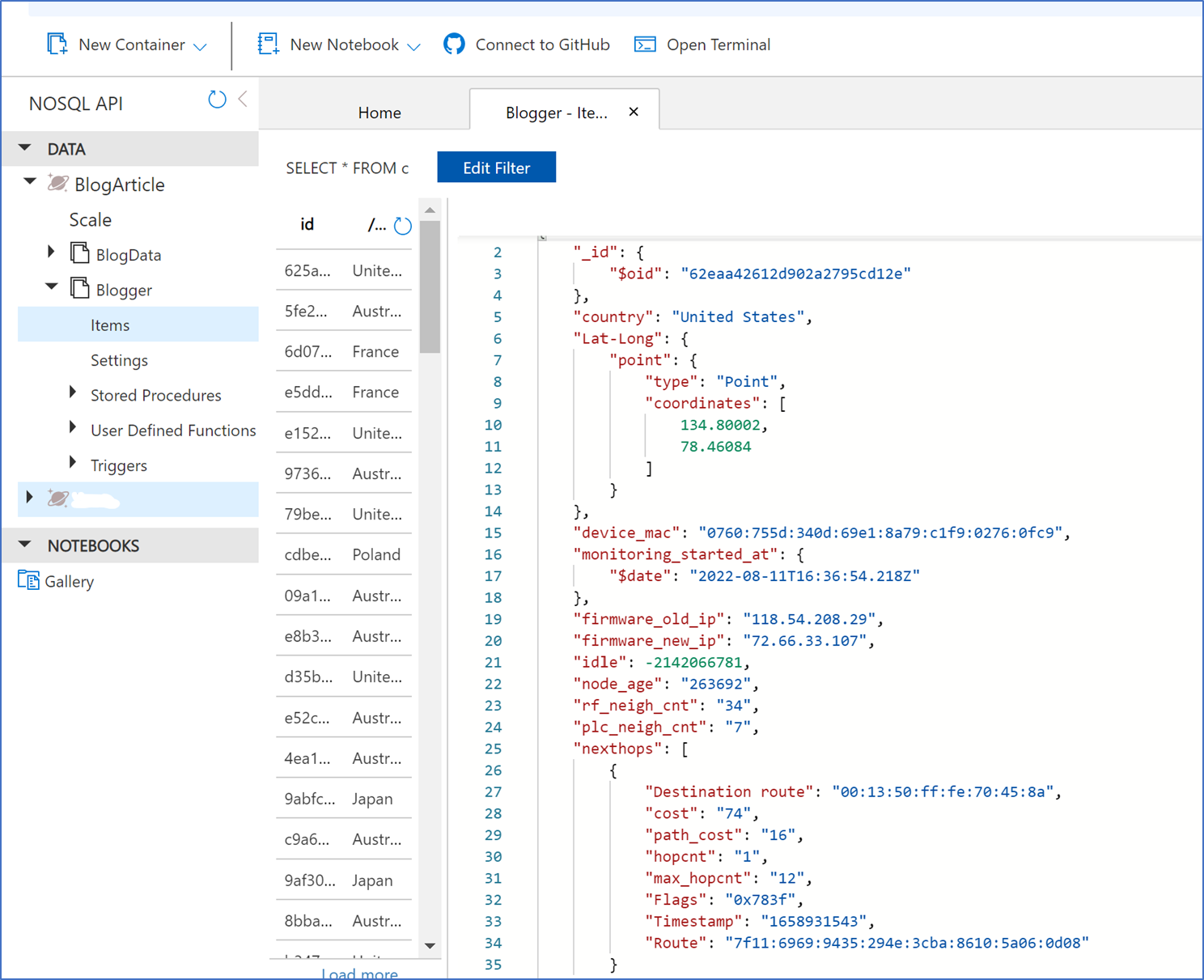

Step 1 – View Source Azure Cosmos DB

Go to the Azure Portal and into the Azure Cosmos DB blade. The following is a sample of a single document on August 3rd, 2022:

We first created a historical/initial load with the August 3rd, 2022, date in Power BI. After the August 3rd, 2022, PBI load was completed, we manually added documents from August 10th to August 17th before setting up the incremental refresh. After manually adding the future dated documents from August 10th to August 17th, the data distribution of documents by date in Azure Cosmos DB could be summarized as follows:

On August 9th, 2022, we had the following distribution of documents in our source Azure Cosmos DB.

| Date | Number of documents added |

| August 3rd, 2022 | 1000 |

| August 10th, 2022 | 1 |

| August 11th, 2022 | 2 |

| August 13th, 2022 | 1 |

| August 14th, 2022 | 1 |

| August 15th, 2022 | 3 |

| August 16th, 2022 | 4 |

|

August 17th, 2022 |

3 |

Here is an example of the document we created for August 16th – note all other manually added documents are similar:

Step 2 – Setup Power BI Connectivity & Incremental Refresh

Let’s open up Power BI desktop and click on Get Data. After that, let’s enter the Azure Cosmos DB URL which you can get from the Azure Cosmos DB Overview blade.

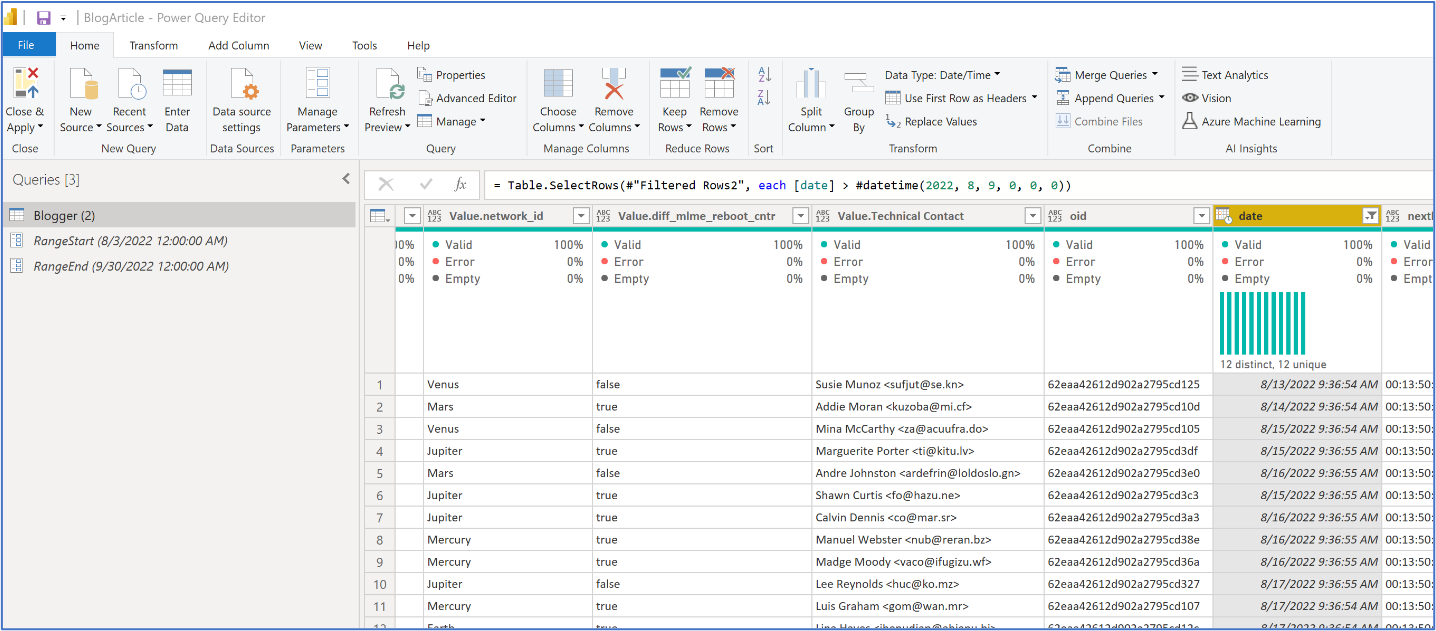

Once connected to our Azure Cosmos DB, we moved to transform the data in Power Query to prepare the document. In our case, the Document Model was used with 70+ embedded arrays and sub-documents in this data model for this IOT sink repository. We leveraged Power BI to flatten embedded JSON by creating a quasi-normalization with tables. — See below. After connecting to our Azure Cosmos DB SQL source, we clicked on the double arrows and clicked on OK to flatten the “Record” view into a tabular view. In order to work with the data and create reports, we need to flatten the JSON document into rows and columns — See below.

After connecting to our Azure Cosmos DB SQL source, we clicked on the double arrows and clicked on OK to flatten the “Record” view into a tabular view. In order to work with the data and create reports, we need to flatten the JSON document into rows and columns.

After going through the flattening process, the initial JSON document was deconstructed as follows:

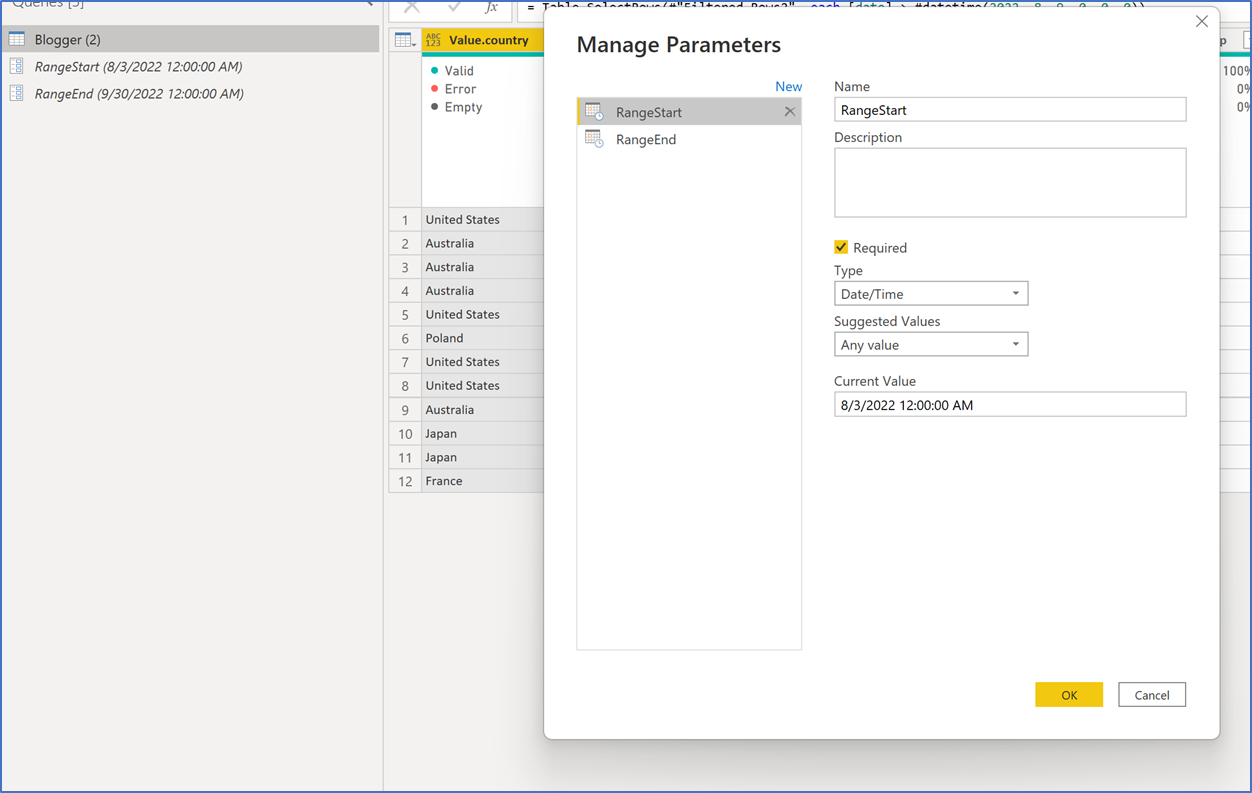

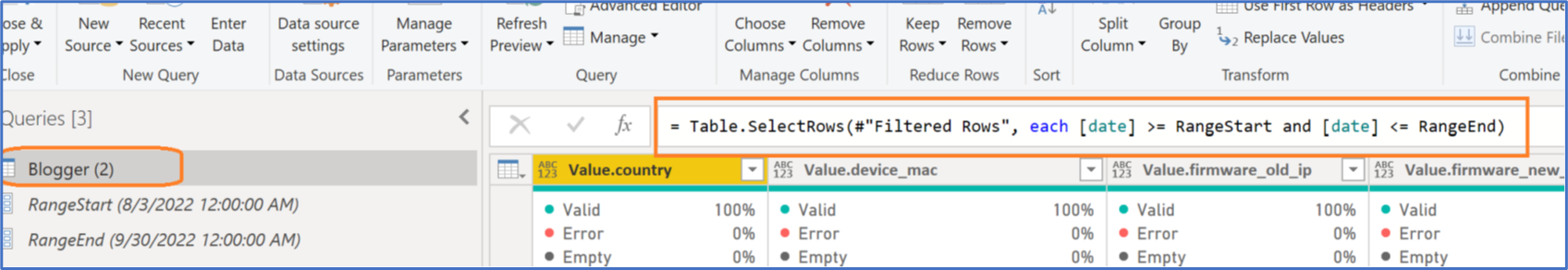

Once we had the JSON document denormalized, we followed the instructions on how to set up incremental refresh. Please note, we first did setup the initial load with the August 3rd date and published this to the Power BI service. After the initial load, we went back to Power BI in order to configure the incremental refresh portion. In order to achieve that, we first created 2 variables in the form of

- RangeStart – set to August 3rd, 2022

- RangeEnd – set to end on September 30th, 2022

After creating the RangeStart and RangeEnd variables, we applied them to the source data as shown below so that only records greater than RangeStart would be selected but less than RangeEnd.

= Table.SelectRows(#”Filtered Rows”, each [date] >= RangeStart and [date] <= RangeEnd)

After creating the logic described above on August 9th, we published our Power BI model and set up an incremental refresh to run daily starting on August 10th at 6am PST. See the screenshot below:

Recap – Data Distribution in Azure Cosmos DB

Once again, as of August 9th, there were only the 1000 documents that were loaded/available on August 3rd, 2022.

We set up the initial incremental load on August 10th, 2022, and so on August 11th at 6am PST, the scheduled refresh would kick off every day at the same time. Before the August 11th run, we added the following documents to Azure Cosmos DB:

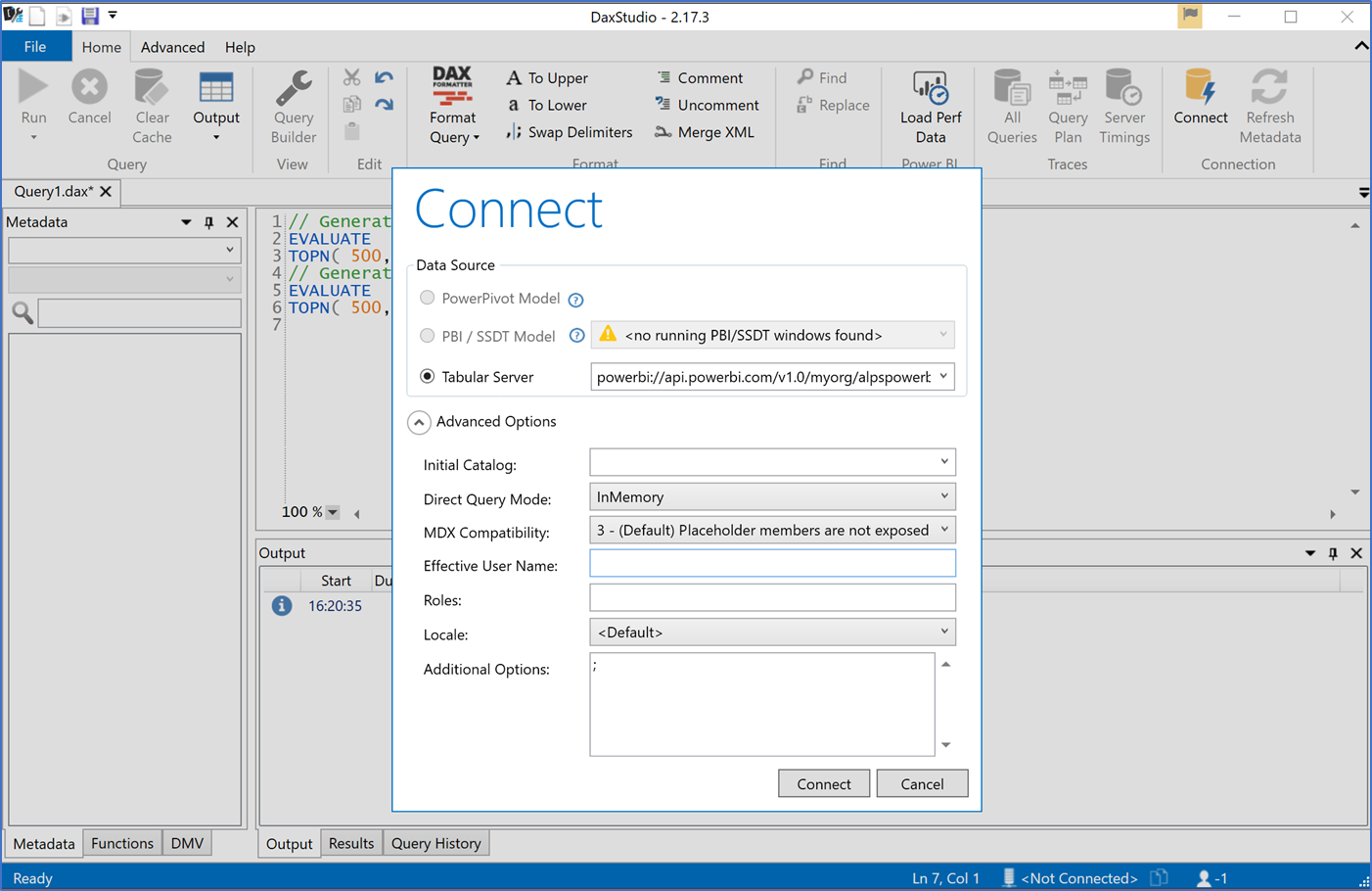

Step 3 – Validate Power BI Incremental Setup

There are a few ways we can validate that our incremental refresh is working as we had intended. One of those tools is a free download in the form of DAX Studio. We can leverage DAX Studio and connect to the power BI XMLA endpoint. We want to see the partitions created and the row counts associated with each partition. The partitions and row counts need to match what we saw in our original source data (please see screenshots earlier in this blog). The XMLA endpoint can be found in the Power BI workspace and in our case, resembles:

- powerbi://api.powerbi.com/v1.0/myorg/yourworkspace;initial catalog=BlogArticle

Connecting to your Power BI XMLA endpoint in DAX Studio appears as follows:

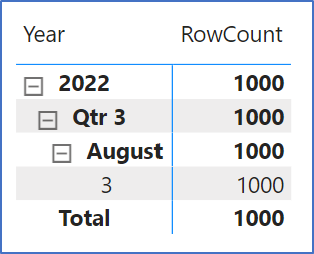

Once in DAX Studio, we can click on View Metrics of our BlogArticle database and then click on partitions to see what our model looks like:

We can see our partitions for the dates between August 10th to August 17th along with the row counts are a match to our source. Let’s take the final step and confirm that these were indeed created daily and on an incremental basis. In order to do that, we will leverage SSMS (SQL Server Management Studio)

SSMS – SQL Server Management Studio

Let’s now validate that those partitions were indeed incremental. We want to see the processed time when those newly created partitions were created. For that, we go to SQL Server Management Studio and connect to the XML endpoint of our PBI model. In our case – the endpoint looks something like this:

- powerbi://api.powerbi.com/v1.0/myorg/yourworkspace;initial catalog=BlogArticle

After connecting to our model via SSMS, we can click on the BlogArticle database and right click on the Blogger collection, and click on “partitions” as shown below:

We can now see that each of these partitions between August 10th and August 17th was created on the day indicated by the “Last Processed” time at around 6am PST as per our initial schedule. This confirms that the loads are indeed incremental and full refreshes are not being executed.

Conclusion

Incremental refresh is a powerful capability for a business analytics tool. In the case where there are relatively larger amounts of data, there is a significant improvement in the processing required to refresh the data into Power BI memory. In addition to incremental refresh, processing complex document model sources can be done in place removing the need for complex queries to reassemble the data for report processing.

About Azure Cosmos DB

Azure Cosmos DB is a fast and scalable distributed NoSQL database, built for modern application development. Get guaranteed single-digit millisecond response times and 99.999-percent availability, backed by SLAs, automatic and instant scalability, and open-source APIs for MongoDB and Cassandra. Enjoy fast writes and reads anywhere in the world with turnkey data replication and multi-region writes.

To easily build your first database, watch our Get Started videos on YouTube and explore ways to dev/test free

0 comments