Azure SQL Database is a fully managed platform as a service (PaaS) relational database service. With Azure SQL Database, you can create a highly available and high-performance data storage layer for the applications and solutions in Azure. SQL Database can be the right choice for a variety of modern cloud applications because it enables you to process both relational data and non-relational structures, such as graphs, JSON, spatial, and XML. You can also take advantage of advanced query processing features, such as high-performance in-memory technologies and intelligent query processing.

Database caching is a well-known technique for applications that need a further performance boost. Services like Azure Cache for Redis complement database services like Azure SQL Database by allowing your data layer to scale. Using standard caching techniques, you can store the results of the most common application queries in the cache and serve them from there, improving the performance and scale of your application. Caching not only improves latency but also reduces the load on the database, lowering the need to overprovision and thereby saving costs.

Distributed caching is a technique that allows the cache to scale across multiple nodes, so it can grow in both size and throughput. A globally distributed cache is a network of geographically separated processing nodes, each with access to a common replicated database. All nodes can participate in a common application, which gets low local latency while each region remains able to run in isolation.

In this blog post, we will use a scalable, real-time leaderboard application to show how you can use Azure Cache for Redis Enterprise as a write-behind cache in combination with Azure SQL Database to speed up your data tier. We will also see how the active geo-replication (Active-Active) feature of Redis Enterprise lets you distribute your application globally while keeping low, local Redis latencies.

What is a leaderboard?

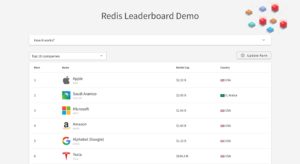

A leaderboard is a scorecard that shows the names and current scores of the leading competitors in ranked order. Traditionally, leaderboards were used mainly in gaming applications to boost engagement. They are now used in many other settings to encourage healthy competition, because gamifying goals or tasks is a good way to motivate and engage people.

In this example, we will use a leaderboard to display the (imaginary) market cap of several companies.
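To make the idea concrete, here is a minimal in-memory sketch of a leaderboard in Python. It is not the sample application's code: the `Leaderboard` class, its method names, and the company names and values are all hypothetical. In Redis, operations like these would typically be served by a sorted set (`ZADD`, `ZINCRBY`, `ZREVRANGE`), which is an assumption about a reasonable design rather than something stated above.

```python
from typing import Dict, List, Tuple


class Leaderboard:
    """In-memory stand-in for a ranked scoreboard (hypothetical helper)."""

    def __init__(self) -> None:
        self._scores: Dict[str, float] = {}

    def set_score(self, member: str, score: float) -> None:
        # Comparable to ZADD: create or overwrite a member's score.
        self._scores[member] = score

    def increment(self, member: str, delta: float) -> float:
        # Comparable to ZINCRBY: adjust a score and return the new value.
        self._scores[member] = self._scores.get(member, 0.0) + delta
        return self._scores[member]

    def top(self, n: int) -> List[Tuple[str, float]]:
        # Highest scores first. Ties are broken by member name so that the
        # order is deterministic in this sketch.
        ranked = sorted(self._scores.items(), key=lambda kv: (-kv[1], kv[0]))
        return ranked[:n]


board = Leaderboard()
# Imaginary market caps in billions of dollars (made-up values).
board.set_score("Contoso", 120.0)
board.set_score("Fabrikam", 95.5)
board.set_score("Northwind", 140.0)
board.increment("Fabrikam", 50.0)

top_two = board.top(2)
print(top_two)
```

After the increment, Fabrikam (145.5) overtakes Northwind (140.0), so those two appear at the top in that order.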

Architecture of the leaderboard application

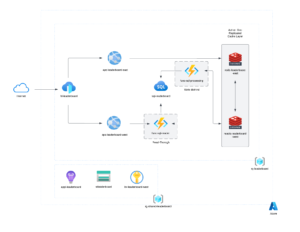

Here is how the application is configured. We use a single backend Azure SQL Database instance to store the leaderboard data. Since the leaderboard data is frequently accessed (mostly reads), we cache it in an Azure Cache for Redis Enterprise instance to improve application latencies and avoid accessing the backend storage layer all the time.

Because this market cap data can be accessed globally, we distributed our cache across two regions: US East and US West. You can distribute the cache across up to five regions, but this sample uses only two. Active geo-replication creates multi-primary instances; that is, the application can read from and write to any of them. The application can be configured to interact with the closest cache instance and therefore enjoys local read/write latencies. The Redis software takes care of replicating the data across all the instances and keeping it consistent.

Since we are using a write-behind caching technique in this example, the application connects only to the cache. We monitor the cache for changes, and whenever a change is identified, an Azure Function is triggered to update the underlying SQL Database.

Installation and prerequisites

You can read the prerequisites and installation steps for this sample application in our GitHub repo.

Here is an ARM template to deploy the entire solution to Azure.

Now let’s dive a little deeper into some of the services and features that we used in this example:

What is Azure Cache for Redis?

Azure Cache for Redis is an in-memory data store based on the Redis software. Redis provides a high-throughput, low-latency data storage solution that can be used to enhance an application’s performance and scale.

Azure Cache for Redis is a fully managed service that offers both open-source Redis (OSS Redis) and Redis Enterprise software; the latter is hosted in collaboration with Redis Inc. The service is run by Microsoft and hosted on Azure, and any application, whether inside or outside Azure, can use it. Azure Cache for Redis can be used as a distributed data or content cache, a session store, a message broker, and more. It can be deployed standalone or alongside other Azure database services, such as Azure SQL Database.

In our leaderboard example, we used an Enterprise tier Azure Cache for Redis instance in combination with Azure SQL DB.

What is a write-behind cache?

Write-behind is a caching strategy in which the cache layer itself connects to the backend database. The application needs to connect to the cache only; the cache then reads from or updates the backend database whenever required.

Here is how this caching pattern works:

- Your application uses the cache for reads and writes.

- I/O completion is confirmed as soon as the data is written to the cache, so incoming requests are not blocked while the database is updated.

- The cache syncs any changed data to the backing database asynchronously.

We used Redis Streams and Azure Functions to implement this write-behind caching pattern. Note that this may not be the best caching pattern for every application. Depending on your requirements, you might choose a different pattern, such as cache-aside or write-through.
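The three steps of the write-behind pattern can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the sample's implementation: an in-memory `sqlite3` database stands in for Azure SQL Database, a `deque` stands in for the Redis Stream of pending changes, and `flush_pending` plays the role of the triggered Azure Function but is called explicitly here instead of running asynchronously. The `WriteBehindCache` class and the `leaderboard` table and its columns are hypothetical names.

```python
import sqlite3
from collections import deque
from typing import Deque, Dict, Optional, Tuple


class WriteBehindCache:
    """Toy write-behind cache: writes land in memory and are queued for the database."""

    def __init__(self, db: sqlite3.Connection) -> None:
        self._db = db
        self._data: Dict[str, float] = {}
        # Ordered log of pending changes (stands in for the stream).
        self._pending: Deque[Tuple[str, float]] = deque()

    def write(self, key: str, value: float) -> None:
        # The write is acknowledged as soon as the cache is updated;
        # the database is not touched on this path.
        self._data[key] = value
        self._pending.append((key, value))

    def read(self, key: str) -> Optional[float]:
        return self._data.get(key)

    def flush_pending(self) -> int:
        # Stands in for the triggered function: drain the log in order and
        # apply each change to the backing database.
        flushed = 0
        while self._pending:
            key, value = self._pending.popleft()
            self._db.execute(
                "INSERT OR REPLACE INTO leaderboard (company, market_cap) VALUES (?, ?)",
                (key, value),
            )
            flushed += 1
        self._db.commit()
        return flushed


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE leaderboard (company TEXT PRIMARY KEY, market_cap REAL)")

cache = WriteBehindCache(db)
cache.write("Contoso", 120.0)
cache.write("Contoso", 125.0)

# Nothing has reached the database yet; the cache already serves the new value.
rows_before = db.execute("SELECT COUNT(*) FROM leaderboard").fetchone()[0]
flushed = cache.flush_pending()
rows_after = db.execute("SELECT company, market_cap FROM leaderboard").fetchall()
```

The trade-off is visible between the two writes and the flush: reads from the cache are current, while the database lags until the pending changes are applied.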

What is active geo-replication in Azure Cache for Redis?

The Enterprise tiers of Azure Cache for Redis support active geo-replication, which allows developers to create geo-distributed applications that enjoy local sub-millisecond Redis read/write latencies with much better resilience to failure.

Active geo-replication enables managed multi-primary replication across Azure regions. Whether deploying a nationwide multi-region application or a globally distributed one, active geo-replication addresses key use-cases such as global session management, world-wide fraud detection, geo-distributed search, and real-time inventory management.

We used a geo-distributed Azure Cache for Redis Enterprise instance, distributed across the US East and US West regions. The user application can connect to the geographically closest cache instance and write to or query data from it. Redis’ active-active geo-replication, which is based on conflict-free replicated data types (CRDTs), takes care of replicating and synchronizing the data across all instances.
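To give a feel for how CRDTs let two primaries accept writes independently and still converge, here is a minimal sketch of one classic CRDT, a positive-negative counter, in Python. It illustrates the general technique only and makes no claim about how Redis implements its data types internally; the `PNCounter` class and the replica names are hypothetical.

```python
from typing import Dict


class PNCounter:
    """Positive-negative counter CRDT (illustrative; not Redis internals)."""

    def __init__(self, replica_id: str) -> None:
        self.replica_id = replica_id
        # Per-replica totals of increments and decrements.
        self._incs: Dict[str, int] = {}
        self._decs: Dict[str, int] = {}

    def increment(self, amount: int = 1) -> None:
        # amount is assumed to be non-negative.
        self._incs[self.replica_id] = self._incs.get(self.replica_id, 0) + amount

    def decrement(self, amount: int = 1) -> None:
        # amount is assumed to be non-negative.
        self._decs[self.replica_id] = self._decs.get(self.replica_id, 0) + amount

    def value(self) -> int:
        return sum(self._incs.values()) - sum(self._decs.values())

    def merge(self, other: "PNCounter") -> None:
        # Take the per-replica maximum. This operation is commutative,
        # associative, and idempotent, so replicas converge regardless of
        # the order or number of merges.
        for rid, n in other._incs.items():
            self._incs[rid] = max(self._incs.get(rid, 0), n)
        for rid, n in other._decs.items():
            self._decs[rid] = max(self._decs.get(rid, 0), n)


east = PNCounter("us-east")
west = PNCounter("us-west")

# Each region accepts local writes without coordinating with the other.
east.increment(5)
west.increment(3)
west.decrement(1)

# Replication: each side merges the other's state.
east.merge(west)
west.merge(east)
print(east.value(), west.value())
```

Both replicas end at the same value (7) even though neither saw the other's writes before merging, which is the property that makes multi-primary replication workable.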

Summary

Caching is a useful technique for reducing application latencies and saving costs, because it lowers the need to overprovision the database layer. In this example, we saw how you can configure a globally distributed write-behind cache with Azure Cache for Redis Enterprise in front of your Azure SQL Database. This not only improves application latencies but also helps make your underlying database globally available. The application always enjoys local read/write latencies by reading from and writing to the closest cache instance, while still taking advantage of Azure SQL Database’s security, performance, high availability, and other capabilities.

We have also extended the example to show how you can use Redis as a read-through cache. A read-heavy application may benefit from this caching pattern.
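For comparison with write-behind, a read-through cache can be sketched as follows. This is a simplified Python illustration, not the extended sample itself: an in-memory `sqlite3` database stands in for Azure SQL Database, and the `ReadThroughCache` class, the `db_reads` counter, and the `leaderboard` table are hypothetical.

```python
import sqlite3
from typing import Dict, Optional


class ReadThroughCache:
    """Toy read-through cache: on a miss, the cache itself loads from the database."""

    def __init__(self, db: sqlite3.Connection) -> None:
        self._db = db
        self._data: Dict[str, float] = {}
        self.db_reads = 0  # counts how often the database was queried

    def get(self, company: str) -> Optional[float]:
        if company in self._data:
            return self._data[company]  # cache hit: no database access
        # Cache miss: load from the database and remember the result.
        self.db_reads += 1
        row = self._db.execute(
            "SELECT market_cap FROM leaderboard WHERE company = ?", (company,)
        ).fetchone()
        if row is None:
            return None
        self._data[company] = row[0]
        return row[0]


db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE leaderboard (company TEXT PRIMARY KEY, market_cap REAL)")
db.execute("INSERT INTO leaderboard VALUES ('Contoso', 120.0)")

cache = ReadThroughCache(db)
first = cache.get("Contoso")   # miss: loaded from the database
second = cache.get("Contoso")  # hit: served from memory
```

Only the first lookup reaches the database, which is why the pattern suits read-heavy workloads.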

As a next step, check how you can boost your database performance by up to 800% using Azure Cache for Redis and some other caching architectures.
