Introduction

The first (false) assumption mentioned in the infamous Fallacies of distributed computing states that “the network is reliable”. Unfortunately, it turns out that distributed applications leveraging services running on multiple compute nodes can suffer from transient connectivity issues, and this is true for on-premises, hybrid and cloud-based deployments. Applications that are not implementing well-known Retry pattern on generic service-to-service communications will usually experience timeouts and exceptions when connectivity issues will manifest themselves.

In addition to network blips. services like Azure SQL can dynamically reconfigure themselves in response to situations like heavy workloads, planned events (like software upgrade or maintenance) and unplanned issues, like hardware or software failures. When events like these happen, client applications can lose connection to the database and receive specific error codes providing an indication of what’s going on in the system. To get a comprehensive list of error messages that can usually be considered as transient and retriable, we recommend you take a deeper look at this other article.

Over the last few years, we provided guidance on how customers could create their own retry logic or, as an alternative, reuse existing libraries aimed to simplify this task for them, More recently, we decided to provide a better experience by incorporating configurable retry logic capabilities directly in our client drivers portfolio starting with Microsoft.Data.SqlClient for .NET 5, .NET Core and full .NET Framework (although we’re planning to cover other major drivers like JDBC, ODBC and more over the next months).

Introducing Configurable Retry Logic

In essence, configurable retry logic lets developers and app managers specify, through code or app config files, how operations like opening a connection or executing a command should react when they encounter a connectivity issue manifesting itself with a certain exception number considered transient or “retriable”. In addition to error codes treated as transient (those published in this list), they can also add new error codes that are part of their business logic through the configuration file and specify regular expression patters of commands that should be considered or excluded from automatic retries, without writing a single line of code for that.

Digging down into details, this new feature is composed by three main components:

- A core API that developers can use when they create new data access applications, or refactor existing ones, to define their own retry logic provider with custom tailored behaviors and characteristics.

- A default, out of the box, retry logic provider with some pre-defined behaviors (e.g. fixed interval retry, exponential retry, etc.) that developer can reuse as-is without the need of re-implementing their owns.

- A configuration file schema that developers can use to enable default retry logic provider and configure what behaviors they want to associate to connection opening and command execution when a connectivity issue happens, without changing a line of code in their existing applications.

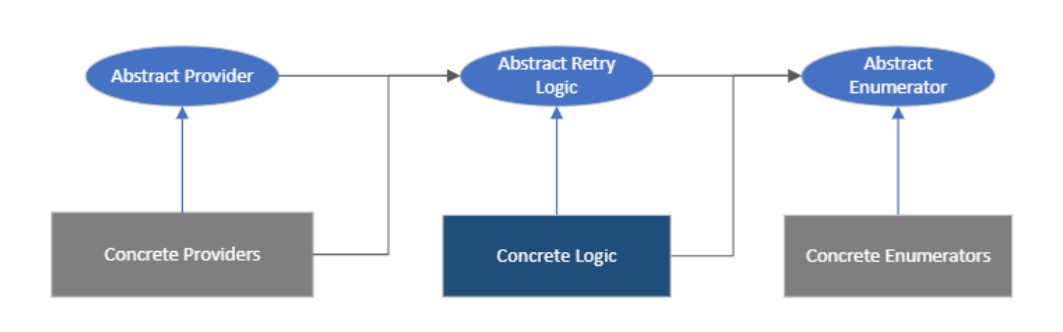

The Core API itself is designed around these three interfaces:

- Enumerator: generates a sequence of time intervals that depends on a given retry logic.

- Retry logic: retrieves the next time interval, considering max number of retries, if a transient condition happens.

- Retry provider: applies a given retry logic to connection and command operations.

As this is an extensible design, application developers with very peculiar requirements can create their own retry logic provider, containing a custom implementation of these concrete classes, where specify all the details of how operation will be retried.

For most developers though, they can just use the default base provider that offers out-of-the-box implementation of most common retry patterns like fixed, incremental and exponential retry intervals.

How to use Configurable Retry Logic?

By adding a reference to Microsoft.Data.SqlClient v3.0 Preview NuGet package in your existing project, and creating specific sections in your configuration file, you can enable Retry Logic by turning on the safety switch we have during Preview phase and define what retry method you want to use for SqlConnection and SqlCommand operations like connection opening and command execution.

Here’s an example of such a configuration file:

<?xml version="1.0" encoding="utf-8" ?>

<configuration>

<configSections>

<section name="SqlConfigurableRetryLogicConnection"

type="Microsoft.Data.SqlClient.SqlConfigurableRetryConnectionSection, Microsoft.Data.SqlClient"/>

<section name="SqlConfigurableRetryLogicCommand"

type="Microsoft.Data.SqlClient.SqlConfigurableRetryCommandSection, Microsoft.Data.SqlClient"/>

<section name="AppContextSwitchOverrides"

type="Microsoft.Data.SqlClient.AppContextSwitchOverridesSection, Microsoft.Data.SqlClient"/>

</configSections>

<!--Enable safety switch in .NET Core-->

<AppContextSwitchOverrides value="Switch.Microsoft.Data.SqlClient.EnableRetryLogic=true"/>

<!--Retry method for SqlConnection-->

<SqlConfigurableRetryLogicConnection retryMethod ="CreateFixedRetryProvider" numberOfTries ="3" deltaTime ="00:00:10" maxTime ="00:00:30"

transientErrors="40615" />

<!--Retry method for SqlCommand containing SELECT queries-->

<SqlConfigurableRetryLogicCommand retryMethod ="CreateIncrementalRetryProvider" numberOfTries ="5" deltaTime ="00:00:10" maxTime ="00:01:10"

authorizedSqlCondition="\b(SELECT)\b" transientErrors="102, 4060, 0"/>

</configuration>

Once .NET AppContext safety switch is on, you can define a retry logic for SqlConnection and SqlCommand independently, together with a set of options like:

- Number of retries

- Min/max/delta times for generating retry intervals

- List of comma-separated error codes that you want to be treated as retriable in addition to pre-defined ones

- A regular expression string representing authorized command texts. As an example, a string like “\b(SELECT)\b” will only retry commands containing SELECT operations while skipping everything else.

The value of retryMethod attribute determines which enumerator is used to generate time intervals:

- CreateFixedRetryProvider: will use a class called SqlFixedIntervalEnumerator, which is generating a constant time interval defined by deltaTime (with a max overall defined by maxTime).

- CreateIncrementalRetryProvider: will use SqlIncrementalIntervalEnumerator to generate time intervals, and will basically accumulate delays defined by deltaTime linearly (e.g. first retry 10 sec, second retry 20 sec, etc., until it reached maxTime).

- CreateExponentialRetryProvider: uses SqlExponentialIntervalEnumerator which, as the name implies, will basically increment time delays with power of 2 ration (e.g. first retry 10 sec, second retry 30 sec, third retry 70 sec, etc.)

The approach based on configuration file is great for those who are interested in getting automatic retry logic out-of-the-box without the need for changing a single line of code in their applications: they just need to add a reference to the new SqlClient package, recompile and voilà!

If instead you’re developing a brand-new application and want to get the most of all the available retry logic behaviors and settings, you can reuse the base classes now available and configure your own retry logic based on your requirements.

Here is an example of how to configure retry logic options and create a new provider to assign to a SqlConnection instance:

class Program

{

static void Main(string[] args)

{

AppContext.SetSwitch("Switch.Microsoft.Data.SqlClient.EnableRetryLogic", true);

SqlRetryLogicOption rto = new SqlRetryLogicOption() { NumberOfTries = 3, DeltaTime = TimeSpan.Parse("00:00:10"), MaxTimeInterval = TimeSpan.Parse("00:00:30"), TransientErrors = new[] { 40615 } };

SqlRetryLogicBaseProvider prov = SqlConfigurableRetryFactory.CreateFixedRetryProvider(rto);

using (SqlConnection cnn = new SqlConnection("server=.;"))

{

cnn.RetryLogicProvider = prov;

cnn.RetryLogicProvider.Retrying += new EventHandler<SqlRetryingEventArgs>(OnRetrying);

cnn.Open();

}

}

protected static void OnRetrying(object sender, SqlRetryingEventArgs args)

{

Console.WriteLine("Retrying for {0} times for {1:0.00} sec - Error code: {2}", args.RetryCount, args.Delay.TotalMilliseconds/1000.0, (args.Exceptions[0] as SqlException).Number);

}

}

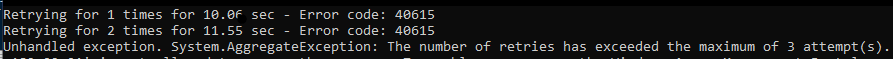

When executed against an Azure SQL Database with firewall rules blocking this client machine (this is where the error code 40615 is coming from), here is the result:

As you can see, connection opening has been retried for 3 times before returning an exception to the application.

As a side note, you can see that despite DeltaTime property was set to 10 seconds, the real delay before retries can be slightly shorter or longer. This is because we’re introducing a small random delay (or anticipation) to make sure that, in case multiple clients are trying to connect using the same retry logic, not all of them are hitting the backend database at the same exact time, causing potential concurrency issues.

Scenarios where configurable retry logic can be a game changer

For new, cloud-native data applications using Azure SQL as backend database, having a configurable (and extensible) retry logic framework baked into client drivers like Microsoft.Data.SqlClient is critical to create a more reliable user experience and respond to unexpected connectivity issues.

For existing applications moving to the cloud, the ability to add automatic retry logic by just configuring few sections in the app config file will remove many barriers and simplify migration projects of all sizes.

One really critical scenario is where both new and existing applications want to target Azure SQL Database Serverless tier to benefit from capabilities like auto-scaling and auto-pausing to adapt to variable workloads and optimize costs. Configurable retry logic is, in fact, capable of eliminating completely the side effect of connection issues during warm up condition (when a serverless database is waking up after auto-pausing, usually between 30 and 60 seconds) by configuring proper number of retries covering that time interval. Here’s an example of how to configure retry logic to use an exponential logic that will retry that initial connection for 5 times starting from a 10 seconds interval up to 2 minutes and half to cover warm up time:

<SqlConfigurableRetryLogicConnection retryMethod ="CreateExponentialRetryProvider" numberOfTries ="5" deltaTime ="00:00:10" maxTime ="00:02:30" />

A word of caution

Next steps

We’re planning to make configurable retry logic generally available over the next few months, as soon as the initial validation process by internal and external customers will tell us that it is ready for the prime time.

In addition, we’re planning to keep working over the next months to introduce configurable retry logic for all other major client drivers for Azure SQL, like JDBC, ODBC and OLE DB so that all customers will benefit from more resilient data access applications.

Go ahead and give it a try, and let us know what you think on our GitHub repo!

Not only with Azure there are connection problems. Over the years, using the application Insert Bulk Bestia that makes a very intensive use of the network and SQL Server I have experimentally found that the following errors they occur frequently, and for which, programming the appropriate retry technique solves many problems. The errors are obtained with the System.Data.SqlClient library:

<code>

Thanks for your comment, Francisco! We’re aware that there may be specific use cases where retrying on other error codes makes sense, but those may be platform specific (e.g. OLE DB or Named Pipes are only available on Windows) or depends on business logic (e.g. deadlocks). For this reason we didn’t include them in the default list, but customers can easily add them through config file’s attribute (transientErrors).

Indeed.

The only thing is that I cannot find the equivalence between the "Number" and "Class", shown in the previous code, with the new field "SqlState" of the new "SqlException" to be able to add the appropriate codes in "TransientErrors".

I've been reading your comments on errors categorization and problems with other database providers. The conclusion is that valid error codes for retries have to be retrieved with the use of applications, just like before. Work already done is lost.

However, I consider the work you are doing a great advance. It is about time that the network was considered the most unstable...

Great new capability. Look forward to using it…

Please guys open this website and try out this!Download the html file and run it on your browser, you will see the magic here..

Very good development! When this hits GA, will de driver be incorporated into SSMS and also Azure Data Factory? ADF does have retry logic per activity, but that is a restart of the whole process…

Hi Johannes, we’re working with other partner teams to get this improvement adopted in their apps, but we still don’t have an ETA for that. Stay tuned!

Is there an existing list of errors that are safe to retry with Azure SQL?

The list of errors that are considered “transient” from an Azure SQL perspective is already baked-in the base retry logic provider, and can be found here: https://github.com/dotnet/SqlClient/blob/c37092a378a972c3799bdf79db6fa58f779ba9fd/src/Microsoft.Data.SqlClient/src/Microsoft/Data/SqlClient/Reliability/SqlConfigurableRetryFactory.cs#L41

Customers can add their owns, depending on their specific requirements/logic, through configuration.

How do you configure an .NET Core app?

Hi Erik,

you can use the same app.config file format across all .NET flavors.

Thanks

Silvano