The Sustainable Software blog has published a lot of great articles about reducing the environmental impact of software, from Energy Optimization with GPU to creating Energy efficient Progressive Web Apps. This article covers a software platform built to support sustainability.

Catching plastics before they reach the ocean

We are frequently reminded of all the plastic that goes into the ocean, and the massive impact it has on marine life – and ultimately on us. Plastic pollution is the second biggest threat to our ocean after climate change! While there are a lot of creative ideas and research into capturing this plastic from the oceans, it’s still a tricky challenge. For example, 70% of marine debris sinks to the bottom of the ocean, where it is more difficult to remove.

Is there another solution? Yes: we must catch this plastic at the source! About 80% of marine plastic comes from rivers, and even the largest rivers are significantly smaller than the Earth’s oceans.

Enter the Surfrider Foundation Europe, a non-profit organization fighting for the protection of the ocean and its users. A few years ago, they launched the Plastic Origins Project. The aim of this project is to create a global map of the sources of plastic litter from rivers. With that data, they can inform other non-profit organizations and public organizations to better use existing funding to protect the rivers. This data can also be used by scientists and research organizations.

If you want a complete overview of this project, please check out this 10-minute video.

Scaling the “scientific observer”

Surfrider employees used the “observer” technique – widely used in wildlife scientific studies – to build an initial data set. An observer is simply a trained person spending time at an area of research, in this case a beach or a riverbank, and collecting data by observing the objects of interest, such as fish, plants, or debris.

In the case of Surfrider, an observer spent time kayaking on a few rivers in the south of France, writing down each item of trash seen with pen and paper, along with the type of trash, quantity, and GPS coordinates. They quickly upgraded this approach by using an open-source GPS tracking app – OSM Tracker from the OpenStreetMap project – to collect this information, still manually, but from a smartphone.

Surfrider eventually joined a Microsoft-organized hackathon – ShareAI – focused on non-profit organization challenges. For two days, the Surfrider team and I designed and prototyped a few solutions to help Surfrider scale this observer technique to a whole new level. The goal was to be able to map all the rivers in a country like France, about 200,000 km, each year.

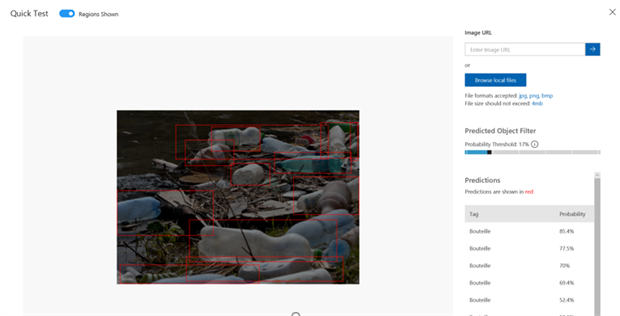

Our intuition was that we could use AI to automatically count trash items from a video. If we achieved this goal, we could then use simple action cameras to record the shoreline of rivers. We built a quick prototype with Azure Custom Vision, and within a couple of hours, we had our first model to help us automatically recognize and count plastic bottles in an image.

This small experiment told us that we could probably replace the human eye for counting trash in the wild for our project. We now needed a plan to get more images from the field, and then analyze them to build our trash map.

After the hackathon, we came up with a plan: leverage the Surfrider network of volunteers (more than 2000 people in 11 European countries, and other chapters all around the globe) to capture this data in a scalable way.

For the first iteration of the project, we decided to build several components:

- A mobile application allowing volunteers and citizens to record trash positions when they see them in nature. This app will record pictures and videos, so that the counting can be automated.

- An advanced computer vision application that will automatically detect and count trash items.

- Database and visualization tools to expose this data to public agencies, other NGOs, and researchers

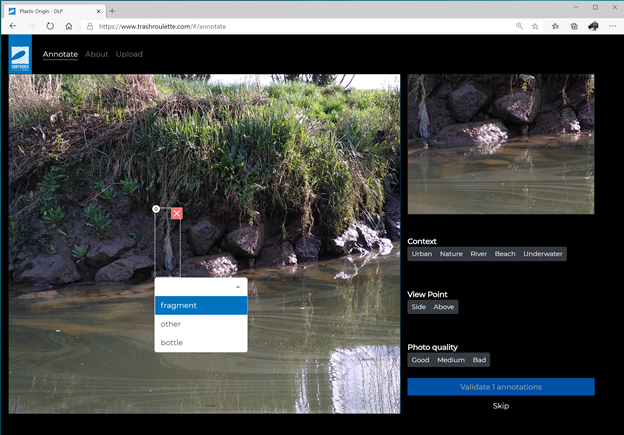

- A labelling platform to help us build a labelled dataset to train and improve our computer vision AI.

Creating an open-source platform to capture this data

We assembled a team of open-source volunteers to work on the various parts of the solution.

One of the biggest challenges we had was creating a more advanced AI that would be able to count all the kinds of trash items that we wanted to count. To train this AI, we needed more images, which is why we created an online labelling platform.

While several tools already exist to do this task – including Microsoft VoTT – we didn’t find an open-source web platform that would let any volunteer label these images. The trashroulette website will be launched soon, and we expect to label tens of thousands of images in the coming months.

This platform will also let people who are not using our mobile apps send videos collected from action cameras or phones.

An interesting AI challenge

While computer vision has made tremendous progress in the past few years, the Plastic Origins AI remains an interesting challenge: detecting and counting moving objects with a moving camera from a video feed is not yet a fully solved problem! We took a staged approach to solve it.

First, we partnered with the French Microsoft AI School. We tasked their students with testing different approaches to identify trash in still images. This is a challenge, as the context of the images we get can be quite complicated. You can see below two images we captured during one of our kayaking sessions. How many litter items can you spot? There are more than three items in each picture:

Then we partnered with computer vision experts to apply these experiments to video feeds. There are already plenty of machine learning algorithms to count moving objects in videos. One of our unique constraints here is that the camera is also moving. Our current AI uses a two-step process:

- Detecting litter from still images: We’re sampling images from the video feed, and then using a Faster R-CNN algorithm to detect litter items in each image.

- Calculating the trajectory of each litter item and using it for counting: Once each image is tagged by the previous step, we compare these tags with tags from adjacent images – using a Deep SORT-inspired algorithm – to get the trajectory of each litter item in the video. The number of trajectories then equals the number of litter items.
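
The two-step process above can be sketched in a few lines of Python. This is only a minimal illustration, not the project's actual pipeline: it assumes a detector (such as the Faster R-CNN step) has already produced bounding boxes for each sampled frame, and it swaps the Deep SORT-inspired tracker for a much simpler greedy overlap (IoU) match between adjacent frames. The names `iou`, `count_trajectories`, `iou_threshold`, and `max_missed` are hypothetical and chosen for this example.

```python
Box = tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_trajectories(
    frames: list[list[Box]],
    iou_threshold: float = 0.3,  # assumed value, to be tuned on real footage
    max_missed: int = 2,         # frames a track may go unseen before it is dropped
) -> int:
    """Count litter items as the number of distinct trajectories.

    `frames` holds, for each sampled image, the boxes returned by the detector.
    """
    active: list[dict] = []  # each track keeps its last box and a miss counter
    total = 0
    for detections in frames:
        unmatched = list(detections)
        for track in active:
            # Greedy match: take the detection that overlaps this track the most.
            best = max(unmatched, key=lambda d: iou(track["box"], d), default=None)
            if best is not None and iou(track["box"], best) >= iou_threshold:
                track["box"] = best
                track["missed"] = 0
                unmatched.remove(best)
            else:
                track["missed"] += 1
        active = [t for t in active if t["missed"] <= max_missed]
        # Every detection left unmatched starts a new trajectory, i.e. a new item.
        for det in unmatched:
            active.append({"box": det, "missed": 0})
            total += 1
    return total


# One bottle drifting through three frames, a second item appearing in frame 2.
frames = [
    [(0, 0, 10, 10)],
    [(1, 0, 11, 10), (50, 50, 60, 60)],
    [(2, 0, 12, 10), (51, 50, 61, 60)],
]
print(count_trajectories(frames))  # 2
```

A real tracker also has to compensate for the camera's own motion and use appearance features to re-identify items, which is where most of the complexity of the Deep SORT family comes from.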

You can see below the result of our current AI.

This process is a bit over-engineered for what we need for this project. We do not need to track litter items; we just want to count them. That’s why we’re now entering the third stage – with a PhD student working on his thesis – to come up with a more efficient AI algorithm.

Finding a better solution is especially important for several reasons. First, getting a lightweight algorithm will enable us to move trash detection to the devices. This will allow us to create new products, like a “Surfrider box” – an embedded camera we can add onto barges to get continuous and up-to-date data from the biggest rivers. It will also probably help us to get a more sustainable solution. Right now, volunteers need to upload gigabytes of video data to Azure. This is something we can considerably reduce with an embeddable AI in our mobile application, only sending relevant images from the video feed.
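
To make the "only sending relevant images" idea concrete, here is a small Python sketch. It assumes a hypothetical on-device detector has already attached a list of confidence scores to each sampled frame; the `SampledFrame` type, the `frames_worth_uploading` function, and the `min_score` threshold are all illustrative names, not part of the Plastic Origins code.

```python
from dataclasses import dataclass, field


@dataclass
class SampledFrame:
    index: int  # position of the frame in the video
    # Confidence scores from a hypothetical on-device litter detector.
    scores: list[float] = field(default_factory=list)


def frames_worth_uploading(
    frames: list[SampledFrame], min_score: float = 0.5
) -> list[int]:
    """Return the indexes of frames with at least one confident detection."""
    return [
        f.index
        for f in frames
        if any(score >= min_score for score in f.scores)
    ]


sampled = [
    SampledFrame(0),              # nothing detected
    SampledFrame(1, [0.9]),       # one confident detection
    SampledFrame(2, [0.2, 0.4]),  # only weak detections
    SampledFrame(3, [0.3, 0.7]),  # one weak, one confident
]
print(frames_worth_uploading(sampled))  # [1, 3]
```

With a filter like this, only a fraction of the frames would leave the device, at the cost of missing items the lightweight model fails to detect.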

Building sustainable software for sustainability

It would make no sense to build software to protect the oceans without thinking about the environmental cost of our solution. Because the platform runs on Azure, our hosting is carbon-neutral. However, that is only one side of the equation. The best way to reduce our footprint is to only use the resources we need.

Within our architecture, we’re defaulting to serverless offerings (read this article to understand why it’s important). Here is a list of services we use and why:

- Static Web Apps to host the public institutional website and the frontend of the labelling platform

- Azure Functions for all public & internal APIs and for some parts of our ETL process

- Docker & VMSS for the ETL. We spin up our VMSS cluster only when the ETL is running.

- API Management in Serverless mode for our API Proxies

- GitHub Actions for our CI/CD pipeline

We still have some challenges in the future to get even better:

- Our main database is a PostgreSQL instance, due to a lot of geo-coding features needed by our data scientists. We have a lot of optimizations to do here – including in our development environment – to better use the latest features of Azure Database for PostgreSQL, like Flexible Server.

- We still have a few internal apps hosted on App Service that will be migrated to Azure Functions in early 2021.

- Create a more Carbon-Aware ETL: Right now, we launch our ETL when new images and videos are uploaded onto the platform. In our case, our business would allow us to delay video processing for 24 hours without any issue. Thus, we could delay our ETL to run when the global demand in the Azure datacenters is low, or when the amount of usable renewable energy is at a peak. For some applications, implementing such a process in conjunction with Azure Spot VMs could also lead to some monetary savings!
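
As a rough illustration of the carbon-aware idea in the last point, the sketch below picks the start hour that minimizes total carbon intensity over the duration of a job, within the allowed delay. It assumes an hourly carbon-intensity forecast (in gCO2/kWh) is available from some external source; the forecast values, the `best_start_hour` function, and its parameters are hypothetical and do not correspond to an existing Azure API.

```python
def best_start_hour(
    forecast: list[float],      # assumed hourly carbon intensity, in gCO2/kWh
    job_hours: int,             # how long the ETL run is expected to take
    max_delay_hours: int = 24,  # the business tolerates up to 24 hours of delay
) -> int:
    """Return the start offset (in hours from now) with the lowest total intensity."""
    if job_hours <= 0:
        raise ValueError("job_hours must be positive")
    last_start = min(max_delay_hours, len(forecast) - job_hours)
    if last_start < 0:
        raise ValueError("forecast is shorter than the job duration")
    # Compare every allowed window and keep the one with the smallest sum.
    return min(
        range(last_start + 1),
        key=lambda start: sum(forecast[start:start + job_hours]),
    )


# Made-up forecast for the next six hours, for illustration only.
forecast = [300, 280, 120, 100, 250, 400]
print(best_start_hour(forecast, job_hours=2))  # 2
```

The same selection logic would apply if the signal were datacenter demand or Spot VM price instead of carbon intensity; only the forecast changes.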

It’s just the beginning of the story

We still have a lot of work to do to achieve our goals. We now have all the tools to produce our first plastic litter map in 2021. Meanwhile, if you want to follow the project, or volunteer, follow the Surfrider GitHub organization.
