Azure Cognitive Search is now Azure AI Search, and semantic search is now semantic ranker. Visit here for more details about Azure AI Search.

In today’s digital landscape, efficient and effective customer support is essential to the success and sustainability of a business. Advances in artificial intelligence and machine learning, such as the Retrieval Augmented Generation (RAG) pattern, help companies improve their customer experiences. RAG lets businesses create ChatGPT-like interactions grounded in their own data sets.

In this post, we demonstrate how the RAG pattern, built with Azure OpenAI Service and the Azure AI Search SDK, can improve the customer support experience. Our case study focuses on Contoso Real Estate, a fictitious company providing real estate solutions to Contoso employees. You can find the sample documentation here.

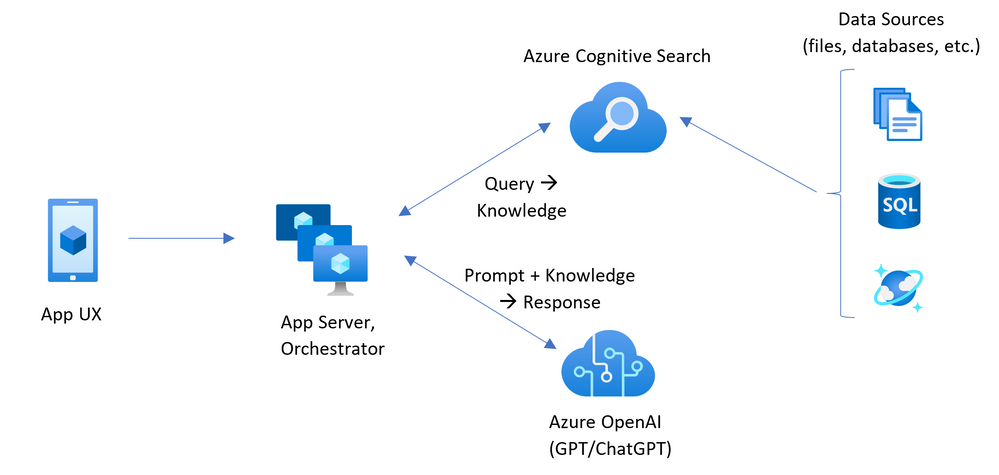

Understanding the Retrieval Augmented Generation Architecture

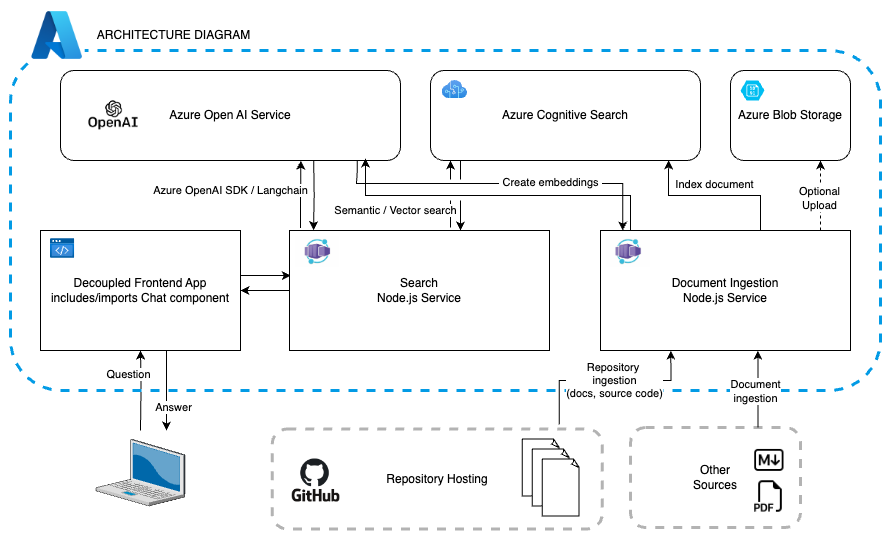

The architecture comprises three key components that work together to deliver a fluid and intuitive customer support experience:

Search Service: This pivotal backend service is designed to facilitate robust search and retrieval capabilities, enabling users to swiftly access relevant information from the company’s extensive knowledge base.

Indexer Service: By meticulously indexing data and creating search indexes, this service efficiently organizes and categorizes information, ensuring that the retrieval process is optimized for maximum efficiency.

Web App: The web app is the user-facing frontend application, presenting a dynamic and user-friendly interface that lets customers interact with the system easily. Acting as a bridge between users and backend services, the web app enhances the support experience by using large language models (LLMs) to enable intuitive, natural-language interactions.

Unveiling the Contoso Real Estate Experience

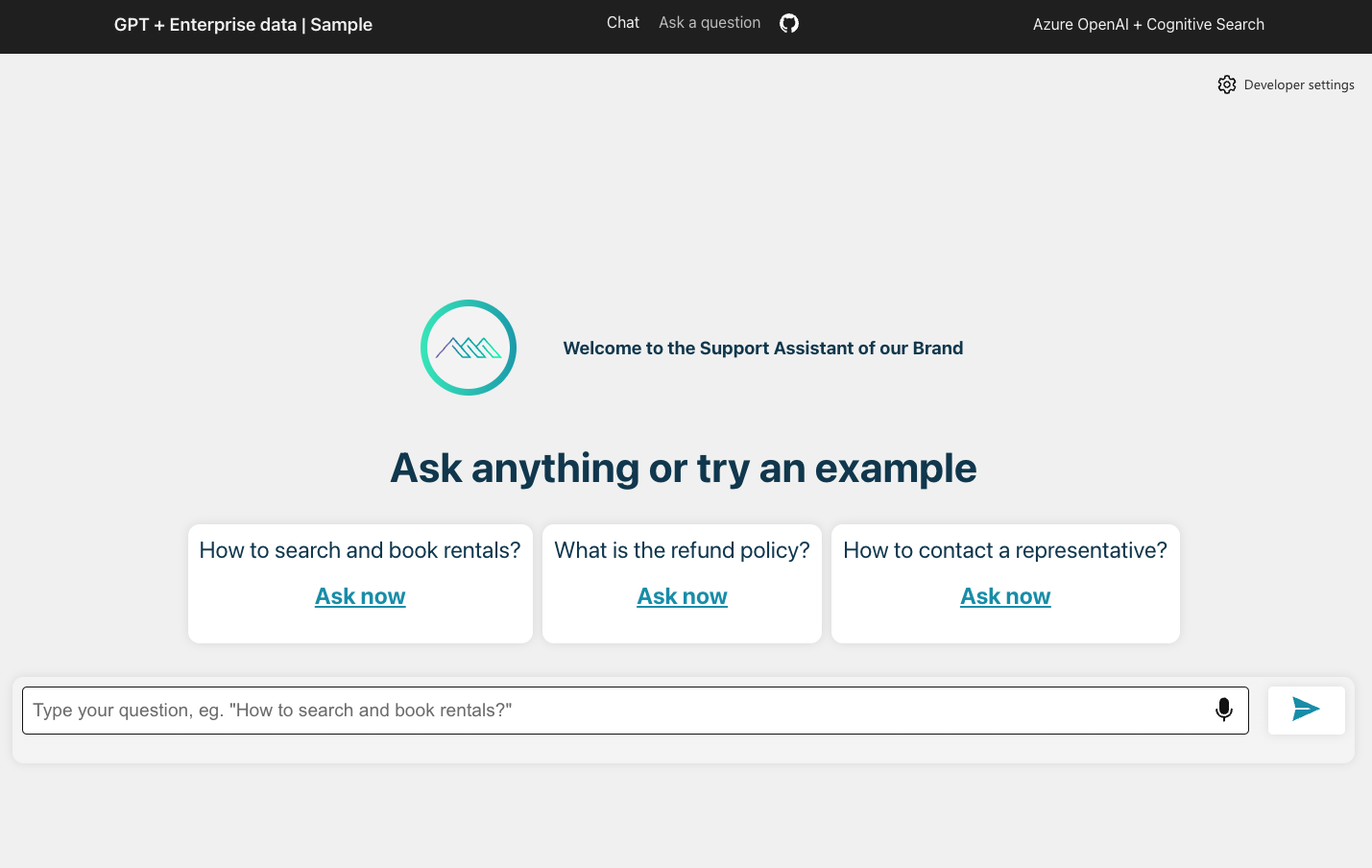

The application’s integration of the RAG pattern transforms the customer support journey for Contoso Real Estate, a fictitious real estate portal created to provide a real-life architecture reference and application sample for JavaScript developers working on Azure. The Contoso Real Estate customer support use case allowed us to show the power of Azure OpenAI Service and the Azure AI Search SDK, as an example of how companies of all sizes can provide their customers with a personalized and comprehensive support system.

The system incorporates the following features:

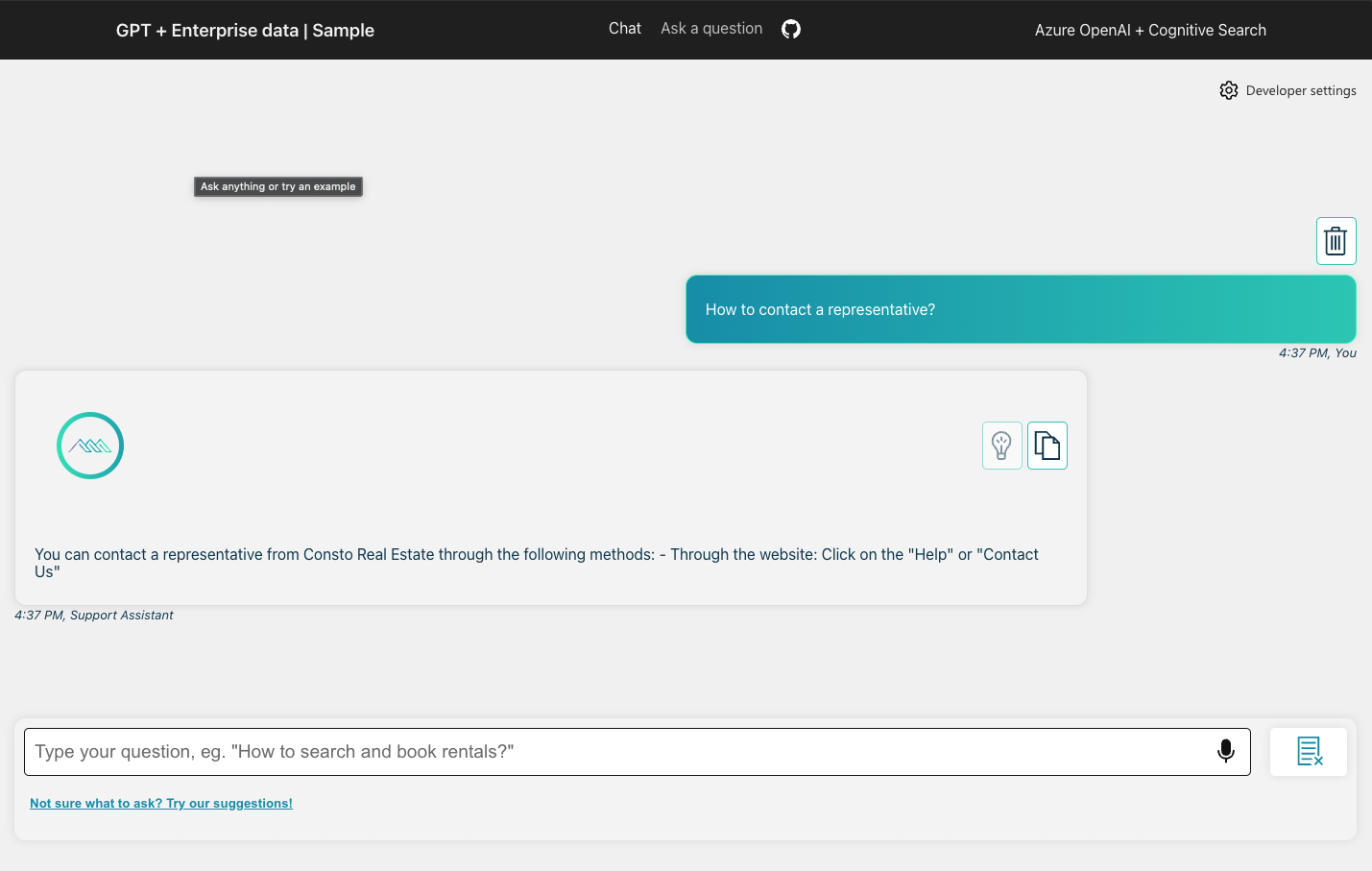

Chat and Q&A Interfaces: Users can engage with the system through interactive chat and question-and-answer interfaces, facilitating swift access to crucial information and resolutions for their queries, while business stakeholders make sure the information is always updated and relevant.

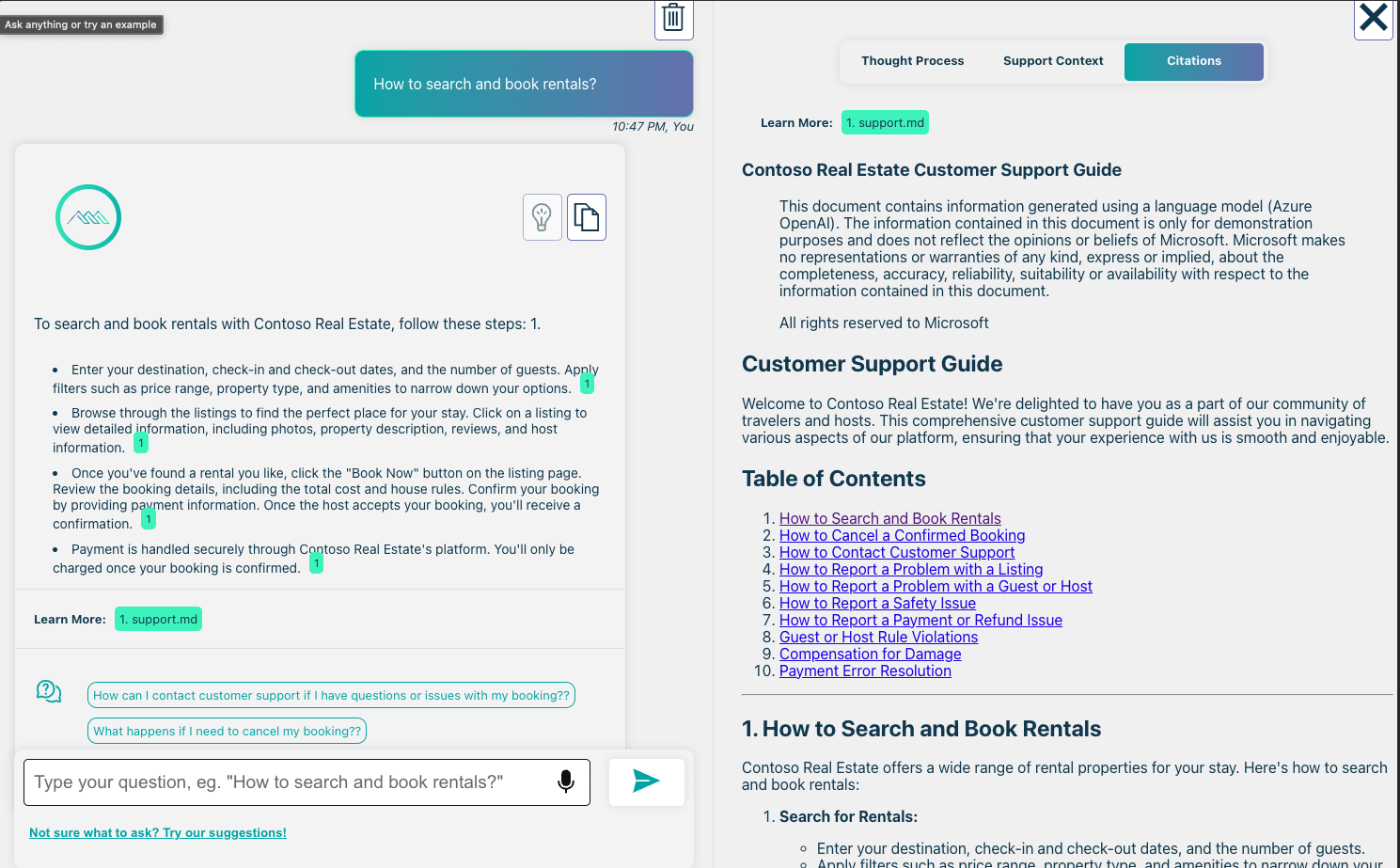

Trustworthiness Evaluation: The application offers various mechanisms for users to evaluate the trustworthiness of responses, including citations, source content tracking, and other credibility-enhancing features. These features ensure customers can always rely on accurate and reliable information and visit the sources for extended context.
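As an illustration of the citation feature described above, here is a minimal sketch of how a frontend could pull source references out of an answer. It assumes the model has been prompted to tag each claim with a source file name in square brackets (for example `[refund-policy.pdf]`). That convention, the `ParsedAnswer` type, and the `extractCitations` function are assumptions made for this example and may not match what the sample does.

```typescript
// Hypothetical citation format: the model appends the source file name in
// square brackets after each claim, e.g. "... within 14 days [terms.pdf]".
interface ParsedAnswer {
  text: string;        // answer with bracketed sources replaced by [1], [2], ...
  citations: string[]; // unique sources, in order of first appearance
}

function extractCitations(answer: string): ParsedAnswer {
  const citations: string[] = [];
  // Match bracketed tokens that look like file names (contain an extension).
  const text = answer.replace(
    /\[([^\[\]]+\.[A-Za-z0-9]+)\]/g,
    (_match: string, source: string) => {
      let index = citations.indexOf(source);
      if (index === -1) {
        citations.push(source);
        index = citations.length - 1;
      }
      return `[${index + 1}]`;
    }
  );
  return { text, citations };
}

const parsed = extractCitations(
  "Refunds are issued within 14 days [refund-policy.pdf]. " +
    "Fees are non-refundable [terms.pdf][refund-policy.pdf]."
);
console.log(parsed.text);
console.log(parsed.citations);
```

The numbered markers can then be rendered as links that open the corresponding source document, so users can check the extended context themselves.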

Data Preparation and Prompt Construction: The application demonstrates various approaches for meticulous data preparation and prompt construction, ensuring that the system can effectively understand and respond to user queries with precision and relevance. The sample offers a debugging feature, to better understand the thought processes and data points used to elaborate each response.
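To make the prompt construction step more concrete, the following sketch shows one possible way to combine a user question with retrieved content before sending it to a chat model. Every name here (`SourceChunk`, `ChatMessage`, `buildMessages`, the system prompt wording, and the 500-character limit) is a hypothetical choice for illustration, not the sample's actual implementation.

```typescript
// All names and the prompt wording below are illustrative assumptions.
interface SourceChunk {
  sourceFile: string; // e.g. a document name in the knowledge base
  content: string;    // text retrieved from the search index
}

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

const SYSTEM_PROMPT =
  "You are a customer support assistant. Answer ONLY from the sources below. " +
  "Cite the source file name in square brackets after each fact. " +
  "If the sources do not contain the answer, say you don't know.";

function buildMessages(
  question: string,
  sources: SourceChunk[],
  maxCharsPerSource = 500
): ChatMessage[] {
  // Collapse whitespace and truncate each chunk to keep the prompt small.
  const sourceLines = sources.map((s) => {
    const flattened = s.content.replace(/\s+/g, " ").trim();
    return `${s.sourceFile}: ${flattened.slice(0, maxCharsPerSource)}`;
  });
  return [
    { role: "system", content: SYSTEM_PROMPT },
    { role: "user", content: `${question}\n\nSources:\n${sourceLines.join("\n")}` },
  ];
}

const promptMessages = buildMessages("What is the refund policy?", [
  { sourceFile: "refund-policy.pdf", content: "Refunds are issued\nwithin 14 days." },
]);
for (const m of promptMessages) {
  console.log(`${m.role}: ${m.content}`);
}
```

Keeping the source name next to each chunk is what allows the model to cite it, and logging the assembled messages is a simple way to inspect which data points fed a given response.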

Orchestration of Interaction: The application plays a key role in orchestrating the interaction between the ChatGPT model and the Azure AI Search retriever. This coordination ensures a smooth and user-friendly experience, making it effortless for customers to access pertinent information. Additionally, the sample integrates with LangChain, a highly favored AI framework among JavaScript developers. At a high level, the architecture supporting this Retrieval-Augmented Generation pattern running on Azure looks like this:

All the components of this application, both the static frontend and the backend services, are deployed as composable architecture units to their respective managed infrastructure services. We selected Azure Static Web Apps for the web app and Azure Container Apps for the services.

App Architecture

During an initial deployment or successive workflow executions, the Azure AI Search indexer service ingests and indexes data to make the application functional.

When the end user submits a question to the assistant through the frontend web component, the request, including a set of headers, hits a backend Azure OpenAI service, which processes it according to the configured options. These options include the context approach (for example, “retrieve then read”), the retrieval mode (full-text, vector, or hybrid), the use of semantic features such as “semantic ranker” for optimized relevance of the responses or “semantic captions,” and streamed responses implemented with HTTP Readable Streams. The service then formulates a response that the frontend can render.
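The streamed-response option mentioned above can be sketched as follows. This example assumes the backend emits newline-delimited JSON, with each line carrying a `delta.content` string. That wire format and the names `ChatChunk`, `NdjsonBuffer`, and `readAnswer` are assumptions for illustration, not the sample's confirmed protocol. The stream here is simulated in memory; in a real frontend it would be the `body` of a `fetch` response.

```typescript
// Assumed wire format (hypothetical): one JSON object per line, e.g.
//   {"delta":{"content":"Hello"}}
interface ChatChunk {
  delta: { content?: string };
}

// Buffers partial lines, because a network chunk can end mid-object.
class NdjsonBuffer {
  private pending = "";

  push(text: string): ChatChunk[] {
    this.pending += text;
    const lines = this.pending.split("\n");
    this.pending = lines.pop() ?? ""; // last item is an incomplete line (or "")
    return lines
      .filter((line) => line.trim() !== "")
      .map((line) => JSON.parse(line) as ChatChunk);
  }
}

// Reads a byte stream and calls onToken for each piece of answer text.
async function readAnswer(
  body: ReadableStream<Uint8Array>,
  onToken: (token: string) => void
): Promise<string> {
  const reader = body.getReader();
  const decoder = new TextDecoder();
  const buffer = new NdjsonBuffer();
  let answer = "";
  const emit = (text: string): void => {
    for (const chunk of buffer.push(text)) {
      const token = chunk.delta.content ?? "";
      answer += token;
      onToken(token);
    }
  };
  while (true) {
    const { done, value } = await reader.read();
    if (done) break;
    emit(decoder.decode(value, { stream: true }));
  }
  emit(decoder.decode() + "\n"); // flush a final line that lacks a newline
  return answer;
}

// Simulated stream whose first chunk ends in the middle of a JSON object.
const encoder = new TextEncoder();
const demoStream = new ReadableStream<Uint8Array>({
  start(controller) {
    controller.enqueue(encoder.encode('{"delta":{"content":"Hel'));
    controller.enqueue(encoder.encode('lo"}}\n{"delta":{"content":" world"}}\n'));
    controller.close();
  },
});

readAnswer(demoStream, (token) => console.log("token:", token)).then((full) =>
  console.log("full answer:", full)
);
```

Rendering each token as it arrives lets the user start reading before the model has finished generating, which is the main reason to stream at all.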

UX Settings Customization: This application sample is designed specifically for JavaScript and TypeScript developers, so that it can be easily integrated with any existing application built with modern frameworks. It consists of a web component that can be embedded and wired to any frontend, and it integrates with any backend that conforms to the Chat Protocol specification. The original sample is built with JavaScript/TypeScript end-to-end, but it can also be connected to the Python backend showcased here.

Because the web component is built with distribution and customization in mind, it implements a slim design system that can be easily adapted to any existing branding definitions.

Performance Monitoring and Tracing: With the optional integration of Application Insights, the application offers comprehensive performance monitoring and tracing, allowing businesses to gain valuable insights into user interactions and system performance for continuous optimization and refinement.

Embracing the Future of Customer Support

In conclusion, the combination of advanced AI technologies and the RAG pattern offers a promising glimpse into the future of customer support, where seamless interactions, personalized assistance, and reliable information come together to improve customer experiences. As AI and machine learning continue to evolve, businesses can look forward to customer support that moves beyond traditional boundaries toward more personalized and efficient services.

Here’s a sample repo and a follow-along article to help you get started on Azure. For more resources, including documentation and samples, visit Intro to Azure AI for JavaScript developers. These resources will assist you in learning how to develop applications using Azure OpenAI Service and other Azure AI Services.
