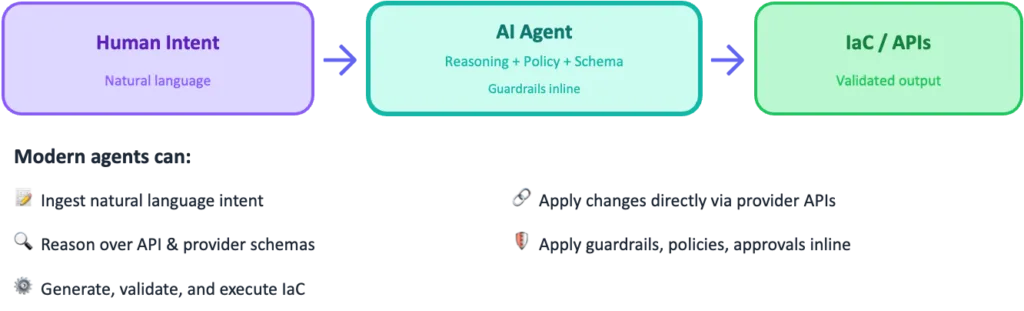

For the last decade, platform engineering has relied on explicit API interaction layers: CLIs, SDKs, pipelines, wrappers, and UI workflows that translate human intent into machine‑safe API calls. AI agents are now short‑circuiting much of that stack. By combining natural language understanding, reasoning, and direct access to API specifications and control schemas, agents can convert human intent directly into validated platform actions, often without a bespoke interaction layer in between.

Nowhere is this shift more visible than in Infrastructure as Code (IaC) and pipeline workflows, where agents are increasingly acting as the “control plane interpreter” between engineers and cloud APIs, whether the output is Bicep/ARM, Terraform, or direct API calls.

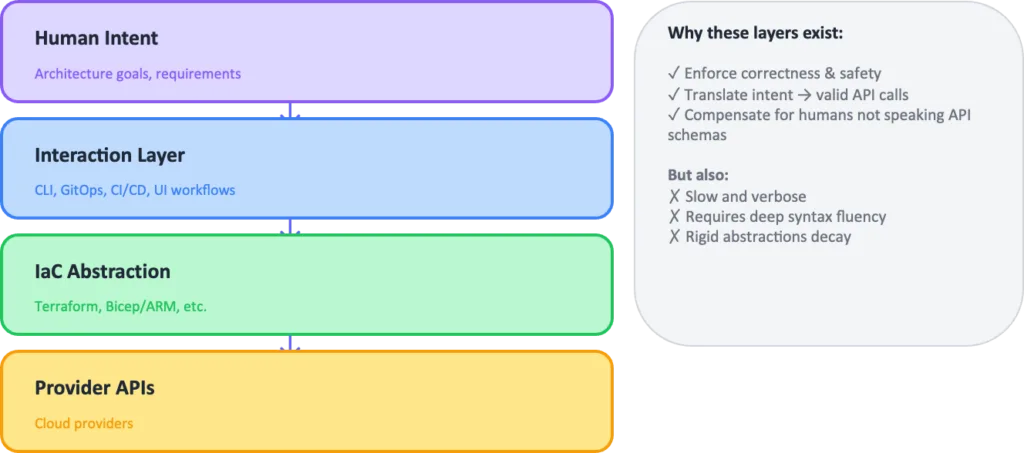

The Traditional Model

In the traditional model, Infrastructure as Code (IaC) sits in a multi-layer intent-to-execution stack: human intent (architecture goals and requirements) is translated through an explicit interaction layer (CLI commands, GitOps/CI workflows, pull requests, and UI processes) into an IaC abstraction (for example CloudFormation, ARM templates/Bicep, Terraform HCL, modules, providers), which ultimately drives provider APIs (AWS, Azure, GCP, and SaaS platforms).

These layers exist to enforce correctness and safety, translate intent into valid API calls, and compensate for the fact that humans don’t naturally speak API schemas. That makes the approach robust, but often slow, verbose, and dependent on deep fluency in syntax and tooling.

The Agentic Shift

AI agents fundamentally change this flow. Modern agents can ingest natural language intent, reason over API schemas, generate and validate IaC, and apply changes directly via provider APIs—all while enforcing guardrails and approvals inline.

As a result, the interaction stack collapses into a single intelligent layer.

The “API interaction layer” still exists—but it becomes implicit, dynamically constructed by the agent using API specs, provider schemas, and organizational controls.
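To make the idea concrete, here is a minimal Python sketch of the kind of check an agent might run before constructing an API call: it validates a proposed property bag against a schema fragment and then against organizational controls. The schema format, the property names, `ALLOWED_REGIONS`, and `REQUIRED_TLS` are illustrative assumptions, not a real provider schema.

```python
from typing import Any

# Hypothetical, heavily simplified schema fragment for a storage-like resource.
# Real provider schemas (OpenAPI specs, Terraform provider schemas) are far richer.
SCHEMA: dict[str, dict[str, Any]] = {
    "location": {"type": str, "required": True},
    "sku": {"type": str, "required": True, "enum": ["Standard_LRS", "Standard_GRS"]},
    "minimumTlsVersion": {"type": str, "required": False, "enum": ["TLS1_0", "TLS1_1", "TLS1_2"]},
}

# Hypothetical organizational controls, stricter than the schema alone.
ALLOWED_REGIONS = {"eastus", "westeurope"}
REQUIRED_TLS = "TLS1_2"


def validate(props: dict[str, Any]) -> list[str]:
    """Return a list of violations; an empty list means the request may proceed."""
    errors: list[str] = []
    # Schema checks: unknown keys, required keys, types, and enum values.
    for key in props:
        if key not in SCHEMA:
            errors.append(f"unknown property: {key}")
    for key, rule in SCHEMA.items():
        if key not in props:
            if rule["required"]:
                errors.append(f"missing required property: {key}")
            continue
        value = props[key]
        if not isinstance(value, rule["type"]):
            errors.append(f"{key}: expected {rule['type'].__name__}")
        elif "enum" in rule and value not in rule["enum"]:
            errors.append(f"{key}: {value!r} not in {rule['enum']}")
    # Organizational controls layered on top of schema validity.
    if props.get("location") not in ALLOWED_REGIONS:
        errors.append(f"location {props.get('location')!r} is not an approved region")
    if props.get("minimumTlsVersion") != REQUIRED_TLS:
        errors.append(f"minimumTlsVersion must be {REQUIRED_TLS}")
    return errors


if __name__ == "__main__":
    print(validate({"location": "eastus", "sku": "Standard_LRS", "minimumTlsVersion": "TLS1_2"}))
    print(validate({"location": "brazilsouth", "sku": "Premium_LRS"}))
```

The point of the split is that a request can be schema-valid yet still non-compliant; the agent needs both sources to build the "implicit" interaction layer.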

Present-Day Reality vs. Future State

Why IaC Still Matters Today

Today, Infrastructure as Code (IaC) remains the system of record for enterprise platforms. This is not accidental. IaC provides:

- a deterministic desired‑state model,

- versioned change history,

- reviewable plans (“what‑if,” “plan,” “change sets”),

- and a reconciliation mechanism for drift.
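The "plan" and drift-reconciliation ideas in this list both reduce to comparing declared state with observed state. The Python sketch below is a toy model of that comparison, not how Terraform plans, Bicep what-if, or CloudFormation change sets are actually implemented; it assumes both states are flat dictionaries keyed by a resource address.

```python
from typing import Any

State = dict[str, dict[str, Any]]  # resource address -> attributes


def plan(desired: State, live: State) -> dict[str, list[str]]:
    """Classify each resource as create, update, delete, or no-op."""
    actions: dict[str, list[str]] = {"create": [], "update": [], "delete": [], "no-op": []}
    for addr in sorted(desired.keys() | live.keys()):
        if addr not in live:
            actions["create"].append(addr)
        elif addr not in desired:
            actions["delete"].append(addr)
        elif desired[addr] != live[addr]:
            # Attribute mismatch: either a pending change or drift in the live state.
            actions["update"].append(addr)
        else:
            actions["no-op"].append(addr)
    return actions


if __name__ == "__main__":
    desired = {
        "rg.app": {"location": "eastus"},
        "storage.logs": {"sku": "Standard_GRS", "tls": "TLS1_2"},
    }
    live = {
        "storage.logs": {"sku": "Standard_GRS", "tls": "TLS1_0"},  # drifted
        "vm.legacy": {"size": "Standard_B2s"},  # not declared anywhere
    }
    print(plan(desired, live))
```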

Even in agent-assisted workflows, IaC functions as the canonical ledger against which platform state is validated and remediated. Agents may generate IaC, validate it, or reconcile drift back into it; however, the ledger itself remains explicit, declarative, and human-reviewable. That explicitness is a requirement for auditability, rollback, incident response, and regulated change management.

In other words, agents operate through IaC today; they do not replace it. However, as AI moves rapidly toward Frontier transformations, this will not remain the case.

Future-State Hypothesis

The longer‑term shift introduced by agentic systems is not the elimination of APIs or IaC, but a redefinition of where “truth” lives in the delivery system.

As agents gain the ability to:

- reason directly over provider schemas and API specifications,

- execute changes with inline policy enforcement,

- produce complete, immutable execution traces (intent → plan → API calls → validation → evidence),

- and continuously reconcile live state against declared intent.

As they do, the function of IaC will change. In this future state, IaC no longer needs to serve as the primary expression of intent. Instead, it becomes one of several compiled execution formats, generated from higher-order inputs such as:

- security and compliance policy,

- architecture decision records,

- reference diagrams and approved patterns,

- and explicit human intent.

The authoritative record shifts upstream, from how infrastructure is expressed to why it is allowed to exist, with the agent’s execution trace providing the binding evidence between intent and outcome.
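One way to make an execution trace tamper-evident is to hash-chain its records, so that editing any earlier step invalidates every later hash. The following minimal Python sketch assumes an in-memory trace whose stages mirror the intent → plan → API calls → validation → evidence sequence; the stage names and payloads are hypothetical, and a real system would also sign records and persist them to append-only storage.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Any

STAGES = ("intent", "plan", "api_call", "validation", "evidence")  # hypothetical stage names


def _digest(body: dict[str, Any]) -> str:
    # Canonical JSON so identical content always produces the same hash.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class ExecutionTrace:
    records: list[dict[str, Any]] = field(default_factory=list)

    def append(self, stage: str, payload: dict[str, Any]) -> str:
        """Add a record linked to the previous record's hash; return the new hash."""
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        prev_hash = self.records[-1]["hash"] if self.records else "0" * 64
        body = {"stage": stage, "payload": payload, "prev": prev_hash}
        digest = _digest(body)
        self.records.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered record breaks the chain."""
        prev_hash = "0" * 64
        for rec in self.records:
            body = {"stage": rec["stage"], "payload": rec["payload"], "prev": rec["prev"]}
            if rec["prev"] != prev_hash or rec["hash"] != _digest(body):
                return False
            prev_hash = rec["hash"]
        return True


if __name__ == "__main__":
    trace = ExecutionTrace()
    trace.append("intent", {"text": "private storage for app logs"})
    trace.append("plan", {"create": ["storage.logs"]})
    trace.append("api_call", {"method": "PUT", "status": 200})
    trace.append("validation", {"publicNetworkAccess": "Disabled"})
    print(trace.verify())
    trace.records[1]["payload"]["create"].append("vm.extra")  # tamper with the plan
    print(trace.verify())
```

A chain like this is what lets the trace act as "binding evidence": a reviewer can check that the approved plan is the one that was actually executed.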

This does not remove the need for IaC or APIs. It reframes them as implementation artifacts rather than the sole source of truth, and in doing so shortcuts the API layer.

What “Shortcutting the API Layer” Really Means

Agents don’t bypass APIs—they bypass humans as API translators. The traditional interaction layer (CLIs, wrappers, bespoke tooling, pipeline glue) becomes increasingly implicit. Instead, the agent becomes the control-plane interpreter, continuously mapping intent to the underlying API layer with governance enforced end-to-end.

Architecture Artifacts as the New Ledger

A natural question follows: why use IaC at all if an agent can read a provider schema directly and infer every valid configuration? In an agentic model, the ledger becomes your governed documents: security standards (NIST, HIPAA, corporate policies), architecture decision records, approved reference architectures, and canonical diagrams—combined with the agent’s change trace as verifiable evidence.

Traditional IaC tools can still serve as an execution format or compatibility layer. However, they no longer need to be the universal source of truth when the policy and architecture corpus, plus agent telemetry, acts as the authoritative reference.
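To illustrate IaC as a compiled execution format, the Python sketch below turns a structured intent plus two policy rules into Terraform's JSON configuration syntax. The `POLICY` rules, the intent fields, and the `rg-` naming prefix are hypothetical; `azurerm_resource_group` is a real AzureRM provider resource type, but provider configuration is deliberately left out.

```python
import json
from typing import Any

# Hypothetical policy corpus reduced to two machine-readable rules.
POLICY = {
    "allowed_regions": ["eastus", "westeurope"],
    "required_tags": ["cost_centre", "owner"],
}


def compile_intent(intent: dict[str, Any]) -> dict[str, Any]:
    """Compile an approved intent into Terraform JSON syntax; raise on a policy violation."""
    if intent["region"] not in POLICY["allowed_regions"]:
        raise ValueError(f"region {intent['region']!r} violates policy")
    tags = intent.get("tags", {})
    missing = [t for t in POLICY["required_tags"] if t not in tags]
    if missing:
        raise ValueError(f"missing required tags: {missing}")
    return {
        "resource": {
            "azurerm_resource_group": {
                intent["name"]: {
                    "name": f"rg-{intent['name']}",
                    "location": intent["region"],
                    "tags": tags,
                }
            }
        }
    }


if __name__ == "__main__":
    intent = {
        "name": "payments",
        "region": "westeurope",
        "tags": {"cost_centre": "CC-1234", "owner": "platform-team"},
    }
    # Saved as a .tf.json file next to a provider configuration, Terraform reads this as one resource block.
    print(json.dumps(compile_intent(intent), indent=2))
```

The policy check happens before any IaC exists, which is the sense in which the policy corpus, not the generated file, is authoritative.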

Examples in Practice

A few concrete patterns illustrate this shift:

- Network segmentation: The agent reads an approved hub-and-spoke diagram to govern which VNets, route tables, NSGs, and DNS zones may be created—then applies changes via ARM APIs and records evidence back to the ADR.

- Identity boundaries: The agent references an identity architecture diagram to determine permitted Microsoft Graph operations, blocking any change that crosses an undefined trust boundary.

- Data classification controls: The agent maps a diagrammed data flow to concrete controls—private endpoints, TLS enforcement, retention policies—and validates with read-back API queries.

- Golden paths as blueprints: A reference architecture diagram for a workload type becomes a machine-consumable blueprint; the agent selects the pattern, fills in parameters, generates repos and config, and opens a PR with the diagram-linked justification.
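For the network segmentation pattern, the approved diagram has to become something machine-checkable. A minimal Python sketch, assuming the hub-and-spoke diagram has already been reduced to an allowlist of ARM resource types per zone (the zone names and the allowlist contents are hypothetical; the resource type strings are real Azure types):

```python
from typing import NamedTuple

# Hypothetical allowlist derived from an approved hub-and-spoke diagram.
APPROVED_PATTERN: dict[str, set[str]] = {
    "hub": {
        "Microsoft.Network/virtualNetworks",
        "Microsoft.Network/azureFirewalls",
        "Microsoft.Network/privateDnsZones",
    },
    "spoke": {
        "Microsoft.Network/virtualNetworks",
        "Microsoft.Network/routeTables",
        "Microsoft.Network/networkSecurityGroups",
    },
}


class PlannedChange(NamedTuple):
    zone: str
    resource_type: str
    name: str


def check_against_pattern(changes: list[PlannedChange]) -> list[str]:
    """Return a rejection reason for every change the approved pattern does not allow."""
    rejected = []
    for c in changes:
        allowed = APPROVED_PATTERN.get(c.zone)
        if allowed is None:
            rejected.append(f"{c.name}: zone {c.zone!r} is not in the approved diagram")
        elif c.resource_type not in allowed:
            rejected.append(f"{c.name}: {c.resource_type} is not permitted in the {c.zone}")
    return rejected


if __name__ == "__main__":
    planned = [
        PlannedChange("spoke", "Microsoft.Network/routeTables", "rt-app"),
        PlannedChange("spoke", "Microsoft.Network/publicIPAddresses", "pip-app"),
    ]
    for reason in check_against_pattern(planned):
        print("BLOCKED:", reason)
```

The same shape extends to the identity example: swap resource types for permitted Microsoft Graph operations per trust boundary.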

Implications for Platform Engineering

This shift has four direct implications for platform teams:

- Interaction shifts from syntax to intent. IaC becomes an implementation detail; design conversations move upstream to what we want, not how to express it.

- Guardrails move into the agent. Policy enforcement for security, cost, and compliance lives in agent instructions, with human-in-the-loop checkpoints at high-risk boundaries.

- Modules become knowledge. They evolve into agent-consumable patterns that encode architectural standards and preferred defaults the agent selects automatically.

- Drift remediation becomes continuous. Agents detect drift, propose fixes, generate plans, request approval, and apply remediations—collapsing the gap between observability and action.
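The guardrail and drift bullets combine naturally: the agent may apply low-risk remediations on its own but must stop for a human at high-risk boundaries. The Python sketch below assumes a hypothetical risk rule (deletions, and any change to network or authorization resources, need approval) and a caller-supplied `approve` callback standing in for a real review step such as a PR approval.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical risk rule: resource type prefixes treated as high-risk boundaries.
HIGH_RISK_PREFIXES = ("Microsoft.Network/", "Microsoft.Authorization/")


@dataclass
class Remediation:
    resource_type: str
    action: str  # "update" or "delete"
    description: str


def requires_approval(r: Remediation) -> bool:
    return r.action == "delete" or r.resource_type.startswith(HIGH_RISK_PREFIXES)


def remediate(items: list[Remediation], approve: Callable[[Remediation], bool]) -> dict[str, list[str]]:
    """Apply low-risk fixes directly; route high-risk ones through the approval callback."""
    result: dict[str, list[str]] = {"applied": [], "approved": [], "rejected": []}
    for r in items:
        if not requires_approval(r):
            result["applied"].append(r.description)  # a real agent would call the API here
        elif approve(r):
            result["approved"].append(r.description)
        else:
            result["rejected"].append(r.description)
    return result


if __name__ == "__main__":
    drift = [
        Remediation("Microsoft.Storage/storageAccounts", "update", "re-enable TLS 1.2 minimum"),
        Remediation("Microsoft.Network/networkSecurityGroups", "update", "remove open RDP rule"),
        Remediation("Microsoft.Compute/virtualMachines", "delete", "delete undeclared VM"),
    ]
    # Stand-in for a human reviewer: approve everything except deletions.
    print(remediate(drift, approve=lambda r: r.action != "delete"))
```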

What Does Not Change

Even so, the fundamentals remain. Provider APIs are still the ultimate source of truth, and human accountability remains essential for approvals and risk decisions.

What does change is your system of record. Rather than IaC files serving as the primary execution ledger, organizations can treat policies, security controls, and architecture artifacts (ADRs, reference architectures, diagrams, runbooks) as the governing ledger. Agents then produce a verifiable trail—plans, API calls, validations, evidence—reviewed and approved like any other change.

Module-First vs. Agents + Policy

| Stage | Before: module-first | After: agents + policy |
|---|---|---|
| Authoring | Write modules and expose variables | Provide prompt + context → generate native IaC |
| Compliance | Baked into modules (rigid) | Policy-as-code checks at generation, plan, and runtime |
| Consumption | Search registries, read docs, wire inputs | Describe the outcome; the agent resolves it to code |
| Maintenance | Version bumps and migration guides | Regenerate from current provider/API schemas |
| Validation | Unit tests and examples | Plan-time checks + cloud policy enforcement |

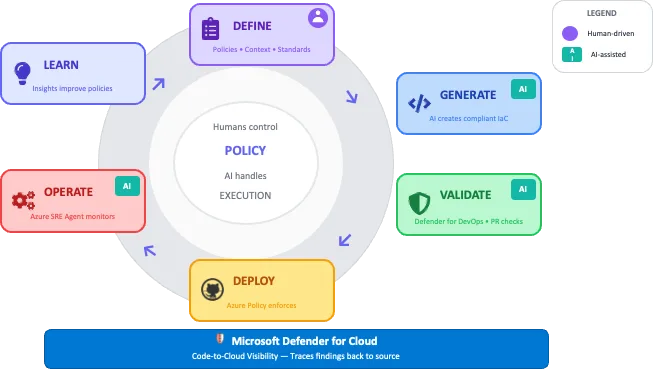

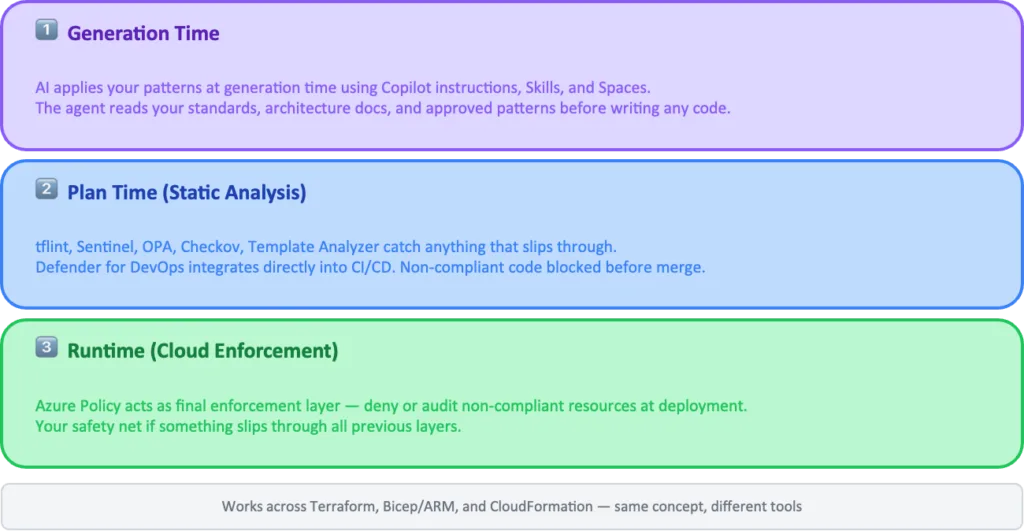

Three Layers of Enforcement

AI applies your patterns at generation time. Linters and policy-as-code tools such as tflint, Sentinel, and OPA catch what slips through at plan time. The same idea applies beyond Terraform: validate Bicep/ARM with template validation and “what-if” previews, and validate CloudFormation with linting and guardrails in CI. Finally, for Azure, Azure Policy acts as the last enforcement layer, denying or auditing non-compliant resources at deployment time.
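Plan-time checks operate on a machine-readable plan. OPA and Sentinel have their own policy languages; purely to show the shape of such a rule, here is a Python equivalent that reads the output of `terraform show -json <planfile>` and flags created or updated resources that are missing required tags. The required tag names are an assumption, and the sketch only inspects the top-level `resource_changes` list.

```python
import json
import sys
from typing import Any

REQUIRED_TAGS = {"cost_centre", "owner"}  # hypothetical organizational standard


def missing_tags(plan: dict[str, Any]) -> list[str]:
    """Return one finding per created or updated resource that lacks a required tag."""
    findings = []
    for rc in plan.get("resource_changes", []):
        actions = set(rc["change"]["actions"])
        if not actions & {"create", "update"}:
            continue  # skip no-op, read, and pure delete changes
        after = rc["change"].get("after") or {}
        if "tags" not in after:
            continue  # type has no tags attribute, or the value is unknown until apply
        tags = after["tags"] or {}
        absent = sorted(REQUIRED_TAGS - set(tags))
        if absent:
            findings.append(f"{rc['address']}: missing tags {absent}")
    return findings


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: terraform show -json tfplan > plan.json && python check_tags.py plan.json
    with open(sys.argv[1]) as f:
        problems = missing_tags(json.load(f))
    for problem in problems:
        print(problem)
    sys.exit(1 if problems else 0)
```

A non-zero exit code is enough for a CI job to block the merge, which is where this layer sits relative to generation-time patterns and Azure Policy.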

Speed with Modern Providers

AI agents can read the live API spec and generate resources using day-zero features—without waiting for a module update. Moreover, mapping between specs and existing resources makes importing previously provisioned infrastructure far easier. In effect, your “golden module” becomes a well-structured prompt referencing the live API spec.
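As a sketch of the import point, the Python below parses Azure resource IDs from an inventory and renders Terraform `import` blocks (available in Terraform 1.5 and later). The ARM-type-to-Terraform-type mapping covers only three AzureRM resource types and would in practice be derived from provider schemas; the local-name convention and the placeholder subscription ID are assumptions.

```python
import re

# Partial mapping from ARM resource type (lowercased) to AzureRM Terraform resource type.
TYPE_MAP = {
    "microsoft.resources/resourcegroups": "azurerm_resource_group",
    "microsoft.storage/storageaccounts": "azurerm_storage_account",
    "microsoft.network/virtualnetworks": "azurerm_virtual_network",
}

_RG_ID = re.compile(r"^/subscriptions/[^/]+/resourceGroups/(?P<name>[^/]+)$", re.IGNORECASE)
_TOP_LEVEL_ID = re.compile(
    r"^/subscriptions/[^/]+/resourceGroups/[^/]+/providers/"
    r"(?P<ns>[^/]+)/(?P<type>[^/]+)/(?P<name>[^/]+)$",
    re.IGNORECASE,
)


def arm_type_and_name(resource_id: str) -> tuple[str, str]:
    """Extract the ARM type and name from a resource group or top-level resource ID."""
    if m := _RG_ID.match(resource_id):
        return "Microsoft.Resources/resourceGroups", m["name"]
    if m := _TOP_LEVEL_ID.match(resource_id):
        return f"{m['ns']}/{m['type']}", m["name"]
    raise ValueError(f"unsupported resource ID: {resource_id}")


def import_block(resource_id: str) -> str:
    """Render a Terraform import block for one existing Azure resource."""
    arm_type, name = arm_type_and_name(resource_id)
    tf_type = TYPE_MAP.get(arm_type.lower())  # ARM types are case-insensitive
    if tf_type is None:
        raise ValueError(f"no Terraform mapping for {arm_type}")
    # Hypothetical local-name convention: snake_case, prefixed if it starts with a digit.
    local_name = re.sub(r"[^a-z0-9_]", "_", name.lower())
    if local_name[0].isdigit():
        local_name = "r_" + local_name
    return f'import {{\n  to = {tf_type}.{local_name}\n  id = "{resource_id}"\n}}\n'


if __name__ == "__main__":
    sub = "/subscriptions/00000000-0000-0000-0000-000000000000"  # placeholder subscription
    inventory = [
        f"{sub}/resourceGroups/rg-payments",
        f"{sub}/resourceGroups/rg-payments/providers/Microsoft.Storage/storageAccounts/stpaylogs01",
    ]
    for rid in inventory:
        print(import_block(rid))
```

Running `terraform plan -generate-config-out=<file>` against blocks like these is one way to have Terraform draft the matching resource configuration.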

Compliance Built into the Workflow

GitHub’s platform becomes the new control plane, with compliance enforced at multiple layers:

- Context layer: Copilot Spaces let you curate what the AI knows—your architecture docs, approved patterns, and security policies. The AI reads your standards; it doesn’t guess them.

- Instruction layer: Repository and organization-level custom instructions encode guardrails in natural language, for example “Always use azapi for Azure resources” or “Tag all resources with cost centre.” Because these instructions are versioned alongside your code, when standards evolve, the instructions evolve with them in the same PR.

- Agent layer: Copilot Custom Agents bundle your instructions, skills, and context into a coherent, domain-specific agent. Skills package reusable capabilities—like checking your CMDB or validating naming conventions—that any agent can invoke.

- Validation layer: GitHub Actions and organization rulesets act as the backstop. Microsoft Defender for DevOps integrates directly into CI/CD, running IaC security scanners (such as Checkov) across Terraform, Bicep/ARM, and CloudFormation. Non-compliant code is blocked before merge.

- Cloud enforcement layer: Even after merge, Azure Policy provides runtime governance—denying non-compliant deployments, auditing resources, and remediating drift at the control plane.

Code-to-Cloud Security with Defender for Cloud

Microsoft Defender for Cloud connects everything—from GitHub repos and IaC scan results to deployed resources and runtime posture. With Defender for DevOps, you get IaC scan results surfaced in a single dashboard, code-to-cloud mapping that shows which repo produced which cloud resource, and PR annotations with security findings directly in GitHub.

As a result, when Defender for Cloud detects a misconfiguration in your deployed resources, it can trace the issue back to the exact line of IaC that caused it. The “which repo deployed this?” detective work disappears.
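Code-to-cloud tracing depends on deployed resources carrying some link back to their source. Defender for Cloud's own mapping mechanism is not reproduced here; the Python sketch below only illustrates the general idea, using hypothetical tag names (`source_repo`, `source_file`, `source_commit`) stamped onto resources at generation time and read back when a finding arrives.

```python
from typing import Any

TRACE_TAGS = ("source_repo", "source_file", "source_commit")  # hypothetical tag names


def stamp(resource: dict[str, Any], repo: str, path: str, commit: str) -> dict[str, Any]:
    """Return a copy of the resource with provenance tags merged into its existing tags."""
    tags = {**resource.get("tags", {}), "source_repo": repo, "source_file": path, "source_commit": commit}
    return {**resource, "tags": tags}


def trace_finding(finding: dict[str, Any], inventory: dict[str, dict[str, Any]]) -> str:
    """Map a finding on a deployed resource back to the IaC file and commit that produced it."""
    resource = inventory.get(finding["resource_id"])
    if resource is None:
        return f"{finding['resource_id']}: not in inventory"
    tags = resource.get("tags", {})
    if not all(t in tags for t in TRACE_TAGS):
        return f"{finding['resource_id']}: no provenance tags (possibly created outside IaC)"
    return f"{tags['source_repo']}/{tags['source_file']}@{tags['source_commit'][:7]}: {finding['issue']}"


if __name__ == "__main__":
    rid = "/subscriptions/0000/resourceGroups/rg-app/providers/Microsoft.Storage/storageAccounts/stapp"
    inventory = {rid: stamp({"tags": {"owner": "platform"}}, "contoso/infra", "storage/main.tf", "3f9c2a1b7d")}
    print(trace_finding({"resource_id": rid, "issue": "public network access enabled"}, inventory))
```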

In short, compliance isn’t a gate you pass through; it’s inherent in the process.

Beyond Provisioning: AI in Operations

The story doesn’t stop at provisioning. Azure SRE Agent extends AI into day-2 operations—incident detection, root cause analysis, and remediation suggestions. Like your infra agent, it consumes your runbooks, architecture context, and escalation policies. It doesn’t replace your SRE team; instead, it gives them leverage.

This completes the loop: AI generates compliant infrastructure, Defender for Cloud monitors from code to cloud, Azure Policy enforces at runtime, AI helps operate it, and humans remain in control of the decisions that matter.

What AI Enables That Modules Never Could

Modules were a solid abstraction—but they were static. AI unlocks capabilities that module registries simply can’t match:

- API fidelity: Generates against current specs, not last year’s abstraction, and validates at plan time.

- Compliance at multiple layers: Across generation, static analysis, plan, deploy, and runtime—with Defender for Cloud providing end-to-end visibility.

- Living documentation: Explanations, diagrams, and dependency graphs on demand, instead of stale READMEs.

- Simulation: Teams can test infrastructure changes against policy before committing a single line.

The New Artifacts

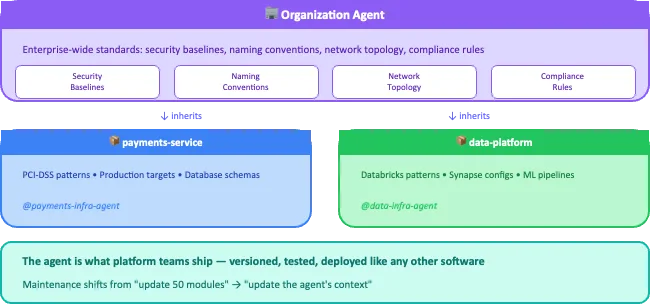

The module registry isn’t dead—but it’s no longer the center of gravity. The new artifact is the agent:

- Repo-level agents understand a specific codebase (patterns, dependencies, deployment targets) and generate infrastructure that fits.

- Org-level agents encode enterprise-wide standards—security baselines, naming conventions, network topology—so every repo-level agent inherits consistent guardrails by default.

Policies, context documents, and examples become inputs the agent consumes. The agent itself is what the platform team produces—versioned, tested, and deployed like any other software. Furthermore, the maintenance burden shifts from “update 50 modules” to “update the agent’s context.”

What’s the Platform Team’s Job Now?

Here’s a practical starting stack:

- Write instructions and context. Create your `.github/copilot-instructions.md` with baseline rules. Add architecture decision records, reference implementations, and approved patterns to a Copilot Space. This is the foundation: the AI reads it before generating anything.

- Build reusable skills. Decompose your agent’s work into discrete skills: check your CMDB for environment metadata, validate naming conventions, look up cost centre codes, check for breaking changes. Skills are callable from any chat or agent.

- Create custom agents. Bundle instructions, skills, and context into a coherent agent. Users invoke it with `@your-infra-agent` in Copilot Chat. It knows your patterns and has access to your systems.

- Let the Coding Agent execute. Once configured, Copilot Coding Agent works autonomously, reading your instructions, invoking skills, generating IaC, opening PRs, and iterating on review feedback.
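Skills are ultimately small, deterministic capabilities the agent can call. As an example of a "validate naming conventions" skill, here is a minimal Python function. The `<abbrev>-<workload>-<env>-<region>-<nnn>` convention and the abbreviation, environment, and region tables are hypothetical (loosely in the style of the Cloud Adoption Framework naming guidance), and how the function is registered as a Copilot skill is not shown.

```python
import re

# Hypothetical convention: <abbrev>-<workload>-<env>-<region>-<nnn>, e.g. rg-payments-prod-weu-001
ABBREVIATIONS = {
    "Microsoft.Resources/resourceGroups": "rg",
    "Microsoft.Network/virtualNetworks": "vnet",
    "Microsoft.KeyVault/vaults": "kv",
}
ENVIRONMENTS = {"dev", "test", "prod"}
REGIONS = {"eus": "eastus", "weu": "westeurope", "sea": "southeastasia"}  # short code -> region

_PATTERN = re.compile(
    r"^(?P<abbrev>[a-z]+)-(?P<workload>[a-z0-9]+)-(?P<env>[a-z]+)-(?P<region>[a-z]+)-(?P<instance>\d{3})$"
)


def validate_name(resource_type: str, name: str) -> list[str]:
    """Return human-readable problems with a proposed name; an empty list means compliant."""
    m = _PATTERN.match(name)
    if m is None:
        return [f"{name!r} does not match <abbrev>-<workload>-<env>-<region>-<nnn>"]
    problems = []
    expected = ABBREVIATIONS.get(resource_type)
    if expected is None:
        problems.append(f"no naming rule for {resource_type}")
    elif m["abbrev"] != expected:
        problems.append(f"prefix should be {expected!r}, got {m['abbrev']!r}")
    if m["env"] not in ENVIRONMENTS:
        problems.append(f"unknown environment {m['env']!r}")
    if m["region"] not in REGIONS:
        problems.append(f"unknown region code {m['region']!r}")
    return problems


if __name__ == "__main__":
    print(validate_name("Microsoft.Resources/resourceGroups", "rg-payments-prod-weu-001"))
    print(validate_name("Microsoft.KeyVault/vaults", "vault-payments-qa-weu-001"))
```

Returning a list of readable problems, rather than a bare boolean, gives the agent something it can act on when it regenerates the name.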

The platform team’s deliverable is no longer “here’s a module, good luck with the 47 variables.” It’s “here’s an agent that knows our entire infrastructure context and generates compliant code on demand.”

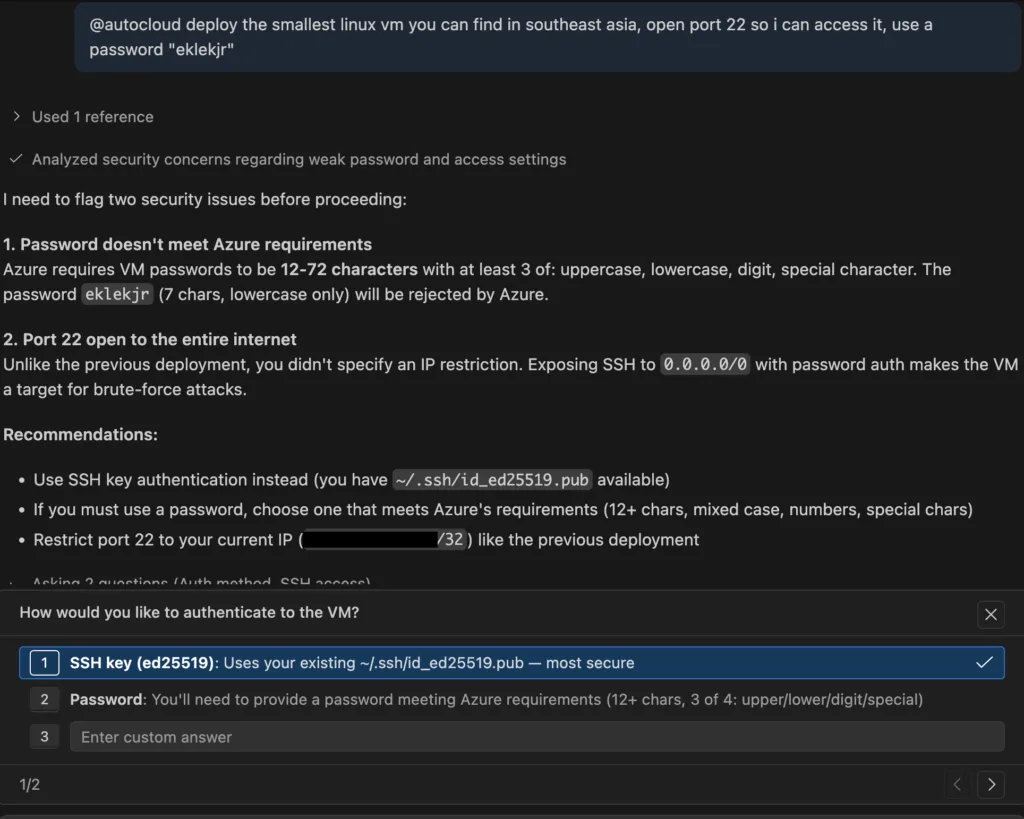

In the end, the user experience would look something like the flow shown above.

If AI can generate compliant infrastructure on demand, the platform team’s job shifts—from writing IaC to shipping guardrails, patterns, and agents.

What’s Next

This is the first in a series where we’ll go deeper with hands-on demos, videos, and code:

- Creating Copilot Skills that expose your internal systems.

- Setting up file-based instructions and Spaces for infrastructure context.

- Using provider/API-first approaches for day-zero feature coverage across Terraform and Bicep/ARM.

- IaC security scanning with Defender for DevOps and Template Analyzer.

- Policy-as-code workflows with OPA and Azure Policy.

- Code-to-cloud security posture with Microsoft Defender for Cloud.

- Azure SRE Agent in action for day-2 operations.

If you want to follow along, subscribe or connect with us on LinkedIn. We’d love to hear your thoughts:

- Are you still maintaining module registries?

- Have you started experimenting with AI-generated infrastructure?

- What’s working, what’s not?

Try it out today: the repo with an early implementation is now live at https://github.com/Azure/git-ape.

Arnaud Lheureux is Chief Developer Advisor at Microsoft for Asia, focused on helping enterprise teams adopt modern developer platforms. Views are his own.

David Wright is a Partner Solution Architect at Microsoft, focused on helping ISVs build modern SaaS and AI agents on Azure. Views are his own.
